Detector Data Acquisition


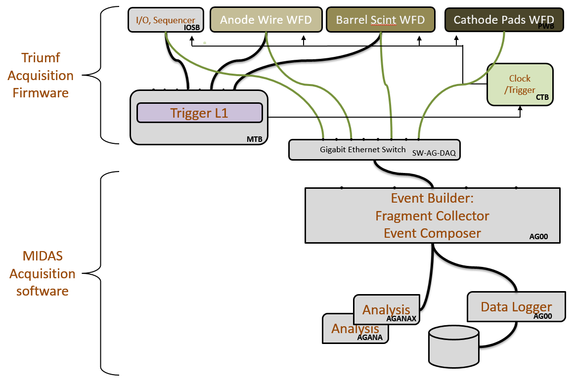


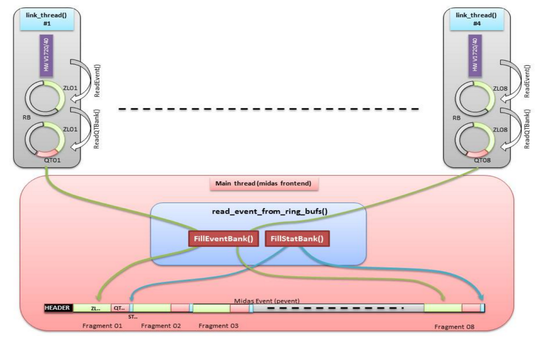


Latest revision as of 21:22, 11 October 2017


Table of Figures

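Figure 1 - Overall data flow for the scheme from the detector to the data storage area.

Figure 2 - Frontend local fragment assembly, similar data for the event builder. Each grey box refers to a Thread collecting and filtering locally its fragment before making it available to the fragment builder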

Introduction

The ALPHA-g experiment is the next step in the line of successful anti-hydrogen experiments at CERN: ATHENA, ALPHA, ALPHA-2. These experiments have been designed, built and operated by mostly the same group of people. This makes it important to keep the new ALPHA-g data acquisition system as similar to, and as compatible with, the existing ALPHA-2 system as possible. The ALPHA-2 and ALPHA-g machines are expected to operate together, at the same time and run by the same people, for several years.

Looking back, the ATHENA experiment introduced the concept of using an advanced Si-strip particle detector to image annihilations of anti-hydrogen. The ALPHA experiment brought in the highly reliable MIDAS data acquisition software and the modular VME-based electronics (built at/for TRIUMF). The ALPHA-2 experiment saw the data acquisition system expanded by 50% without having to make any architectural changes.

ALPHA-g continues to build on this foundation. However, a 2-meter-long Si strip detector is not practical, so a TPC gas detector will be used instead.

Scope and Assumptions

As in ALPHA-2, ALPHA-g will consist of several computer control systems in addition to the data acquisition for the annihilation imaging detector.

There will be Labview and CompactPCI-based control systems for trap vacuum, for magnet controls, for positron source controls, for trap controls, for microwave and laser system controls.

All these systems are outside the scope of this document. The exception is that, as in ALPHA-2, they will log data into the MIDAS history system via network socket connections.

The data acquisition system for the particle detector includes a number of hardware components - digitizers for analog signals, firmware-based signal processing, trigger decision making, etc.

All these components are outside of the scope of this document, except where they require network communications for receiving experiment data, for configuration management and for monitoring.

The scope of this document is therefore limited to the software, and the hardware required to run it, that receives experiment data across the network and communicates with other hardware and software systems over the network.

Definitions and Abbreviations

General acronyms or terms used for the ALPHA-g experiment

| Term | Description |
|---|---|
| rTPC | Radial Time Projection Chamber |
| AD | Antiproton Decelerator (CERN building where ALPHA-g is installed) |
| Trap | Device within the cryostat where the antihydrogen annihilations take place |
| AW | TPC Anode Wires |
| BSC | Barrel Scintillator Detector |
| GRIFFIN | Major experimental facility at TRIUMF |
| ALPHA-16, ALPHA-T, FMC-32 | Electronics designed and built at TRIUMF, similar to the electronics built for GRIFFIN |
| MIDAS | Data acquisition software package from PSI and TRIUMF |
| MIDAS ODB | MIDAS Online Database, a shared-memory tree-structured database |
| SiPM | Silicon photomultiplier, a solid-state light sensor used in the BSC detector |
| TPC AFTER ASIC | Custom chip from Saclay for recording TPC analog signals |
| TPC pad board (PADWING) | TPC electronics designed and built at TRIUMF for ALPHA-g |

Table 1 – ALPHA-g Abbreviations

Edev-gitlab repository (restricted)

Specifications

The Data Acquisition system oversees all detector parameter settings. It manages the collection, analysis, recording and monitoring of the incoming detector data. These tasks are described below.

Handling of experiment data

Experiment data will arrive over multiple 1GigE network links into the main DAQ computer, will be processed and transmitted to permanent storage at the main CERN data center, also across a 1GigE link.

The existing network link from CERN shared by ALPHA-2 and ALPHA-g runs at 1GigE speed and the collaboration does not plan to request that CERN upgrade this link to 10GigE or faster speed.

This limits the overall data throughput to 100 Mbytes/sec. This bandwidth is shared with ALPHA-2, whose own data rate is limited to 20-30 Mbytes/sec.

Sources of experimental data:

- AW and BSC digitizers (FMC-32, ALPHA-16) – 16x1GigE network links.

- TPC data concentrators (FEAM) – 96x1GigE network links.

Data communication protocol and data format will be similar to the GRIFFIN setup.

Handling of Labview and Trap sequencer data

As in ALPHA-2, a special MIDAS frontend will receive data from the Labview-based control systems (vacuum, magnets, trap sequencer, etc.). The data communication protocol and data format will be the same as in ALPHA-2.

Configuration data

All configuration data will be stored in the MIDAS ODB database.

Major configuration items:

- Trigger configuration (multiplicity settings, channel mapping, dead channel blanking, etc.)

- AW and BSC digitizer settings

- TPC pad board settings and AFTER ASIC settings (FEAMs)

- UPS (uninterruptible power supply) with battery backup and "double conversion" power conditioning

Slow Controls data

All slow controls settings will be stored in the MIDAS ODB database. Slow controls data will be logged through the MIDAS history system.

Major slow controls items:

- Low voltage power supply controls

- High voltage power supply controls

- Environment monitoring (temperature probes, etc.)

- SiPM bias voltage controls

- TPC gas system control and monitoring

- TPC calibration system controls

- Cooling system control and monitoring

- UPS monitoring

Some slow controls components will have software interlocks for non-safety critical protection of equipment, such as automatic shutdown of electronics on overheating, automatic shutdown of detectors on UPS alarms (i.e. loss of external AC power).

(It is assumed that safety-critical interlocks will be implemented in hardware. These are outside the scope of this particular document.)

Trigger consideration

The ALPHA-g physics experiment goes through several particle trapping stages:

- positron accumulation
- antiproton accumulation
- a mixing stage, in which anti-hydrogen forms in the trap
- an annihilation stage for the actual measurement

The whole cycle takes several minutes and must be monitored continuously with the detector. This monitoring has two goals. External background events (cosmics, noise) must be properly tagged by the detector, and all annihilation events from the trap must be recorded for further analysis. This is mainly done with the barrel scintillator, which records both external incoming events and internal trapped events (annihilations). Timing analysis will distinguish between these two main sources of events.

The barrel scintillator is therefore the main contributor to the trigger decision. Additional information from the anode wires is also useful, even though its timing is extremely loose because of the intrinsic drift time range of the detector.

The trigger decision is initially based on a simple multiplicity from the barrel scintillator detector, which tags the events to record. This multiplicity is computed in each of the 16 Alpha-16 boards and sent to the Master Trigger Module (Alpha-T). There, the individual fragments are matched in time and the final trigger decision is made. The decision is then redistributed to all the acquisition modules.

If anode wire information is also needed, the same fragment collection scheme is applied to the wires. The result is sent through the same physical link, because the Alpha-16 modules are populated with the 32-channel, 62.5 Msps mezzanine (FMC-32).
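The Python sketch below illustrates the kind of time matching described above: it groups per-board multiplicity fragments into coincidences and applies a threshold. It is not the Alpha-T firmware. The fragment structure, the coincidence window (in clock ticks) and the multiplicity threshold are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class TriggerFragment:
    board: int         # ALPHA-16 board index (0-15)
    timestamp: int     # hypothetical timestamp in clock ticks
    multiplicity: int  # BSC channels over threshold on this board


def trigger_decisions(fragments, window=2, threshold=2):
    """Return the start timestamps of accepted coincidence groups.

    Fragments whose timestamps lie within `window` ticks of the first fragment
    of a group are merged. A group is accepted if its summed multiplicity
    reaches `threshold`. Both parameters are hypothetical, not ALPHA-T settings.
    """
    accepted = []
    group_start, total = None, 0
    for frag in sorted(fragments, key=lambda f: f.timestamp):
        if group_start is not None and frag.timestamp - group_start <= window:
            total += frag.multiplicity      # same coincidence group
            continue
        if group_start is not None and total >= threshold:
            accepted.append(group_start)    # close the previous group
        group_start, total = frag.timestamp, frag.multiplicity
    if group_start is not None and total >= threshold:
        accepted.append(group_start)        # close the last group
    return accepted


frags = [TriggerFragment(0, 100, 1), TriggerFragment(5, 101, 1),
         TriggerFragment(3, 500, 1), TriggerFragment(7, 900, 2)]
print(trigger_decisions(frags))  # [100, 900]
```

In the usage example, the isolated single hit at tick 500 is rejected. The two one-hit boards at ticks 100-101 combine into an accepted trigger.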

The Data Acquisition system (MIDAS) will not be aware of the trigger, so it will have no interaction with the trigger decision hardware. The data stream will be pulled continuously over Ethernet from the acquisition modules. An “event builder” application will then reassemble the fragments, using the timestamp attached to each received packet.
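The Python sketch below gives a rough idea of timestamp-based fragment reassembly: it groups packets from several sources into events. It is not the MIDAS event builder. The packet layout (source name, timestamp, payload), the source names and the matching tolerance are assumptions made for the example. The sketch also assumes that all sources share a common timestamp clock.

```python
def build_events(packets, sources, tolerance=1):
    """Assemble (source, timestamp, payload) packets into events.

    A packet joins the most recent event if its timestamp is within
    `tolerance` ticks of that event's first timestamp and the event has no
    fragment from that source yet. Otherwise it starts a new event.
    Returns (complete, incomplete). A complete event has exactly one
    fragment from every source in `sources`.
    """
    events = []  # list of (first_timestamp, {source: payload})
    for source, ts, payload in sorted(packets, key=lambda p: p[1]):
        if events and ts - events[-1][0] <= tolerance and source not in events[-1][1]:
            events[-1][1][source] = payload
        else:
            events.append((ts, {source: payload}))
    wanted = set(sources)
    complete = [e for e in events if set(e[1]) == wanted]
    incomplete = [e for e in events if set(e[1]) != wanted]
    return complete, incomplete


packets = [("AW", 10, b"a"), ("PAD", 11, b"p"), ("BSC", 10, b"b"),
           ("AW", 50, b"a2"), ("BSC", 51, b"b2")]
complete, incomplete = build_events(packets, ("AW", "BSC", "PAD"))
print(len(complete), len(incomplete))  # 1 1
```

Returning incomplete events separately, rather than dropping them, lets later data quality checks count missing fragments per source.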

Data Rate consideration

The data and event rate capabilities of the overall acquisition system have an important impact on the design of the data flow scheme. Several factors are to be considered.

- The event rate must satisfy the statistics required by the physics goals within the time frame of the experiment.

- The data rate must be minimized to prevent “bottleneck” issues along the data path.

- The overall data volume and data rate must be compatible with the computing infrastructure available for the experiment.

The current data flow layout and the known data sizes give the following picture. The three main data sources (anode wires, cathode pads, barrel scintillator) together produce about 20 MB/event in uncompressed format. With a single 10 Gb/s network link, this limits the overall trigger rate to around 50 Hz, which is not sufficient for the goals of the experiment. An event size of 20 MB also places constraints on the data storage link.

The hardware foreseen for the acquisition also has its own constraints. The anode wire and barrel scintillator readouts use waveform digitizers (WFDs). These produce large amounts of data but have almost “no limit” on the event rate (dependent on the sampling rate). The cathode pad readout board, in contrast, uses a switched capacitor array (SCA), whose intrinsic acquisition dead time limits the readout rate to 500 Hz.

Taking all this into consideration, the effort is focused on data reduction and compression. These increase the achievable trigger rate while reducing the amount of data produced.

Data size and data rates

- Event size computation for rTPC anode wires (AW):

2 sides * 16 preamps/side * 16 channels/preamp = 512 channels; 512 channels * 700 samples * 2 bytes/sample = 0.7 MB/event

- Event size computation for rTPC cathode pads:

64 FEAM boards * 4 AFTER chips/FEAM * 72 channels/AFTER = 18432 channels; 18432 channels * 512 samples * 2 bytes/sample = 19 MB/event

- Event size computation for barrel scintillator ADC (BSC):

64 bars * 2 sides = 128 channels; 128 channels * 700 samples * 2 bytes/sample = 0.2 MB/event

- Event size computation for barrel scintillator TDC (BSC):

There is no fixed TDC event size, because the number of TDC hits depends on the actual channel occupancy during data taking. A typical annihilation event with 3-5 pion tracks should produce hits in 3-5 bars at both ends, i.e. 6-10 TDC hits. At 4 bytes per hit, that is 24-40 bytes per event. A typical cosmic ray event with 2 tracks (1 in, 1 out) is similar.

Grand total: 0.7 + 19 + 0.2 = 20 MB/event, before data reduction and data compression. The TDC contribution is negligible.
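The event-size arithmetic above can be reproduced with a few lines of Python. The sketch assumes decimal megabytes (1 MB = 10^6 bytes) and uses the upper end of the TDC estimate. Under these assumptions it gives about 0.72 MB (AW), 18.9 MB (pads) and 0.18 MB (BSC ADC). That is roughly 19.8 MB/event, which rounds to the 20 MB/event quoted above.

```python
BYTES_PER_SAMPLE = 2
MB = 1_000_000  # decimal megabyte, as used in the text


def waveform_size(channels, samples, bytes_per_sample=BYTES_PER_SAMPLE):
    """Uncompressed size in bytes of `channels` waveforms of `samples` samples."""
    return channels * samples * bytes_per_sample


aw_channels = 2 * 16 * 16    # sides * preamps/side * channels/preamp
pad_channels = 64 * 4 * 72   # FEAMs * AFTER chips/FEAM * channels/AFTER
bsc_channels = 64 * 2        # bars * sides

sizes = {
    "AW": waveform_size(aw_channels, 700),
    "pads": waveform_size(pad_channels, 512),
    "BSC ADC": waveform_size(bsc_channels, 700),
    "BSC TDC (max)": 10 * 4,  # up to 10 hits * 4 bytes/hit
}
total = sum(sizes.values())

for name, size in sizes.items():
    print(f"{name:14s} {size / MB:8.3f} MB")
print(f"{'total':14s} {total / MB:8.3f} MB")
```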

Data rates into the DAQ (uncompressed data, i.e. diagnostics and pedestals mode):

- Assuming a 10GigE network: 1000 MB/s / 20 MB/event = 50 Hz (max uncompressed event rate into the DAQ)

- Storage capacity of the 8 TB data array (200 MB/s typical write rate): 8'000'000 MB / 200 MB/s = 40 ks = 11 hrs.

Assuming that data reduction (removal of empty channels, removal of baseline) and data compression (gzip, lz4 and similar) together yield 10-to-1:

- Compressed data size: 20 MB/event -> 2 MB/event

- Max event rate into the DAQ: 1000 MB/s / 2 MB/event = 500 Hz (compressed data)

- Storage capacity of the 8 TB data array at the same event rate: 11 hrs. -> 110 hrs. (about 4.5 days)

- Max event rate to EOS/Castor (1GigE network): 100 MB/s / 2 MB/event = 50 Hz (compressed data)
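The rate and storage estimates above can be written out as a small Python sketch. It combines the 2-to-1 compression and 5-to-1 reduction assumptions (discussed in the next section) into the 10-to-1 overall factor. The usable link throughputs (1000 MB/s for 10GigE, 100 MB/s for 1GigE) are the round numbers used in the text, not measured values. The compressed storage time assumes the same event rate as in the uncompressed case, so the array receives a tenth of the data per second.

```python
LINK_10GIGE_MB_S = 1000  # usable 10GigE throughput assumed in the text
LINK_1GIGE_MB_S = 100    # usable 1GigE throughput (link to EOS/Castor)
ARRAY_MB = 8_000_000     # 8 TB local data array
WRITE_MB_S = 200         # typical write rate of the array

raw_event_mb = 20.0
compression = 2          # assumed 2-to-1 compression
reduction = 5            # assumed 5-to-1 data reduction
reduced_event_mb = raw_event_mb / (compression * reduction)


def max_rate_hz(link_mb_s, event_mb):
    """Maximum event rate a link can carry for a given event size."""
    return link_mb_s / event_mb


def hours_to_fill(array_mb, data_rate_mb_s):
    """Time in hours to fill the array at a sustained data rate."""
    return array_mb / data_rate_mb_s / 3600


raw_rate = max_rate_hz(LINK_10GIGE_MB_S, raw_event_mb)
fill_raw = hours_to_fill(ARRAY_MB, WRITE_MB_S)
compressed_rate = max_rate_hz(LINK_10GIGE_MB_S, reduced_event_mb)
# Same event rate, 10x smaller events -> 10x lower data rate into the array
fill_compressed = hours_to_fill(ARRAY_MB, WRITE_MB_S / (compression * reduction))
eos_rate = max_rate_hz(LINK_1GIGE_MB_S, reduced_event_mb)

print(f"uncompressed: {raw_rate:.0f} Hz, array full in {fill_raw:.1f} h")
print(f"compressed:   {compressed_rate:.0f} Hz, array full in {fill_compressed:.0f} h")
print(f"to EOS/Castor: {eos_rate:.0f} Hz")
```

Under these assumptions the sketch reproduces the 50 Hz, about 11 h, 500 Hz, about 110 h and 50 Hz figures.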

Discussion of assumptions

The 3 main assumptions made in these calculations are:

- A data compression ratio of 2-to-1 (50%), which is typical for this type of data (using gzip, lz4 and similar)

- A data reduction ratio of 5-to-1 (removal of empty channels, removal of waveform baseline, etc.). For now this ratio is conservative. The electronic noise level in the detector is the main factor determining it.

- A "background" cosmic trigger rate of 10 Hz

The data reduction achieved by removing empty channels, etc. depends mostly on the detector noise levels, which are not known a priori. The noise levels will be measured on site at TRIUMF, once the full-size TPC is available for data acquisition, and at CERN. Measurements using the short prototype TPC are not representative of the final detector. The coupling between the anode wire preamps at the two ends of the wire will be different, and the longer full-size TPC is expected to have less noise.

The ALPHA-g experiment requires that all cosmic ray events be recorded, because they cannot be discriminated from 2-track annihilation events online in the trigger hardware. In the existing ALPHA-2 experiment, cosmic ray events are separated from annihilation events offline using sophisticated multivariate analysis (MVA) techniques.

This requires the DAQ to run with a very open trigger: essentially only "empty" events and "all noise" events will be rejected online in the trigger hardware. The firing rate of this type of trigger in ALPHA-2 is 10 Hz (multiplicity trigger from the 3-layer Si strip detector).

In ALPHA-g, the trigger will fire on a combination of TPC anode wires and Barrel Scintillator bars. These are expected to provide a cleaner signal than the Si detector in ALPHA-2.

The target rate for the "background" (or cosmic) trigger is around 10 Hz.

Discussion of readout time and DAQ dead time

The ALPHA-16 digitizers for the TPC anode wires and for the Barrel scintillator, and the TDC for the Barrel scintillator are expected to run in a dead-time free mode:

- Event size: (16+32) channels * 700 samples/ch * 2 bytes/sample = 70 KB/event (uncompressed, per ALPHA-16 module with its FMC-32 mezzanine)

- Max event rate over a 1GigE network link: 100'000 KB/s / 70 KB/event = 1.5 kHz (uncompressed)

- Compressed event rate: assuming 5-to-1 data reduction, event rate = 7.5 kHz (compressed data).
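The per-module ALPHA-16 numbers can be checked with the Python sketch below. It assumes that 16+32 is the channel count of one ALPHA-16 module with its FMC-32 mezzanine, and it uses 1 KB = 1000 bytes. The exact results (about 67 KB, 1.49 kHz and 7.4 kHz) round to the figures quoted above.

```python
ADC_CHANNELS = 16       # ALPHA-16 on-board ADC channels
FMC_CHANNELS = 32       # FMC-32 mezzanine channels
SAMPLES = 700           # samples per channel
BYTES_PER_SAMPLE = 2
LINK_KB_S = 100_000     # usable 1GigE throughput assumed in the text
REDUCTION = 5           # assumed 5-to-1 data reduction

event_kb = (ADC_CHANNELS + FMC_CHANNELS) * SAMPLES * BYTES_PER_SAMPLE / 1000
uncompressed_hz = LINK_KB_S / event_kb
reduced_hz = LINK_KB_S / (event_kb / REDUCTION)

print(f"event size {event_kb:.1f} KB")
print(f"max rate {uncompressed_hz:.0f} Hz uncompressed, {reduced_hz:.0f} Hz reduced")
```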

The 16 ADC modules (1GigE each) feed into a single 10GigE network link. Dead time due to full data buffers may therefore occur if too many events arrive in quick bursts.

The FEAM digitizers for the TPC pads have significant dead time for reading out the AFTER SCA ASICs. All 4 SCAs are read in parallel, but the readout of each SCA takes a very long time:

512 time bins * 80 channels / 20 Msamples/s = 2 ms.

This limits the maximum theoretical readout rate to 500 Hz.

At the assumed 10 Hz cosmic trigger rate, the dead time is: 10 Hz * 2 ms / 1000 ms = 2% (dead time at 10 Hz).
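The SCA readout arithmetic can be checked in Python. Besides the linear estimate used above, the sketch includes the standard non-paralyzable dead-time model, in which a fraction n*tau/(1 + n*tau) of triggers is lost. Whether the FEAM readout actually behaves as non-paralyzable is an assumption. At 10 Hz both models give about 2%.

```python
TIME_BINS = 512       # SCA time bins read out per channel
CHANNELS_READ = 80    # channels read out per SCA, as quoted in the text
ADC_RATE = 20e6       # 20 Msamples/s readout digitizer

readout_s = TIME_BINS * CHANNELS_READ / ADC_RATE  # 2.048 ms per event
max_readout_hz = 1 / readout_s                    # about 488 Hz ("500 Hz")


def dead_time_fraction(trigger_hz, tau=readout_s):
    """Linear estimate used in the text: busy fraction = rate * readout time."""
    return trigger_hz * tau


def lost_fraction_nonparalyzable(trigger_hz, tau=readout_s):
    """Fraction of triggers lost for a non-paralyzable dead time tau."""
    x = trigger_hz * tau
    return x / (1 + x)


print(f"readout time {readout_s * 1e3:.3f} ms, max rate {max_readout_hz:.0f} Hz")
print(f"dead time at 10 Hz: {dead_time_fraction(10):.2%} (linear), "
      f"{lost_fraction_nonparalyzable(10):.2%} (non-paralyzable)")
```

The two models only diverge noticeably when the trigger rate approaches the maximum readout rate.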

- Event size: 72 ch * 511 samples * 2 bytes/sample * 4 SCA = 300 KB/event (uncompressed)

- Max event rate: 1gige network link: 100'000 KB/sec / 300 KB = 300 Hz (uncompressed)

- Compressed event rate: assuming 5-to-1 data reduction, event size is 60 KB, rate is 1500 Hz (compressed data)

The data transmission rate for compressed data (1500 Hz) is higher than the 500 Hz limit of the SCA readout. Data can therefore be transmitted out of the FEAM board faster than it is acquired. As a result, there is no built-in dead time from a data transmission rate mismatch, and the dead time stays at the 2% estimated above for a 10 Hz trigger rate.

However, the 64 FEAM 1GigE links feed into a single 10GigE link. Here again, dead time will occur if data buffers become full when multiple events arrive in a quick burst. For 64 FEAMs feeding into a single 10GigE link, the max compressed event rate is: 1000 MB/s / 64 FEAMs / 60 KB = 260 Hz (compressed data).

Note: This single 10gige link is also shared with the ALPHA-16 digitizers.
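The FEAM link budget can be sketched in Python as follows, using 1 KB = 1000 bytes and the round link throughputs from the text. Dividing directly gives about 1.7 kHz for a single FEAM link. The 1500 Hz quoted above is the rounded 300 Hz uncompressed figure multiplied by 5, and either value is well above the 500 Hz SCA limit. The shared-uplink result (about 260 Hz) ignores the ALPHA-16 traffic mentioned in the note above, which would lower it further.

```python
SCA_PER_FEAM = 4
event_kb = 72 * 511 * 2 * SCA_PER_FEAM / 1000  # 294.3 KB, quoted as ~300 KB
reduced_kb = 60                                # ~300 KB after 5-to-1 reduction
N_FEAMS = 64
LINK_1GIGE_KB_S = 100_000                      # usable 1GigE throughput (text)
LINK_10GIGE_KB_S = 1_000_000                   # usable 10GigE throughput (text)

# One FEAM alone on its own 1GigE link
per_feam_hz = LINK_1GIGE_KB_S / reduced_kb
# All FEAMs share one 10GigE uplink, and every trigger sends 64 fragments
shared_uplink_hz = LINK_10GIGE_KB_S / (N_FEAMS * reduced_kb)

print(f"FEAM event size: {event_kb:.1f} KB uncompressed")
print(f"per-FEAM link limit: {per_feam_hz:.0f} Hz (compressed)")
print(f"shared 10GigE limit: {shared_uplink_hz:.0f} Hz (compressed)")
```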

Conclusion

TPC-type detectors read out by waveform digitizers produce large amounts of data.

State-of-the-art electronics make it possible to build a detector system whose main characteristics (maximum trigger rate, readout dead time, etc.) are comparable to those of the much smaller and simpler detector system used for the current ALPHA-2 experiment. These electronics include:

- FPGAs with built-in 1GigE network links
- heavy-duty 10GigE networking
- latest-generation back-end computers
- high-capacity, high-speed data storage (8-10 TB disks)

The data volume will be much higher, but still manageable, as data storage, data processing and analysis capabilities at CERN have also grown significantly.

The data flow path using a single 10GigE link limits the event rate to around 250 Hz, based on a compression/reduction of 10:1. The slowest hardware (FEAM) can operate at up to 500 Hz.

Midas Software configuration

For maximum familiarity, similarity and compatibility with the existing ALPHA-2 system, ALPHA-g will also use the MIDAS data acquisition software package.

The main features of MIDAS are a modern web-based user interface, good robustness, high reliability and high scalability. The system is easy for non-experts to operate.

Outside of ALPHA-2 at CERN, other major users of MIDAS are TIGRESS and GRIFFIN at TRIUMF, MEG at PSI, DEAP at SNOLAB, and T2K/ND280 at J-PARC (Japan).

As in ALPHA-2, the MIDAS software will run on a single high-end computer with an Intel socket 1151 CPU and 32/64 GB of RAM. This is adequate for the 100 Mbytes/sec data rates expected for ALPHA-g. During tests of 10GigE networking for the GRIFFIN experiment at TRIUMF, this type of hardware was capable of recording 1000 Mbytes/sec of data from network to disk. (The disk I/O capability required for that rate will not be present in ALPHA-g.)

This computer will have two 1GigE network interfaces: one for connecting to the CERN network, and one for connecting to the ALPHA-g private network.

Data storage will be dual mirrored (RAID1) SSDs for the operating system and home directories and dual mirrored (RAID1) 6TB HDDs for local data storage.

The MIDAS software running on this computer will have the following components:

- mhttpd - the MIDAS web server, providing the main user interface for running the system

- mlogger - the MIDAS data logger, for writing experiment data to local storage and for recording MIDAS slow controls history data

- lazylogger - for moving experiment data from local storage to permanent storage at the CERN data center (EOS and Castor)

- MIDAS frontend programs, custom-written to receive experiment data

- The event builder - for assembling experiment data from different frontends into events, to simplify further analysis. The event builder will include code for initial data quality tests (correct data formatting, correct event timestamps, etc.).

- The online analyzer - for initial data analysis, visualization and additional data quality checks

The MIDAS frontend programs are written using a common template, with each frontend program specialized to receive data in the format of the attached equipment:

- Frontend for AW and BSC digitizers - receive data from ALPHA-16 digitizers using TCP and UDP network socket connections (will use a modification of the frontend from GRIFFIN), provide configuration and monitoring (slow controls) of the digitizers.

- Frontend for the TPC pad boards - receive data from the TPC using TCP and UDP network socket connections. This frontend will also provide configuration and monitoring (slow controls) of the TPC pad boards and of the TPC AFTER ASICs.

- Frontend for the clock board - configuration and control of the clock board using UDP and TCP network connections.

- Frontends for slow controls, one per type of equipment (see the list in the "Specifications" section). Most of these frontend programs will be reused from previous experiments (e.g. UPS monitoring frontends from DEAP and ALPHA-2).

Data from all the hardware components arrives asynchronously through the MIDAS frontend programs. This makes further analysis and data quality tests rather difficult. To reorganize the data into coherent events, MIDAS provides an event builder component.

The event builder program is usually written specifically for each experiment, also using a MIDAS template. The ALPHA-g event builder will:

- check incoming data for basic correctness
- assemble event fragments from different frontends into coherent events
- check timestamps and event numbers
- possibly apply additional data reduction and data compression algorithms

We expect to reuse the multi-threaded event builder developed for DEAP as the basis of the ALPHA-g event builder.
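The Python sketch below shows one minimal way to perform the timestamp and event-number checks mentioned above. It is not code from the DEAP event builder or from MIDAS. The (event_number, timestamp) pairs are a hypothetical stand-in for real event headers, and a real builder may also need to handle timestamp counter wrap-around.

```python
def check_sequence(events):
    """Basic consistency checks on a list of (event_number, timestamp) pairs.

    Returns a list of human-readable problems: gaps or repeats in the event
    numbering, and timestamps that do not increase from one event to the next.
    """
    problems = []
    for (prev_num, prev_ts), (num, ts) in zip(events, events[1:]):
        if num != prev_num + 1:
            problems.append(f"event {num}: expected event number {prev_num + 1}")
        if ts <= prev_ts:
            problems.append(f"event {num}: timestamp {ts} not after {prev_ts}")
    return problems


print(check_sequence([(1, 100), (2, 200), (4, 150)]))
```

Returning a list of messages instead of raising on the first error lets the builder count and report all problems in a run.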

The online analyzer will look at a sample of events produced by the event builder. It will produce histograms and other plots of the most important quantities (pulse heights, timing, etc.). These confirm the correct function of the individual detector components (e.g. each TPC pad produces hits) and of the detector as a whole (e.g. hit multiplicity in the TPC pads matches hit multiplicity in the TPC AW and in the BSC). We do not expect to do full tracking and vertex reconstruction in the online analyzer. This will be done in the "near offline" analysis using the CERN data center resources, similar to the ALPHA-2 procedures.
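As a sketch of the "each TPC pad produces hits" check, the Python function below counts hits per pad over a sample of events and lists pads that never fired. The input format (one list of hit pad indices per event) is a hypothetical, already-decoded representation, not the actual ALPHA-g data format.

```python
from collections import Counter


def find_silent_pads(sampled_events, n_pads):
    """Return the indices of pads with no hits in the sampled events.

    `sampled_events` is an iterable of lists of pad indices that were hit in
    each event. Pads are numbered 0 .. n_pads - 1.
    """
    occupancy = Counter()
    for hit_pads in sampled_events:
        occupancy.update(hit_pads)
    return [pad for pad in range(n_pads) if occupancy[pad] == 0]


print(find_silent_pads([[0, 1], [1, 3]], n_pads=5))  # [2, 4]
```

With a large enough sample, a pad that stays silent while its neighbours fire is a candidate dead channel.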

The mlogger component writes MIDAS data to local disk (or remote disk using NFS or UNIX pipe methods) and provides standard data compression (gzip-1 by default, bzip2 for maximum compression and LZ4 for maximum speed). As an option CRC32c and SHA-256/SHA-512 checksums can be computed to detect data corruption.
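The kind of compression and checksums that mlogger offers can be tried on sample data with Python's standard library. This is not mlogger code, and several details differ:

- The waveform generator is a hypothetical stand-in (flat baseline plus small noise).
- `zlib` at level 1 uses the same DEFLATE compression as gzip -1, but without the gzip file header.
- LZ4 is left out because it needs a third-party package.
- `zlib.crc32` computes the standard CRC-32, not the CRC32c variant mentioned above.

Compression ratios on real detector data will differ.

```python
import bz2
import hashlib
import random
import struct
import zlib


def make_waveform_block(n_channels=64, n_samples=700, baseline=2000, noise=3, seed=1):
    """Synthetic 16-bit waveforms: a flat baseline plus small random noise.
    Purely illustrative; real detector data will compress differently."""
    rng = random.Random(seed)
    samples = [baseline + rng.randint(-noise, noise)
               for _ in range(n_channels * n_samples)]
    return struct.pack(f"<{len(samples)}H", *samples)


data = make_waveform_block()
ratios = {
    "DEFLATE level 1 (gzip -1)": len(data) / len(zlib.compress(data, 1)),
    "bzip2 level 9": len(data) / len(bz2.compress(data, 9)),
}
crc = zlib.crc32(data)                 # CRC-32 (IEEE), not CRC32c
sha = hashlib.sha256(data).hexdigest()

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f} to 1")
print(f"CRC-32: {crc:08x}  SHA-256: {sha[:16]}...")
```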

Figures 1 and 2 and the diagram below illustrate the data flow described in this section:

Figure 1 - Overall data flow for the scheme from the detector to the data storage area.

Figure 2 - Frontend local fragment assembly, similar data for the event builder. Each grey box refers to a Thread collecting and filtering locally its fragment before making it available to the fragment builder

Computer Network configuration

Operation of the ALPHA-g experiment will involve two computer networks: the ALPHA-g private network and the CERN network.

The private network is required to isolate experiment data traffic from the CERN network data traffic (this was not required for ALPHA-2, where there is no private network) and to restrict access to network-attached slow controls devices (power supplies, gas system controllers, etc.).

This private network (192.168.x.y) will be built around two 1GigE network switches with 96 and 24 ports respectively. The 96-port switch will connect all 64 FEAM units and all 16 ALPHA-16 units. Other networked equipment, such as slow controls and monitoring devices, will be connected to the second (24-port) switch.
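The port budget of the first switch can be checked with simple arithmetic: 64 FEAM plus 16 ALPHA-16 units occupy 80 of the 96 ports. The sketch below also reserves one port for an uplink towards the data acquisition computer; that uplink, and the helper name `spare_ports`, are assumptions for illustration rather than part of the stated design.

```python
from typing import Dict


def spare_ports(switch_ports: int, devices: Dict[str, int], uplinks: int = 1) -> int:
    """Return the number of unused ports, or raise if the devices do not fit.

    `uplinks` is a hypothetical allowance for ports used to connect the switch
    to the data acquisition computer or to other switches.
    """
    used = sum(devices.values()) + uplinks
    if used > switch_ports:
        raise ValueError(f"need {used} ports, switch has only {switch_ports}")
    return switch_ports - used


# First switch: all FEAM and ALPHA-16 units.
print(spare_ports(96, {"FEAM": 64, "ALPHA-16": 16}))  # 15 spare with one uplink
```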

The 1GigE network port of the main data acquisition computer will connect to the CERN network, both for transmitting experiment data to the CERN data center and for receiving experiment data from the Labview-based control systems (which are outside the scope of the particle detector and of this document).

The CERN network currently consists of a single 1GigE fiber link feeding a small (24-port) 1GigE network switch in the experiment counting room. It is not anticipated that CERN will upgrade this link to higher speeds (10GigE, etc.) within the lifetime of ALPHA-g, nor is ALPHA-g expected ever to require these higher speeds, because an excessive amount of data recorded to CERN storage would create significant problems for data analysis. If the detector produces too much data, this will be handled by stronger data reduction and data compression algorithms.
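To put the single 1GigE link in perspective, the following arithmetic converts link speed into a sustainable raw data rate and a daily data volume. At full saturation, 1 Gbit/s is 125 MB/s, or about 10.8 TB per day. The `usable_fraction` and `compression_ratio` parameters are hypothetical knobs (not measured ALPHA-g figures) showing how stronger data reduction and compression raise the raw rate the link can carry.

```python
SECONDS_PER_DAY = 86_400


def max_raw_rate_mb_s(link_gbit_s: float = 1.0, usable_fraction: float = 1.0,
                      compression_ratio: float = 1.0) -> float:
    """Highest raw (pre-compression) data rate in MB/s that fits on the link.

    usable_fraction: hypothetical share of the link available to DAQ data.
    compression_ratio: raw size divided by compressed size (>= 1).
    """
    link_mb_s = link_gbit_s * 1e9 / 8 / 1e6  # 1 Gbit/s -> 125 MB/s (decimal units)
    return link_mb_s * usable_fraction * compression_ratio


def daily_volume_tb(raw_rate_mb_s: float, compression_ratio: float = 1.0) -> float:
    """Data written per day, in TB, for a sustained raw rate after compression."""
    return raw_rate_mb_s / compression_ratio * SECONDS_PER_DAY / 1e6


print(max_raw_rate_mb_s())     # 125.0
print(daily_volume_tb(125.0))  # about 10.8 TB/day at full link saturation
```

Doubling the compression ratio doubles the raw rate the link can carry while leaving the daily volume on CERN storage unchanged, which is why data reduction rather than a faster link is the preferred remedy.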

The ALPHA-g data acquisition computer will provide a one-way network gateway from the private network to the CERN network using Linux-based NAT. This is required to give devices attached to the private network access to DNS, NTP and other network services, e.g. for installing software and firmware updates.
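The one-way behaviour of the gateway can be expressed as a small decision function. This is a model of the intended policy, not a firewall configuration: on Linux it would typically be realised with NAT (masquerading) plus connection tracking, so that hosts on the private 192.168.x.y network can open connections outwards while hosts on the CERN side can only send replies. The function name `gateway_allows` and the example outside address (from the 198.51.100.0/24 documentation range) are illustrative assumptions.

```python
import ipaddress

# The ALPHA-g private network (192.168.x.y).
PRIVATE_NET = ipaddress.ip_network("192.168.0.0/16")


def gateway_allows(src: str, dst: str, new_connection: bool) -> bool:
    """Decide whether the gateway forwards a packet between the two networks.

    Outgoing traffic from the private network is always forwarded (and would be
    NATed to the gateway's CERN-side address). Incoming traffic is forwarded only
    if it belongs to a connection that a private host opened first.
    """
    src_private = ipaddress.ip_address(src) in PRIVATE_NET
    dst_private = ipaddress.ip_address(dst) in PRIVATE_NET
    if src_private == dst_private:
        return False  # traffic that does not cross between the networks is not routed here
    if src_private:
        return True  # outgoing: e.g. DNS, NTP, software and firmware updates
    return not new_connection  # incoming: replies only, no new connections
```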

This configuration is very similar to the one in use by the DEAP experiment at SNOLAB. The main difference is that ALPHA-g will run the main data acquisition and the NAT/gateway services on the same computer, whereas DEAP uses two separate computers.

Computer Security considerations

In the ALPHA-2 and ALPHA-g experiments, p-bar beam time is very precious and any disturbances during beam data taking must be minimized.

On the computer network side, the ALPHA-g data acquisition system needs to be protected from:

- Unauthorized access to proprietary information

- Unauthorized access to network-controlled devices (power supplies, etc.)

- Sundry network disturbances (unwanted network port scans, unwanted web scans, accidental or malicious denial-of-service attacks, etc.).

It is important to note that the CERN network is isolated from the main Internet by the CERN firewall. Generally, the CERN firewall allows all outgoing traffic from CERN to the Internet (which can be selectively blocked by special request to CERN IT), but blocks all incoming traffic except where specifically permitted. A typical machine connected to the CERN network can talk to anywhere on the Internet, but from the Internet only "ping" is permitted. "ssh" access is disabled (one is supposed to use the ssh gateways at lxplus.cern.ch) and "http" access is allowed only by special permission from CERN IT.

Thus we do not need to worry about protecting the ALPHA-g data acquisition system against "global Internet" threats (the CERN firewall does this job). Protection is required only against access from other machines attached to the CERN network, which can generally be considered non-malicious. Still, over the years of operating ALPHA and ALPHA-2, we have seen unwanted network scans (which required special hardening of MIDAS) and other strange activity.

The security model of ALPHA-g combines the security features of ALPHA-2 and the security features of DEAP (at SNOLAB).

Access to the MIDAS Web server will be exclusively through the apache httpd proxy with password-protected HTTPS connections (same as in ALPHA, ALPHA-2 and DEAP).

Access to machines in the private network will be generally blocked (by Linux firewall rules and NAT). Exceptions like access to UPS controls, webcams, etc. will be via the http proxy, with password-protected HTTPS connections, same as in DEAP.

Access to MIDAS ports in the data acquisition computer will be blocked by Linux firewall rules. Exceptions will be made for specific connections such as Labview controls sending data to MIDAS.
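The default-deny rule with explicit exceptions described above can be modelled as follows. The MIDAS port number and the Labview source address are hypothetical placeholders (443 is only the standard HTTPS port); the actual values would come from the experiment's configuration, and the real enforcement is done by Linux firewall rules rather than by code like this.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class FirewallPolicy:
    # Ports open to any source, e.g. HTTPS served by the apache httpd proxy.
    public_ports: FrozenSet[int]
    # (source address, port) pairs explicitly allowed, e.g. a Labview host
    # sending data to a MIDAS port (hypothetical values below).
    exceptions: FrozenSet[Tuple[str, int]]

    def allows(self, src: str, port: int) -> bool:
        """Default deny: accept a new incoming connection only if the port is
        public or the (source, port) pair is an explicit exception."""
        return port in self.public_ports or (src, port) in self.exceptions


policy = FirewallPolicy(
    public_ports=frozenset({443}),
    exceptions=frozenset({("192.0.2.20", 7000)}),  # hypothetical Labview host and MIDAS port
)
```

Keeping the exception list as explicit (source, port) pairs means that opening a MIDAS port for one Labview host does not expose it to the rest of the CERN network.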

In ALPHA, ALPHA-2 and DEAP the apache httpd proxy server runs on a separate machine. In ALPHA-g we may run it on the same machine as the main data acquisition, subject to approval by CERN (they have to open HTTPS access in the CERN firewall - from the Internet to the HTTPS port on the ALPHA-g DAQ computer).

Over the many years of operating ALPHA, ALPHA-2, DEAP and other similarly configured experiments, this security model has worked well for us, with no complaints from experiment users and no security incidents.

Safety and Hazard considerations

There are no safety or hazard concerns regarding any software or equipment within the scope of this document.

All hardware consists of commodity computer components (computers, network switches, etc.) that carry appropriate government safety certifications (e.g. CSA in Canada).

All software and software-controlled equipment will have hardware-enforced limits and interlocks to ensure safe operation even in case of software failure. (This is outside the scope of this document; for more information, please refer to the documentation of each relevant hardware component.)