| ID | Date | Author | Topic | Subject |

3220

|

27 Apr 2026 |

Pavel Murat | Bug Report | increasing the max number of hot links in ODB | > > Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

>

> actually, only local clients must be rebuilt, remote clients connecting to the mserver do not care about ODB

> internal structure.

thanks! I see - local clients do know about the memory mapping, remote ones - don't

> unfortunately, the "open records" structure is allocated at compile-time inside the ODB header,

> making any change to this would break binary compatibility.

right, I guess, what I had in mind would require the very first ODB record to be a format descriptor,

and that would be a breaking change... Anyway, the practical part of the problem is addressed,

so I just add here a link which contains an answer to the original posting (I found it only after the fact):

https://daq00.triumf.ca/MidasWiki/index.php/FAQ#Increasing_Number_of_Hot-links

-- thanks again, regards, Pavel |

|

3219

|

27 Apr 2026 |

Pavel Murat | Bug Report | increasing the max number of hot links in ODB | > > I wonder why one needs more than 256 hotlinks at all.

>

> I confirm that ALPHA is running with MAX_OPEN_RECORDS changed from 256 to 2048,

> this is the only experiment I know of that had to increase any MIDAS ODB defaults.

>

> The reason for this is mlogger, it opens an open record for each variable in each equipment.

>

> This should be changed to 1 db_watch per equipment. We talked about it, but I guess we never did it.

>

> I think this task just went almost to the top of my MIDAS to-do list.

I definitely had many more than 256 variables successfully monitored with MAX_OPEN_RECORDS=256.

Is it possible that mlogger creates a hotlink per monitoring event, not per variable ?

- I think, that would make more sense in almost any scenario...

-- thanks, regards, Pavel |

|

3218

|

27 Apr 2026 |

Pavel Murat | Bug Report | increasing the max number of hot links in ODB | > I wonder why one needs more than 256 hotlinks at all. Please note that with the odbxx "watch" API, you can hotlink a whole subdirectory, and get notified if ANY of the

> underlying values or subdirectories change. In principle, one could have one hotlink to "/" and see all changes in the ODB (although that does not make sense and might slow

> down ODB access a bit).

Thanks ! - I didn't know that. I did run into the limit on the number of hotlinks via mlogger, which complained about not being able to create a hotlink

to yet another event. Doubling the default value of MAX_OPEN_RECORDS solved the problem.

I don't know the exact arithmetic defining the number of hotlinks in the system, but my case today consists of

- 36 (linux servers) +18 (RPI) monitoring frontends managing one or several different equipment items each.

- Each equipment item sends to ODB at least one monitoring event

- in addition, each frontend created an individual hotlink for handling interactive commands

- for MAX_OPEN_RECORDS=256, 4 equipment items per frontend easily make it into the dangerous zone.

"Equipment items" also include the online processes running on the distributed computing farm processing the data ..

(we are not using MIDAS event building capabilities)
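
The estimate above can be written out as a quick back-of-the-envelope check. This is only a sketch: it assumes exactly one hotlink per equipment monitoring event plus one command hotlink per frontend, and the helper name `estimate_hotlinks` is made up for illustration. The real count also depends on what mlogger and other clients open.

```cpp
#include <cassert>

// Default compile-time limit quoted in this thread (midas.h).
constexpr int kDefaultMaxOpenRecords = 256;

// Hypothetical helper, not a MIDAS function: estimates the number of
// hotlinks assuming one hotlink per equipment monitoring event and one
// command hotlink per frontend.
constexpr int estimate_hotlinks(int n_frontends, int equipment_per_frontend) {
    return n_frontends * (equipment_per_frontend + 1);
}

// 36 Linux servers + 18 RPIs = 54 monitoring frontends.
constexpr int kFrontends = 36 + 18;

// 3 equipment items per frontend: 54 * 4 = 216, still below 256.
static_assert(estimate_hotlinks(kFrontends, 3) == 216, "3 items per frontend");

// 4 equipment items per frontend: 54 * 5 = 270, above the default limit.
static_assert(estimate_hotlinks(kFrontends, 4) == 270, "4 items per frontend");
static_assert(estimate_hotlinks(kFrontends, 4) > kDefaultMaxOpenRecords,
              "4 items per frontend exceed the default MAX_OPEN_RECORDS");
```

Under these assumptions the default of 256 is exceeded as soon as the average reaches 4 equipment items per frontend.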

>

> Try the odbxx_test.cpp example in MIDAS. In line 210 it puts a single hotlink to /Experiment. If you change anything under /Experiment, the program gets notified. By checking the

> path of the changed ODB entry, it can figure out which of the subkeys have been changed:

>

> // watch ODB key for any change with lambda function

> midas::odb ow("/Experiment");

> ow.watch([](midas::odb &o) {

> std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

> });

>

>

> Maybe that would solve your problem without having to change the maximum number of hotlinks.

I'll see how much mileage one can make here, but so far it looks like it is the number of various monitoring events

handled by the mlogger which drives the number of hotlinks

-- thanks, regards, Pavel |

|

3217

|

27 Apr 2026 |

Nick Hastings | Bug Report | increasing the max number of hot links in ODB | For the record, the ND280 (T2K near detector) MIDAS GSC was initially set up

with MAX_OPEN_RECORDS = 2560 and MAX_CLIENTS = 128.

In 2023 one fairly simple part of the detector was replaced with several

other more complex systems (many more midas frontends, equipments, and

variables being logged) so we updated MAX_OPEN_RECORDS = 4096 and

MAX_CLIENTS = 256.

Nick. |

|

3216

|

27 Apr 2026 |

Konstantin Olchanski | Bug Report | increasing the max number of hot links in ODB | > I wonder why one needs more than 256 hotlinks at all.

I confirm that ALPHA is running with MAX_OPEN_RECORDS changed from 256 to 2048,

this is the only experiment I know of that had to increase any MIDAS ODB defaults.

The reason for this is mlogger, it opens an open record for each variable in each equipment.

This should be changed to 1 db_watch per equipment. We talked about it, but I guess we never did it.

I think this task just went almost to the top of my MIDAS to-do list.

K.O. |

|

3215

|

27 Apr 2026 |

Konstantin Olchanski | Bug Report | increasing the max number of hot links in ODB | > Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

actually, only local clients must be rebuilt, remote clients connecting to the mserver do not care about ODB

internal structure.

> Thinking forward - would it help if the ODB clients, upon initial connection but before doing anything else

> were reading the ODB parameters from the ODB itself, so the clients were "learning" about the ODB structure

> dynamically, at run time? Or that knowledge has to be static ?

unfortunately, the "open records" structure is allocated at compile-time inside the ODB header,

making any change to this would break binary compatibility.

I think it is possible to allocate "space for additional open records" in the ODB data area

and have the ODB open records code use it in addition to the compile-time allocated

space in the database header. This would also work for extending MAX_CLIENTS.

Of course in this approach, old midas clients would see only the clients and open records

in the database header, new midas clients would see the additional data.

It is not super hard to add this code...
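
A toy model of the idea may make the compatibility behaviour easier to see. Everything below is hypothetical: the type and function names are invented, `kHeaderSlots` stands in for MAX_OPEN_RECORDS, and a `std::vector` stands in for space that would really have to be allocated in the shared-memory ODB data area.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Stand-in for an open record entry (not the real midas.h definition).
struct OpenRecord {
    int handle;
    unsigned short access_mode;
    unsigned short flags;
};

// Stands in for MAX_OPEN_RECORDS; kept tiny so the overflow is easy to see.
constexpr std::size_t kHeaderSlots = 4;

struct ClientRecords {
    OpenRecord header_slots[kHeaderSlots] = {}; // fixed size, part of the binary layout
    std::size_t num_header = 0;                 // used entries in header_slots
    std::vector<OpenRecord> extension;          // stand-in for the ODB data area
};

// Fill the compile-time slots first, then spill into the extension area.
void add_open_record(ClientRecords& c, const OpenRecord& r) {
    if (c.num_header < kHeaderSlots) {
        c.header_slots[c.num_header++] = r;
    } else {
        c.extension.push_back(r);
    }
}

// An old client only knows about the header slots.
std::size_t visible_to_old_client(const ClientRecords& c) {
    return c.num_header;
}

// A new client also looks at the extension area.
std::size_t visible_to_new_client(const ClientRecords& c) {
    return c.num_header + c.extension.size();
}
```

With six records added, `visible_to_old_client` reports 4 and `visible_to_new_client` reports 6, which is the split described above: the header layout stays unchanged, and old clients simply do not see the additional entries.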

K.O. |

|

3214

|

26 Apr 2026 |

Stefan Ritt | Bug Report | increasing the max number of hot links in ODB | I wonder why one needs more than 256 hotlinks at all. Please note that with the odbxx "watch" API, you can hotlink a whole subdirectory, and get notified if ANY of the

underlying values or subdirectories change. In principle, one could have one hotlink to "/" and see all changes in the ODB (although that does not make sense and might slow

down ODB access a bit).

Try the odbxx_test.cpp example in MIDAS. In line 210 it puts a single hotlink to /Experiment. If you change anything under /Experiment, the program gets notified. By checking the

path of the changed ODB entry, it can figure out which of the subkeys have been changed:

// watch ODB key for any change with lambda function

midas::odb ow("/Experiment");

ow.watch([](midas::odb &o) {

    std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

});

Maybe that would solve your problem without having to change the maximum number of hotlinks.
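
Checking the path of the changed entry could look roughly like the following. This is a sketch in plain C++ that does not need MIDAS to compile; `changed_subkey` is a hypothetical helper, not part of odbxx.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical helper, not part of odbxx: given the watched directory and
// the full path of the changed key, return the name of the first-level
// subkey below the watched directory, or "" if the path is not below it.
std::string changed_subkey(const std::string& watched, const std::string& full_path) {
    std::string prefix = watched;
    if (prefix.empty() || prefix.back() != '/') {
        prefix += '/';
    }
    // The changed path must start with "<watched>/".
    if (full_path.compare(0, prefix.size(), prefix) != 0) {
        return "";
    }
    // Cut at the next '/' (if any) to get only the first-level subkey.
    std::size_t end = full_path.find('/', prefix.size());
    if (end == std::string::npos) {
        return full_path.substr(prefix.size());
    }
    return full_path.substr(prefix.size(), end - prefix.size());
}
```

Inside the watch callback one would then call something like `changed_subkey("/Experiment", o.get_full_path())` and branch on the result, so a single hotlink serves all keys under /Experiment.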

Stefan |

|

3213

|

25 Apr 2026 |

Pavel Murat | Bug Report | increasing the max number of hot links in ODB | I see - thank you for the explanation!

Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

Thinking forward - would it help if the ODB clients, upon initial connection but before doing anything else

were reading the ODB parameters from the ODB itself, so the clients were "learning" about the ODB structure

dynamically, at run time? Or that knowledge has to be static ?

-- thanks, regards, Pavel |

|

3212

|

24 Apr 2026 |

Konstantin Olchanski | Bug Report | increasing the max number of hot links in ODB | > when I attempted to increase the max number of hotlinks in ODB , defined as

> #define MAX_OPEN_RECORDS 256 /**< number of open DB records */

> assert(sizeof(DATABASE_CLIENT) == 2112);

Yes, it is intended to work like this. If you change MAX_OPEN_RECORDS (and some settings),

you break binary compatibility with standard MIDAS and the asserts inform you about it.

It is not a light step to take - you have to recompile all MIDAS clients, and if you miss

one and run it against your non-standard MIDAS, kaboom everything will go,

there is no safety net against this.

In the ALPHA experiment at CERN, for years we have been running with MAX_OPEN_RECORDS set to 2560,

and it works, you have to change both MAX_OPEN_RECORDS in midas.h and the expected values

in the assert() statements.

The new correct values you do not need to guess or compute yourself, the code to print

them is right there and it is easy to enable.

Replacing the numeric constants with computed values of course would completely defeat

the purpose of the tests - to catch the situation where by mistake or by ignorance

(or by miscompilation) sizes of critical data structures become different from those

normally expected.
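
To illustrate why the sizes move with MAX_OPEN_RECORDS and how printing them yields the new expected values, here is a self-contained sketch. The structs are stand-ins, NOT the real midas.h definitions: they only reproduce the sizes quoted in this thread (an 8-byte OPEN_RECORD and 64 bytes of fixed fields), and they assume a platform with 4-byte int and 2-byte short. `print_sizes` is an invented name.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>

// Stand-in for OPEN_RECORD (8 bytes on typical Linux platforms).
struct OpenRecord {
    int handle;
    unsigned short access_mode;
    unsigned short flags;
};

// Stand-in for DATABASE_CLIENT: 64 bytes of fixed fields plus the array
// whose length is set at compile time.
template <std::size_t MaxOpenRecords>
struct DatabaseClient {
    char fixed_fields[64];
    OpenRecord open_record[MaxOpenRecords];
};

// Stand-in for DATABASE_HEADER: 64 bytes of fixed fields plus one
// DatabaseClient per possible client.
template <std::size_t MaxClients, std::size_t MaxOpenRecords>
struct DatabaseHeader {
    char fixed_fields[64];
    DatabaseClient<MaxOpenRecords> client[MaxClients];
};

// The stand-ins reproduce the default values checked in odb.cxx.
static_assert(sizeof(OpenRecord) == 8, "OPEN_RECORD");
static_assert(sizeof(DatabaseClient<256>) == 2112, "DATABASE_CLIENT");
static_assert(sizeof(DatabaseHeader<64, 256>) == 135232, "DATABASE_HEADER");

// Print the sizes for a given configuration; these are the numbers one
// would copy into the assert() statements after changing the limits.
template <std::size_t MaxClients, std::size_t MaxOpenRecords>
void print_sizes() {
    std::printf("MAX_CLIENTS=%zu MAX_OPEN_RECORDS=%zu: "
                "DATABASE_CLIENT=%zu DATABASE_HEADER=%zu\n",
                MaxClients, MaxOpenRecords,
                sizeof(DatabaseClient<MaxOpenRecords>),
                sizeof(DatabaseHeader<MaxClients, MaxOpenRecords>));
}
```

Calling `print_sizes<64, 4096>()` would report the values for a build with MAX_OPEN_RECORDS raised to 4096. The hard-coded constants in the asserts stay hard-coded on purpose, as explained above.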

K.O. |

|

Draft

|

23 Apr 2026 |

Nick Hastings | Bug Report | increasing the max number of hot links in ODB | > Dear MIDAS experts,

>

> when I attempted to increase the max number of hotlinks in ODB , defined as

>

> #define MAX_OPEN_RECORDS 256 /**< number of open DB records */

>

> I started running into an assertion in midas/src/odb.cxx

>

> https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1525 :

>

> assert(sizeof(DATABASE_CLIENT) == 2112);

>

> is it possible that the size of the DATABASE_CLIENT structure should be checked against 64+sizeof(OPEN_RECORD)*MAX_OPEN_RECORDS ?

> - 64 clearly can be expressed in a better maintainable form

Yes, this assert needs to be updated if you increase MAX_OPEN_RECORDS. See

https://daq00.triumf.ca/MidasWiki/index.php/FAQ#Increasing_Number_of_Hot-links

> UPDATE: similar consideration holds for the size of the DATABASE_HEADER structure, which is also checked against a constant

>

> https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1526

Yes DATABASE_HEADER can also be updated, but the associated assert()s need to be updated too.

FYI I have done both of these things and have attached patches.
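
As a cross-check, the size arithmetic given in the commit messages of the attached patches can be written as compile-time formulas. The function names are illustrative and do not exist in MIDAS; the constants (64 fixed bytes, 8-byte OPEN_RECORD, 256-byte BUFFER_CLIENT) are the ones stated in the patches.

```cpp
#include <cassert>
#include <cstddef>

// DATABASE_CLIENT = 64 + 8 * MAX_OPEN_RECORDS
constexpr std::size_t database_client_size(std::size_t max_open_records) {
    return 64 + 8 * max_open_records;
}

// DATABASE_HEADER = 32 + 8*4 + sizeof(DATABASE_CLIENT) * MAX_CLIENTS
constexpr std::size_t database_header_size(std::size_t max_clients,
                                           std::size_t max_open_records) {
    return 32 + 8 * 4 + max_clients * database_client_size(max_open_records);
}

// BUFFER_HEADER = 32 + 7*4 + 256 * MAX_CLIENTS
constexpr std::size_t buffer_header_size(std::size_t max_clients) {
    return 32 + 7 * 4 + 256 * max_clients;
}

// Defaults: MAX_CLIENTS = 64, MAX_OPEN_RECORDS = 256.
static_assert(database_client_size(256) == 2112, "default DATABASE_CLIENT");
static_assert(database_header_size(64, 256) == 135232, "default DATABASE_HEADER");
static_assert(buffer_header_size(64) == 16444, "default BUFFER_HEADER");

// Patch 1: MAX_OPEN_RECORDS = 4096.
static_assert(database_client_size(4096) == 32832, "patched DATABASE_CLIENT");
static_assert(database_header_size(64, 4096) == 2101312, "patched DATABASE_HEADER");

// Patch 2: MAX_CLIENTS = 256 on top of patch 1.
static_assert(buffer_header_size(256) == 65596, "patched BUFFER_HEADER");
static_assert(database_header_size(256, 4096) == 8405056, "patched DATABASE_HEADER");
```

These formulas are only a sanity check for choosing the new constants. They are not meant to replace the numeric values in the assert() statements.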

Cheers,

Nick. |

| Attachment 1: 0001-Increase-MAX_OPEN_RECORDS-to-increase-the-max-number.patch

|

From 6ac1ad23e1e0c8fcfdbb25844ace9de3ab7a993b Mon Sep 17 00:00:00 2001

From: Nick Hastings <hastings@post.kek.jp>

Date: Tue, 19 Sep 2023 08:57:11 +0900

Subject: [PATCH 1/2] Increase MAX_OPEN_RECORDS to increase the max number of

hot_links

In 2011 the number of hotlinks for the gsc was increased from the

default 256 to 2560. See https://elog.nd280.org/elog/GSC/425. Since we

are getting more equipment, increase to 4096. General

instructions can be found on the midas wiki at.

https://daq00.triumf.ca/MidasWiki/index.php/FAQ#Increasing_Number_of_Hot-links

When increasing this number the size of two structs will also

increase. Midas checks the size of these structs are correct, so

updated the check sizes.

DATABASE_CLIENT = 64 + 8*MAX_OPEN_ERCORDS

default: = 64 + 8*256

= 2112 -> Confirmed in odb.cxx

updated: = 64 + 8*4096

= 32832

DATABASE_HEADER = 64 + 64*DATABASE_CLIENT

default: = 64 + 64*2112

= 135232 -> Confirmed in odb.cxx

updated: = 64 + 64*32832

= 2101312

---

include/midas.h | 2 +-

src/odb.cxx | 4 ++--

2 files changed, 3 insertions(+), 3 deletions(-)

diff --git a/include/midas.h b/include/midas.h

index ec6b54a1..46d653f4 100644

--- a/include/midas.h

+++ b/include/midas.h

@@ -273,7 +273,7 @@ class MJsonNode; // forward declaration from mjson.h

#define HOST_NAME_LENGTH 256 /**< length of TCP/IP names */

#define MAX_CLIENTS 64 /**< client processes per buf/db */

#define MAX_EVENT_REQUESTS 10 /**< event requests per client */

-#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

+#define MAX_OPEN_RECORDS 4096 /**< number of open DB records */

#define MAX_ODB_PATH 256 /**< length of path in ODB */

#define BANKLIST_MAX 4096 /**< max # of banks in event */

#define STRING_BANKLIST_MAX BANKLIST_MAX * 4 /**< for bk_list() */

diff --git a/src/odb.cxx b/src/odb.cxx

index e4e2bd35..bc084612 100644

--- a/src/odb.cxx

+++ b/src/odb.cxx

@@ -1477,8 +1477,8 @@ static void db_validate_sizes()

assert(sizeof(KEY) == 68);

assert(sizeof(KEYLIST) == 12);

assert(sizeof(OPEN_RECORD) == 8);

- assert(sizeof(DATABASE_CLIENT) == 2112);

- assert(sizeof(DATABASE_HEADER) == 135232);

+ assert(sizeof(DATABASE_CLIENT) == 32832);

+ assert(sizeof(DATABASE_HEADER) == 2101312);

assert(sizeof(EVENT_HEADER) == 16);

//assert(sizeof(EQUIPMENT_INFO) == 696); has been moved to dynamic checking inside mhttpd.c

assert(sizeof(EQUIPMENT_STATS) == 24);

--

2.47.3

|

| Attachment 2: 0002-Increase-MAX_CLIENTS-from-64-to-256.patch

|

From d0b0c5f316944a3133a26cc5340ec293f719b500 Mon Sep 17 00:00:00 2001

From: Nick Hastings <hastings@post.kek.jp>

Date: Tue, 19 Sep 2023 09:43:04 +0900

Subject: [PATCH 2/2] Increase MAX_CLIENTS from 64 to 256

In 2012 MAX_CLIENTS was increased from 64 to 128 for the t2kgsc. This

allowed double the number of frontends or clients. See elog entry at

https://elog.nd280.org/elog/GSC/808

For the new gsc this is further increased to 256. This necessitates

updating the size checks of the BUFFER_HEADER and DATABASE_HEADER structs.

BUFFER_HEADER = 32 + 7*4 + 256*MAX_CLIENTS

current: = 32 + 7*4 + 256*64

= 16444 -> Confirmed in odb.cxx

updated: = 32 + 7*4 + 256*256

= 65596

DATABASE_HEADER = 32 + 8*4 + 32832*MAX_CLIENTS

current: = 32 + 8*4 + 32832*64

= 2101312 -> Confirmed in odb.cxx

updated: = 32 + 8*4 + 32832*256

= 8405056

N.B. When the corresponding change was made in 2012 the value of

MAX_RPC_CONNECTION was increased from 64 to 96. No equivalent change

is made now since it was removed from midas in 2021 commit 9c93bc7f

"RPC_SERVER_ACCEPTION cleanup".

---

include/midas.h | 2 +-

src/odb.cxx | 4 ++--

2 files changed, 3 insertions(+), 3 deletions(-)

diff --git a/include/midas.h b/include/midas.h

index 46d653f4..43ca476e 100644

--- a/include/midas.h

+++ b/include/midas.h

@@ -271,7 +271,7 @@ class MJsonNode; // forward declaration from mjson.h

#define NAME_LENGTH 32 /**< length of names, mult.of 8! */

#define HOST_NAME_LENGTH 256 /**< length of TCP/IP names */

-#define MAX_CLIENTS 64 /**< client processes per buf/db */

+#define MAX_CLIENTS 256 /**< client processes per buf/db */

#define MAX_EVENT_REQUESTS 10 /**< event requests per client */

#define MAX_OPEN_RECORDS 4096 /**< number of open DB records */

#define MAX_ODB_PATH 256 /**< length of path in ODB */

diff --git a/src/odb.cxx b/src/odb.cxx

index bc084612..b94cef29 100644

--- a/src/odb.cxx

+++ b/src/odb.cxx

@@ -1469,7 +1469,7 @@ static void db_validate_sizes()

#ifdef OS_LINUX

assert(sizeof(EVENT_REQUEST) == 16); // ODB v3

assert(sizeof(BUFFER_CLIENT) == 256);

- assert(sizeof(BUFFER_HEADER) == 16444);

+ assert(sizeof(BUFFER_HEADER) == 65596);

assert(sizeof(HIST_RECORD) == 20);

assert(sizeof(DEF_RECORD) == 40);

assert(sizeof(INDEX_RECORD) == 12);

@@ -1478,7 +1478,7 @@ static void db_validate_sizes()

assert(sizeof(KEYLIST) == 12);

assert(sizeof(OPEN_RECORD) == 8);

assert(sizeof(DATABASE_CLIENT) == 32832);

- assert(sizeof(DATABASE_HEADER) == 2101312);

+ assert(sizeof(DATABASE_HEADER) == 8405056);

assert(sizeof(EVENT_HEADER) == 16);

//assert(sizeof(EQUIPMENT_INFO) == 696); has been moved to dynamic checking inside mhttpd.c

assert(sizeof(EQUIPMENT_STATS) == 24);

--

2.47.3

|

|

3210

|

23 Apr 2026 |

Pavel Murat | Bug Report | increasing the max number of hot links in ODB | Dear MIDAS experts,

when I attempted to increase the max number of hotlinks in ODB , defined as

#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

I started running into an assertion in midas/src/odb.cxx

https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1525 :

assert(sizeof(DATABASE_CLIENT) == 2112);

is it possible that the size of the DATABASE_CLIENT structure should be checked against 64+sizeof(OPEN_RECORD)*MAX_OPEN_RECORDS ?

- 64 clearly can be expressed in a better maintainable form

UPDATE: similar consideration holds for the size of the DATABASE_HEADER structure, which is also checked against a constant

https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1526

-- many thanks, regards, Pavel

|

|

3209

|

16 Apr 2026 |

Konstantin Olchanski | Suggestion | mhttpd user permissions | We had our periodic discussion on MIDAS web page user permissions. (I cannot

find the link to the previous discussions, ouch!)

Currently any logged in user can do anything - start stop runs, start/stop

programs, edit odb, etc.

Regularly, we have experiments that ask about "read-only" access to MIDAS and

about more granular user permissions.

In the past, I suggested a permissions scheme that is easy to implement

with the current code base. Permission level for each user can

be stored in ODB and allow:

level 0 - root user, as now

level 1 - experiment user, any restrictions are implemented in javascript, i.e.

all custom pages work as they do now, but (e.g.) the odb editor is read-only

level 2 - experiment operator, restrictions are implemented in the mjsonrpc

code, i.e. can start/stop runs, start/stop programs, but cannot make any

changes, i.e. cannot write to ODB

level 3 - read-only user - only mjsonrpc calls that do not change anything are

permitted.

(to implement level 2, obviously, the "start run" mjsonrpc call has to be

changed to accept the run comments, current code writes them to odb directly and

that would fail).
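
The server-side part of this scheme could be sketched as a single check in front of each mjsonrpc call. This is an illustration only: the enum names, the three-way classification of calls and `rpc_allowed` are all hypothetical, and nothing like this exists in mhttpd yet.

```cpp
#include <cassert>

// The four levels proposed above.
enum class PermissionLevel {
    Root = 0,           // as now, no restrictions
    ExperimentUser = 1, // restrictions only in javascript
    Operator = 2,       // run/program control, no ODB writes
    ReadOnly = 3        // only calls that change nothing
};

// Hypothetical coarse classification of an mjsonrpc call.
enum class CallKind {
    ReadOnly,   // e.g. reading ODB values
    RunControl, // start/stop runs, start/stop programs
    OdbWrite    // anything that writes to ODB
};

// Decide on the server side whether a call may proceed.
bool rpc_allowed(PermissionLevel level, CallKind kind) {
    switch (level) {
    case PermissionLevel::Root:
    case PermissionLevel::ExperimentUser:
        // Level 1 restrictions live in the web pages, not in the server.
        return true;
    case PermissionLevel::Operator:
        return kind != CallKind::OdbWrite;
    case PermissionLevel::ReadOnly:
        return kind == CallKind::ReadOnly;
    }
    return false; // unknown level: deny
}
```

The sketch also shows why "start run" would need to accept the run comments as arguments: for an Operator, a separate ODB write made by the web page would be rejected.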

First step towards implementing this was made today. Ben and Derek figured out

the apache incantation to pass the logged user name to MIDAS and I added

decoding of this user name in mhttpd. I do not do anything with it, yet.

In apache config, one change is needed:

> For Apache, add this line in your VirtualHost section (tested as working):

> RequestHeader set X-Remote-User %{REMOTE_USER}s

https://daq00.triumf.ca/DaqWiki/index.php/Ubuntu#Install_apache_httpd_proxy_for_midas_and_elog
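
On the receiving side, decoding the user name amounts to looking up the X-Remote-User header in the proxied request. The following is a generic sketch, not the code that was added to mhttpd; `header_value` is a hypothetical helper that works on a raw block of header lines.

```cpp
#include <cassert>
#include <cctype>
#include <cstddef>
#include <sstream>
#include <string>

// Hypothetical helper: return the value of header `name` from a raw block
// of HTTP header lines, or "" if the header is absent. Header names are
// compared case-insensitively.
std::string header_value(const std::string& raw_headers, const std::string& name) {
    std::istringstream in(raw_headers);
    std::string line;
    while (std::getline(in, line)) {
        // Strip the '\r' of a "\r\n" line ending.
        if (!line.empty() && line.back() == '\r') {
            line.pop_back();
        }
        std::size_t colon = line.find(':');
        if (colon == std::string::npos || colon != name.size()) {
            continue;
        }
        bool match = true;
        for (std::size_t i = 0; i < colon; i++) {
            int a = std::tolower(static_cast<unsigned char>(line[i]));
            int b = std::tolower(static_cast<unsigned char>(name[i]));
            if (a != b) {
                match = false;
                break;
            }
        }
        if (!match) {
            continue;
        }
        // Skip whitespace after the colon.
        std::size_t start = line.find_first_not_of(" \t", colon + 1);
        return start == std::string::npos ? "" : line.substr(start);
    }
    return "";
}
```

Since the header is set by the proxy, it can only be trusted if clients cannot reach mhttpd directly and bypass apache.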

K.O. |

|

3208

|

16 Apr 2026 |

Konstantin Olchanski | Forum | Migrate Legacy code to current Midas version | > I am migrating the full CRIPT muon tomography detector from MIDAS (SVN Rev.

> 5238, circa 2012) to a more modern release.

> The current system runs on Scientific Linux 6 and very old hardware.

Right, good vintage midas and linux. But in the current security environment,

we must run currently supported OS (and MIDAS), and we must never fall off

the yearly/bi-yearly OS upgrade treadmill.

How old is your computer hardware and do you plan to update it, as well? If your OS

is installed on an HDD, moving to an SSD would definitely be good. If you are short

on money, a RaspberryPi5 with 16GB RAM may be good enough for what you have.

Anyhow new OS choice would be Ubuntu 24 or Debian 13. I do not recommend Red Hat based OS (vanilla

RHEL, Fedora, Alma, Rocky), they have become niche OSes with minimal vendor and community support.

> Due to substantial changes in the MIDAS codebase over the years ...

The big change in MIDAS land is move to c++, then c++11, then to c++17, and move from vanilla make to

cmake.

MIDAS API has been reasonably stable since then, but very old MIDAS frontends would fail to build with

latest compilers because of changes in c++ language and changes in the c and c++ libraries.

> I have encountered multiple compatibility issues during the migration. I have also attempted to

> build and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern Linux system

> (Ubuntu 24.04), but without success.

this is non-viable, latest C/C++ compilers reject perfectly good SL6-era C/C++ code, old MIDAS would

not compile, old frontends would not compile.

> Could you please advise on the recommended approach ...

What you are doing, we have done several times with TRIUMF experiments,

updating SL6 and CentOS-7 MIDAS instances to current MIDAS, C++ and OS:

1) new computer with Ubuntu 24 (or Debian or Raspbian). (U-26 will come out roughly in August, for the

purposes of this discussion).

2) new MIDAS. we generally recommend the head of the develop branch, but older tagged versions are

okay, too.

3) apache https proxy, etc, for secure browser connections, see

https://daq00.triumf.ca/DaqWiki/index.php/Ubuntu#Install_apache_httpd_proxy_for_midas_and_elog

4) reload your old ODB into the new MIDAS

5) your old history, etc should work

6a) build your old frontends, this will be a chore, but if you look at the compile errors, you will

see that most changes are very mechanical (i.e. const char*, etc). biggest hassle is to make your old

C/C++ code build with the current C++17 compiler, smaller hassle is to update for minor changes in the

mfe.h API.

6b) bite the bullet and rewrite your frontends using the C++ tmfe API, start with

tmfe_example_everything.cxx, remove unnecessary, add required, pretty straightforward, I can guide you

through this (contact me directly by email).

7) minor tweaks to mlogger, mhttpd and history settings

8) rewrite all custom pages to the current mjsonrpc API

Best of luck, if you have more questions, please ask here or by direct email.

K.O. |

|

3207

|

09 Apr 2026 |

Nick Hastings | Forum | Migrate Legacy code to current Midas version | > I am an applied physicist at Canadian Nuclear Laboratories and am in the process

> of migrating the full experiment configuration, including the front-end interface,

> of the CRIPT muon tomography detector from a legacy version of MIDAS (SVN Rev.

> 5238, circa 2012) to a more modern release.

> The current system runs on Scientific Linux 6 and very old hardware. Due to

> substantial changes in the MIDAS codebase over the years, I have encountered

> multiple compatibility issues during the migration. I have also attempted to build

> and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern

> Linux system (Ubuntu 24.04), but without success.

I suggest updating the midas version. You should be able to get to at least midas 2024-12-a

without breaking anything.

> Could you please advise on the recommended approach for upgrading or refactoring

> legacy MIDAS front-end code to the current framework? Any guidance or best

> practices would be greatly appreciated.

Start here: https://daq00.triumf.ca/MidasWiki/index.php/Changelog

The 2019-06 release was the c -> c++ transition. Read that section carefully.

The 2020-12 update also had a change that you will also likely want to update your FEs for.

You will also likely encounter quite a few non-midas issues related to the newer compilers.

Updates to your front ends may include things like needing to use "const char*" instead of "char*",

adding extra headers like <cstring> and <cstdlib>, using ctime_r() instead of ctime(), etc
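
A minimal sketch of what such mechanical updates tend to look like is given here. The two functions are made-up examples, not code from any particular frontend, and ctime_r() is a POSIX call, so this assumes a POSIX system such as Linux.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>  // std::strlen, often missing in old code
#include <ctime>    // std::time_t
#include <string>
#include <time.h>   // ctime_r() (POSIX)

// Old code often declared the parameter as "char* name". String literals
// are const in C++, so passing "Trigger" requires "const char*".
std::size_t name_length(const char* name) {
    return std::strlen(name);
}

// ctime() returns a pointer to a shared static buffer. ctime_r() writes
// into a caller-supplied buffer of at least 26 bytes instead.
std::string format_time(std::time_t t) {
    char buf[26];
    if (ctime_r(&t, buf) == nullptr) {
        return "";
    }
    std::string s(buf);
    // Drop the trailing newline that ctime_r() appends.
    if (!s.empty() && s.back() == '\n') {
        s.pop_back();
    }
    return s;
}
```

Most of the compile errors in old frontends are variations of these patterns and can be fixed one by one.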

Post back if you have specific questions.

P.S. I'm maintaining a number of midas FEs mostly written around 2007-2009.

These were running on SL5 and SL6 for many years and are now on Alma 9 with

midas 2024-12-a. |

|

3206

|

09 Apr 2026 |

David Perez Loureiro | Forum | Migrate Legacy code to current Midas version | I am an applied physicist at Canadian Nuclear Laboratories and am in the process

of migrating the full experiment configuration, including the front-end interface,

of the CRIPT muon tomography detector from a legacy version of MIDAS (SVN Rev.

5238, circa 2012) to a more modern release.

The current system runs on Scientific Linux 6 and very old hardware. Due to

substantial changes in the MIDAS codebase over the years, I have encountered

multiple compatibility issues during the migration. I have also attempted to build

and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern

Linux system (Ubuntu 24.04), but without success.

Could you please advise on the recommended approach for upgrading or refactoring

legacy MIDAS front-end code to the current framework? Any guidance or best

practices would be greatly appreciated.

Many thanks in advance.

David Perez Loureiro, PhD (he/him)

Applied Physicist, Applied Physics Branch

Canadian Nuclear Laboratories |

|

3205

|

12 Feb 2026 |

Stefan Ritt | Bug Report | omnibus bugs from running DarkLight | Now I had a similar case that the browser froze when showing 24h of data. It turned out that 80k points are a bit much. I changed the code so that it starts binning when showing 8h or more. This is not a perfect solution. The code should check at which interval data is written, then

automatically start binning when approaching 4000 points or more. That would however require more complicated code, so I leave it as it is right now. Feedback welcome.
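
The point-count-based variant described above could work roughly as follows. This is a sketch of the arithmetic only, written in C++ for illustration: the function names are invented, averaging within a bin is an arbitrary choice, and it is not the code used by the history display.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: smallest bin size that keeps the number of plotted
// points at or below max_points. Returns 1 (no binning) if the data
// already fits.
std::size_t bin_size_for(std::size_t n_points, std::size_t max_points) {
    assert(max_points > 0);
    if (n_points <= max_points) {
        return 1;
    }
    return (n_points + max_points - 1) / max_points; // ceiling division
}

// Replace each group of bin_size consecutive values by their mean.
// The last bin may contain fewer values.
std::vector<double> rebin_mean(const std::vector<double>& values, std::size_t bin_size) {
    assert(bin_size > 0);
    std::vector<double> out;
    for (std::size_t i = 0; i < values.size(); i += bin_size) {
        std::size_t end = i + bin_size;
        if (end > values.size()) {
            end = values.size();
        }
        double sum = 0.0;
        for (std::size_t j = i; j < end; j++) {
            sum += values[j];
        }
        out.push_back(sum / static_cast<double>(end - i));
    }
    return out;
}
```

For 24 h of data written once per second (86400 points) and a limit of 4000 points, `bin_size_for` gives 22, which leaves 3928 points to draw. Data written at a slower rate would stay unbinned for longer time ranges, without any fixed 8 h threshold.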

Stefan |

|

3204

|

06 Feb 2026 |

Stefan Ritt | Bug Report | omnibus bugs from running DarkLight | > 5) ODB editor "create link" link target name is limited to 32 bytes, links cannot be created (dl-server-2), ok

> on daq17 with current MIDAS.

Works for me with the current version.

> 6) MIDAS on dl-server-2 is "installed" in such a way that there is no connection to the git repository, no way

> to tell what git checkout it corresponds to. Help page just says "branch master", git-revision.h is empty. We

> should discourage such use of MIDAS and promote our "normal way" where for all MIDAS binary programs we know

> what source code and what git commit was used to build them.

Not sure if you have seen it. I made an "install" script to clone, compile and install midas. Some people use this already. Maybe give it a shot. Might need

adjustment for different systems, I certainly haven't covered all corner cases. But on a RaspberryPi it's then just one command to install midas, modify

the environment, install mhttpd as a service and load the ODB defaults. I know that some people want it "their way" and that's ok, but for the novice user

that might be a good starting point. It's documented here: https://daq00.triumf.ca/MidasWiki/index.php/Install_Script

The install script is plain shell, so it should be easy to understand.

> 6a) MIDAS on dl-server-2 had a pretty much non-functional history display, I reported it here, Stefan provided

> a fix, I manually retrofitted it into dl-server-2 MIDAS and we were able to run the experiment. (good)

>

> 6b) bug (5) suggests that there is more bugs being introduced and fixed without any notice to other midas

> users (via this forum or via the bitbucket bug tracker).

If I would notify everybody about a new bug I introduced, I would know that it's a bug and I would not introduce it ;-)

For all the fixes I encourage people to check the commit log. Doing an elog entry for every bug fix would be considered spam by many people because

that can be many emails per week. The commit log is here: https://bitbucket.org/tmidas/midas/commits/branch/develop

If somebody volunteers to consolidate all commits and make a monthly digest to be posted here, I'm all in favor, but I'm not that individual.

Stefan |

|

3203

|

06 Feb 2026 |

Stefan Ritt | Bug Report | omnibus bugs from running DarkLight | > 4) ODB editor "right click" to "delete" or "rename" key does not work, the right-click menu disappears

> immediately before I can use it (dl-server-2), click on item (it is now blue), right-click menu disappears

> before I can use it (daq17). it looks like a timing or race condition.

Confirmed and fixed: https://bitbucket.org/tmidas/midas/commits/4ba30761683ac9aa558471d2d2d35ce05e72096a

/Stefan |

|

3202

|

06 Feb 2026 |

Stefan Ritt | Bug Report | omnibus bugs from running DarkLight | > 3) ODB editor clicking on hex number versus decimal number no longer allows editing in hex, Stefan implemented

> this useful feature and it worked for a while, but now seems broken.

I cannot confirm. See below. There was some issue some time ago, but that has been fixed for a while. Please pull on develop and try again.

Here is the change: https://bitbucket.org/tmidas/midas/commits/882974260876529c43811c63a16b4a32395d416a

Stefan |

| Attachment 1: Screenshot_2026-02-06_at_12.44.16.png

|

|

|

3201

|

06 Feb 2026 |

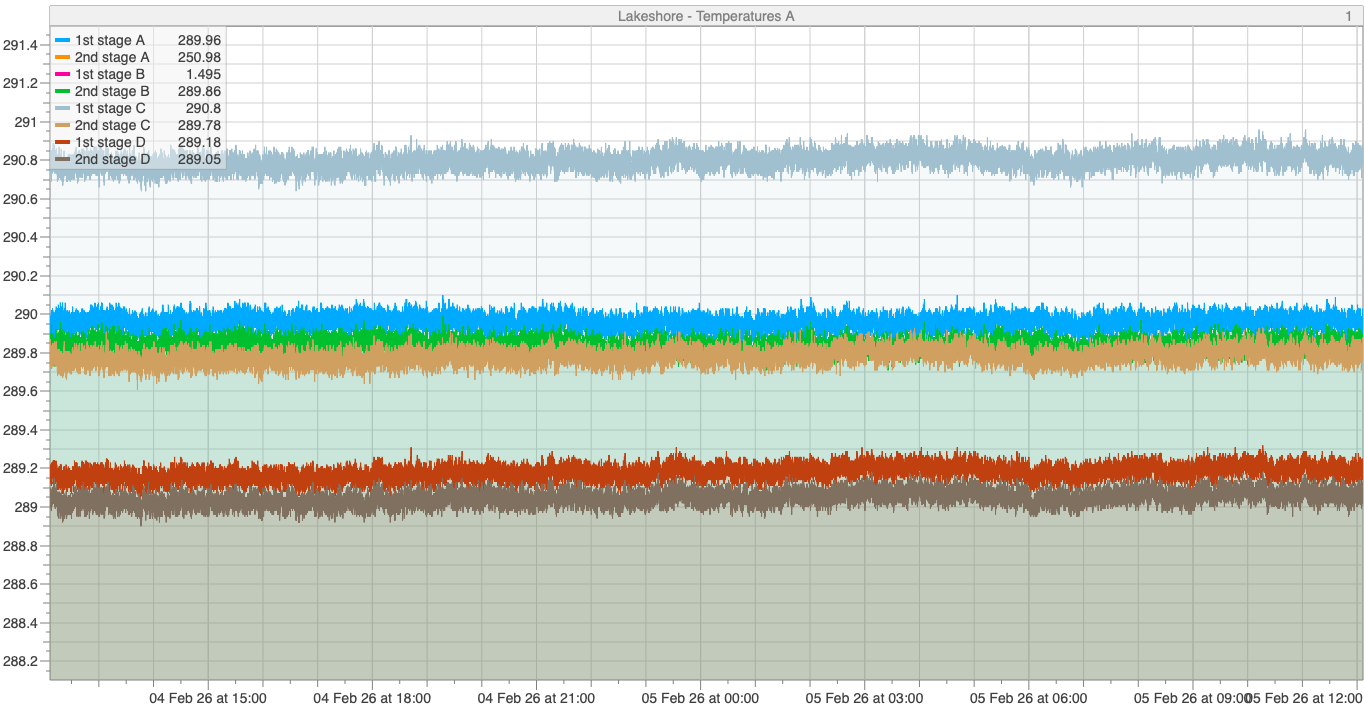

Stefan Ritt | Bug Report | omnibus bugs from running DarkLight | Thanks for the detailed report. Let me reply one-by-one.

> 1) history plots on 12 hrs, 24 hrs tend to hang with "page not responsive". most plots have 16-20 variables,

> which are recorded at 1/sec interval. (yes, we must see all the variables at the same time and yes, we want to

> record them with fine granularity).

Attached is a similar plot. 8 values recorded every second, displayed for 24h. The backend is actually a Raspberry Pi! I see no issues there. Do you have

the current history version which does the re-binning? Actually the plot below is still without rebinning (see the "1" at the top right), and it contains ~72000 points x 8. The browser does not have any issue

with it.

Stefan |

| Attachment 1: Lakeshore-Temperatures_A-20260204-120628-20260205-120628.png

|

|

|