| ID | Date | Author | Topic | Subject |

|

2165

|

12 May 2021 |

Stefan Ritt | Bug Report | mhttpd WebServer ODBTree initialization | > It looks like mhttpd managed to bind to the IPv4 address (localhost), but not the IPv6 address (::1). If you don't need it, try setting "/Webserver/Enable IPv6" to false.

We had this issue already several times. This info should be put into the documentation at a prominent location.

Stefan |
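A quick way to check whether a host shows the same IPv4/IPv6 asymmetry, independent of mhttpd, is to try binding a socket to each loopback address. This is only a minimal diagnostic sketch: the helper name `can_bind_loopback` is made up for illustration, and it does not reproduce what mhttpd does internally.

```python
import socket

def can_bind_loopback(family, address, port=0):
    """Return True if a TCP socket can be bound to the given loopback address.

    Port 0 asks the OS for any free port, so this does not clash with a running server.
    """
    try:
        with socket.socket(family, socket.SOCK_STREAM) as sock:
            sock.bind((address, port))
        return True
    except OSError:
        # Raised when the address family is unsupported or the address is unavailable.
        return False

ipv4_ok = can_bind_loopback(socket.AF_INET, "127.0.0.1")
ipv6_ok = can_bind_loopback(socket.AF_INET6, "::1")
print(f"IPv4 loopback bind: {ipv4_ok}, IPv6 loopback bind: {ipv6_ok}")
```

If the IPv6 bind fails while the IPv4 bind succeeds, setting "/Webserver/Enable IPv6" to false as suggested above is the likely remedy.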

|

2166

|

12 May 2021 |

Pierre Gorel | Bug Report | History formula not correctly managed | OS: OSX 10.14.6 Mojave

MIDAS: Downloaded from repo on April 2021.

I have a slow control frontend doing the command/readout of a MPOD HV/LV. Since I am reading out currents that are in nA (after updating snmp), I wanted to multiply the number by 1e9.

I noticed the new "Formula" field (introduced in 2019 it seems) instead of the "Factor/Offset" I was used to. None of my entries seems to be accepted (after hitting save, when coming back the field is empty).

Looking in ODB in "/History/Display/MPOD/HV (Current)/", the field "Formula" is a string of size 32 (even if I have multiple plots in that display). I noticed that the fields "Factor" and "Offset" still exist and they are arrays with the correct size. However, changing the values does not seem to do anything.

Deleting "Formula" by hand and creating a new field as an array of string (of correct length) seems to do the trick: the formula is displayed in the History display config, and correctly used. |
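To illustrate the relation between the two schemes described above, here is a small sketch that turns legacy Factor/Offset arrays into one formula string per plotted variable. It is an illustration only: the function names are made up, the assumption that a formula is an expression in a single variable `x` is mine, and the `eval` call is just a stand-in for whatever the plotting code really does.

```python
def legacy_to_formulas(factors, offsets):
    """Build one formula string per plotted variable from Factor/Offset arrays."""
    if len(factors) != len(offsets):
        raise ValueError("Factor and Offset arrays must have the same length")
    # One entry per variable: a single scalar string cannot hold all of them.
    return [f"{offset!r}+{factor!r}*x" for factor, offset in zip(factors, offsets)]

def apply_formula(formula, x):
    """Evaluate a formula string in the single variable x (illustration only)."""
    return eval(formula, {"__builtins__": {}}, {"x": x})

# Three plotted variables; the first two are scaled by 1e9 as in the report.
formulas = legacy_to_formulas([1e9, 1e9, 1.0], [0.0, 0.0, 0.0])
scaled = apply_formula(formulas[0], 2.5e-9)
print(formulas[0], "->", scaled)
```

The point is only that the formula list needs the same length as the Factor and Offset arrays, which matches the workaround of recreating "Formula" as a string array.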

|

2167

|

13 May 2021 |

Mathieu Guigue | Bug Report | mhttpd WebServer ODBTree initialization | > > It looks like mhttpd managed to bind to the IPv4 address (localhost), but not the IPv6 address (::1). If you don't need it, try setting "/Webserver/Enable IPv6" to false.

>

> We had this issue already several times. This info should be put into the documentation at a prominent location.

>

> Stefan

Thanks a lot, this solved my issue! |

|

2168

|

14 May 2021 |

Stefan Ritt | Bug Report | mhttpd WebServer ODBTree initialization | > Thanks a lot, this solved my issue!

... or we should turn IPv6 off by default, since not many people use this right now. |

|

2172

|

24 May 2021 |

Mathieu Guigue | Bug Report | Bug "is of type" | Hi,

I am running a simple FE executable that is supposed to define a PRAW DWORD bank.

The issue is that, right after the start of the run, the logger crashes without messages.

Then the FE reports this error, which is rather confusing.

```

12:59:29.140 2021/05/24 [feTestDatastruct,ERROR] [odb.cxx:6986:db_set_data1,ERROR] "/Equipment/Trigger/Variables/PRAW" is of type UINT32, not UINT32

``` |

|

2174

|

27 May 2021 |

Lukas Gerritzen | Bug Report | Wrong location for mysql.h on our Linux systems | Hi,

with the recent fix of the CMakeLists.txt, it seems like another bug surfaced.

In midas/progs/mlogger.cxx:48/49, the mysql header files are included without a

prefix. However, mysql.h and mysqld_error.h are in a subdirectory, so for our

systems, the lines should be

48 #include <mysql/mysql.h>

49 #include <mysql/mysqld_error.h>

This is the case with MariaDB 10.5.5 on OpenSuse Leap 15.2, MariaDB 10.5.5 on

Fedora Workstation 34 and MySQL 5.5.60 on Raspbian 10.

If this problem occurs for other Linux/MySQL versions as well, it should be

fixed in mlogger.cxx and midas/src/history_schema.cxx.

If this problem only occurs on some distributions or MySQL versions, it needs

some more differentiation than #ifdef OS_UNIX.

Also, this somehow seems familiar, wasn't there such a problem in the past? |

|

2176

|

27 May 2021 |

Nick Hastings | Bug Report | Wrong location for mysql.h on our Linux systems | Hi,

> with the recent fix of the CMakeLists.txt, it seems like another bug surfaced.

> In midas/progs/mlogger.cxx:48/49, the mysql header files are included without a

> prefix. However, mysql.h and mysqld_error.h are in a subdirectory, so for our

> systems, the lines should be

> 48 #include <mysql/mysql.h>

> 49 #include <mysql/mysqld_error.h>

> This is the case with MariaDB 10.5.5 on OpenSuse Leap 15.2, MariaDB 10.5.5 on

> Fedora Workstation 34 and MySQL 5.5.60 on Raspbian 10.

>

> If this problem occurs for other Linux/MySQL versions as well, it should be

> fixed in mlogger.cxx and midas/src/history_schema.cxx.

> If this problem only occurs on some distributions or MySQL versions, it needs

> some more differentiation than #ifdef OS_UNIX.

What does "mariadb_config --cflags" or "mysql_config --cflags" return on

these systems? For mariadb 10.3.27 on Debian 10 it returns both paths:

% mariadb_config --cflags

-I/usr/include/mariadb -I/usr/include/mariadb/mysql

Note also that mysql.h and mysqld_error.h reside in /usr/include/mariadb *not*

/usr/include/mariadb/mysql so using "#include <mysql/mysql.h>" would not work.

On CentOS 7 with mariadb 5.5.68:

% mysql_config --include

-I/usr/include/mysql

% ls -l /usr/include/mysql/mysql*.h

-rw-r--r--. 1 root root 38516 May 6 2020 /usr/include/mysql/mysql.h

-r--r--r--. 1 root root 76949 Oct 2 2020 /usr/include/mysql/mysqld_ername.h

-r--r--r--. 1 root root 28805 Oct 2 2020 /usr/include/mysql/mysqld_error.h

-rw-r--r--. 1 root root 24717 May 6 2020 /usr/include/mysql/mysql_com.h

-rw-r--r--. 1 root root 1167 May 6 2020 /usr/include/mysql/mysql_embed.h

-rw-r--r--. 1 root root 2143 May 6 2020 /usr/include/mysql/mysql_time.h

-r--r--r--. 1 root root 938 Oct 2 2020 /usr/include/mysql/mysql_version.h

So this seems to be the correct setup for both Debian and RHEL. If this is to

be worked around in Midas I would think it would be better to do it at the

cmake level than by putting another #ifdef in the code.

Cheers,

Nick. |
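The comparison above (which `-I` directories are reported, and which of them really holds mysql.h) can be scripted. Below is a rough sketch, assuming the flags string has the form printed by `mysql_config --cflags` or `mariadb_config --cflags`; the helper names `include_dirs_from_cflags` and `dirs_containing` are hypothetical.

```python
import os
import shlex

def include_dirs_from_cflags(cflags):
    """Extract the -I directories from a compiler flags string."""
    dirs = []
    tokens = iter(shlex.split(cflags))
    for token in tokens:
        if token == "-I":
            # Form "-I /path": the directory is the next token.
            path = next(tokens, None)
            if path:
                dirs.append(path)
        elif token.startswith("-I"):
            # Form "-I/path": the directory is attached to the flag.
            dirs.append(token[2:])
    return dirs

def dirs_containing(header, dirs):
    """Return the directories from dirs that contain the given header file."""
    return [d for d in dirs if os.path.isfile(os.path.join(d, header))]

# Flags string quoted above for mariadb 10.3.27 on Debian 10.
debian_dirs = include_dirs_from_cflags("-I/usr/include/mariadb -I/usr/include/mariadb/mysql")
print(debian_dirs)
print(dirs_containing("mysql.h", debian_dirs))
```

Running this on an affected system would show directly whether `#include <mysql.h>` or `#include <mysql/mysql.h>` can resolve with the reported flags.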

|

2180

|

28 May 2021 |

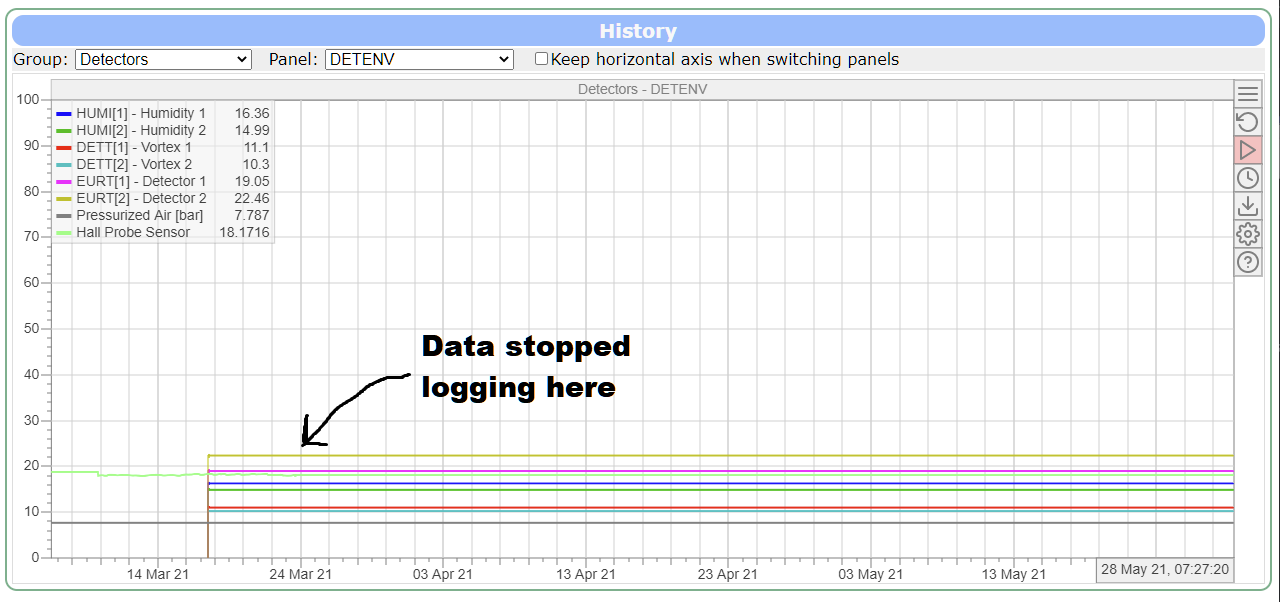

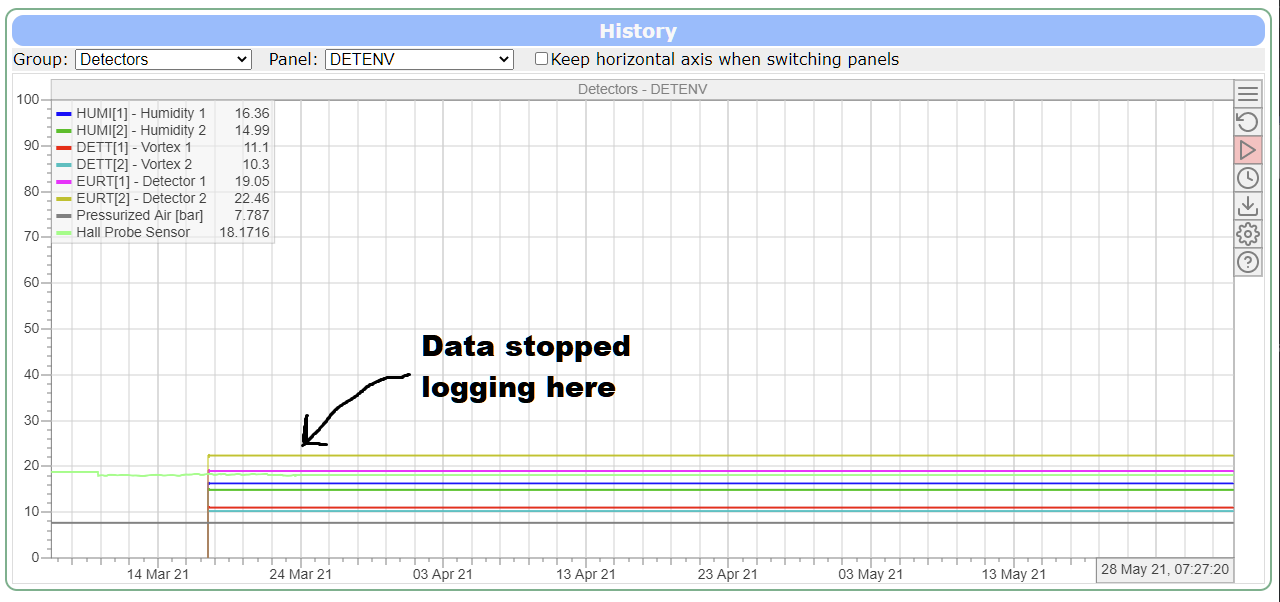

Joseph McKenna | Bug Report | History plots deceiving users into thinking data is still logging |

I have been trying to fix this myself but my javascript isn't strong... The 'new' history plot render fills in missing data with the last ODB value (even when this value is very old)...

elog:2180/1 shows this... The data logging stopped, but the history plot can fool users into thinking data is logging (the export button also generates CSVs with entries every 10 seconds). Grepping through the history files behind the scenes, I found only one match for an example variable from this plot, so it looks like there are no entries after March 24th (although I may be mistaken, I've not studied the history files' data structure in detail), i.e. this is an artifact from mhistory.js rather than the mlogger...

Have I missed something simple?

Would it be possible to not draw the line if there are no datapoints for a significant time? Or maybe render a dashed line that doesn't export to CSV?

Thanks in advance

Edit: I see certificate errors on this forum and I think it's preventing me from uploading an image... inlining it into the text here:

|

| Attachment 1: flatline.png

|

|

| Attachment 2: flatline.png

|

|

|

2181

|

28 May 2021 |

Stefan Ritt | Bug Report | History plots deceiving users into thinking data is still logging | This is a known problem and I'm working on it. See the discussion at:

https://bitbucket.org/tmidas/midas/issues/305/log_history_periodic-doesnt-account-for

Stefan |
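The suggestion of not drawing a line across long periods without data can be sketched as a gap split on the time axis. This is not the mhistory.js code and not the fix discussed in the linked issue, only a minimal illustration; the function name `split_on_gaps` and the 60 second threshold are assumptions.

```python
def split_on_gaps(points, max_gap):
    """Split (timestamp, value) points into segments wherever the time gap exceeds max_gap."""
    segments = []
    current = []
    previous_time = None
    for timestamp, value in points:
        if previous_time is not None and timestamp - previous_time > max_gap:
            # Gap too large: close the current segment and start a new one.
            segments.append(current)
            current = []
        current.append((timestamp, value))
        previous_time = timestamp
    if current:
        segments.append(current)
    return segments

# Data every 10 s, then nothing for 480 s, then data again.
points = [(0, 1.0), (10, 1.1), (20, 1.2), (500, 1.2), (510, 1.3)]
segments = split_on_gaps(points, max_gap=60)
print(len(segments))
```

Each segment would then be drawn as its own line, so a stopped logger shows up as a visible break rather than a flat line.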

|

2194

|

02 Jun 2021 |

Konstantin Olchanski | Bug Report | History plots deceiving users into thinking data is still logging | https://bitbucket.org/tmidas/midas/issues/305/log_history_periodic-doesnt-account-for

this problem is a blocker for the next midas release.

the best I can tell, current development version of midas writes history data incorrectly,

but I do not have time to look at it at this moment.

I recommend that people use the latest released version, midas-2020-12. (this is what we have on alphag and

should have in alpha2).

midas-2020-12 uses mlogger from midas-2020-08.

If I cannot find time to figure out what is going on in the mlogger,

the next release may have to be done the same way (with mlogger from midas-2020-08).

K.O. |

|

2196

|

02 Jun 2021 |

Konstantin Olchanski | Bug Report | Wrong location for mysql.h on our Linux systems | > % mariadb_config --cflags

> -I/usr/include/mariadb -I/usr/include/mariadb/mysql

I get similar, both .../include and .../include/mysql are in my include path,

so both #include "mysql/mysql.h" and #include "mysql.h" work.

I added a message to cmake to report the MySQL CFLAGS and libraries, so next time

this is a problem, we can see what happened from the cmake output:

4ed0:midas olchansk$ make cmake | grep MySQL

...

-- MIDAS: Found MySQL version 10.4.16

-- MIDAS: MySQL CFLAGS: -I/opt/local/include/mariadb-10.4/mysql;-I/opt/local/include/mariadb-10.4/mysql/mysql and libs: -L/opt/local/lib/mariadb-10.4/mysql/ -lmariadb

K.O. |

|

2199

|

02 Jun 2021 |

Konstantin Olchanski | Bug Report | Bug "is of type" | > Hi,

>

> I am running a simple FE executable that is supposed to define a PRAW DWORD bank.

> The issue is that, right after the start of the run, the logger crashes without messages.

> Then the FE reports this error, which is rather confusing.

> ```

> 12:59:29.140 2021/05/24 [feTestDatastruct,ERROR] [odb.cxx:6986:db_set_data1,ERROR] "/Equipment/Trigger/Variables/PRAW" is of type UINT32, not UINT32

> ```

I think this is fixed in latest midas. There was a typo in this message, the same tid was printed twice,

with result you report "mismatch UINT32 and UINT32", instead of "mismatch of UINT32 vs what is actually there".

This fixes the message, after that you have to manually fix the mismatch in the data type in ODB (delete old one, I guess).

K.O. |
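The kind of typo described here is easy to picture. The sketch below is not the odb.cxx code: the function names are made up, and the existing type "FLOAT" is a purely hypothetical example of what might really be in ODB.

```python
def buggy_message(path, existing_type, requested_type):
    """Reproduce the confusing message: the same type name is printed twice."""
    # Assumed form of the typo: the requested type is used in both places.
    return f'"{path}" is of type {requested_type}, not {requested_type}'

def fixed_message(path, existing_type, requested_type):
    """Print the type found in ODB first, then the type the caller asked for."""
    return f'"{path}" is of type {existing_type}, not {requested_type}'

path = "/Equipment/Trigger/Variables/PRAW"
print(buggy_message(path, "FLOAT", "UINT32"))
print(fixed_message(path, "FLOAT", "UINT32"))
```

With the corrected message the actual mismatch becomes visible, and the stale ODB entry can then be fixed by hand as described above.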

|

2200

|

02 Jun 2021 |

Konstantin Olchanski | Bug Report | mhttpd WebServer ODBTree initialization | > > Thanks a lot, this solved my issue!

>

> ... or we should turn IPv6 off by default, since not many people use this right now.

IPv6 certainly works and is used at CERN.

But I am not sure why people see this message. I do not see it on any machines at

TRIUMF, even those with IPv6 turned off.

K.O. |

|

2201

|

02 Jun 2021 |

Konstantin Olchanski | Bug Report | History formula not correctly managed | > OS: OSX 10.14.6 Mojave

> MIDAS: Downloaded from repo on April 2021.

>

> I have a slow control frontend doing the command/readout of a MPOD HV/LV. Since I am reading out the current that are in nA (after updating snmp), I wanted to multiply the number by 1e9.

>

> I noticed the new "Formula" field (introduced in 2019 it seems) instead of the "Factor/Offset" I was used to. None of my entries seems to be accepted (after hitting save, when coming back the field is empty).

>

> Looking in ODB in "/History/Display/MPOD/HV (Current)/", the field "Formula" is a string of size 32 (even if I have multiple plots in that display). I noticed that the fields "Factor" and "Offset" are still existing and they are arrays with the correct size. However, changing the values does not seem to do anything.

>

> Deleting "Formula" by hand and creating a new field as an array of string (of correct length) seems to do the trick: the formula is displayed in the History display config, and correctly used.

I see this, too. Problem is that the history plot code must be compatible with both

the old scheme (factor/offset) and the new scheme (formula). But something goes wrong somewhere.

https://bitbucket.org/tmidas/midas/issues/307/history-plot-config-incorrect-in-odb

Why?

- new code cannot do "3 year" plots, old code has no problem with it

- old experiments (alpha1, etc) have only the old-style history plot definitions,

and both old and new plotting code should be able to show them (there is nobody

to convert this old stuff to the "new way", but we still desire to be able to look at it!)

K.O. |

|

2203

|

04 Jun 2021 |

Andreas Suter | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | Hi,

if I check out midas and try to configure it with

cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

I do get the error messages:

Target "midas" INTERFACE_INCLUDE_DIRECTORIES property contains path:

"<path>/tmidas/midas/include"

which is prefixed in the source directory.

Is the cmake setup not relocatable? This is new and was working until recently:

MIDAS version: 2.1

GIT revision: Thu May 27 12:56:06 2021 +0000 - midas-2020-08-a-295-gfd314ca8-dirty on branch HEAD

ODB version: 3 |

|

2204

|

04 Jun 2021 |

Konstantin Olchanski | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | > cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

good timing, I am working on cmake for manalyzer and rootana and I have not tested

the install prefix business.

now I know to test it for all 3 packages.

I will also change find_package(Midas) slightly, (see my other message here),

I hope you can confirm that I do not break it for you.

K.O. |

|

2206

|

04 Jun 2021 |

Konstantin Olchanski | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | > cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

> Is the cmake setup not relocatable? This is new and was working until recently:

Indeed. Not relocatable. This is because we do not install the header files.

When you use the CMAKE_INSTALL_PREFIX, you get MIDAS "installed" in:

prefix/lib

prefix/bin

$MIDASSYS/include <-- this is the source tree and so not "relocatable"!

Before, this was kludged and cmake did not complain about it.

Now I changed cmake to handle the include path "the cmake way", and now it knows to complain about it.

I am not sure how to fix this: we have a conflict between:

- our normal way of using midas (include $MIDASSYS/include, link $MIDASSYS/lib, run $MIDASSYS/bin)

- the cmake way (packages *must be installed* or else! but I do like install(EXPORT)!)

- and your way (midas include files are in $MIDASSYS/include, everything else is in your special location)

I think your case is strange. I am curious why you want midas libraries to be in prefix/lib instead of in

$MIDASSYS/lib (in the source tree), but are happy with header files remaining in the source tree.

K.O. |

|

2208

|

04 Jun 2021 |

Andreas Suter | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | > > cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

> > Is the cmake setup not relocatable? This is new and was working until recently:

>

> Indeed. Not relocatable. This is because we do not install the header files.

>

> When you use the CMAKE_INSTALL_PREFIX, you get MIDAS "installed" in:

>

> prefix/lib

> prefix/bin

> $MIDASSYS/include <-- this is the source tree and so not "relocatable"!

>

> Before, this was kludged and cmake did not complain about it.

>

> Now I changed cmake to handle the include path "the cmake way", and now it knows to complain about it.

>

> I am not sure how to fix this: we have a conflict between:

>

> - our normal way of using midas (include $MIDASSYS/include, link $MIDASSYS/lib, run $MIDASSYS/bin)

> - the cmake way (packages *must be installed* or else! but I do like install(EXPORT)!)

> - and your way (midas include files are in $MIDASSYS/include, everything else is in your special location)

>

> I think your case is strange. I am curious why you want midas libraries to be in prefix/lib instead of in

> $MIDASSYS/lib (in the source tree), but are happy with header files remaining in the source tree.

>

> K.O.

We do it this way, since the lib and bin needs to be in a place where standard users have no access to.

If I think of all the other packages I am working with, e.g. ROOT, the includes are also installed under CMAKE_INSTALL_PREFIX.

Up until recently there was no issue to work with CMAKE_INSTALL_PREFIX, accepting that the includes stay under

$MIDASSYS/include, even though this is not quite the standard way, but no problem here. Anyway, since CMAKE_INSTALL_PREFIX

is a standard option from cmake, I think things should not "break" if you want to use it.

A.S. |

|

2210

|

08 Jun 2021 |

Konstantin Olchanski | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | > > > cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

> > > Is the cmake setup not relocatable? This is new and was working until recently:

> > Not relocatable. This is because we do not install the header files.

>

> We do it this way, since the lib and bin needs to be in a place where standard users have no access to.

hmm... i did not get this. "needs to be in a place where standard users have no access to". what do you

mean by this? you install midas in a secret location to prevent somebody from linking to it?

> If I think of all the other packages I am working with, e.g. ROOT, the includes are also installed under CMAKE_INSTALL_PREFIX.

cmake and other frameworks tend to be like procrustean beds (https://en.wikipedia.org/wiki/Procrustes),

pre-cmake packages never quite fit perfectly, and either the legs or the heads get cut off. post-cmake packages

are constructed to fit the bed, whether it makes sense or not.

given how this situation is known since antiquity, I doubt we will solve it today here.

(I exercise my freedom of speech rights to state that I object being put into

such situations. And I would like to have it clear that I hate cmake (ask me why)).

>

> Up until recently there was no issue to work with CMAKE_INSTALL_PREFIX, accepting that the includes stay under

> $MIDASSYS/include, even though this is not quite the standard way, but no problem here.

>

I think a solution would be to add install rules for include files. There will be a bit of trouble,

normal include path is $MIDASSYS/include,$MIDASSYS/mxml,$MIDASSYS/mjson,etc, after installing

it will be $CMAKE_INSTALL_PREFIX/include (all header files from different git submodules all

dumped into one directory). I do not know what problems will show up from that.

I think if midas is used as a subproject of a bigger project, this is pretty much required

(and I have seen big experiments, like STAR and ND280, do this type of stuff with CMT,

another horror and the historical precursor of cmake)

The problem is that we do not have any super-project like this here, so I cannot ever

be sure that I have done everything correctly. cmake itself can be helpful, like

in the current situation where it told us about a problem. but I will never trust

cmake completely, I see cmake do crazy and unreasonable things way too often.

One solution would be for you or somebody else to contribute such a cmake super-project,

that would build midas as a subproject, install it with a CMAKE_INSTALL_PREFIX and

try to link some trivial frontend or analyzer to check that everything is installed

correctly. It would become an example for "how to use midas as a subproject").

Ideally, it should be usable in a bitbucket automatic build (assuming bitbucket

has correct versions of cmake, which it does not half the time).

P.S. I already spent half-a-week tinkering with cmake rules, only to discover

that I broke a kludge that allows you to do something strange (if I have it right,

the CMAKE_INSTALL_PREFIX code is your contribution). This does not encourage

me to tinker with cmake even more. who knows against what other

kludge I bump into. (oh, yes, I know, I already bumped into the nonsense

find_package(Midas) implementation).

K.O. |

|

2211

|

09 Jun 2021 |

Andreas Suter | Bug Report | cmake with CMAKE_INSTALL_PREFIX fails | > > > > cmake ../ -DCMAKE_INSTALL_PREFIX=/usr/local/midas

> > > > Is the cmake setup not relocatable? This is new and was working until recently:

> > > Not relocatable. This is because we do not install the header files.

> >

> > We do it this way, since the lib and bin needs to be in a place where standard users have no access to.

>

> hmm... i did not get this. "needs to be in a place where standard users have no access to". what do you

> mean by this? you install midas in a secret location to prevent somebody from linking to it?

>

That was poor wording on my side. We do not want users to have write access to the midas installation libs and bins.

I have submitted a pull request which should resolve this without interfering with your usage.

Hope this will resolve the issue. |

|