| ID | Date | Author | Topic | Subject |

| 2719 | 27 Feb 2024 | Pavel Murat | Forum | displaying integers in hex format ? | Dear MIDAS Experts,

I'm having an odd problem when trying to display an integer stored in the ODB on a custom web page: the hex specifier "%x" displays integers as if it were "%d".

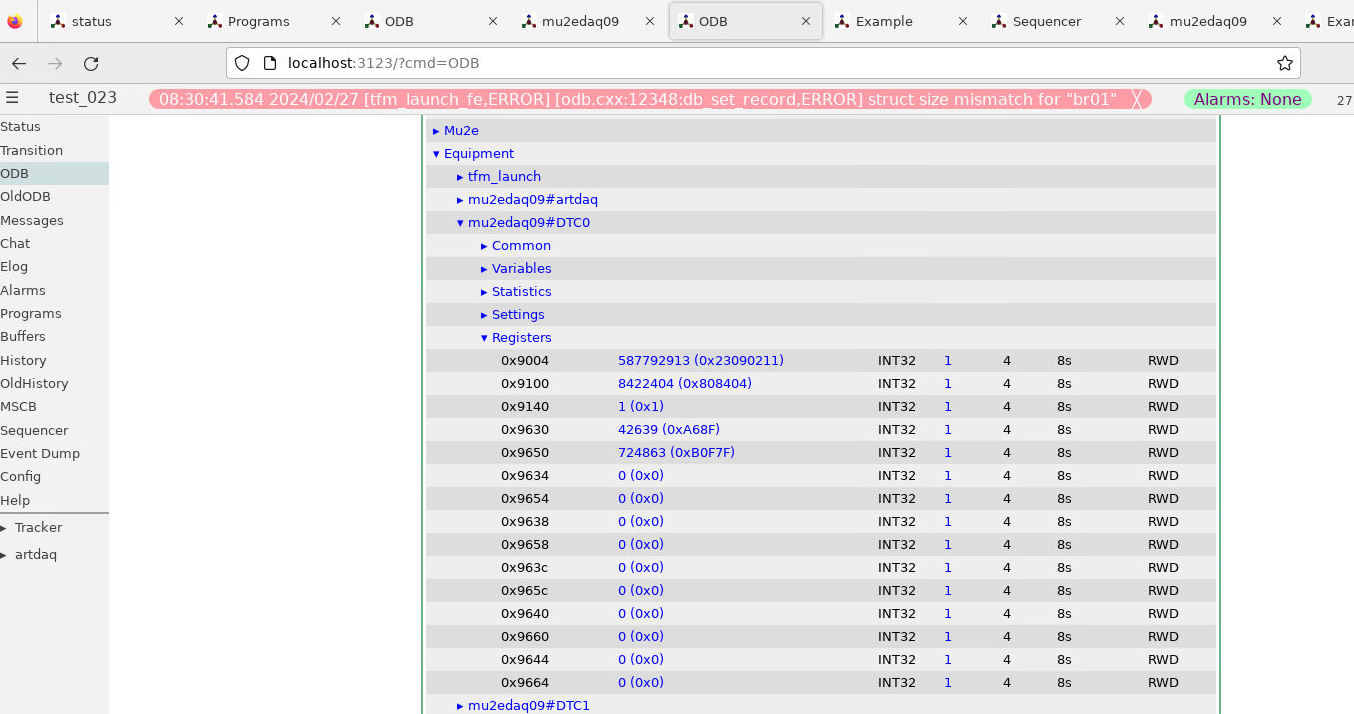

- attachment 1 shows the layout and the contents of the ODB sub-tree in question

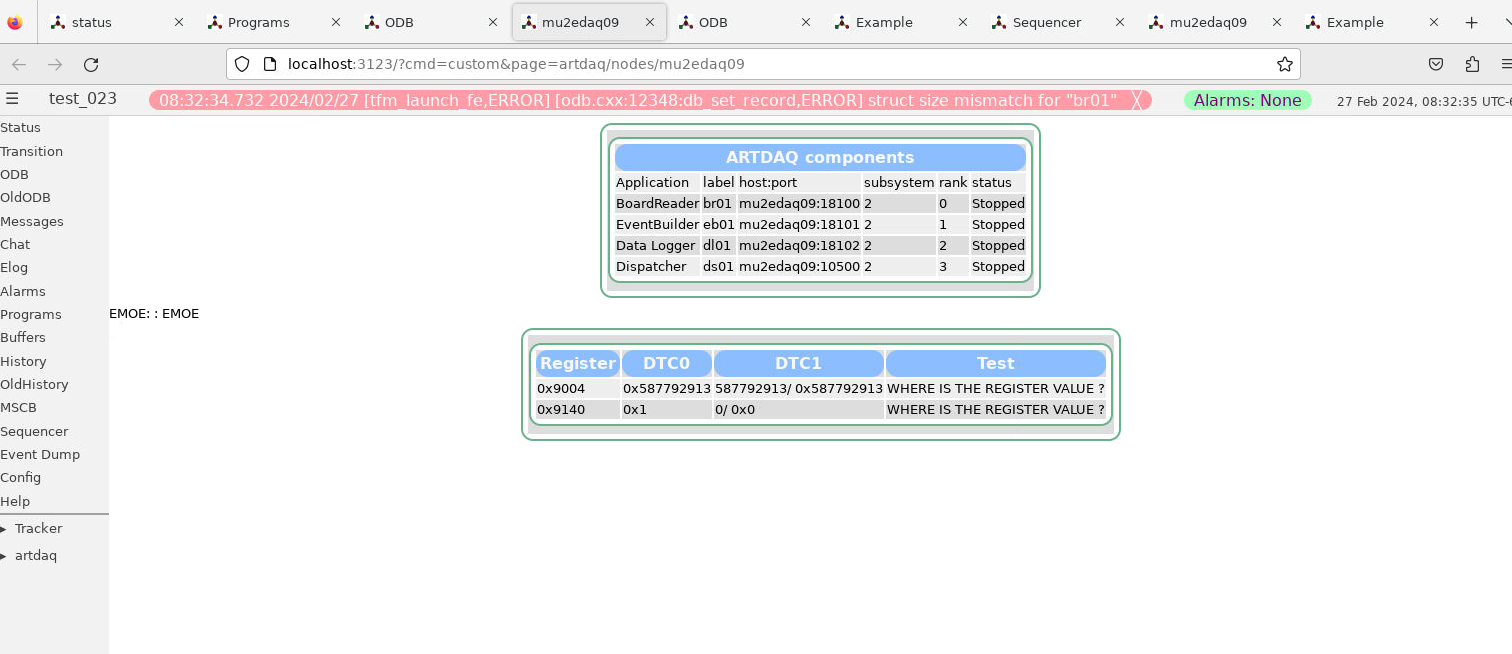

- attachment 2 shows the web page as it is displayed

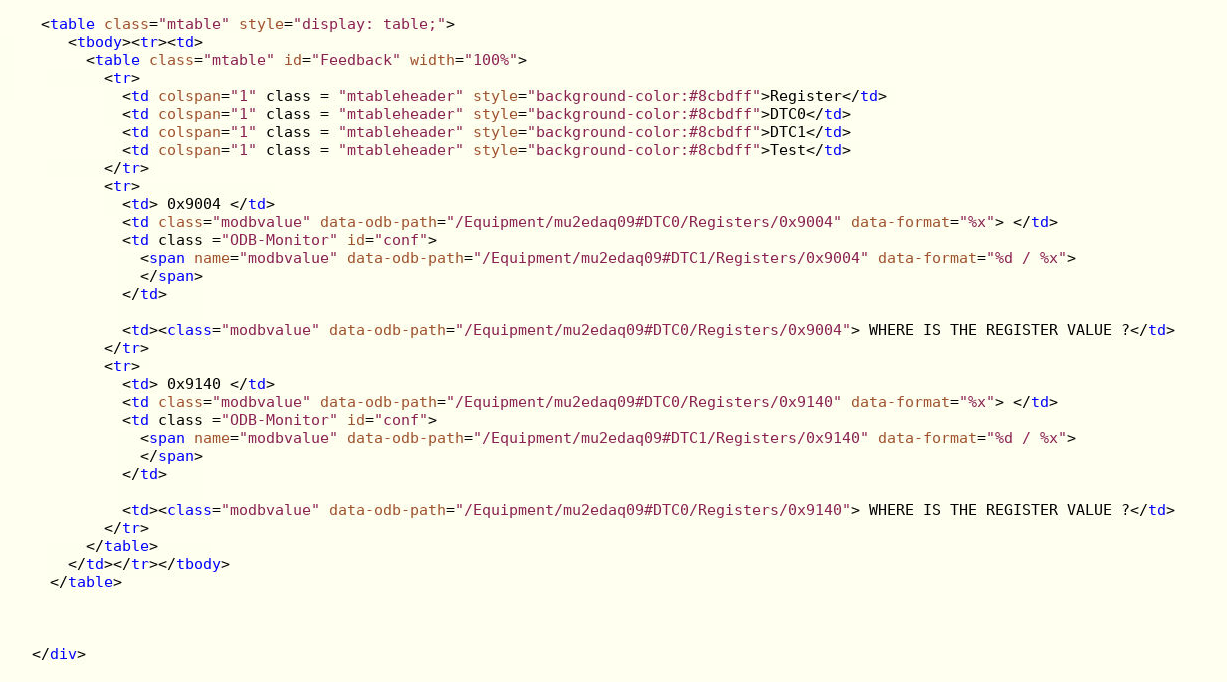

- attachment 3 shows the snippet of html/js producing the web page

I bet I'm missing something trivial; any advice is greatly appreciated!

Also, is there an equivalent of a "0x%04x" specifier to format the output as a fixed-length string?

-- thanks, regards, Pasha |

| Attachment 1: 2024_02_27_dtc_registers_in_odb.png

| Attachment 2: 2024_02_27_custom_page.png

| Attachment 3: 2024_02_27_custom_page_html.png

| 2718 | 26 Feb 2024 | Maia Henriksson-Ward | Forum | mserver ERR message saying data area 100% full, though it is free | > Hi,

>

> I have just installed Midas and set-up the ODB for a SuperCDMS test-facility (on

> a SL6.7 machine). All works fine except that I receive the following error message:

>

> [mserver,ERROR] [odb.c:944:db_validate_db,ERROR] Warning: database data area is

> 100% full

>

> Which is puzzling for the following reason:

>

> -> I have created the ODB with: odbedit -s 4194304

> -> Checking the size of the .ODB.SHM it says: 4.2M

> -> When I save the ODB as .xml and check the file's size it says: 1.1M

> -> When I start odbedit and check the memory usage issuing 'mem', it says:

> ...

> Free Key area: 1982136 bytes out of 2097152 bytes

> ...

> Free Data area: 2020072 bytes out of 2097152 bytes

> Free: 1982136 (94.5%) keylist, 2020072 (96.3%) data

>

> So it seems like nearly all memory is still free. As a test I created more

> instances of one of our front-ends and checked 'mem' again. As expected the free

> memory was decreasing. I did this ten times in fact, reaching

>

> ...

> Free Key area: 1440976 bytes out of 2097152 bytes

> ...

> Free Data area: 1861264 bytes out of 2097152 bytes

> Free: 1440976 (68.7%) keylist, 1861264 (88.8%) data

>

> So I could use another >20% of the database data area, which is according to the

> error message 100% (resp. >95%) full. Am I misunderstanding the error message?

> I'd appreciate any comments or ideas on that subject!

>

> Thanks, Belina

This is an old post, but I recently encountered the same error message and was looking for a solution here. Here's how I solved it, for anyone else who finds this:

The size of .ODB.SHM was bigger than the maximum ODB size (4.2M > 4194304 in Belina's case). For us, the very large ODB size was itself an error, and I suspect it happened because we forgot to shut down MIDAS cleanly before shutting the computer down. Using odbedit to load a previously saved copy of the ODB did not get .ODB.SHM back to a normal size. Following the instructions on the wiki for recovering from a corrupted ODB, https://daq00.triumf.ca/MidasWiki/index.php/FAQ#How_to_recover_from_a_corrupted_ODB (`odbinit` with the `--cleanup` option), should work, but didn't for me. Unfortunately, I didn't save the output to figure out why. My solution was to manually delete/move/hide the .ODB.SHM file and an equally large file called .ODB.SHM.1701109528, then run odbedit again and reload that same saved copy of my ODB.

Manually changing files used by mserver is risky. For anyone who has the same problem, I suggest trying `odbinit --cleanup -s <yoursize>` first. |
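One caveat when comparing the two numbers: a size printed as "4.2M" is ambiguous. With binary prefixes (powers of 1024, the default for `ls -lh` and `du -h`), a file of exactly 4194304 bytes is shown as 4.0M, so "4.2M" really is larger; with decimal prefixes, 4194304 bytes is itself about 4.2 MB:

$$
4194304\ \mathrm{B} = 4 \times 2^{20}\ \mathrm{B} = 4.0\ \mathrm{MiB},
\qquad
4194304\ \mathrm{B} = 4.194304 \times 10^{6}\ \mathrm{B} \approx 4.2\ \mathrm{MB}
$$

Checking the exact byte count, for example with `ls -l` or `stat`, avoids the ambiguity.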

| 2717 | 19 Feb 2024 | Pavel Murat | Forum | number of entries in a given ODB subdirectory ? | > > Hmm... is there any use case where you want to know the number of directory entries, but you will not iterate

> > over them later?

>

> I agree.

Here is the use case:

I have a slow control frontend which monitors several DAQ components (software processes). The components are listed in the system configuration stored in the ODB, one subkey per component. Each component has its own driver, so the length of the driver list, defined by the number of components, needs to be determined at run time.

I calculate the number of components by iterating over the list of component subkeys in the system configuration, allocate space for the driver list, and store the pointer to the driver list in the equipment record. The approach works, but it does require pre-calculating the number of subkeys of a given key.

-- regards, Pasha |

| 2716 | 18 Feb 2024 | Frederik Wauters | Forum | dump history FILE files | > $ cat mhf_1697445335_20231016_run_transitions.dat

> event name: [Run transitions], time [1697445335]

> tag: tag: /DWORD 1 4 /timestamp

> tag: tag: UINT32 1 4 State

> tag: tag: UINT32 1 4 Run number

> record size: 12, data offset: 1024

> ...

>

> data is in fixed-length record format. from the file header, you read "record size" is 12 and data starts at offset 1024.

>

> the 12 bytes of the data record are described by the tags:

> 4 bytes of timestamp (DWORD, unix time)

> 4 bytes of State (UINT32)

> 4 bytes of "Run number" (UINT32)

>

> endianness is "local endian", which means "little endian", as we have no big-endian hardware anymore to test endian conversions.

>

> file format is designed for reading using read() or mmap().

>

> and you are right, mhdump does not work on these files. I guess I can write another utility that does what I just described and spews the numbers to stdout.

>

> K.O.

Thanks for the answer. As this FILE system is advertised as the new default (elog:2617), this format merits some more documentation on the wiki. |

| 2715 | 15 Feb 2024 | Stefan Ritt | Forum | number of entries in a given ODB subdirectory ? | > Hmm... is there any use case where you want to know the number of directory entries, but you will not iterate

> over them later?

I agree.

One more way to iterate over subkeys by name is to use the new odbxx API:

    #include <iostream>
    #include "odbxx.h"

    // assumes the program is already connected to the experiment
    midas::odb tree("/Test/Settings");
    for (midas::odb& key : tree)
       std::cout << key.get_name() << std::endl;

Stefan |

| 2714 | 15 Feb 2024 | Konstantin Olchanski | Forum | number of entries in a given ODB subdirectory ? | > > You are right, num_values is always 1 for TID_KEYS. The number of subkeys is stored in

> > `((KEYLIST *) ((char *)pheader + pkey->data))->num_keys`

> > Maybe we should add a function to return this. But so far db_enum_key() was enough.

Hmm... is there any use case where you want to know the number of directory entries, but you will not iterate over them later?

K.O. |

| 2713 | 15 Feb 2024 | Konstantin Olchanski | Forum | number of entries in a given ODB subdirectory ? | > > > For ODB keys of type TID_KEY, the value num_values IS the number of subkeys.

> >

> > this logic makes sense, however it doesn't seem to be consistent with the printout of the test example

> > at the end of https://daq00.triumf.ca/elog-midas/Midas/240203_095803/a.cc . The printout reports

> >

> > key.num_values = 1, but the actual number of subkeys = 6, and all subkeys being of TID_KEY type

> >

> > I'm certain that the ODB subtree in question was not accessed concurrently during the test.

>

> You are right, num_values is always 1 for TID_KEYS. The number of subkeys is stored in

>

> `((KEYLIST *) ((char *)pheader + pkey->data))->num_keys`

>

> Maybe we should add a function to return this. But so far db_enum_key() was enough.

>

> Stefan

I would rather add a function that atomically returns an `std::vector<KEY>`. The number of entries is the vector size, and the entry names are in key.name. If you need to do something with an entry, like iterate a subdirectory, you have to go by name (not by HNDLE), and if somebody deleted it, you get an error "entry deleted, tough!". (A HNDLE, by contrast, becomes invalid without any error message about it: a subsequent db_get_data() likely returns gibberish, and a subsequent db_set_data() likely corrupts the ODB.)

K.O. |

| 2712 | 14 Feb 2024 | Konstantin Olchanski | Info | bitbucket permissions | I pushed some buttons in bitbucket user groups and permissions to make it happy wrt recent changes.

The intended configuration is this:

- two user groups: admins and developers
- admins have full control over the workspace, project and repositories ("Admin" permission)
- developers have push permission for all repositories ("Write" permission), but not the "create repository" permission, which is limited to admins
- there seems to be a quirk: admins also need to be in the developers group, or some things do not work (like "run pipeline", which set me off into doing all this)
- the admins' "Admin" permission is set at the "workspace" level and is inherited down to the project and repository level
- the developers' "Write" permission is set at the "project" level and is inherited down to the repository level
- individual repositories in the "MIDAS" project also seem to have explicit (non-inherited) permissions; I think this is redundant, and I will probably remove them at some point (not today)

K.O. |

| 2711 | 14 Feb 2024 | Konstantin Olchanski | Bug Fix | added ubuntu-22 to nightly build on bitbucket, now need python! | > Are we running these tests as part of the nightly build on bitbucket? They would be part of

> the "make test" target. Correct python dependencies may need to be added to the bitbucket OS

> image in bitbucket-pipelines.yml. (This is a PITA to get right.)

I added ubuntu-22 to the nightly builds, but I notice the build says "no python", and I am not sure what packages I need to install for MIDAS python to work.

Ben, can you help me with this?

https://bitbucket.org/tmidas/midas/pipelines/results/1106/steps/%7B9ef2cf97-bd9f-4fd3-9ca2-9c6aa5e20828%7D

K.O. |

| 2710 | 13 Feb 2024 | Konstantin Olchanski | Bug Fix | string --> int64 conversion in the python interface ? | > > The symptoms are consistent with a string --> int64 conversion not happening

> > where it is needed.

>

> Thanks for the report Pasha. Indeed I was missing a conversion in one place. Fixed now!

>

Are we running these tests as part of the nightly build on bitbucket? They would be part of the "make test" target. Correct python dependencies may need to be added to the bitbucket OS image in bitbucket-pipelines.yml. (This is a PITA to get right.)

K.O. |

| 2709 | 13 Feb 2024 | Konstantin Olchanski | Forum | forbidden equipment names ? | > equipment names are 'mu2edaq09:DTC0' and 'mu2edaq09:DTC1'

I think all names permitted for ODB keys are allowed as equipment names: any valid UTF-8. The forbidden characters are "/" (the ODB path separator) and "\0" (the C string terminator). The maximum length is 31 bytes (plus the "\0" string terminator). (Fixed-length 32-byte names with an implied terminator are no longer permitted.)

The ":" character is used in history plot definitions, and we are likely to eventually change that: history event names used to be pairs "equipment_name:tag_name", but these days, with per-variable history, they are triplets "equipment_name,variable_name,tag_name". The history plot editor and the corresponding ODB entries need to be updated for this. Then ":" will again be valid in equipment names.

I think if you disable the history for your equipments, MIDAS will stop complaining about ":" in the name.

K.O. |

| 2708 | 13 Feb 2024 | Stefan Ritt | Forum | number of entries in a given ODB subdirectory ? | > > For ODB keys of type TID_KEY, the value num_values IS the number of subkeys.

>

> this logic makes sense, however it doesn't seem to be consistent with the printout of the test example

> at the end of https://daq00.triumf.ca/elog-midas/Midas/240203_095803/a.cc . The printout reports

>

> key.num_values = 1, but the actual number of subkeys = 6, and all subkeys being of TID_KEY type

>

> I'm certain that the ODB subtree in question was not accessed concurrently during the test.

You are right, num_values is always 1 for TID_KEYS. The number of subkeys is stored in

`((KEYLIST *) ((char *)pheader + pkey->data))->num_keys`

Maybe we should add a function to return this. But so far db_enum_key() was enough.

Stefan |

| 2707 | 12 Feb 2024 | Konstantin Olchanski | Info | MIDAS and ROOT 6.30 | Starting around ROOT 6.30, there is a new dependency requirement for nlohmann-json3-dev from https://github.com/nlohmann/json.

If you use an Ubuntu-22 ROOT binary kit from root.cern.ch, the MIDAS build will bomb with the error: Could not find a package configuration file provided by "nlohmann_json"

Per https://root.cern/install/dependencies/, install it:

`apt install nlohmann-json3-dev`

After this MIDAS builds ok.

K.O. |

| 2706 | 11 Feb 2024 | Pavel Murat | Forum | number of entries in a given ODB subdirectory ? | > For ODB keys of type TID_KEY, the value num_values IS the number of subkeys.

This logic makes sense; however, it doesn't seem to be consistent with the printout of the test example at the end of https://daq00.triumf.ca/elog-midas/Midas/240203_095803/a.cc. The printout reports key.num_values = 1, but the actual number of subkeys is 6, all of them of TID_KEY type.

I'm certain that the ODB subtree in question was not accessed concurrently during the test.

-- regards, Pasha |

| 2705 | 08 Feb 2024 | Stefan Ritt | Forum | number of entries in a given ODB subdirectory ? | > Konstantin is right: KEY.num_values is not the same as the number of subkeys (should it be ?)

For ODB keys of type TID_KEY, the value num_values IS the number of subkeys. The only issue here is what KO mentioned already: if you obtain num_values and start iterating, someone else might change the number of subkeys in the meantime, and then your (old) num_values is off. Therefore it's always good to check the return status of all subkey accesses. To do a truly atomic access to a subtree, you need db_copy(), but then you have to parse the JSON yourself, and again you have no guarantee that the ODB hasn't changed in the meantime.

Stefan |

| 2704 | 05 Feb 2024 | Pavel Murat | Forum | forbidden equipment names ? | Dear MIDAS experts,

I have multiple DAQ nodes, each with two data-receiving FPGAs on the PCIe bus. The FPGAs come under the names DTC0 and DTC1, and both are managed by the same slow control frontend. To distinguish the FPGAs of different nodes from each other, I included the hostname in the equipment name, so for node=mu2edaq09 the FPGA names are 'mu2edaq09:DTC0' and 'mu2edaq09:DTC1'.

The history system didn't like the names, complaining that

`21:26:06.334 2024/02/05 [Logger,ERROR] [mlogger.cxx:5142:open_history,ERROR] Equipment name 'mu2edaq09:DTC1' contains characters ':', this may break the history system`

So the question is: what are the safe equipment/driver naming rules, and what characters are not allowed in them? I think this is worth documenting, and the current MIDAS docs at https://daq00.triumf.ca/MidasWiki/index.php/Equipment_List_Parameters#Equipment_Name don't say much about it.

-- many thanks, regards, Pasha |

| 2703 | 05 Feb 2024 | Ben Smith | Bug Fix | string --> int64 conversion in the python interface ? | > The symptoms are consistent with a string --> int64 conversion not happening

> where it is needed.

Thanks for the report Pasha. Indeed I was missing a conversion in one place. Fixed now!

Ben |

| 2702 | 03 Feb 2024 | Pavel Murat | Bug Report | string --> int64 conversion in the python interface ? | Dear MIDAS experts,

I tried the MIDAS python interface and ran all the tests available in midas/python/tests. Two Int64 tests from test_odb.py failed (see below); everything else succeeded. I'm using a ~2.5-week-old commit and python 3.9 on an SL7 Linux platform.

commit c19b4e696400ee437d8790b7d3819051f66da62d (HEAD -> develop, origin/develop, origin/HEAD)

Author: Zaher Salman <zaher.salman@gmail.com>

Date: Sun Jan 14 13:18:48 2024 +0100

The symptoms are consistent with a string --> int64 conversion not happening where it is needed. Perhaps the issue has already been fixed?

-- many thanks, regards, Pasha

-------------------------------------------------------------------------------------------

Traceback (most recent call last):

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 178, in testInt64

self.set_and_readback_from_parent_dir("/pytest", "int64_2", [123, 40000000000000000], midas.TID_INT64, True)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 130, in set_and_readback_from_parent_dir

self.validate_readback(value, retval[key_name], expected_key_type)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 87, in validate_readback

self.assert_equal(val, retval[i], expected_key_type)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 60, in assert_equal

self.assertEqual(val1, val2)

AssertionError: 123 != '123'

with the test on line 178 commented out, the test on the next line fails in a similar way:

Traceback (most recent call last):

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 179, in testInt64

self.set_and_readback_from_parent_dir("/pytest", "int64_2", 37, midas.TID_INT64, True)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 130, in set_and_readback_from_parent_dir

self.validate_readback(value, retval[key_name], expected_key_type)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 102, in validate_readback

self.assert_equal(value, retval, expected_key_type)

File "/home/mu2etrk/test_stand/pasha_020/midas/python/tests/test_odb.py", line 60, in assert_equal

self.assertEqual(val1, val2)

AssertionError: 37 != '37'

--------------------------------------------------------------------------- |

| 2701 | 03 Feb 2024 | Pavel Murat | Forum | number of entries in a given ODB subdirectory ? | Konstantin is right: KEY.num_values is not the same as the number of subkeys (should it be ?)

For those looking for an example in the future, I attach a working piece of code converted from the ChatGPT example, together with its printout.

-- regards, Pasha |

| Attachment 1: a.cc

    #include <stdio.h>
    #include <stdlib.h>
    #include "midas.h"

    int main(int argc, char **argv) {
       HNDLE hDB, hKey;
       INT   status, num_subkeys;
       KEY   key;

       status = cm_connect_experiment(NULL, NULL, "Example", NULL);
       if (status != CM_SUCCESS) {
          printf("Error: Cannot connect to the experiment\n");
          return 1;
       }
       cm_get_experiment_database(&hDB, NULL);

       char dir[] = "/ArtdaqConfigurations/demo/mu2edaq09.fnal.gov";
       status = db_find_key(hDB, 0, dir, &hKey);
       if (status != DB_SUCCESS) {
          printf("Error: Cannot find the ODB directory\n");
          cm_disconnect_experiment();
          return 1;
       }
       //-----------------------------------------------------------------------------
       // Iterate over all subkeys in the directory
       // note: key.num_values is NOT the number of subkeys in the directory
       //-----------------------------------------------------------------------------
       db_get_key(hDB, hKey, &key);
       printf("key.num_values: %d\n", key.num_values);

       HNDLE hSubkey;
       KEY   subkey;
       num_subkeys = 0;
       // stop at DB_NO_MORE_SUBKEYS, and also on any other error status
       for (int i = 0; db_enum_key(hDB, hKey, i, &hSubkey) == DB_SUCCESS; ++i) {
          db_get_key(hDB, hSubkey, &subkey);
          printf("Subkey %d: %s, Type: %u\n", i, subkey.name, subkey.type);
          num_subkeys++;
       }
       printf("number of subkeys: %d\n", num_subkeys);

       // Disconnect from MIDAS
       cm_disconnect_experiment();
       return 0;
    }

------------------------------------------------------ output:

    mu2etrk@mu2edaq09:~/test_stand>test_001
    key.num_values: 1
    Subkey 0: BoardReader_01, Type: 15
    Subkey 1: BoardReader_02, Type: 15
    Subkey 2: EventBuilder_01, Type: 15
    Subkey 3: EventBuilder_02, Type: 15
    Subkey 4: DataLogger_01, Type: 15
    Subkey 5: Dispatcher_01, Type: 15
    number of subkeys: 6

---------------------------------------------------------------

|

| 2699 | 29 Jan 2024 | Konstantin Olchanski | Forum | a scroll option for "add history variables" window? | Familiar situation: "too much data", you dice it or slice it, still too much. BTW, you can try to generate history plot ODB entries from your program instead of from the history plot editor. K.O. |

|