| ID |

Date |

Author |

Topic |

Subject |

|

2731

|

01 Apr 2024 |

Konstantin Olchanski | Info | xz-utils bomb out, compression benchmarks | you may have heard the news of a major problem with the xz-utils project, authors of the popular "xz" file compression tool,

https://nvd.nist.gov/vuln/detail/CVE-2024-3094

the debian bug tracker is interesting reading on this topic, "750 commits or contributions to xz by Jia Tan, who backdoored it",

https://bugs.debian.org/cgi-bin/bugreport.cgi?bug=1068024

and apparently there are problems with the design of the .xz file format, making it vulnerable to single-bit errors and unreliable checksums,

https://www.nongnu.org/lzip/xz_inadequate.html

this moved me to review the status of file compression in MIDAS.

MIDAS does not use or recommend xz compression, and MIDAS programs do not link to the xz and lzma libraries provided by xz-utils.

mlogger has built-in support for:

- gzip-1, enabled by default, as the safest and most bog-standard compression method

- bzip2 and pbzip2, as providing the best compression

- lz4, for high data rate situations where gzip and bzip2 cannot keep up with the data

compression benchmarks on an AMD 7700 CPU (8-core, DDR5 RAM) confirm the usual speed-vs-compression tradeoff:

note: observe that for both lz4 and pbzip2 the compression time (about 9 seconds) is dominated by the time it takes to read the file from the ZFS cache, around 6 seconds.

note: decompression ranks in a similar order: lz4 is fastest (1 sec), then pbzip2 (3 sec, using ~14 CPUs) and gzip (7 sec, 1 CPU); bzip2 is about 10x slower than pbzip2 (30 sec, 1 CPU).

note: because of the fast decompression speed, gzip remains competitive.

no compression: 6 sec, 270 MiBytes/sec

lz4, pbzip2: 9 sec, about 180 MiBytes/sec (pbzip2 uses ~11 CPUs vs 1 CPU for lz4)

gzip -1: 21 sec, 78 MiBytes/sec

bzip2: 74 sec, 22 MiBytes/sec (same algorithm as pbzip2, but using 1 CPU instead of ~11)

file sizes:

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ ls -lSr test.mid*

-rw-r--r-- 1 dsdaqdev users 483319523 Apr 1 14:06 test.mid.bz2

-rw-r--r-- 1 dsdaqdev users 631575929 Apr 1 14:06 test.mid.gz

-rw-r--r-- 1 dsdaqdev users 1002432717 Apr 1 14:06 test.mid.lz4

-rw-r--r-- 1 dsdaqdev users 1729327169 Apr 1 14:06 test.mid

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$

actual benchmarks:

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time cat test.mid > /dev/null

0.00user 6.00system 0:06.00elapsed 99%CPU (0avgtext+0avgdata 1408maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time gzip -1 -k test.mid

14.70user 6.42system 0:21.14elapsed 99%CPU (0avgtext+0avgdata 1664maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time lz4 -k -f test.mid

2.90user 6.44system 0:09.39elapsed 99%CPU (0avgtext+0avgdata 7680maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time bzip2 -k -f test.mid

64.76user 8.81system 1:13.59elapsed 99%CPU (0avgtext+0avgdata 8448maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time pbzip2 -k -f test.mid

86.76user 15.39system 0:09.07elapsed 1125%CPU (0avgtext+0avgdata 114596maxresident)k

decompression benchmarks:

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time lz4cat test.mid.lz4 > /dev/null

0.68user 0.23system 0:00.91elapsed 99%CPU (0avgtext+0avgdata 7680maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time zcat test.mid.gz > /dev/null

6.61user 0.23system 0:06.85elapsed 99%CPU (0avgtext+0avgdata 1408maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time bzcat test.mid.bz2 > /dev/null

27.99user 1.59system 0:29.58elapsed 99%CPU (0avgtext+0avgdata 4656maxresident)k

(vslice) dsdaqdev@dsdaqgw:/zdata/vslice$ /usr/bin/time pbzip2 -dc test.mid.bz2 > /dev/null

37.32user 0.56system 0:02.75elapsed 1377%CPU (0avgtext+0avgdata 157036maxresident)k

K.O. |

|

2733

|

02 Apr 2024 |

Konstantin Olchanski | Info | Sequencer editor | > Stefan and I have been working on improving the sequencer editor ...

Looks grand! Congratulations on getting it completed. The previous version was

my rewrite of the old generated-C pages into html+javascript, nothing to write

home about; I even kept the 1990s-style html formatting and styling as much as

possible.

K.O. |

|

2734

|

02 Apr 2024 |

Konstantin Olchanski | Bug Report | Midas (manalyzer) + ROOT 6.31/01 - compilation error | > I found solution for my trouble. With MIDAS and ROOT everything is OK,

> the trouble was with my Ubuntu environment.

Congratulations on figuring this out.

BTW, this is the 2nd case of a contaminated linker environment I have run into in the last 30 days. We

just had a problem of "cannot link MIDAS with ROOT" (resolved by "make cmake NO_ROOT=1 NO_CURL=1

NO_MYSQL=1").

This all seems to be a flaw in cmake, it reports "found ROOT at XXX", "found CURL at YYY", "found

MYSQL at ZZZ", then proceeds to link ROOT, CURL and MYSQL libraries from somewhere else,

resulting in shared library version mismatch.

With normal Makefiles, this is fixable by changing the link command from:

g++ -o rmlogger ... -LAAA/lib -LXXX/lib -LYYY/lib -lcurl -lmysql -lROOT

into explicit

g++ -o rmlogger ... -LAAA/lib XXX/lib/libcurl.a YYY/lib/libmysql.a ...

defeating the bogus CURL and MYSQL libraries in AAA.

With cmake, I do not think it is possible to make this transformation.

Maybe it is possible to add a cmake rule to at least detect this situation, i.e. compare library

paths reported by "ldd rmlogger" to those found and expected by cmake.

K.O. |

|

2735

|

04 Apr 2024 |

Konstantin Olchanski | Info | MIDAS RPC data format | I am not sure I have seen this documented before, so here is the MIDAS RPC data format.

1) RPC request (from client to mserver), in rpc_call_encode()

1.1) header:

4 bytes NET_COMMAND.header.routine_id is the RPC routine ID

4 bytes NET_COMMAND.header.param_size is the size of following data, aligned to 8 bytes

1.2) followed by values of RPC_IN parameters:

arg_size is the actual data size

param_size = ALIGN8(arg_size)

for TID_STRING||TID_LINK, arg_size = 1+strlen()

for TID_STRUCT||RPC_FIXARRAY, arg_size is taken from RPC_LIST.param[i].n

for RPC_VARARRAY|RPC_OUT, arg_size is pointed to by the next argument

for RPC_VARARRAY, arg_size is the value of the next argument

otherwise arg_size = rpc_tid_size()

data encoding:

RPC_VARARRAY:

4 bytes of ALIGN8(arg_size)

4 bytes of padding

param_size bytes of data

TID_STRING||TID_LINK:

param_size of string data, zero terminated

otherwise:

param_size of data

2) RPC dispatch in rpc_execute

for each parameter, a pointer is placed into prpc_param[i]:

RPC_IN: points to the data inside the receive buffer

RPC_OUT: points to the data buffer allocated inside the send buffer

RPC_IN|RPC_OUT: data is copied from the receive buffer to the send buffer, prpc_param[i] is a pointer to the copy in the send buffer

prpc_param[] is passed to the user handler function.

user function reads RPC_IN parameters by using the CSTRING(i), etc. macros to dereference prpc_param[i]

user function modifies RPC_IN|RPC_OUT parameters pointed to by prpc_param[i] (directly in the send buffer)

user function places RPC_OUT data directly to the send buffer pointed to by prpc_param[i]

size of RPC_VARARRAY|RPC_OUT data should be written into the next/following parameter.

3) RPC reply

3.1) header:

4 bytes NET_COMMAND.header.routine_id contains the value returned by the user function (RPC_SUCCESS)

4 bytes NET_COMMAND.header.param_size is the size of following data aligned to 8 bytes

3.2) followed by data for RPC_OUT parameters:

data sizes and encodings are the same as for RPC_IN parameters.

for variable-size RPC_OUT parameters, space is allocated in the send buffer according to the maximum data size

that the user code expects to receive:

RPC_VARARRAY||TID_STRING: max_size is taken from the first 4 bytes of the *next* parameter

otherwise: max_size is same as arg_size and param_size.

when encoding and sending RPC_VARARRAY data, actual data size is taken from the next parameter, which is expected to be

TID_INT32|RPC_IN|RPC_OUT.

4) Notes:

4.1) RPC_VARARRAY should always be sent using two parameters:

a) RPC_VARARRAY|RPC_IN is a pointer to the data we are sending; the next parameter must be TID_INT32|RPC_IN containing the data size

b) RPC_VARARRAY|RPC_OUT is a pointer to the data buffer for received data; the next parameter must be TID_INT32|RPC_IN|RPC_OUT: before the call it should

contain the maximum data size we expect to receive (size of the malloc() buffer), after the call it may contain the actual data size returned

c) RPC_VARARRAY|RPC_IN|RPC_OUT is a pointer to the data we are sending; the next parameter must be TID_INT32|RPC_IN|RPC_OUT containing the maximum

data size we expect to receive.

4.2) during dispatching, RPC_VARARRAY|RPC_OUT and TID_STRING|RPC_OUT both have 8 bytes of special header preceding the actual data, 4 bytes of

maximum data size and 4 bytes of padding. prpc_param[] points to the actual data and the user does not see this special header.

4.3) when encoding outgoing data, this special 8 byte header is removed from TID_STRING|RPC_OUT parameters using memmove().

4.4) TID_STRING parameters:

TID_STRING|RPC_IN can be sent using one parameter

TID_STRING|RPC_OUT must be sent using two parameters, second parameter should be TID_INT32|RPC_IN to specify maximum returned string length

TID_STRING|RPC_IN|RPC_OUT ditto, but not used anywhere inside MIDAS

4.5) TID_INT32|RPC_VARARRAY does not work, it corrupts the following parameters. MIDAS only uses TID_ARRAY|RPC_VARARRAY

4.6) TID_STRING|RPC_IN|RPC_OUT does not seem to work.

4.7) RPC_VARARRAY does not work if there is a preceding TID_STRING|RPC_OUT that returned a short string. memmove() moves data in the send buffer,

which makes prpc_param[] pointers into the send buffer invalid. a subsequent RPC_VARARRAY parameter uses its now-invalid prpc_param[i] pointer to

get param_size and gets the wrong value. MIDAS does not use this sequence of RPC parameters.

4.8) same bug is in the processing of TID_STRING|RPC_OUT parameters, where it refers to invalid prpc_param[i] to get the string length.

K.O. |

|

2736

|

15 Apr 2024 |

Konstantin Olchanski | Bug Report | open MIDAS RPC ports | we had a bit of trouble with open network ports recently and I now think security of MIDAS RPC

ports needs to be tightened.

TL;DR, this is a non-trivial network configuration problem, TL required, DR up to you.

as background, right now the ODB setting "/expt/security/enable non-localhost RPC" has two

possible values: "no" (the default) and "yes". Set to "no" is very secure: all RPC sockets

listen only on the "localhost" interface (127.0.0.1) and do not accept connections from other

computers. Set to "yes", RPC sockets accept connections from everywhere in the world, but

immediately close them without reading any data unless the connection origins are listed in ODB

"/expt/security/RPC hosts" (white-listed).

the problem, one. for security and robustness we place most equipment on a private network

(192.168.1.x). MIDAS frontends running on this equipment must connect to MIDAS running on

the main computer. This requires setting "enable non-localhost RPC" to "yes" and white-listing

all private network equipment. so far so good.

the problem, one, continued. in this configuration, the MIDAS main computer is usually also

the network gateway (with NAT, IP forwarding, DHCP, DNS, etc). so now MIDAS RPC ports are open

to all external connections (in the absence of restrictive firewall rules). one would hope for

security-through-obscurity and expect that "external threat actors" will not bother with them,

but in reality we eventually see large numbers of rejected unwanted connections logged in

midas.log (we log the first 10 rejected connections to help with maintaining the RPC

connections white-list).

the problem, two. central IT do not like open network ports. they run their scanners, discover

the MIDAS RPC ports, complain about them, require lengthy explanations, etc.

it would be much better if in the typical configuration, MIDAS RPC ports did not listen on

external interfaces (campus network). only listen on localhost and on private network

interfaces (192.168.1.x).

I am not yet sure of the simplest way to implement this, but I think this is the direction we

should go.

P.S. what about firewall rules? two problems: one: from statistic-of-one, I make mistakes

writing firewall rules, others also will make mistakes, a literally fool-proof protection of

MIDAS RPC ports is needed. two: RHEL-derived Linuxes by-default have restrictive firewall

rules, and this is good for security, except that there is a failure mode where at boot time

something can go wrong and firewall rules are not loaded at all. we have seen this happen.

this is a complete disaster on a system that depends on firewall rules for security. better to

have secure applications (TCP ports protected by design and by app internals) with firewall

rules providing a secondary layer of protection.

P.P.S. what about MIDAS frontend initial connection to the mserver? this is currently very

insecure, but the vulnerability window is very small. Ideally we should rework the mserver

connection to make it simpler, more secure and compatible with SSH tunneling.

P.P.S. Typical network diagram:

internet - campus firewall - campus network - MIDAS host (MIDAS) - 192.168.1.x network - power

supplies, digitizers, MIDAS frontends.

P.P.S. mserver connection sequence:

1) midas frontend opens 3 tcp sockets, connections permitted from anywhere

2) midas frontend opens tcp socket to main mserver, sends port numbers of the 3 tcp sockets

3) main mserver forks out a secondary (per-client) mserver

4) secondary mserver connects to the 3 tcp sockets of the midas frontend created in (1)

5) from here midas rpc works

6) midas frontend loads the RPC white-list

7) from here MIDAS RPC sockets are secure (protected by the white-list).

(the 3 sockets are: RPC recv_sock, RPC send_sock and event_sock)

P.P.S. MIDAS UDP sockets used for event buffer and odb notifications are secure, they bind to

localhost interface and do not accept external connections.

K.O. |

|

2738

|

24 Apr 2024 |

Konstantin Olchanski | Info | MIDAS RPC data format | > 4.5) TID_INT32|RPC_VARARRAY does not work, corrupts following parameters. MIDAS only uses TID_ARRAY|RPC_VARARRAY

fixed in commit 0f5436d901a1dfaf6da2b94e2d87f870e3611cf1. TID_ARRAY|RPC_VARARRAY was okay (i.e. db_get_value()); the bug happened only if rpc_tid_size()

is not zero.

>

> 4.6) TID_STRING|RPC_IN|RPC_OUT does not seem to work.

>

> 4.7) RPC_VARARRAY does not work if there is preceding TID_STRING|RPC_OUT that returned a short string. memmove() moves stuff in the send buffer,

> this makes prpc_param[] pointers into the send buffer invalid. subsequent RPC_VARARRAY parameter refers to now-invalid prpc_param[i] pointer to

> get param_size and gets the wrong value. MIDAS does not use this sequence of RPC parameters.

>

> 4.8) same bug is in the processing of TID_STRING|RPC_OUT parameters, where it refers to invalid prpc_param[i] to get the string length.

fixed in commits e45de5a8fa81c75e826a6a940f053c0794c962f5 and dc08fe8425c7d7bfea32540592b2c3aec5bead9f

K.O. |

|

2739

|

24 Apr 2024 |

Konstantin Olchanski | Info | MIDAS RPC add support for std::string and std::vector<char> | I now fully understand the MIDAS RPC code: I had to add some debugging printfs,

write some test code (odbedit test_rpc), and catch and fix a few bugs.

Fixes for the bugs are now committed.

Small refactor of rpc_execute() should be committed soon, this removes the

"goto" in the memory allocation of output buffer. Stefan's original code used a

fixed-size buffer; I later added allocation "as-needed" but did not fully

understand everything and implemented it as "if buffer too small, make it

bigger, goto start over again".

After that, I can implement support for std::string and std::vector<char>.

The way it looks right now, the on-the-wire data format is flexible enough to

make this change backward-compatible and allow MIDAS programs built with old

MIDAS to continue connecting to the new MIDAS and vice-versa.

MIDAS RPC support for std::string should let us improve security by removing

even more uses of fixed-size string buffers.

Support for std::vector<char> will allow removal of the last places where

MAX_EVENT_SIZE is used and simplify memory allocation in other "give me data"

RPC calls, like RPC_JRPC and RPC_BRPC.

K.O. |

|

1833

|

14 Feb 2020 |

Konrad Briggl | Forum | Writting Midas Events via FPGAs | Hello Stefan,

is there a difference for the later data processing (after writing the ring buffer blocks)

if we write single events or multiple in one rb_get_wp - memcopy - rb_increment_wp cycle?

Both Marius and I have seen some inconsistencies in the number of events produced that is reported in the status page when writing multiple events in one go,

so I was wondering if this is due to us treating the buffer badly or to the way midas handles the events after that.

Given that we produce the full event in our (FPGA) domain, an option would be to always copy one event from the dma to the midas-system buffer in a loop.

The question is if there is a difference (for midas) between

[pseudo code, much simplified]

while(dma_read_index < last_dma_write_index){
    if(rb_get_wp(rbh, (void **)&pdata, 10)!=DB_SUCCESS){
        dma_read_index+=event_size;
        continue;
    }
    copy_n(dma_buffer+dma_read_index, event_size, pdata);
    rb_increment_wp(rbh, event_size);
    dma_read_index+=event_size;
}

and

while(dma_read_index < last_dma_write_index){
    if(rb_get_wp(rbh, (void **)&pdata, 10)!=DB_SUCCESS){
        ...
    }
    total_size=max_n_events_that_fit_in_rb_block();
    copy_n(dma_buffer+dma_read_index, total_size, pdata);
    rb_increment_wp(rbh, total_size);
    dma_read_index+=total_size;
}

Cheers,

Konrad

> The rb_xxx function are (thoroughly tested!) robust against high data rate given that you use them as intended:

>

> 1) Once you create the ring buffer via rb_create(), specify the maximum event size (overall event size, not bank size!). Later there is no protection any more, so if you obtain pdata from rb_get_wp, you can of course write 4GB to pdata, overwriting everything in your memory, causing a total crash. It's your responsibility to not write more bytes into pdata than

> what you specified as max event size in rb_create()

>

> 2) Once you obtain a write pointer to the ring buffer via rb_get_wp, this function might fail when the receiving side reads data slower than the producing side, simply because the buffer is full. In that case the producing side has to wait until space is freed up in the buffer by the receiving side. If your call to rb_get_wp returns DB_TIMEOUT, it means that the

> function did not obtain enough free space for the next event. In that case you have to wait (like ss_sleep(10)) and try again, until you succeed. Only when rb_get_wp() returns DB_SUCCESS, you are allowed to write into pdata, up to the maximum event size specified in rb_create of course. I don't see this behaviour in your code. You would need something

> like

>

> do {

> status = rb_get_wp(rbh, (void **)&pdata, 10);

> if (status == DB_TIMEOUT)

> ss_sleep(10);

> } while (status == DB_TIMEOUT);

>

> Best,

> Stefan

>

>

> > Dear all,

> >

> > we are creating Midas events directly inside an FPGA and sending them via DMA into the PC RAM. For reading out this RAM via Midas, the FPGA sends us a pointer to where it has written the last 4kB of data. We use this pointer for telling the ring buffer of midas where the new events are. The buffer looks something like:

> >

> > // event 1

> > dma_buf[0] = 0x00000001; // Trigger and Event ID

> > dma_buf[1] = 0x00000001; // Serial number

> > dma_buf[2] = TIME; // time

> > dma_buf[3] = 18*4-4*4; // event size

> > dma_buf[4] = 18*4-6*4; // all bank size

> > dma_buf[5] = 0x11; // flags

> > // bank 0

> > dma_buf[6] = 0x46454230; // bank name

> > dma_buf[7] = 0x6; // bank type TID_DWORD

> > dma_buf[8] = 0x3*4; // data size

> > dma_buf[9] = 0xAFFEAFFE; // data

> > dma_buf[10] = 0xAFFEAFFE; // data

> > dma_buf[11] = 0xAFFEAFFE; // data

> > // bank 1


> > dma_buf[12] = 0x46454231; // bank name

> > dma_buf[13] = 0x6; // bank type TID_DWORD

> > dma_buf[14] = 0x3*4; // data size

> > dma_buf[15] = 0xAFFEAFFE; // data

> > dma_buf[16] = 0xAFFEAFFE; // data

> > dma_buf[17] = 0xAFFEAFFE; // data

> >

> > // event 2

> > .....

> >

> > dma_buf[fpga_pointer] = 0xXXXXXXXX;

> >

> >

> > And we do something like:

> >

> > while (true) {
> >     // obtain buffer space
> >     status = rb_get_wp(rbh, (void **)&pdata, 10);
> >     fpga_pointer = fpga.read_last_data_add();
> >
> >     wlen = fpga_pointer - last_fpga_pointer; // in 32 bit words
> >     copy_n(&dma_buf[last_fpga_pointer], wlen, pdata);
> >     rb_status = rb_increment_wp(rbh, wlen * 4); // in bytes
> >
> >     last_fpga_pointer = fpga_pointer;
> > }

> >

> > Leaving out the case where dma_buf wraps around, this works fine for a small data rate. But if we increase the rate, the fpga_pointer also increases really fast and wlen gets quite big. Actually it gets bigger than max_event_size, which is checked in rb_increment_wp, leading to an error.

> >

> > The problem now is that the event size is actually not too big, but we have multiple events in the buffer which are read by midas in one step. So we think in this case the function rb_increment_wp is actually comparing the wrong thing. Also, increasing the max_event_size does not help.

> >

> > Remark: dma_buf is volatile so memcpy is not possible here.

> >

> > Cheers,

> > Marius |

|

357

|

02 Mar 2007 |

Kevin Lynch | Forum | event builder scalability | > Hi there:

> I have a question if there's anybody out there running MIDAS with an event builder

> that assembles events from more than just a few front ends (say on the order of

> 0x10 or more)?

> Any experiences with scalability?

>

> Cheers

> Piotr

Mulan (which you hopefully remember with great fondness :-) is currently running

around ten frontends, six of which produce data at any rate. If I'm remembering

correctly, the event builder handles about 30-40MB/s. You could probably ping Tim

Gorringe or his current postdoc Volodya Tishenko (tishenko@pa.uky.edu) if you want

more details. Volodya solved a significant number of throughput related

bottlenecks in the year leading up to our 2006 run. |

|

1225

|

15 Dec 2016 |

Kevin Giovanetti | Bug Report | midas.h error | Creating a frontend on macOS Sierra (OS X 10.12):

I include the midas.h file, and when compiling with Xcode I get an error from

this entry in the midas.h include file:

#if !defined(OS_IRIX) && !defined(OS_VMS) && !defined(OS_MSDOS) && \
    !defined(OS_UNIX) && !defined(OS_VXWORKS) && !defined(OS_WINNT)

#error MIDAS cannot be used on this operating system

#endif

Perhaps I should not use Xcode?

Perhaps I won't need midas.h?

The MIDAS system is running on my Mac, but I need to add a very simple frontend

for testing and I encountered this error. |

|

1404

|

30 Oct 2018 |

Joseph McKenna | Bug Report | Side panel auto-expands when history page updates |

One can collapse the side panel when looking at history pages with the button in

the top left, great! We want to see many pages, so screen real estate is important.

The issue we face is that when the page refreshes, the side panel expands. Can

we make the panel state more 'sticky'?

Many thanks

Joseph (ALPHA)

Version: 2.1

Revision: Mon Mar 19 18:15:51 2018 -0700 - midas-2017-07-c-197-g61fbcd43-dirty

on branch feature/midas-2017-10 |

|

1406

|

31 Oct 2018 |

Joseph McKenna | Bug Report | Side panel auto-expands when history page updates | > >

> >

> > One can collapse the side panel when looking at history pages with the button in

> > the top left, great! We want to see many pages so screen real estate is important

> >

> > The issue we face is that when the page refreshes, the side panel expands. Can

> > we make the panel state more 'sticky'?

> >

> > Many thanks

> > Joseph (ALPHA)

> >

> > Version: 2.1

> > Revision: Mon Mar 19 18:15:51 2018 -0700 - midas-2017-07-c-197-g61fbcd43-dirty

> > on branch feature/midas-2017-10

>

> Hi Joseph,

>

> In principle a page refresh should now not be necessary, since pages should reload automatically

> the contents which changes. If a custom page needs a reload, it is not well designed. If necessary, I

> can explain the details.

>

> Anyhow I implemented your "stickyness" of the side panel in the last commit to the develop branch.

>

> Best regards,

> Stefan

Hi Stefan,

I apologise for misusing the word refresh. The re-appearing sidebar was also seen with the automatic

reload; I have applied your fix here and it now works great!

Thank you very much!

Joseph |

|

Draft

|

14 Oct 2019 |

Joseph McKenna | Forum | tmfe.cxx - Future frontend design | Hi,

I have been looking at the 2019 workshop slides, I am interested in the C++ future of MIDAS.

I am quite interested in using the object oriented

ALPHA will start data taking in 2021 |

|

1727

|

18 Oct 2019 |

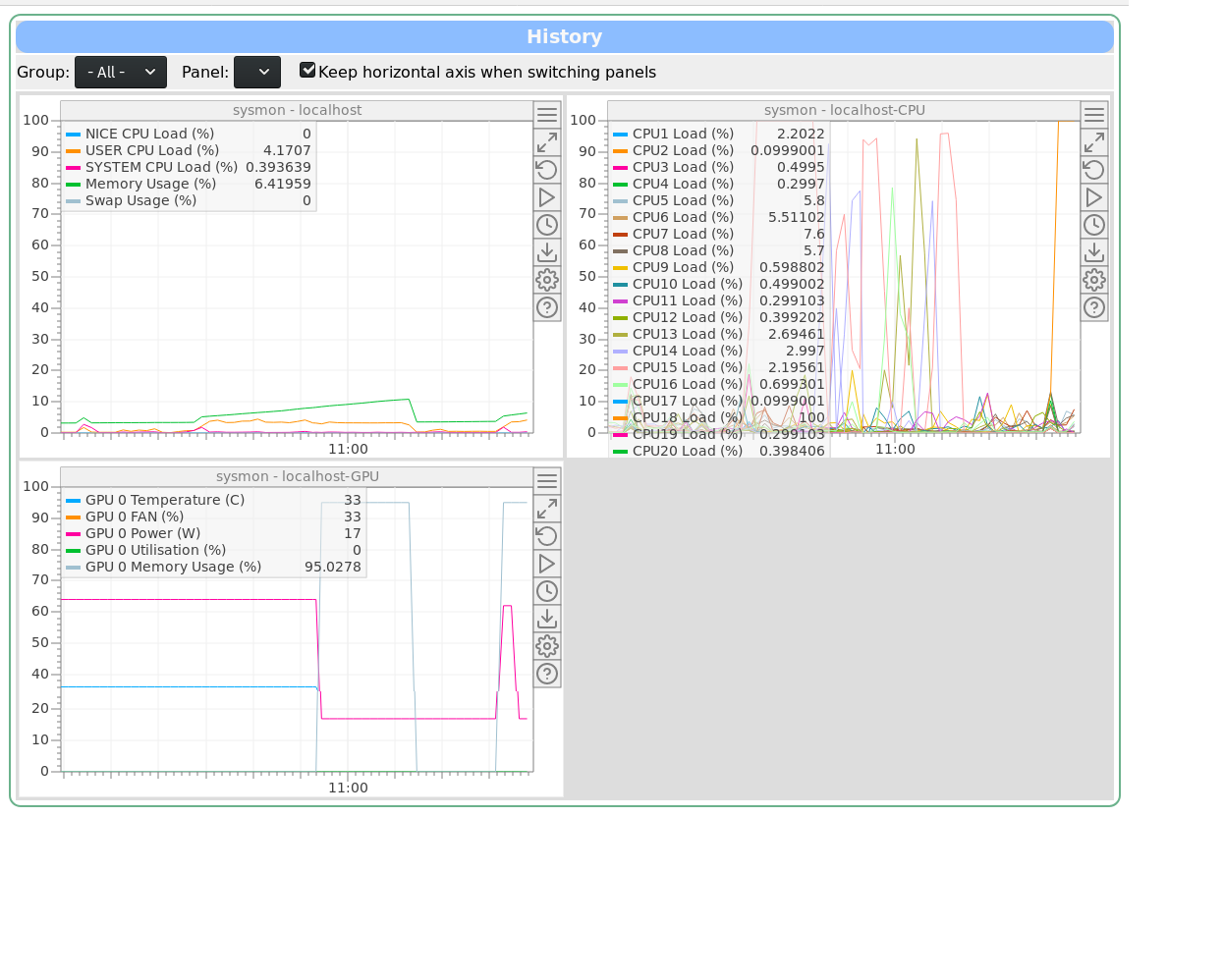

Joseph McKenna | Info | sysmon: New system monitor and performance logging frontend added to MIDAS |

I have written a system monitor tool for MIDAS, sysmon, which was merged into the develop branch today:

https://bitbucket.org/tmidas/midas/pull-requests/8/system-monitoring-a-new-frontend-to-log/diff

To use it, simply run the new program

sysmon

on any host that you want to monitor, no configuring required.

The program is a MIDAS frontend and needs no configuration: upon initialisation it builds a history display for you. Simply run one instance per machine you want to monitor. By default, it logs only once every 10 seconds.

The equipment name is derived from the hostname, so multiple instances can be run across multiple machines without conflict. A new history display will be created for each host.

sysmon uses the /proc pseudo-filesystem, so unfortunately only Linux is supported. It does however work on multiple architectures, so x86 and ARM processors are supported.

If the build machine has NVIDIA drivers installed, an additional version of sysmon gets built: sysmon-nvidia. This logs the GPU temperature and usage, as well as CPU, memory and swap. A host should run only one of sysmon or sysmon-nvidia.

elog:1727/1 shows the History Display generated by sysmon-nvidia. sysmon would only generate the first two displays (sysmon/localhost and sysmon/localhost-CPU) |

| Attachment 1: sysmon-gpu.png

|

|

|

1746

|

03 Dec 2019 |

Joseph McKenna | Info | mfe.c: MIDAS frontend's 'Equipment name' can embed hostname, determined at run-time | A little-advertised feature of the modifications needed to support the msysmon program is

that MIDAS equipment names can have the hostname of the system running the frontend

injected at run-time (register_equipment(void)).

https://midas.triumf.ca/MidasWiki/index.php/Equipment_List_Parameters#Equipment_Name

A special string ${HOSTNAME} can be put in any position in the equipment name. It will

be replaced with the hostname of the computer running the frontend at run-time. Note:

the frontend_name string will be trimmed to 32 characters.

Example usage: msysmon

EQUIPMENT equipment[] = {
   {"${HOSTNAME}_msysmon",     /* equipment name */
    {
       EVID_MONITOR, 0,        /* event ID, trigger mask */
       "SYSTEM",               /* event buffer */
       EQ_PERIODIC,            /* equipment type */
       0,                      /* event source */
       "MIDAS",                /* format */
       TRUE,                   /* enabled */
       RO_ALWAYS,              /* read always, running or not */
       10000,                  /* read every 10000 milliseconds */
       0,                      /* stop run after this event limit */
       0,                      /* number of sub events */
       1,                      /* history period */
       "", "", ""
    },
    read_system_load,          /* readout routine */
   },
   {""}
}; |

|

1891

|

01 May 2020 |

Joseph McKenna | Forum | Taking MIDAS beyond 64 clients |

Hi all,

I have been experimenting with a frontend solution for my experiment

(ALPHA). The intention is to replace how we log data from PCs running LabVIEW.

I am at the proof of concept stage. So far I have some promising

performance, able to handle 10-100x more data in my test setup (current

limitations now are just network bandwidth, MIDAS is impressively efficient).

==========================================================================

Our experiment has many PCs using LabVIEW, which all log to MIDAS; the

experiment has grown such that we need some sort of load balancing in our

frontend.

The concept was to have a 'supervisor frontend' and an array of 'worker

frontend' processes.

-A LabVIEW client would connect to the supervisor, then be referred to a

worker frontend for data logging.

-The supervisor could start a 'worker frontend' process as the demand

required.

To increase accountability within the experiment, I intend to have a 'worker

frontend' per PC connecting. Then any rogue behavior would be clear from the

MIDAS frontpage.

Presently there are around 20-30 of these LabVIEW PCs, but given how the group

is growing, I want to be sure that my data logging solution will be viable

for the next 5-10 years. With the increased use of single board computers, I

chose the target of benchmarking up to 1000 worker frontends... but I quickly

hit the '64 MAX CLIENTS' and '64 RPC CONNECTION' limit. Ok...

branching and updating these limits:

https://bitbucket.org/tmidas/midas/branch/experimental-beyond_64_clients

I have two commits.

1. update the memory layout assertions and use MAX_CLIENTS as a variable

https://bitbucket.org/tmidas/midas/commits/302ce33c77860825730ce48849cb810cf366df96?at=experimental-beyond_64_clients

2. Change the MAX_CLIENTS and MAX_RPC_CONNECTION

https://bitbucket.org/tmidas/midas/commits/f15642eea16102636b4a15c84113309696ce3df1?at=experimental-beyond_64_clients

Unintended side effects:

I break compatibility of existing ODB files... the database layout has

changed and my old ODB is read as corrupt. In my test setup I can start from

scratch, but this would be horrible for any existing experiment.

Edit: I noticed the 'make testdiff' pipeline is failing... it also fails locally...

investigating

Early performance results:

In early tests, ~700 PCs logging 10 unique arrays of 10 doubles into

Equipment variables in the ODB seems to perform well... All transactions

from client PCs are finished within a couple of ms or less

==========================================================================

Questions:

Does the community here have strong opinions about increasing the

MAX_CLIENTS and MAX_RPC_CONNECTION limits?

Am I looking at this problem in a naive way?

Potential solutions other than increasing the MAX_CLIENTS limit:

-Make worker threads inside the supervisor (not a separate process), I am

using TMFE, so I can dynamically create equipment. I have not yet taken a

deep dive into how any multithreading is implemented

-One could have a round robin system to load balance between a limited pool

of 'worker frontend' processes. I don't like this solution as I want to be

able to clearly see which client PCs have been set up to log too much data

========================================================================== |

|

1895

|

02 May 2020 |

Joseph McKenna | Forum | Taking MIDAS beyond 64 clients |

Thank you very much for feedback.

I am satisfied with not changing the 64 client limit. I will look at re-writing my frontend to spawn threads rather than

processes. The load of my frontend is low, so I do not anticipate issues with a threaded implementation.

In this threaded scenario, it will be a reasonable amount of time until ALPHA bumps into the 64 client limit.

If it avoids confusion, I am happy for my experimental branch 'experimental-beyond_64_clients' to be deleted.

Perhaps an item for future discussion would be for the odbinit program to be able to 'upgrade' the ODB and enable some backwards

compatibility.

Thanks again

Joseph |

|

2015

|

19 Nov 2020 |

Joseph McKenna | Forum | History plot consuming too much memory |

A user reported an issue that if they were to plot some history data from

2019 (a range of one day), the plot would spend ~4 minutes loading then

crash the browser tab. This seems to affect Chrome (under default settings)

but not Firefox.

I can reproduce the issue, "Data Being Loaded" shows, then the page and

canvas loads, then all variables get a correct "last data" timestamp, then

the 'Updating data ...' status shows... then the tab crashes (Chrome).

It seems that the browser is loading all data until the present day (maybe 4

GB of data in this case). In Chrome the tab then crashes. In Firefox, I do

not suffer the same crash, but I can see the single tab is using ~3.5 GB of

RAM.

Tested with midas-2020-08-a up until the HEAD of develop

I could propose the user use Firefox, or increase the memory limit in

Chrome; however, are there plans to limit the data loaded when specifically

plotting between two dates? |

|

2017

|

20 Nov 2020 |

Joseph McKenna | Forum | History plot consuming too much memory | Poking at the behavior of this, it's fairly clear the slow response is from the data

being loaded off an HDD; when we upgrade this system we will allocate enough SSD

storage for the histories.

Using Firefox has resolved this issue for the user's project here.

Taking this down a tangent, I have a mild concern that a user could temporarily

flood our gigabit network if we do have faster disks to read the history data. Have

there been any plans or thoughts on limiting the bandwidth users can pull from

mhttpd? I do not see this as a critical item as I can plan the future network

infrastructure at the same time as the next system upgrade (putting critical data

taking traffic on a separate physical network).

> Of course one can only

> load that specific window, but when the user then scrolls right, one has to

> append new data to the "right side" of the array stored in the browser. If the

> user jumps to another location, then the browser has to keep track of which

> windows are loaded and which windows not, making the history code much more

> complicated. Therefore I'm only willing to spend a few days of solid work

> if this really becomes a problem.

For now the user here has retrieved all the data they need, and I can direct others

towards mhist in the near future. Being able to load just a specific window would be

very useful in the future, but I understand how it would be a spike in complexity. |

|

2175

|

27 May 2021 |

Joseph McKenna | Info | MIDAS Messenger - A program to send MIDAS messages to Discord, Slack and or Mattermost |

I have created a simple program that parses the message buffer in MIDAS and

sends notifications by webhook to Discord, Slack and/or Mattermost.

Active pull request can be found here:

https://bitbucket.org/tmidas/midas/pull-requests/21

It's written in Python, and CMake will install it in bin (if the Python3 binary

is found by cmake). The only dependency outside of the MIDAS python library is

'requests'; full documentation is in mmessenger.md |

|