| ID |

Date |

Author |

Topic |

Subject |

|

1220

|

04 Nov 2016 |

Thomas Lindner | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | Hi Tim,

I reproduced your problem and then managed to go through a procedure to increase the number

of allowable open records. The following is the procedure that I used

1) Use odbedit to save current ODB

odbedit

save current_odb.odb

2) Stop all the running MIDAS processes, including mlogger and mserver using the web

interface. Then stop mhttpd as well.

3) Remove your old ODB (we will recreate it after modifying MIDAS, using the backup you just

made).

mv .ODB.SHM .ODB.SHM.20161104

rm /dev/shm/thomas_ODB_SHM

4) Make the following modifications to midas. In this particular case I have increased the

max number of open records from 256 to 1024. You would need to change the constants if you

want to change to other values

diff --git a/include/midas.h b/include/midas.h

index 02b30dd..33be7be 100644

--- a/include/midas.h

+++ b/include/midas.h

@@ -254,7 +254,7 @@ typedef std::vector<std::string> STRING_LIST;

-#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

+#define MAX_OPEN_RECORDS 1024 /**< number of open DB records */

diff --git a/src/odb.c b/src/odb.c

index 47ace8f..ac1bef3 100755

--- a/src/odb.c

+++ b/src/odb.c

@@ -699,8 +699,8 @@ static void db_validate_sizes()

- assert(sizeof(DATABASE_CLIENT) == 2112);

- assert(sizeof(DATABASE_HEADER) == 135232);

+ assert(sizeof(DATABASE_CLIENT) == 8256);

+ assert(sizeof(DATABASE_HEADER) == 528448);

The calculation is as follows (in case you want a different number of open records):

DATABASE_CLIENT = 64 + 8*MAX_OPEN_RECORDS = 64 + 8*1024 = 8256

DATABASE_HEADER = 64 + 64*DATABASE_CLIENT = 64 + 64*8256 = 528448

5) Rebuild MIDAS

make clean; make

6) Create new ODB

odbedit -s 1000000

Change the size of the ODB to whatever you want.

7) reload your original ODB

load current_odb.odb

8) Rebuild your frontend against new MIDAS; then it should work and you should be able to

produce more open records.

8.5*) Actually, I had a weird error where I needed to remove my .SYSTEM.SHM file as well

when I first restarted my front-end. Not sure if that was some unrelated error, but I

mention it here for completeness.

This was a procedure based on something that originally was used for T2K (procedure by Renee

Poutissou). It is possible that not all steps are necessary and that there is a better way.

But this worked for me.

Also, any objections from other developers to tweaking the assert checks in odb.c so that

the values are calculated automatically and MIDAS only needs to be touched in one place to

modify the number of open records?
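
To illustrate what "calculated automatically" could look like, here is a minimal sketch that derives both expected sizes from a single number, using only the two formulas given above. The function names and the enum constants are made up for this example and are not part of MIDAS; in particular, reading the factor 64 as a number of client slots is my assumption.

```c
#include <assert.h>
#include <stddef.h>

/* Constants taken from the formulas in this post; the names are hypothetical. */
enum {
   FIXED_BYTES = 64,           /* constant term in both formulas */
   BYTES_PER_OPEN_RECORD = 8,  /* factor in front of MAX_OPEN_RECORDS */
   CLIENT_SLOTS = 64           /* factor in front of DATABASE_CLIENT (assumed meaning) */
};

/* Expected sizeof(DATABASE_CLIENT) for a given MAX_OPEN_RECORDS. */
size_t expected_client_size(size_t max_open_records)
{
   return FIXED_BYTES + BYTES_PER_OPEN_RECORD * max_open_records;
}

/* Expected sizeof(DATABASE_HEADER) for a given MAX_OPEN_RECORDS. */
size_t expected_header_size(size_t max_open_records)
{
   return FIXED_BYTES + CLIENT_SLOTS * expected_client_size(max_open_records);
}

/* Reproduces the four numbers that appear in the diff above. */
void check_expected_sizes(void)
{
   assert(expected_client_size(256) == 2112);
   assert(expected_header_size(256) == 135232);
   assert(expected_client_size(1024) == 8256);
   assert(expected_header_size(1024) == 528448);
}
```

With something like this, the asserts in db_validate_sizes() could compare sizeof(DATABASE_CLIENT) against expected_client_size(MAX_OPEN_RECORDS) instead of a hard-coded number, so only midas.h would need to be edited.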

Let me know if it worked for you and I'll add these instructions to the Wiki.

Thomas

> One additional comment. I was able to trace the setting of the error code DB_NO_MEMORY

> to a call to the db_add_open_record() by mserver that is initiated during the start-up

> of my frontend via an RPC call. I checked with a debug printout that I have indeed

> reached the number of MAX_OPEN_RECORDS

>

> > Hi Midas forum,

> >

> > I'm having a problem with odb hotlinks after increasing sub-directories in an

> > odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

> > tried

> >

> > 1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

> > but got the same error

> >

> > 2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

> > but got fatal errors from odbedit and my midas FE and couldn't run anything

> >

> > 3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

> > 0x1000000" to increase the odb size but got the same error?

> >

> > 4) I tried a different computer and got the same error code DB_NO_MEMORY

> >

> > Maybe I am running into some system limit that restricts the number of open records?

> > Or maybe I've not increased the correct midas parameter?

> >

> > Best, Tim. |

|

1221

|

25 Nov 2016 |

Thomas Lindner | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | The procedure I wrote seemed to work for Tim too, so I added a page to the wiki about it here:

https://midas.triumf.ca/MidasWiki/index.php/FAQ

> Hi Tim,

>

> I reproduced your problem and then managed to go through a procedure to increase the number

> of allowable open records. The following is the procedure that I used

>

> 1) Use odbedit to save current ODB

>

> odbedit

> save current_odb.odb

>

> 2) Stop all the running MIDAS processes, including mlogger and mserver using the web

> interface. Then stop mhttpd as well.

>

>

> 3) Remove your old ODB (we will recreate it after modifying MIDAS, using the backup you just

> made).

>

> mv .ODB.SHM .ODB.SHM.20161104

> rm /dev/shm/thomas_ODB_SHM

>

> 4) Make the following modifications to midas. In this particular case I have increased the

> max number of open records from 256 to 1024. You would need to change the constants if you

> want to change to other values

>

> diff --git a/include/midas.h b/include/midas.h

> index 02b30dd..33be7be 100644

> --- a/include/midas.h

> +++ b/include/midas.h

> @@ -254,7 +254,7 @@ typedef std::vector<std::string> STRING_LIST;

> -#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

> +#define MAX_OPEN_RECORDS 1024 /**< number of open DB records */

> diff --git a/src/odb.c b/src/odb.c

> index 47ace8f..ac1bef3 100755

> --- a/src/odb.c

> +++ b/src/odb.c

> @@ -699,8 +699,8 @@ static void db_validate_sizes()

> - assert(sizeof(DATABASE_CLIENT) == 2112);

> - assert(sizeof(DATABASE_HEADER) == 135232);

> + assert(sizeof(DATABASE_CLIENT) == 8256);

> + assert(sizeof(DATABASE_HEADER) == 528448);

>

> The calculation is as follows (in case you want a different number of open records):

> DATABASE_CLIENT = 64 + 8*MAX_OPEN_RECORDS = 64 + 8*1024 = 8256

> DATABASE_HEADER = 64 + 64*DATABASE_CLIENT = 64 + 64*8256 = 528448

>

> 5) Rebuild MIDAS

>

> make clean; make

>

> 6) Create new ODB

>

> odbedit -s 1000000

>

> Change the size of the ODB to whatever you want.

>

> 7) reload your original ODB

>

> load current_odb.odb

>

> 8) Rebuild your frontend against new MIDAS; then it should work and you should be able to

> produce more open records.

>

> 8.5*) Actually, I had a weird error where I needed to remove my .SYSTEM.SHM file as well

> when I first restarted my front-end. Not sure if that was some unrelated error, but I

> mention it here for completeness.

>

> This was a procedure based on something that originally was used for T2K (procedure by Renee

> Poutissou). It is possible that not all steps are necessary and that there is a better way.

> But this worked for me.

>

> Also, any objections from other developers to tweaking the assert checks in odb.c so that

> the values are calculated automatically and MIDAS only needs to be touched in one place to

> modify the number of open records?

>

> Let me know if it worked for you and I'll add these instructions to the Wiki.

>

> Thomas

>

>

>

> > One additional comment. I was able to trace the setting of the error code DB_NO_MEMORY

> > to a call to the db_add_open_record() by mserver that is initiated during the start-up

> > of my frontend via an RPC call. I checked with a debug printout that I have indeed

> > reached the number of MAX_OPEN_RECORDS

> >

> > > Hi Midas forum,

> > >

> > > I'm having a problem with odb hotlinks after increasing sub-directories in an

> > > odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

> > > tried

> > >

> > > 1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

> > > but got the same error

> > >

> > > 2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

> > > but got fatal errors from odbedit and my midas FE and couldn't run anything

> > >

> > > 3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

> > > 0x1000000" to increase the odb size but got the same error?

> > >

> > > 4) I tried a different computer and got the same error code DB_NO_MEMORY

> > >

> > > Maybe I am running into some system limit that restricts the number of open records?

> > > Or maybe I've not increased the correct midas parameter?

> > >

> > > Best ,Tim. |

|

1223

|

01 Dec 2016 |

Thomas Lindner | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail | > > I've recently run into issues when using JSON.parse on ODB keys containing

> > 8-bit data.

>

> I am tempted to take a hard line and say that in general MIDAS TID_STRING data should be valid

> UTF-8 encoded Unicode. In the modern mixed javascript/json/whatever environment I think

> it is impractical to handle or permit invalid UTF-8 strings.

>

> Certainly in the general case, replacing all control characters with something else or escaping them or

> otherwise changing the value of TID_STRING data would wreck *valid* UTF-8 strings, which I would

> assume to be the normal use.

>

> In other words, non-UTF-8 strings are following non-IEEE-754 floating point values into oblivion - as

> we do not check the TID_FLOAT and TID_DOUBLE is valid IEEE-754 values, we should not check

> that TID_STRING is valid UTF-8.

I agree that we should start requiring strings to be UTF-8 encoded Unicode.

I'd suggest that before worrying about the TID_STRING data, we should start by sanitizing the ODB key names.

I've seen a couple of cases where the ODB key name is a non-UTF-8 string. It is very awkward to use odbedit

to delete these keys.

I attach a suggested modification to odb.c that rejects calls to db_create_key with non-UTF-8 key names. It

uses some random function I found on the internet that is supposed to check if a string is valid UTF-8. I

checked a couple of strings with invalid UTF-8 characters and it correctly identified them. But I won't

claim to be certain that this is really identifying all UTF-8 vs non-UTF-8 cases. Maybe others have a

better way of identifying this. |
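
For anyone who wants to cross-check the attached function against a few known-good and known-bad byte sequences, here is a compact sketch of the same idea. It is not the function from the attachment: the names utf8_seq_len, utf8_is_valid and utf8_selftest are made up for this example, and unlike the attachment it accepts every ASCII byte from 0x01 to 0x7F, including all control characters.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: length (1-4) of the well-formed UTF-8 sequence that
   starts at s, or 0 if the bytes at s are not well-formed UTF-8. */
size_t utf8_seq_len(const unsigned char *s)
{
   unsigned char lo = 0x80, hi = 0xBF; /* allowed range of the second byte */
   size_t n, i;

   if (s[0] <= 0x7F)
      return 1;                        /* ASCII, control characters included */
   else if (s[0] >= 0xC2 && s[0] <= 0xDF)
      n = 2;                           /* 0xC0 and 0xC1 would be overlong */
   else if (s[0] >= 0xE0 && s[0] <= 0xEF) {
      n = 3;
      if (s[0] == 0xE0) lo = 0xA0;     /* exclude overlong encodings */
      if (s[0] == 0xED) hi = 0x9F;     /* exclude UTF-16 surrogates */
   } else if (s[0] >= 0xF0 && s[0] <= 0xF4) {
      n = 4;
      if (s[0] == 0xF0) lo = 0x90;     /* exclude overlong encodings */
      if (s[0] == 0xF4) hi = 0x8F;     /* nothing above U+10FFFF */
   } else
      return 0;

   /* A terminating NUL fails these range checks, so we never read past it. */
   if (s[1] < lo || s[1] > hi)
      return 0;
   for (i = 2; i < n; i++)
      if (s[i] < 0x80 || s[i] > 0xBF)
         return 0;
   return n;
}

/* Returns 1 if str is a NUL-terminated, well-formed UTF-8 string, else 0. */
int utf8_is_valid(const char *str)
{
   const unsigned char *s = (const unsigned char *) str;
   if (!s)
      return 0;
   while (*s) {
      size_t n = utf8_seq_len(s);
      if (n == 0)
         return 0;
      s += n;
   }
   return 1;
}

/* A few cases worth trying against any UTF-8 checker. */
void utf8_selftest(void)
{
   assert(utf8_is_valid("Euro"));             /* plain ASCII */
   assert(utf8_is_valid("\xE2\x82\xAC"));     /* U+20AC, euro sign */
   assert(!utf8_is_valid("Eur\xA4"));         /* lone 8-bit byte */
   assert(!utf8_is_valid("\xC0\x80"));        /* overlong encoding */
   assert(!utf8_is_valid("\xED\xA0\x80"));    /* surrogate U+D800 */
   assert(!utf8_is_valid("\xF4\x90\x80\x80"));/* above U+10FFFF */
   assert(!utf8_is_valid("\xE2\x82"));        /* truncated sequence */
}
```

The same list of test strings can be fed to the attached is_utf8() to see whether both checkers agree.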

| Attachment 1: odb_modifications.txt

|

diff --git a/src/odb.c b/src/odb.c

index 47ace8f..041080e 100755

--- a/src/odb.c

+++ b/src/odb.c

@@ -1818,6 +1818,90 @@ BOOL equal_ustring(const char *str1, const char *str2)

return TRUE;

}

+// Method to check if a given string is valid UTF-8. Returns 1 if it is.

+BOOL is_utf8(const char * string)

+{

+ if(!string)

+ return 0;

+

+ const unsigned char * bytes = (const unsigned char *)string;

+ while(*bytes)

+ {

+ if( (// ASCII

+ // use bytes[0] <= 0x7F to allow ASCII control characters

+ bytes[0] == 0x09 ||

+ bytes[0] == 0x0A ||

+ bytes[0] == 0x0D ||

+ (0x20 <= bytes[0] && bytes[0] <= 0x7E)

+ )

+ ) {

+ bytes += 1;

+ continue;

+ }

+

+ if( (// non-overlong 2-byte

+ (0xC2 <= bytes[0] && bytes[0] <= 0xDF) &&

+ (0x80 <= bytes[1] && bytes[1] <= 0xBF)

+ )

+ ) {

+ bytes += 2;

+ continue;

+ }

+

+ if( (// excluding overlongs

+ bytes[0] == 0xE0 &&

+ (0xA0 <= bytes[1] && bytes[1] <= 0xBF) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF)

+ ) ||

+ (// straight 3-byte

+ ((0xE1 <= bytes[0] && bytes[0] <= 0xEC) ||

+ bytes[0] == 0xEE ||

+ bytes[0] == 0xEF) &&

+ (0x80 <= bytes[1] && bytes[1] <= 0xBF) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF)

+ ) ||

+ (// excluding surrogates

+ bytes[0] == 0xED &&

+ (0x80 <= bytes[1] && bytes[1] <= 0x9F) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF)

+ )

+ ) {

+ bytes += 3;

+ continue;

+ }

+

+ if( (// planes 1-3

+ bytes[0] == 0xF0 &&

+ (0x90 <= bytes[1] && bytes[1] <= 0xBF) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF) &&

+ (0x80 <= bytes[3] && bytes[3] <= 0xBF)

+ ) ||

+ (// planes 4-15

+ (0xF1 <= bytes[0] && bytes[0] <= 0xF3) &&

+ (0x80 <= bytes[1] && bytes[1] <= 0xBF) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF) &&

+ (0x80 <= bytes[3] && bytes[3] <= 0xBF)

+ ) ||

+ (// plane 16

+ bytes[0] == 0xF4 &&

+ (0x80 <= bytes[1] && bytes[1] <= 0x8F) &&

+ (0x80 <= bytes[2] && bytes[2] <= 0xBF) &&

+ (0x80 <= bytes[3] && bytes[3] <= 0xBF)

+ )

+ ) {

+ bytes += 4;

+ continue;

+ }

+

+ return 0;

+ }

+

+ return 1;

+}

+

+

+

+

/********************************************************************/

/**

Create a new key in a database

@@ -1829,6 +1913,12 @@ Create a new key in a database

*/

INT db_create_key(HNDLE hDB, HNDLE hKey, const char *key_name, DWORD type)

{

+

+ if(!is_utf8(key_name)){

+ cm_msg(MERROR, "db_create_key", "invalid non-UTF-8 key name \'%s\'", key_name);

+ return DB_INVALID_PARAM;

+ }

+

if (rpc_is_remote())

return rpc_call(RPC_DB_CREATE_KEY, hDB, hKey, key_name, type);

|

|

1225

|

15 Dec 2016 |

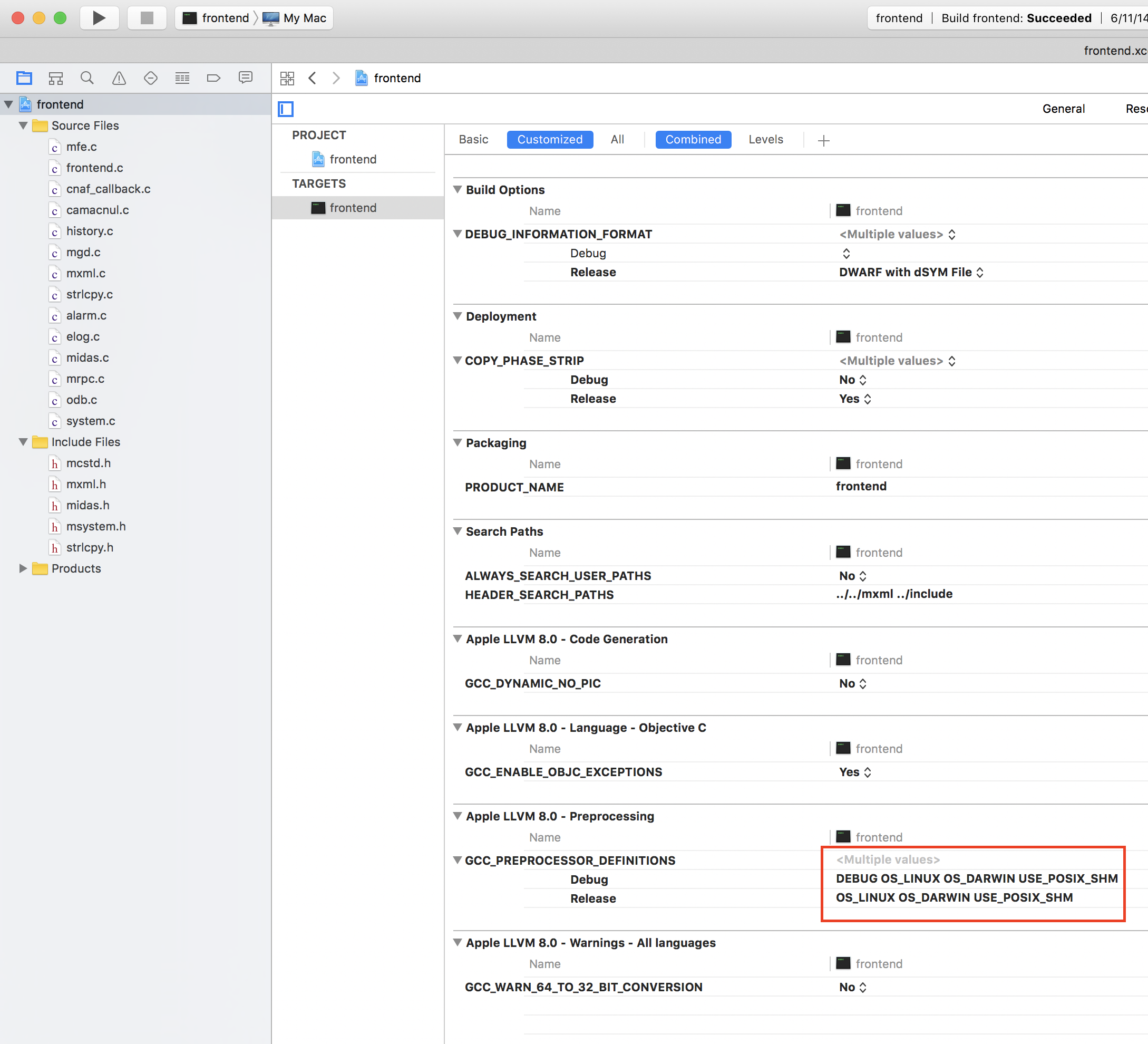

Kevin Giovanetti | Bug Report | midas.h error | I am creating a frontend on a Mac running OS X Sierra.

When I include the midas.h file and compile with Xcode, I get an error triggered by

this check in midas.h:

#if !defined(OS_IRIX) && !defined(OS_VMS) && !defined(OS_MSDOS) &&

!defined(OS_UNIX) && !defined(OS_VXWORKS) && !defined(OS_WINNT)

#error MIDAS cannot be used on this operating system

#endif

Perhaps I should not use Xcode?

Perhaps I won't need midas.h?

The MIDAS system is running on my Mac, but I need to add a very simple frontend

for testing and I encountered this error. |

|

1226

|

15 Dec 2016 |

Stefan Ritt | Bug Report | midas.h error | > creating a frontend on MAC Sierra OSX 10

> include the midas.h file and when compiling with XCode I get an error based on

> this entry in the midas.h include

>

> #if !defined(OS_IRIX) && !defined(OS_VMS) && !defined(OS_MSDOS) &&

> !defined(OS_UNIX) && !defined(OS_VXWORKS) && !defined(OS_WINNT)

> #error MIDAS cannot be used on this operating system

> #endif

>

>

> Perhaps I should not use Xcode?

> Perhaps I won't need Midas.h?

>

> The MIDAS system is running on my MAC but I need to add a very simple front end

> for testing and I encountered this error.

If you compile with the included Makefile, you will see a

-DOS_LINUX -DOS_DARWIN

flag which tells the compiler that we are on a mac. If you do this with XCode, you have to do it via "Build Settings" (see

attached picture).

Stefan |

| Attachment 1: Screen_Shot_2016-12-15_at_17.39.26_.png

|

|

|

1227

|

15 Jan 2017 |

Thomas Lindner | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail | > > In other words, non-UTF-8 strings are following non-IEEE-754 floating point values into oblivion - as

> > we do not check the TID_FLOAT and TID_DOUBLE is valid IEEE-754 values, we should not check

> > that TID_STRING is valid UTF-8.

> ...

> I attach a suggested modification to odb.c that rejects calls to db_create_key with non-UTF-8 key names. It

> uses some random function I found on the internet that is supposed to check if a string is valid UTF-8. I

> checked a couple of strings with invalid UTF-8 characters and it correctly identified them. But I won't

> claim to be certain that this is really identifying all UTF-8 vs non-UTF-8 cases. Maybe others have a

> better way of identifying this.

At Konstantin's suggestion, I committed the function I found for checking if a string was UTF-8 compatible to

odb.c. The function is currently not used; I commented out a proposed use in db_create_key. Experts can decide

if the code was good enough to use. |

|

1228

|

23 Jan 2017 |

Thomas Lindner | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail |

> At Konstantin's suggestion, I committed the function I found for checking if a string was UTF-8 compatible to

> odb.c. The function is currently not used; I commented out a proposed use in db_create_key. Experts can decide

> if the code was good enough to use.

After more discussion, I have enabled the parts of the ODB code that check that key names are UTF-8 compliant.

This check will show up in (at least) two ways:

1) Attempts to create a new ODB variable if the ODB key is not UTF-8 compliant. You will see error messages like

[fesimdaq,ERROR] [odb.c:572:db_validate_name,ERROR] Invalid name "Eur�" passed to db_create_key: UTF-8 incompatible

string

2) When a program first connects to the ODB, it runs a check to ensure that the ODB is valid. This will now include

a check that all key names are UTF-8 compliant. Any non-UTF8 compliant key names will be replaced by a string of the

pointer to the key. You will see error messages like:

[fesimdaq,ERROR] [odb.c:572:db_validate_name,ERROR] Invalid name "Eur�" passed to db_validate_key: UTF-8

incompatible string

[fesimdaq,ERROR] [odb.c:647:db_validate_key,ERROR] Warning: corrected key "/Equipment/SIMDAQ/Eur�": invalid name

"Eur�" replaced with "0x7f74be63f970"

This behaviour (checking UTF-8 compatibility and automatically fixing ODB names) can be disabled by setting an

environment variable

MIDAS_INVALID_STRING_IS_OK

It doesn't matter what the environment variable is set to; it just needs to be set. Note also that this variable is

only checked once, when a program starts. |
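
For illustration, a check of this kind ("set to anything" and "looked at only once") can be sketched as below. This is not the code committed to odb.c; the function name invalid_string_is_ok is made up for this example, and only the environment variable name comes from the message above.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical helper: returns 1 if MIDAS_INVALID_STRING_IS_OK was set in
   the environment when this function was first called, 0 otherwise. */
int invalid_string_is_ok(void)
{
   static int checked = 0; /* has the environment been looked at yet? */
   static int is_ok = 0;   /* cached result of the first look */

   if (!checked) {
      /* Any value counts, even an empty string; only "set or not" matters. */
      is_ok = (getenv("MIDAS_INVALID_STRING_IS_OK") != NULL);
      checked = 1;
   }
   assert(is_ok == 0 || is_ok == 1);
   return is_ok;
}
```

Because the result is cached in a static variable, changing the environment of a program that is already running has no effect in this sketch; the program has to be restarted.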

|

1229

|

30 Jan 2017 |

Stefan Ritt | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail | >

> > At Konstantin's suggestion, I committed the function I found for checking if a string was UTF-8 compatible to

> > odb.c. The function is currently not used; I commented out a proposed use in db_create_key. Experts can decide

> > if the code was good enough to use.

>

> After more discussion, I have enabled the parts of the ODB code that check that key names are UTF-8 compliant.

>

> This check will show up in (at least) two ways:

>

> 1) Attempts to create a new ODB variable if the ODB key is not UTF-8 compliant. You will see error messages like

>

> [fesimdaq,ERROR] [odb.c:572:db_validate_name,ERROR] Invalid name "Eur�" passed to db_create_key: UTF-8 incompatible

> string

>

> 2) When a program first connects to the ODB, it runs a check to ensure that the ODB is valid. This will now include

> a check that all key names are UTF-8 compliant. Any non-UTF8 compliant key names will be replaced by a string of the

> pointer to the key. You will see error messages like:

>

> [fesimdaq,ERROR] [odb.c:572:db_validate_name,ERROR] Invalid name "Eur�" passed to db_validate_key: UTF-8

> incompatible string

> [fesimdaq,ERROR] [odb.c:647:db_validate_key,ERROR] Warning: corrected key "/Equipment/SIMDAQ/Eur�": invalid name

> "Eur�" replaced with "0x7f74be63f970"

>

> This behaviour (checking UTF-8 compatibility and automatically fixing ODB names) can be disabled by setting an

> environment variable

>

> MIDAS_INVALID_STRING_IS_OK

>

> It doesn't matter what the environment variable is set to; it just needs to be set. Note also that this variable is

> only checked once, when a program starts.

I see you put some switches into the environment ("MIDAS_INVALID_STRING_IS_OK"). Do you think this is a good idea? Most variables are

sitting in the ODB (/experiment/xxx), except those which cannot be in the ODB because we need it before we open the ODB, like MIDAS_DIR.

Having them in the ODB has the advantage that everything is in one place, and we see a "list" of things we can change. From an empty

environment it is not clear that such a thing like "MIDAS_INVALID_STRING_IS_OK" does exist, while if it would be an ODB key it would be

obvious. Can I convince you to move this flag into the ODB? |

|

1230

|

01 Feb 2017 |

Konstantin Olchanski | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail | >

> I see you put some switches into the environment ("MIDAS_INVALID_STRING_IS_OK"). Do you think this is a good idea? Most variables are

> sitting in the ODB (/experiment/xxx), except those which cannot be in the ODB because we need it before we open the ODB, like MIDAS_DIR.

> Having them in the ODB has the advantage that everything is in one place, and we see a "list" of things we can change. From an empty

> environment it is not clear that such a thing like "MIDAS_INVALID_STRING_IS_OK" does exist, while if it would be an ODB key it would be

> obvious. Can I convince you to move this flag into the ODB?

>

Some additional explanation.

Time passed, the world turned, and the current web-compatible standard for text strings is UTF-8 encoded Unicode, see

https://en.wikipedia.org/wiki/UTF-8

(ObCanadianContent, UTF-8 was invented by the Canadian Rob Pike https://en.wikipedia.org/wiki/Rob_Pike)

(and by some other guy https://en.wikipedia.org/wiki/Ken_Thompson).

It turns out that not every combination of 8-bit characters (char*) is valid UTF-8 Unicode.

In the MIDAS world we run into this when MIDAS ODB strings are exported to Javascript running inside web

browsers ("custom pages", etc). ODB strings (TID_STRING) and ODB key names that are not valid UTF-8

make such web pages malfunction and do not work right.

One solution to this is to declare that ODB strings (TID_STRING) and ODB key names *must* be valid UTF-8 Unicode.

The present commits implemented this solution. Invalid UTF-8 is rejected by db_create() & co and by the ODB integrity validator.

This means some existing running experiment may suddenly break because somehow they have "old-style" ODB entries

or they mistakenly use TID_STRING to store arbitrary binary data (use array of TID_CHAR instead).

To permit such experiments to use current releases of MIDAS, we include a "defeat" device - to disable UTF-8 checks

until they figure out where non-UTF-8 strings come from and correct the problem.

Why is this defeat device not an ODB entry? Because it is not a normal mode of operation - there is no use-case where

an experiment will continue to use non-UTF-8 compatible ODB indefinitely, in the long term. For example, as the MIDAS user

interface moves more and more to HTML+Javascript+"AJAX", such experiments will see that non-UTF-8 compatible ODB entries

cause all sorts of problems and will have to convert.

K.O. |

|

1231

|

01 Feb 2017 |

Konstantin Olchanski | Bug Report | midas.h error | >

> If you compile with the included Makefile, you will see a

>

> -DOS_LINUX -DOS_DARWIN

>

Moving forward, it looks like I can define these variables in midas.h and remove the need to define them on the compiler command line.

This would be part of the Makefile and header files cleanup to get things working on Windows10.

K.O. |

|

1234

|

01 Feb 2017 |

Stefan Ritt | Bug Report | midas.h error | > >

> > If you compile with the included Makefile, you will see a

> >

> > -DOS_LINUX -DOS_DARWIN

> >

>

> Moving forward, it looks like I can define these variables in midas.h and remove the need to define them on the compiler command line.

>

> This would be part of the Makefile and header files cleanup to get things working on Windows10.

>

> K.O.

Will you detect the underlying OS automatically in midas.h? Note that you have several compilers in MacOS (llvm and gcc), and they might use different

predefined symbols. I appreciate however getting rid of these flags in the Makefile.
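
As a rough sketch of what such auto-detection could look like: __APPLE__ and __MACH__ are predefined by both clang and gcc on a Mac, __linux__ on Linux, and _WIN32 by the Windows compilers. Defining both OS_LINUX and OS_DARWIN on a Mac simply mirrors the Makefile flags quoted above; the helper functions detected_os and check_os_detected are made up for this example and are not part of midas.h.

```c
#include <assert.h>
#include <string.h>

/* Derive the OS_xxx symbols from compiler-predefined macros unless they
   were already given on the command line. */
#if defined(__APPLE__) && defined(__MACH__)
#  ifndef OS_DARWIN
#    define OS_DARWIN 1
#  endif
#  ifndef OS_LINUX
#    define OS_LINUX 1   /* assumption: same pair of flags the Makefile passes on a Mac */
#  endif
#elif defined(__linux__)
#  ifndef OS_LINUX
#    define OS_LINUX 1
#  endif
#elif defined(_WIN32)
#  ifndef OS_WINNT
#    define OS_WINNT 1
#  endif
#endif

/* Hypothetical helper: name of the platform selected above. */
const char *detected_os(void)
{
#if defined(OS_DARWIN)
   return "darwin";
#elif defined(OS_LINUX)
   return "linux";
#elif defined(OS_WINNT)
   return "winnt";
#else
   return "unknown";
#endif
}

/* Run-time counterpart of the "#error MIDAS cannot be used on this
   operating system" check: aborts if no platform was recognised. */
void check_os_detected(void)
{
   assert(strcmp(detected_os(), "unknown") != 0);
}
```

The #ifndef guards mean that flags passed via the Makefile or the Xcode "Build Settings" still work and are not redefined.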

Stefan |

|

1235

|

01 Feb 2017 |

Stefan Ritt | Bug Report | control characters not sanitized by json_write - can cause JSON.parse of mhttpd result to fail | > Some additional explanation.

>

> Time passed, the world turned, and the current web-compatible standard for text strings is UTF-8 encoded Unicode, see

> https://en.wikipedia.org/wiki/UTF-8

> (ObCanadianContent, UTF-8 was invented by the Canadian Rob Pike https://en.wikipedia.org/wiki/Rob_Pike)

> (and by some other guy https://en.wikipedia.org/wiki/Ken_Thompson).

>

> It turns out that not every combination of 8-bit characters (char*) is valid UTF-8 Unicode.

>

> In the MIDAS world we run into this when MIDAS ODB strings are exported to Javascript running inside web

> browsers ("custom pages", etc). ODB strings (TID_STRING) and ODB key names that are not valid UTF-8

> make such web pages malfunction and do not work right.

>

> One solution to this is to declare that ODB strings (TID_STRING) and ODB key names *must* be valid UTF-8 Unicode.

>

> The present commits implemented this solution. Invalid UTF-8 is rejected by db_create() & co and by the ODB integrity validator.

>

> This means some existing running experiment may suddenly break because somehow they have "old-style" ODB entries

> or they mistakenly use TID_STRING to store arbitrary binary data (use array of TID_CHAR instead).

>

> To permit such experiments to use current releases of MIDAS, we include a "defeat" device - to disable UTF-8 checks

> until they figure out where non-UTF-8 strings come from and correct the problem.

>

> Why is this defeat device not an ODB entry? Because it is not a normal mode of operation - there is no use-case where

> an experiment will continue to use non-UTF-8 compatible ODB indefinitely, in the long term. For example, as the MIDAS user

> interface moves more and more to HTML+Javascript+"AJAX", such experiments will see that non-UTF-8 compatible ODB entries

> cause all sorts of problems and will have to convert.

>

>

> K.O.

Ok, I agree.

Stefan |

|

1237

|

15 Feb 2017 |

NguyenMinhTruong | Bug Report | increase event buffer size | Dear all,

I have a problem with the event buffer size.

When running MIDAS, I got the error "total event size (1307072) larger than buffer size

(1048576)", so I guess that EVENT_BUFFER_SIZE is too small.

I changed EVENT_BUFFER_SIZE in midas.h from 0x100000 to 0x200000. After compiling

and running MIDAS, I got another error, "Shared memory segment with key 0x4d040761

already exists, please remove it manually: ipcrm -M 0x4d040761 size0x204a3c", from

system.c.

I checked the shmget() function used in system.c, and it is said that this error comes from

shared memory segments larger than 16,773,120 bytes and from creating teraspace shared

memory segments.

Has anyone had this problem before?

Thanks for your help,

M.T |

|

1239

|

16 Feb 2017 |

Konstantin Olchanski | Bug Report | increase event buffer size | > I have problem in event buffer size.

>

> When run MIDAS, I got error "total event size (1307072) larger than buffer size

> (1048576)", so I guess that the EVENT_BUFFER_SIZE is small.

>

Correct. You have a choice of sending smaller events or increasing the buffer size.

Increasing the buffer size consumes computer memory. How much memory do you have on your machine?

>

> I change EVENT_BUFFER_SIZE in midas.h from 0x100000 to 0x200000. After compiling

> and run MIDAS, I got other error "Shared memory segment with key 0x4d040761

> already exists, please remove it manually: ipcrm -M 0x4d040761 size0x204a3c" in

> system.C

>

This is not normal. In recent versions of MIDAS (for the last few years)

a) buffer size is changed via ODB "/Experiment/buffer sizes", no need to edit midas.h

b) shared memory was switched from SYSV shared memory to POSIX shared memory, and you should not see any references to

SYSV shared memory functions like "ipcrm", "shmget" and "segment key".

Are you using a very old version of MIDAS? Or maybe you have a MIDAS installation that still uses SYSV shared memory. Check

the contents of .SHM_TYPE.TXT (in the same directory as .ODB.SHM), it would normally say "POSIXv2_SHM". If it says

something else, it is best to convert to POSIX SHM. Simplest way is to stop everything, save odb to text file, delete

.SHM_TYPE.TXT, restart odb with odbedit, reload from text file. Now check that .SHM_TYPE.TXT says "POSIXv2_SHM".
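
If you want to script this check, a minimal sketch could look like the following. The helper names are made up for this example and are not MIDAS functions; the sketch only assumes that the first line of .SHM_TYPE.TXT holds the type string, possibly followed by a newline.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: 1 if the first line of `contents` equals `expected`,
   ignoring a trailing newline or carriage return, else 0. */
int shm_type_is(const char *contents, const char *expected)
{
   size_t len;
   assert(contents != NULL && expected != NULL);
   len = strcspn(contents, "\r\n"); /* length of the first line */
   return len == strlen(expected) && strncmp(contents, expected, len) == 0;
}

/* Hypothetical helper: 1 if the file at `path` starts with `expected`,
   0 if it holds something else, -1 if the file cannot be opened. */
int shm_type_file_is(const char *path, const char *expected)
{
   char line[256];
   FILE *fp = fopen(path, "r");
   if (!fp)
      return -1;
   if (!fgets(line, sizeof(line), fp))
      line[0] = '\0'; /* empty file */
   fclose(fp);
   return shm_type_is(line, expected);
}
```

A call such as shm_type_file_is(".SHM_TYPE.TXT", "POSIXv2_SHM") from the experiment directory would then return 1 for an installation using POSIX shared memory.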

>

> I check the shmget() function in system.C and it is said that error come from

> Shared memory segments larger than 16,773,120 bytes and create teraspace shared

> memory segments

>

What teraspace?!? You changed the size from 1 Mbyte to 2 Mbyte (0x200000), this is still below even the value you have above

(16,773,120).

At the end, it is not clear what your problem is. After changing the shared memory size (via odb or via midas.h),

the midas *will* complain about the mismatch in size (existing vs expected) and will tell you how to fix it, (run "ipcrm").

After doing this, is there still an error? Normally everything will just work. (You might also have to erase .SYSTEM.SHM,

midas will tell you to do so if it is needed).

So what is your final error? (After running ipcrm?)

K.O. |

|

1241

|

20 Feb 2017 |

NguyenMinhTruong | Bug Report | increase event buffer size | I am sorry for my late reply.

The memory in my PC is 16 GB.

I checked the contents of .SHM_TYPE.TXT and it is "POSIXv2_SHM".

But there is no "buffer sizes" entry in "/Experiment".

After running "ipcrm -M 0x4d040761 size0x204a3c", removing .SYSTEM.SHM and running MIDAS again, I still get the error "Shared memory segment

with key 0x4d040761 already exists, please remove it manually: ipcrm -M 0x4d040761 size0x204a3c".

M.T |

|

1243

|

20 Feb 2017 |

Konstantin Olchanski | Bug Report | increase event buffer size | > memory in my PC is 16 GB

You can safely go to buffer size 100 Mbytes or more.

> I check the contents of .SHM_TYPE.TXT and it is "POSIXv2_SHM".

Good.

> But there is no buffer sizes in "/Experiment"

This is strange. How old is your midas? What does it say on the "help" page in "Revision"?

> After run "ipcrm -M 0x4d040761 size0x204a3c"

This command is wrong. It probably gave you an error instead of removing the shared memory, that's why

nothing worked afterwards.

My copy of system.c reads this:

cm_msg(MERROR, "ss_shm_open", "Shared memory segment with key 0x%x already exists, please remove it manually: ipcrm -M 0x%x", key, key);

Note how there is no text "size0x..." in my copy? What does your copy say? Did somebody change it?

> remove .SYSTEM.SHM and run MIDAS again, I still get error "Shared memory segment

> with key 0x4d040761 already exists, please remove it manually: ipcrm -M 0x4d040761 size0x204a3c" M.T

Yes, that's because the ipcrm command is wrong and did not work,

it should read "ipcrm -M 0x4d040761" without the spurious "size..." text.
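
To make the point concrete, the sketch below feeds the key from your error message through the same format string as in my copy of system.c, using plain snprintf() instead of cm_msg(). The function name format_shm_exists_msg and the unsigned int type of the key are assumptions made for this example.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: writes the message into buf and returns the number
   of characters snprintf() would have written. */
int format_shm_exists_msg(char *buf, size_t size, unsigned int key)
{
   assert(buf != NULL && size > 0);
   return snprintf(buf, size,
                   "Shared memory segment with key 0x%x already exists, "
                   "please remove it manually: ipcrm -M 0x%x",
                   key, key);
}
```

Called with key 0x4d040761, the text ends in "ipcrm -M 0x4d040761" and contains no "size0x..." part, so that suffix must come from a different copy of the source.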

K.O. |

|

1244

|

20 Feb 2017 |

Konstantin Olchanski | Bug Report | increase event buffer size | > > memory in my PC is 16 GB

>

> You can safely go to buffer size 100 Mbytes or more.

>

> > I check the contents of .SHM_TYPE.TXT and it is "POSIXv2_SHM".

>

> Good.

No, wait, this is all wrong. If it says POSIX shared memory, how come it later

complains about SYSV shared memory and tells you to run SYSV shared memory

commands like ipcrm?!?

> > But there is no buffer sizes in "/Experiment"

Now this kind of makes sense - you are probably running a strange mixture

of very old and fairly recent MIDAS. Probably your current version is so old

that it does not use .SHM_TYPE.TXT and can only do SYSV shared memory

and so old it does not have "/Experiment/buffer sizes".

But at some point you must have run a recent version of midas, or you would

not have the file .SHM_TYPE.TXT in your experiment directory.

I say:

a) run the correct ipcrm command (without the spurious "size..." text)

b) review your computer contents to identify all the versions of midas

and to make sure you are using the midas you want to use (old or new,

whatever), but not some wrong version by accident (incorrect PATH setting, etc)

As MIDAS developers, we usually recommend that you use the latest version of MIDAS,

certainly the latest version is simpler to debug.

K.O. |

|