| ID | Date | Author | Topic | Subject |

|

1873

|

03 Apr 2020 |

Stefan Ritt | Info | CLOCK_REALTIME on MacOS | > Dear all,

> I'm trying to compile MIDAS on MacOS 10.10 and I get this error:

>

> /Users/francesco/MIDAS/midas/src/system.cxx:3187:18: error: use of undeclared identifier

> 'CLOCK_REALTIME'

> clock_settime(CLOCK_REALTIME, &ltm);

>

> Is it related to my (old) version of MacOS? Can I fix it somehow?

>

> Thank you,

> Francesco

If I see this correctly, you need at least MacOSX 10.12. If you can't upgrade, you can just remove line 3187

from system.cxx. This function is only used in an online environment, where you would run a frontend on your

Mac, which you probably don't do. So removing it does not hurt you.

Stefan |

|

1875

|

21 Apr 2020 |

Stefan Ritt | Suggestion | Sequencer loop break | > I am using the Midas sequencer to run subsequent measurements in a loop, without

> knowing how many iterations in advance. Therefore, I am using the "infinity"

> option. Since I have other commands after the loop, it would be nice to have the

> possibility to break the loop, but let the sequencer then finish the rest of the

> commands.

> Cheers,

> Ivo

You can do that with the "GOTO" statement, jumping to the first line after the loop.

Here is a working example:

LOOP runs, 5

WAIT Seconds 3

IF $runs > 2

GOTO 7

ENDIF

ENDLOOP

MESSAGE "Finished", 1

Best,

Stefan |

|

1877

|

23 Apr 2020 |

Stefan Ritt | Suggestion | Sequencer loop break | > > You can do that with the "GOTO" statement, jumping to the first line after the loop.

> >

> > Here is a working example:

> >

> >

> > LOOP runs, 5

> > WAIT Seconds 3

> > IF $runs > 2

> > GOTO 7

> > ENDIF

> > ENDLOOP

> > MESSAGE "Finished", 1

> >

> > Best,

> > Stefan

>

> Hoi Stefan

>

> Thanks for your answer. As I understand it, this has to be in the sequence script before

> running. So, in the end, it is not different than just saying "LOOP runs, 2" and

> therefore the number of runs has to be known in advance as well. Or is there an option to

> change the script on runtime? What I would like, is to start a sequence with "LOOP runs,

> infinite" and when I come back to the experiment after falling asleep being able to break

> the loop after the next iteration, but still execute everything after ENDLOOP, i.e. the

> MESSAGE statement in your example. Because if I do a "Stop after current run", this seems

> not to happen.

>

> Best, Ivo

First, you have the sequencer button "Stop after current run", but that of course does not execute anything after ENDLOOP.

Second, you can put anything in the IF statement. For example, create a variable in the ODB such as /Experiment/Run parameters/Stop loop and set it to zero. Then put in your script:

...

ODBGET /Experiment/Run parameters/Stop loop, flag

IF $flag == 1

GOTO 7

...

So once you want to stop the loop, set the flag in the ODB to one.
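The control flow of this pattern can be modeled outside the sequencer. The following is a minimal C++ sketch, not sequencer code: `run_sequence` and the `read_stop_flag` callback are hypothetical names that stand in for the loop and the ODBGET call, and `max_iterations` is only a safety bound for the toy model.

```cpp
#include <functional>
#include <string>
#include <vector>

// Toy model of the script: loop until the flag reads 1, then continue
// with the commands after ENDLOOP. Returns a log of the executed steps.
std::vector<std::string> run_sequence(const std::function<int()>& read_stop_flag,
                                      int max_iterations) {
   std::vector<std::string> log;
   for (int run = 1; run <= max_iterations; run++) {
      log.push_back("run " + std::to_string(run));
      if (read_stop_flag() == 1)   // corresponds to ODBGET + IF $flag == 1
         break;                    // corresponds to GOTO <first line after ENDLOOP>
   }
   log.push_back("Finished");      // the MESSAGE after ENDLOOP still runs
   return log;
}
```

The point of the design is that the flag is re-read on every iteration, so it can be changed while the sequence is running, and the steps after the loop are executed either way.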

Best,

Stefan |

|

1880

|

24 Apr 2020 |

Stefan Ritt | Forum | API to read MIDAS format file | I guess all three options would work. I just tried mhist and it still works with the "FILE" history

mhist -e <equipment name> -v <variable name> -h 10

for dumping a variable for the last 10 hours.

I could not get mhdump to work with current history files, maybe it only works with "MIDAS" history and not "FILE" history (see https://midas.triumf.ca/MidasWiki/index.php/History_System#History_drivers). Maybe Konstantin who wrote mhdump has some idea.

Writing your own parser is certainly possible (even in Python), but of course more work.

Stefan |

|

1883

|

24 Apr 2020 |

Stefan Ritt | Forum | API to read MIDAS format file |

| Pintaudi Giorgio wrote: |

Hypothetically which one between the two lends itself the better to being "batched"? I mean to be read and controlled by a program/routine. For example, some programs give the option to have the output formatted in json, etc... |

Can't say off the top of my head. Both programs are pretty old (written well before JSON was invented, so neither of them supports it). mhist was written by me, mhdump was written by Konstantin. I would give both a try and see which one you like more.

Stefan |

|

1889

|

26 Apr 2020 |

Stefan Ritt | Forum | Questions and discussions on the Frontend ODB tree structure. | Dear Yu Chen,

in my opinion, you can follow two strategies:

1) Follow the EQ_SLOW example from the distribution, and write a device driver for the hardware you want to control. There are dozens of experiments worldwide which use that scheme, and it works well for lots of different devices. By doing that, you automatically get things like a write cache (a value is only written to the actual device if it has really changed) and a dead band (measured data is only written to the ODB if it changes by more than a certain value, to suppress noise). Furthermore, you get events created automatically, and history for all your measured variables. This scheme might look complicated at first, and it's quite old-fashioned (no C++ yet), but it has proven stable over the last ~20 years or so. Maybe the biggest advantage of this system is that each device gets its own readout and control thread, so one slow device cannot block other devices. When this was introduced more than 10 years ago, we saw a big improvement in all experiments.

2) Do everything by yourself. This is the way you describe below. Of course you are free to do whatever you like, and you will find "special" solutions for all your problems. But then you move away from the "standard scheme", you lose all the benefits I described under 1), and of course you are on your own. If something does not work, it will be in your code and nobody from the community can help you.

So choose carefully between 1) and 2).
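To make the write cache and dead band from option 1) concrete, here is a minimal, self-contained C++ sketch of the two ideas. It is not the MIDAS slow control code: `WriteCache`, `DeadBand` and their counters are hypothetical names used only for illustration.

```cpp
#include <cmath>

// Write cache: forward a demand value to the device only if it differs
// from the last value that was written.
struct WriteCache {
   bool has_value = false;
   double last = 0.0;
   int device_writes = 0;   // counts simulated hardware writes

   void set(double demand) {
      if (has_value && demand == last)
         return;            // unchanged, skip the hardware access
      last = demand;
      has_value = true;
      device_writes++;      // a real driver would talk to the device here
   }
};

// Dead band: publish a measured value only if it moved by more than
// `threshold` since the last published value.
struct DeadBand {
   double threshold;
   bool has_value = false;
   double published = 0.0;
   int updates = 0;         // counts simulated ODB updates

   explicit DeadBand(double t) : threshold(t) {}

   void measure(double value) {
      if (has_value && std::fabs(value - published) <= threshold)
         return;            // within the dead band, treat as noise
      published = value;
      has_value = true;
      updates++;
   }
};
```

Note that the dead band compares against the last published value, not the last measured one, so a slow drift still gets through once it exceeds the threshold.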

Best regards,

Stefan

> Dear MIDAS developers and colleagues,

>

> This is Yu CHEN of School of Physics, Sun Yat-sen University, China, working in the PandaX-III collaboration, an experiment under development to search the neutrinoless double

> beta decay. We are working on the DAQ and slow control systems and would like to use Midas framework to communicate with the custom hardware systems, generally via Ethernet

> interfaces. So currently we are focusing on the development of the FRONTEND program of Midas and have some questions and discussions on its ODB structure. Since I'm still not

> experienced in the framework, it would be precious that you can provide some suggestions on the topic.

> The current structure of the frontend ODB tree we have designed, together with our understanding on them, is as follows:

> /Equipment/<frontend_name>/

> -> /Common/: Basic controls and left unchanged.

> -> /Variables/: (ODB records with MODE_READ) Monitored values that are AUTOMATICALLY updated from the bank data within each packed event. It is done by the update_odb()

> function in mfe.cxx.

> -> /Statistics/: (ODB records with MODE_WRITE) Default status values that are AUTOMATICALLY updated by the calling of db_send_changed_records() within the mfe.cxx.

> -> /Settings/: All the user defined data can be located here.

> -> /stat/: (ODB records with MODE_WRITE) All the monitored values as well as program internal status. The update operation is done simultaneously when

> db_send_changed_records() is called within the mfe.cxx.

> -> /set/: (ODB records with MODE_READ) All the "Control" data used to configure the custom program and custom hardware.

>

> For our application, some of the our detector equipment outputs small amount of status and monitored data together with the event data, so we currently choose not to use EQ_SLOW

> and 3-layer drivers for the readout. Our solution is to create two ODB sub-trees in the /Settings/ similar to what the device_driver does. However, this could introduce two

> troubles:

> 1) For /Settings/stat/: To prevent the potential destroy on the hot-links of /Variables/ and /Statistics/ sub-trees, all our status and monitored data are stored separately in

> the /Settings/stat/ sub-tree. Another consideration is that some monitored data are not directly from the raw data, so packaging them into the Bank for later showing in /Variables/

> could somehow lead to a complicated readout() function. However, this solution may be complicated for history loggings. I have find that the ANALYZER modules could provide some

> processes for writing to the /Variables/ sub-tree, so I would like to know whether an analyzer should be used in this case.

> 2) For /Settings/set/: The "control" data (similar to the "demand" data in the EQ_SLOW equipment) are currently put in several /Settings/set/ sub-trees where each key in

> them is hot-linked to a pre-defined hardware configuration function. However, some control operations are not related to a certain ODB key or related to several ODB keys (e.g.

> configuration the Ethernet sockets), so the dispatcher function should be assigned to the whole sub-tree, which I think can slow the response speed of the software. What we are

> currently using is to setup a dedicated "control key", and then the input of different value means different operations (e.g. 1 means socket opening, 2 means sending the UDP

> packets to the target hardware, et al.). This "control key" is also used to develop the buttons to be shown on the Status/Custom webpage. However, we would like to have your

> suggestions or better solutions on that, considering the stability and fast response of the control.

>

> We are not sure whether the above understanding and troubles on the Midas framework are correct or they are just due to our limits on the knowledge of the framework, so we

> really appreciate your knowledge and help for a better using on Midas. Thank you so much! |

|

1890

|

26 Apr 2020 |

Stefan Ritt | Info | CLOCK_REALTIME on MacOS | > > > /Users/francesco/MIDAS/midas/src/system.cxx:3187:18: error: use of undeclared identifier

> > > 'CLOCK_REALTIME'

> > > clock_settime(CLOCK_REALTIME, &ltm);

> > >

> > > Is it related to my (old) version of MacOS? Can I fix it somehow?

>

> I think the "set clock" function is a holdover from embedded operating systems

> that did not keep track of clock time, i.e. VxWorks, and similar. Here a midas program

> will get the time from the mserver and set it on the local system. Poor man's ntp,

> poor man's ntpd/chronyd.

>

> We should check if this function is called by anything, and if nothing calls it, maybe remove it?

>

> K.O.

It's called in mfe.cxx via cm_synchronize:

/* set time from server */

#ifdef OS_VXWORKS

cm_synchronize(NULL);

#endif

This was for old VxWorks systems which had no ntp/crond. It was requested by Pierre a long time ago. I don't use it (I have no VxWorks). We can either remove it completely, or remove just the MacOSX part and exit the program with an error message "not implemented on this OS" if it is called.
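The second option could look roughly like the following sketch, which compiles the `clock_settime()` call only where the headers define `CLOCK_REALTIME`. This is an illustration, not the code in system.cxx: `set_system_time`, `SetTimeStatus` and the `dry_run` flag are hypothetical names introduced here.

```cpp
#include <time.h>

// Hypothetical status codes for this sketch (not MIDAS constants).
enum class SetTimeStatus { Ok, Failed, NotImplemented };

// Set the system clock where the platform supports it. With dry_run set,
// only the availability check is performed and the clock is not touched.
SetTimeStatus set_system_time(const timespec& t, bool dry_run) {
#ifdef CLOCK_REALTIME
   if (dry_run)
      return SetTimeStatus::Ok;
   // usually needs root privileges, otherwise clock_settime() returns -1
   return clock_settime(CLOCK_REALTIME, &t) == 0 ? SetTimeStatus::Ok
                                                 : SetTimeStatus::Failed;
#else
   (void) t;
   (void) dry_run;
   // caller can print "not implemented on this OS" and exit
   return SetTimeStatus::NotImplemented;
#endif
}
```

On a system whose headers lack `CLOCK_REALTIME`, such as the MacOS 10.10 case reported above, this compiles cleanly and the caller receives `NotImplemented` instead of a build error.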

Stefan |

|

1892

|

01 May 2020 |

Stefan Ritt | Forum | Taking MIDAS beyond 64 clients | Hi Joseph,

here are some thoughts from my side:

- Breaking ODB compatibility in the master/develop midas branch is very bad, since almost all experiments worldwide are affected if they blindly do a pull and then want to recompile and rerun. Currently, even during our Corona crisis, some experiments are still running and monitored remotely.

- On the other hand, if we have to break compatibility, now is maybe a good time since most accelerators worldwide are off. But before doing so, I would like to get feedback from the main experiments around the world (MEG, T2K, g-2, DEAP besides ALPHA).

- Having a maximum of 64 clients was originally decided when memory was scarce. In the early days one had just a couple of megabytes of shared memory. Now this is not an issue any more, but I see another problem. The main status page gives a nice overview of the experiment. This only works because there is a limited number of midas clients and equipments. If we blow up to 1000+, the status page would be rather long and we would have to scroll up and down forever. In such a scenario one would at least have to redesign the status and program pages. To start your experiment, you would have to click 1000 times to start each front-end, which is also not very practical.

- Having 100's or 1000's of front-ends rather calls for a hierarchical design, like the LHC experiments have. That would be a major change of midas and cannot be done quickly. It would also result in much slower run start/stops.

- If you see limitations with your LabVIEW PCs, have you considered multi-threading on your front-ends? Note that the standard midas slow control system supports multithreaded devices (DF_MULTITHREAD). In MEG, we use about 800 microcontrollers via the MSCB protocol. They are grouped together, and each group is a multithreaded device in the midas slow control lingo, meaning the group gets its own thread for control and readout in the midas frontend. This way, one group cannot slow down all other groups. There is one front-end for all groups, which can be started/stopped with a single click; it shows up as just one line in the status page, and it's still pretty fast. Have you considered such a scheme? Your LabVIEW PCs would then not be individual front-ends, but would just make a network connection to the midas front-end, which then manages all LabVIEW PCs. The midas slow control system allows you to define custom commands (besides the usual read/write commands for slow control data), so you could maybe integrate all you need into that scheme.

Best,

Stefan

>

> Hi all,

> I have been experimenting with a frontend solution for my experiment

> (ALPHA). The intention to replace how we log data from PCs running LabVIEW.

> I am at the proof of concept stage. So far I have some promising

> performance, able to handle 10-100x more data in my test setup (current

> limitations now are just network bandwidth, MIDAS is impressively efficient).

> ==========================================================================

> Our experiment has many PCs using LabVIEW which all log to MIDAS, the

> experiment has grown such that we need some sort of load balancing in our

> frontend.

> The concept was to have a 'supervisor frontend' and an array of 'worker

> frontend' processes.

> -A LabVIEW client would connect to the supervisor, then be referred to a

> worker frontend for data logging.

> -The supervisor could start a 'worker frontend' process as the demand

> required.

> To increase accountability within the experiment, I intend to have a 'worker

> frontend' per PC connecting. Then any rogue behavior would be clear from the

> MIDAS frontpage.

> Presently there around 20-30 of these LabVIEW PCs, but given how the group

> is growing, I want to be sure that my data logging solution will be viable

> for the next 5-10 years. With the increased use of single board computers, I

> chose the target of benchmarking upto 1000 worker frontends... but I quickly

> hit the '64 MAX CLIENTS' and '64 RPC CONNECTION' limit. Ok...

> branching and updating these limits:

> https://bitbucket.org/tmidas/midas/branch/experimental-beyond_64_clients

> I have two commits.

> 1. update the memory layout assertions and use MAX_CLIENTS as a variable

> https://bitbucket.org/tmidas/midas/commits/302ce33c77860825730ce48849cb810cf366df96?at=experimental-beyond_64_clients

> 2. Change the MAX_CLIENTS and MAX_RPC_CONNECTION

> https://bitbucket.org/tmidas/midas/commits/f15642eea16102636b4a15c84113309696ce3df1?at=experimental-beyond_64_clients

> Unintended side effects:

> I break compatibility of existing ODB files... the database layout has

> changed and I read my old ODB as corrupt. In my test setup I can start from

> scratch but this would be horrible for any existing experiment.

> Edit: I noticed 'make testdiff' is failing... also fails lok

> Early performance results:

> In early tests, ~700 PCs logging 10 unique arrays of 10 doubles into

> Equipment variables in the ODB seems to perform well... All transactions

> from client PCs are finished within a couple of ms or less

> ==========================================================================

> Questions:

> Does the community here have strong opinions about increasing the

> MAX_CLIENTS and MAX_RPC_CONNECTION limits?

> Am I looking at this problem in a naive way?

>

> Potential solutions other than increasing the MAX_CLIENTS limit:

> -Make worker threads inside the supervisor (not a separate process), I am

> using TMFE, so I can dynamically create equipment. I have not yet taken a

> deep dive into how any multithreading is implemented

> -One could have a round robin system to load balance between a limited pool

> of 'worker frontend' processes. I don't like this solution as I want to be

> able to clearly see which client PCs have been setup to log too much data

> ========================================================================== |

|

1894

|

02 May 2020 |

Stefan Ritt | Forum | Taking MIDAS beyond 64 clients | TRIUMF stayed quiet, probably they have other things to do.

I allowed myself to move the maximum number of clients back to its original value, in order not to break running experiments.

This does not mean that the increase is a bad idea, we just have to be careful not to break running experiments. Let's discuss it

more thoroughly here before we make a decision in that direction.

Best regards,

Stefan |

|

1896

|

02 May 2020 |

Stefan Ritt | Forum | Taking MIDAS beyond 64 clients | > Perhaps a item for future discussion would be for the odbinit program to be able to 'upgrade' the ODB and enable some backwards

> compatibility.

We have had this discussion a few times already. There is an ODB version number (DATABASE_VERSION 3 in midas.h) which is intended for that. If we break the binary compatibility, programs should complain "ODB version has changed, please run ...", and then odbinit (written by KO) should have a well-defined procedure to upgrade existing ODBs by re-creating them while keeping all old contents. This should be tested on a few systems.
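A version check of this kind might look like the following minimal sketch. It is not the MIDAS implementation: `OdbHeader`, `kCompiledVersion` and `check_odb_version` are hypothetical names, and the only fact taken from the post is that the compiled-in version number is 3.

```cpp
#include <string>

// Hypothetical stand-in for the version compiled into the program;
// the post only states that midas.h defines DATABASE_VERSION as 3.
constexpr int kCompiledVersion = 3;

// Hypothetical stand-in for the header of an existing ODB.
struct OdbHeader {
   int version;   // version number stored in the existing ODB
};

// Returns an empty string if the versions match, otherwise a complaint
// that the program can print instead of treating the ODB as corrupt.
std::string check_odb_version(const OdbHeader& header) {
   if (header.version == kCompiledVersion)
      return "";
   return "ODB version has changed (found " + std::to_string(header.version) +
          ", expected " + std::to_string(kCompiledVersion) + ")";
}
```

A program would call this right after attaching to the ODB and refuse to continue on a non-empty result, leaving the actual conversion to the upgrade tool.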

Stefan |

|

1903

|

03 May 2020 |

Stefan Ritt | Forum | API to read MIDAS format file |

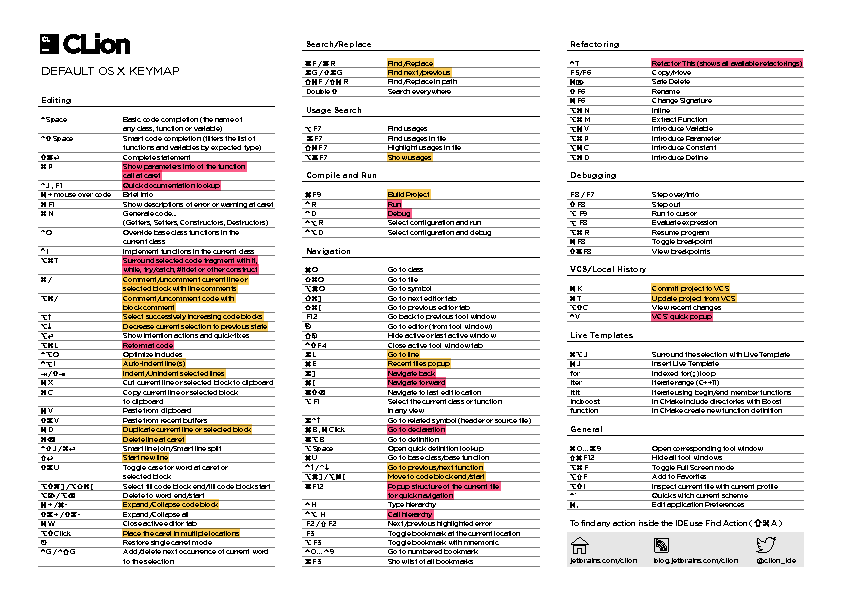

> PS some time ago, I don't remember if you or Stefan, recommended CLion as C++ IDE. I have tried it

> (together with PyCharm) and I must admit that it is really good. It took me years to configure Emacs

> as a IDE, while it took me minutes to have much better results in CLion. Thank you very much for

> your recommendation.

Was probably me. I use it as my standard IDE and am quite happy with it. It does all the things KO likes about emacs, plus much more. The CMake integration is especially nice, since you don't have to leave the IDE for editing, compiling and debugging. The tooltips the IDE gave me over the past months made me write much better code. So quite the opposite opinion compared with KO, but luckily this planet has space for all kinds of opinions. I made myself the attached cheat sheet, which lets me do things much faster. Maybe you can use it.

Stefan |

| Attachment 1: ReferenceCardForMac.pdf

|

|

|

1906

|

12 May 2020 |

Stefan Ritt | Info | New ODB++ API | Since the beginning of the lockdown I have been working hard on a new object-oriented interface to the online database ODB. I have the code now in an initial state where it is ready for

testing and commenting. The basic idea is that there is an object midas::odb, which represents a value or a sub-tree in the ODB. Reading, writing and watching is done through this

object. To get started, the new API has to be included with

#include <odbxx.hxx>

To create ODB values under a certain sub-directory, you can either create one key at a time like:

midas::odb o;

o.connect("/Test/Settings", true); // this creates /Test/Settings

o.set_auto_create(true); // this turns on auto-creation

o["Int32 Key"] = 1; // create all these keys with different types

o["Double Key"] = 1.23;

o["String Key"] = "Hello";

or you can create a whole sub-tree at once like:

midas::odb o = {
   {"Int32 Key", 1},
   {"Double Key", 1.23},
   {"String Key", "Hello"},
   {"Subdir", {
      {"Another value", 1.2f}
   }}
};

o.connect("/Test/Settings");

To read and write to the ODB, just read and write to the odb object

int i = o["Int32 Key"];

o["Int32 Key"] = 42;

std::cout << o << std::endl;

This works with basic types, strings, std::array and std::vector. Each read access to this object triggers an underlying read from the ODB, and each write access triggers a write to the ODB. To watch a value for changes in the ODB (the old db_watch() function), you can now use C++ lambdas like:

o.watch([](midas::odb &o) {

std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

});

Attached is a full running example, which is now also part of the midas repository. I have tested most things, but would not yet use it in a production environment. I am not 100% sure whether there are any memory leaks. If someone could valgrind the test program, I would appreciate it (valgrind currently does not work on my Mac).
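For readers wondering how "each read access triggers a read, each write access triggers a write" can work with plain `o["key"]` syntax, the usual C++ technique is a proxy object returned by `operator[]`. The following toy model illustrates that technique only. It is not the odbxx implementation, and `ToyStore`, `ToyOdb` and `Ref` are hypothetical names.

```cpp
#include <map>
#include <string>
#include <utility>

// Toy stand-in for the ODB: a key/value store that counts accesses.
struct ToyStore {
   std::map<std::string, int> values;
   int reads = 0;
   int writes = 0;
};

class ToyOdb {
   ToyStore& store_;
public:
   explicit ToyOdb(ToyStore& s) : store_(s) {}

   // Proxy returned by operator[]; it holds no value of its own.
   class Ref {
      ToyStore& store_;
      std::string key_;
   public:
      Ref(ToyStore& s, std::string k) : store_(s), key_(std::move(k)) {}

      // Conversion to int is a read access and goes to the store every time.
      operator int() const {
         store_.reads++;
         return store_.values.at(key_);
      }

      // Assignment is a write access and goes to the store immediately.
      Ref& operator=(int v) {
         store_.values[key_] = v;
         store_.writes++;
         return *this;
      }
   };

   Ref operator[](const std::string& key) { return Ref(store_, key); }
};
```

Because the proxy never caches a value, two consecutive reads of the same key hit the store twice, which mirrors the behaviour described above.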

Have fun!

Stefan

|

| Attachment 1: odbxx_test.cxx

|

/********************************************************************\

Name: odbxx_test.cxx

Created by: Stefan Ritt

Contents: Test and Demo of Object oriented interface to ODB

\********************************************************************/

#include <string>

#include <iostream>

#include <array>

#include <functional>

#include "odbxx.hxx"

#include "midas.h"

/*------------------------------------------------------------------*/

int main() {

cm_connect_experiment(NULL, NULL, "test", NULL);

midas::odb::set_debug(true);

// create ODB structure...

midas::odb o = {

{"Int32 Key", 42},

{"Bool Key", true},

{"Subdir", {

{"Int32 key", 123 },

{"Double Key", 1.2},

{"Subsub", {

{"Float key", 1.2f}, // floats must be explicitly specified

{"String Key", "Hello"},

}}

}},

{"Int Array", {1, 2, 3}},

{"Double Array", {1.2, 2.3, 3.4}},

{"String Array", {"Hello1", "Hello2", "Hello3"}},

{"Large Array", std::array<int, 10>{} }, // array with explicit size

{"Large String", std::string(63, '\0') }, // string with explicit size

};

// ...and push it to ODB. If keys are present in the

// ODB, their value is kept. If not, the default values

// from above are copied to the ODB

o.connect("/Test/Settings", true);

// alternatively, a structure can be created from an existing ODB subtree

midas::odb o2("/Test/Settings/Subdir");

std::cout << o2 << std::endl;

// retrieve, set, and change ODB value

int i = o["Int32 Key"];

o["Int32 Key"] = i+1;

o["Int32 Key"]++;

o["Int32 Key"] *= 1.3;

std::cout << "Should be 57: " << o["Int32 Key"] << std::endl;

// test with bool

o["Bool Key"] = !o["Bool Key"];

// test with std::string

std::string s = o["Subdir"]["Subsub"]["String Key"];

s += " world!";

o["Subdir"]["Subsub"]["String Key"] = s;

// test with a vector

std::vector<int> v = o["Int Array"];

v[1] = 10;

o["Int Array"] = v; // assign vector to ODB object

o["Int Array"][1] = 2; // modify ODB object directly

i = o["Int Array"][1]; // read from ODB object

o["Int Array"].resize(5); // resize array

o["Int Array"]++; // increment all values of array

// test with a string vector

std::vector<std::string> sv;

sv = o["String Array"];

sv[1] = "New String";

o["String Array"] = sv;

o["String Array"][2] = "Another String";

// iterate over array

int sum = 0;

for (int e : o["Int Array"])

sum += e;

std::cout << "Sum should be 11: " << sum << std::endl;

// create key from other key

midas::odb oi(o["Int32 Key"]);

oi = 123;

// test auto refresh

std::cout << oi << std::endl; // each read access reads value from ODB

oi.set_auto_refresh_read(false); // turn off auto refresh

std::cout << oi << std::endl; // this does not read value from ODB

oi.read(); // this does manual read

std::cout << oi << std::endl;

midas::odb ox("/Test/Settings/OTF");

ox.delete_key();

// create ODB entries on-the-fly

midas::odb ot;

ot.connect("/Test/Settings/OTF", true); // this forces /Test/Settings/OTF to be created if not already there

ot.set_auto_create(true); // this turns on auto-creation

ot["Int32 Key"] = 1; // create all these keys with different types

ot["Double Key"] = 1.23;

ot["String Key"] = "Hello";

ot["Int Array"] = std::array<int, 10>{};

ot["Subdir"]["Int32 Key"] = 42;

ot["String Array"] = std::vector<std::string>{"S1", "S2", "S3"};

std::cout << ot << std::endl;

o.read(); // re-read the underlying ODB tree which got changed by above OTF code

std::cout << o.print() << std::endl;

// iterate over sub-keys

for (auto& oit : o)

std::cout << oit.get_odb()->get_name() << std::endl;

// print whole sub-tree

std::cout << o.print() << std::endl;

// dump whole subtree

std::cout << o.dump() << std::endl;

// delete test key from ODB

o.delete_key();

// watch ODB key for any change with lambda function

midas::odb ow("/Experiment");

ow.watch([](midas::odb &o) {

std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

});

do {

int status = cm_yield(100);

if (status == SS_ABORT || status == RPC_SHUTDOWN)

break;

} while (!ss_kbhit());

cm_disconnect_experiment();

return 0;

}

|

|

1908

|

13 May 2020 |

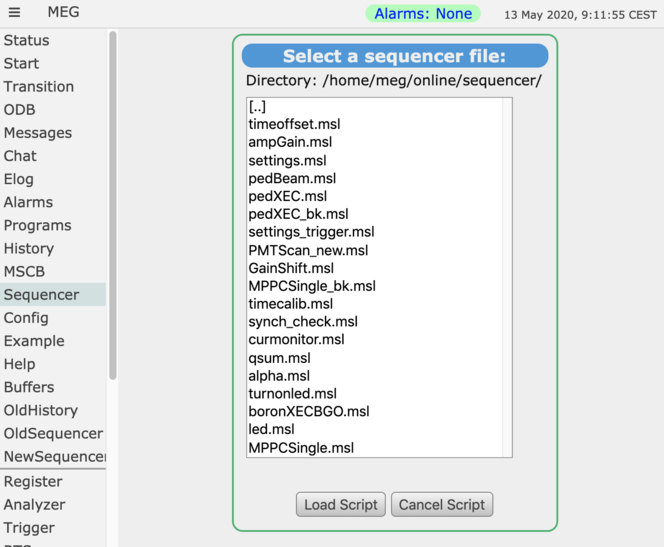

Stefan Ritt | Forum | List of sequencer files | If you load a file into the sequencer from the web interface, you get a list of all files in that directory.

This basically gives you a list of possible sequencer files. It's even more powerful, since you can

create subdirectories and thus group the sequencer files. Attached an example from our

experiment.

Stefan |

| Attachment 1: Screenshot_2020-05-13_at_9.11.55_.png

|

|

|

1914

|

20 May 2020 |

Stefan Ritt | Info | New ODB++ API | In the meantime, there have been minor changes and improvements to the API:

Previously, we had:

> midas::odb o;

> o.connect("/Test/Settings", true); // this creates /Test/Settings

> o.set_auto_create(true); // this turns on auto-creation

> o["Int32 Key"] = 1; // create all these keys with different types

> o["Double Key"] = 1.23;

> o["String Key"] = "Hello";

Now, we only need:

o.connect("/Test/Settings");

o["Int32 Key"] = 1; // create all these keys with different types

...

No "true" is needed any more. If the ODB tree does not exist, it gets created. Similarly, set_auto_create() can be dropped, since it is on by default (I thought this makes more sense). The iteration over subkeys has also been changed slightly.

The full example attached has been updated accordingly.

Best,

Stefan |

| Attachment 1: odbxx_test.cxx

|

/********************************************************************\

Name: odbxx_test.cxx

Created by: Stefan Ritt

Contents: Test and Demo of Object oriented interface to ODB

\********************************************************************/

#include <string>

#include <iostream>

#include <array>

#include <functional>

#include "midas.h"

#include "odbxx.hxx"

/*------------------------------------------------------------------*/

int main() {

cm_connect_experiment(NULL, NULL, "test", NULL);

midas::odb::set_debug(true);

// create ODB structure...

midas::odb o = {

{"Int32 Key", 42},

{"Bool Key", true},

{"Subdir", {

{"Int32 key", 123 },

{"Double Key", 1.2},

{"Subsub", {

{"Float key", 1.2f}, // floats must be explicitly specified

{"String Key", "Hello"},

}}

}},

{"Int Array", {1, 2, 3}},

{"Double Array", {1.2, 2.3, 3.4}},

{"String Array", {"Hello1", "Hello2", "Hello3"}},

{"Large Array", std::array<int, 10>{} }, // array with explicit size

{"Large String", std::string(63, '\0') }, // string with explicit size

};

// ...and push it to ODB. If keys are present in the

// ODB, their value is kept. If not, the default values

// from above are copied to the ODB

o.connect("/Test/Settings");

// alternatively, a structure can be created from an existing ODB subtree

midas::odb o2("/Test/Settings/Subdir");

std::cout << o2 << std::endl;

// set, retrieve, and change ODB value

o["Int32 Key"] = 42;

int i = o["Int32 Key"];

o["Int32 Key"] = i+1;

o["Int32 Key"]++;

o["Int32 Key"] *= 1.3;

std::cout << "Should be 57: " << o["Int32 Key"] << std::endl;

// test with bool

o["Bool Key"] = false;

o["Bool Key"] = !o["Bool Key"];

// test with std::string

o["Subdir"]["Subsub"]["String Key"] = "Hello";

std::string s = o["Subdir"]["Subsub"]["String Key"];

s += " world!";

o["Subdir"]["Subsub"]["String Key"] = s;

// test with a vector

std::vector<int> v = o["Int Array"]; // read vector

std::fill(v.begin(), v.end(), 10);

o["Int Array"] = v; // assign vector to ODB array

o["Int Array"][1] = 2; // modify array element

i = o["Int Array"][1]; // read from array element

o["Int Array"].resize(5); // resize array

o["Int Array"]++; // increment all values of array

// test with a string vector

std::vector<std::string> sv;

sv = o["String Array"];

sv[1] = "New String";

o["String Array"] = sv;

o["String Array"][2] = "Another String";

// iterate over array

int sum = 0;

for (int e : o["Int Array"])

sum += e;

std::cout << "Sum should be 27: " << sum << std::endl;

// create key from other key

midas::odb oi(o["Int32 Key"]);

oi = 123;

// test auto refresh

std::cout << oi << std::endl; // each read access reads value from ODB

oi.set_auto_refresh_read(false); // turn off auto refresh

std::cout << oi << std::endl; // this does not read value from ODB

oi.read(); // this forces a manual read

std::cout << oi << std::endl;

// create ODB entries on-the-fly

midas::odb ot;

ot.connect("/Test/Settings/OTF"); // this forces /Test/Settings/OTF to be created if not already there

ot["Int32 Key"] = 1; // create all these keys with different types

ot["Double Key"] = 1.23;

ot["String Key"] = "Hello";

ot["Int Array"] = std::array<int, 10>{};

ot["Subdir"]["Int32 Key"] = 42;

ot["String Array"] = std::vector<std::string>{"S1", "S2", "S3"};

std::cout << ot << std::endl;

o.read(); // re-read the underlying ODB tree which got changed by above OTF code

std::cout << o.print() << std::endl;

// iterate over sub-keys

for (midas::odb& oit : o)

std::cout << oit.get_name() << std::endl;

// print whole sub-tree

std::cout << o.print() << std::endl;

// dump whole subtree

std::cout << o.dump() << std::endl;

// delete test key from ODB

o.delete_key();

// watch ODB key for any change with lambda function

midas::odb ow("/Experiment");

ow.watch([](midas::odb &o) {

std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

});

do {

int status = cm_yield(100);

if (status == SS_ABORT || status == RPC_SHUTDOWN)

break;

} while (!ss_kbhit());

cm_disconnect_experiment();

return 0;

}

|

|

1922

|

28 May 2020 |

Stefan Ritt | Suggestion | ODB++ API - documantion updates and odb view after key creation | > 2. When I create an ODB structure with the new API I do for example:

>

> midas::odb stream_settings = {

> {"Test_odb_api", {

> {"Divider", 1000}, // int

> {"Enable", false}, // bool

> }},

> };

> stream_settings.connect("/Equipment/Test/Settings", true);

>

> and with

>

> midas::odb datagen("/Equipment/Test/Settings/Test_odb_api");

> std::cout << "Datagenerator Enable is " << datagen["Enable"] << std::endl;

>

> I am getting back false. Which looks nice but when I look into the odb via the browser the value is actually "y" meaning true which is stange. I added my frontend where I cleaned all function leaving only the frontend_init() one where I create this key. Its a cuda program but since I clean everything no cuda function is called anymore.

I cannot confirm this behaviour. I just put the following code in a standalone program:

cm_connect_experiment(NULL, NULL, "test", NULL);

midas::odb::set_debug(true);

midas::odb stream_settings = {

{"Test_odb_api", {

{"Divider", 1000}, // int

{"Enable", false}, // bool

}},

};

stream_settings.connect("/Equipment/Test/Settings", true);

midas::odb datagen("/Equipment/Test/Settings/Test_odb_api");

std::cout << "Datagenerator Enable is " << datagen["Enable"] << std::endl;

and run it. The result is:

...

Get ODB key "/Equipment/Test/Settings/Test_odb_api/Enable": false

Datagenerator Enable is Get ODB key "/Equipment/Test/Settings/Test_odb_api/Enable": false

false

Looking in the ODB, I also see

[local:Online:S]/>cd Equipment/Test/Settings/Test_odb_api/

[local:Online:S]Test_odb_api>ls

Divider 1000

Enable n

[local:Online:S]Test_odb_api>

So I am not sure what is different in your case. Are you looking at the same ODB? Maybe you have one remote and one local?

Note that the "true" flag in stream_settings.connect(..., true); forces all default values into the ODB.

So if the ODB value is "y", it will be changed to "n".

Best,

Stefan |

|

1924

|

30 May 2020 |

Stefan Ritt | Suggestion | ODB++ API - documantion updates and odb view after key creation | Marius, has the problem been fixed in the meantime?

Stefan

> I am getting back false. Which looks nice but when I look into the odb via the browser the value is actually "y" meaning true which is stange.

> I added my frontend where I cleaned all function leaving only the frontend_init() one where I create this key. Its a cuda program but since

> I clean everything no cuda function is called anymore. |

|

1932

|

04 Jun 2020 |

Stefan Ritt | Forum | Template of slow control frontend | > I'm beginner of Midas, and trying to develop the slow control front-end with the latest Midas.

> I found the scfe.cxx in the "example", but not enough to refer to write the front-end for my own devices

> because it contains only nulldevice and null bus driver case...

> (I could have succeeded to run the HV front-end for ISEG MPod, because there is the device driver...)

>

> Can I get some frontend examples such as simple TCP/IP and/or RS232 devices?

> Hopefully, I would like to have examples of frontend and device driver.

> (if any device driver which is included in the package is similar, please tell me.)

Have you checked the documentation?

https://midas.triumf.ca/MidasWiki/index.php/Slow_Control_System

Basically you have to replace the nulldevice driver with a "real" driver. You find all existing drivers under

midas/drivers/device. If your favourite is not there, you have to write it. Use one which is close to the one

you need and modify it.

Best,

Stefan |

|

1939

|

05 Jun 2020 |

Stefan Ritt | Suggestion | ODB++ API - documantion updates and odb view after key creation | Hi Marius,

your fix is good. Thanks for digging out this deep-lying issue, which would have haunted us if we had not fixed it.

The problem is that in midas, the "BOOL" type is 4 Bytes long, actually modelled after MS Windows. Now I realized

that in c++, the "bool" type is only 1 Byte wide. So if we do the memcopy from a "c++ bool" to a "MIDAS BOOL", we

always copy four bytes, meaning that we copy three Bytes beyond the one-byte value of the c++ bool. So your fix

is absolutely correct, and I added it in one more place where we deal with bool arrays, which need the same treatment.
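The size mismatch can be illustrated with a minimal sketch. It is not the odbxx code: `MidasBool` is a hypothetical stand-in for the 4-byte MIDAS BOOL, and `copy_as_midas_bool` only imitates a write that copies a caller-supplied number of bytes. The point is that the C++ bool (typically one byte) is first widened into a 4-byte temporary, so that a 4-byte copy never reads beyond the source value.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical stand-in for the 4-byte MIDAS BOOL (the real type comes from midas.h).
using MidasBool = std::uint32_t;

// Widen a C++ bool into a full 4-byte value. All four bytes are well defined,
// so taking the address of the result and copying 4 bytes from it is safe.
inline MidasBool to_midas_bool(bool b) {
    return b ? 1u : 0u;
}

// Imitates a size-driven write: copies `size` bytes from `src` into a
// 4-byte destination and returns what ended up there.
// The caller must guarantee that `src` really points to `size` valid bytes.
inline MidasBool copy_as_midas_bool(const void* src, std::size_t size) {
    assert(size <= sizeof(MidasBool));
    MidasBool dst = 0;
    std::memcpy(&dst, src, size);
    return dst;
}
```

With `MidasBool tmp = to_midas_bool(false);`, the call `copy_as_midas_bool(&tmp, sizeof(tmp))` copies four defined bytes. Passing the address of a plain `bool` with a size of four would instead read three bytes that do not belong to the value.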

What I don't understand, however, is why this fails for you. The ODB values are stored in the C union below:

union {

...

bool m_bool;

double m_double;

std::string *m_string;

...

}

Now the C compiler puts all values at the lowest address, so m_bool is at offset zero, and the string pointer reaches

over all eight bytes (we are on 64-bit OS).

Now when I initialize this union in odbxx.h:66, I zero the string pointer which is the widest object:

u_odb() : m_string{} {};

which (at least on my Mac) sets all eight bytes to zero. If I then use the wrong code to set the bool value to the ODB

in odbxx.cxx:756, I do

db_set_data_index(... &u, rpc_tid_size(m_tid), ...);

so it copies four bytes (=rpc_tid_size(TID_BOOL)) to the ODB. The first byte should be the c++ bool value (0 or 1),

and the other three bytes should be zero from the initialization above. Apparently on your system, this is not

the case, and I would like you to double check it. Maybe there is another underlying problem which I don't understand

at the moment but which we better fix.
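One way to double check this is to dump the first four bytes of the union's storage. The sketch below is an assumption-laden simplification: `u_sketch` contains only the three members quoted above and is not the real `u_odb`, and `first_four_bytes` is a hypothetical helper. Note that once `m_bool` is assigned, the C++ language makes no promise about the remaining bytes of the storage, so what they contain depends on the compiler and its optimisation settings.

```cpp
#include <array>
#include <cassert>
#include <cstring>
#include <string>

// Simplified stand-in for the union discussed above (not the real u_odb).
union u_sketch {
    bool m_bool;
    double m_double;
    std::string* m_string;

    // Zero the pointer member, mirroring the initialisation quoted from odbxx.h.
    u_sketch() : m_string{} {}
};

// Return the first four bytes of the union's storage, i.e. exactly what a
// 4-byte copy starting at the union's address would read.
inline std::array<unsigned char, 4> first_four_bytes(const u_sketch& u) {
    static_assert(sizeof(u_sketch) >= 4, "union must hold at least four bytes");
    std::array<unsigned char, 4> bytes{};
    std::memcpy(bytes.data(), &u, bytes.size());
    return bytes;
}
```

To use it, construct a `u_sketch`, assign `m_bool = false`, and print the four returned bytes. If any of the last three is non-zero on the affected machine, a 4-byte copy would store a non-zero BOOL, which would show up as "y" in the ODB.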

Otherwise the change is committed and your code should work. But we should not stop here! I really want to understand

why this is not working for you, maybe I am missing something.

Best,

Stefan

> Hi Stefan,

>

> your test program was only working for me after I changed the following lines inside the odbxx.cpp

>

> diff --git a/src/odbxx.cxx b/src/odbxx.cxx

> index 24b5a135..48edfd15 100644

> --- a/src/odbxx.cxx

> +++ b/src/odbxx.cxx

> @@ -753,7 +753,12 @@ namespace midas {

> }

> } else {

> u_odb u = m_data[index];

> - status = db_set_data_index(m_hDB, m_hKey, &u, rpc_tid_size(m_tid), index, m_tid);

> + if (m_tid == TID_BOOL) {

> + BOOL ss = bool(u);

> + status = db_set_data_index(m_hDB, m_hKey, &ss, rpc_tid_size(m_tid), index, m_tid);

> + } else {

> + status = db_set_data_index(m_hDB, m_hKey, &u, rpc_tid_size(m_tid), index, m_tid);

> + }

> if (m_debug) {

> std::string s;

> u.get(s);

>

> Likely not the best fix but otherwise I was always getting after running the test program:

>

> [ODBEdit,INFO] Program ODBEdit on host localhost started

> [local:Default:S]/>cd Equipment/Test/Settings/Test_odb_api/

> key not found

> makoeppe@office ~/mu3e/online/online (git)-[odb++_api] % test_connect

> Created ODB key /Equipment/Test/Settings

> Created ODB key /Equipment/Test/Settings/Test_odb_api

> Created ODB key /Equipment/Test/Settings/Test_odb_api/Divider

> Set ODB key "/Equipment/Test/Settings/Test_odb_api/Divider" = 1000

> Created ODB key /Equipment/Test/Settings/Test_odb_api/Enable

> Set ODB key "/Equipment/Test/Settings/Test_odb_api/Enable" = false

> Get definition for ODB key "/Equipment/Test/Settings/Test_odb_api"

> Get definition for ODB key "/Equipment/Test/Settings/Test_odb_api/Divider"

> Get ODB key "/Equipment/Test/Settings/Test_odb_api/Divider": 1000

> Get definition for ODB key "/Equipment/Test/Settings/Test_odb_api/Enable"

> Get ODB key "/Equipment/Test/Settings/Test_odb_api/Enable": false

> Get definition for ODB key "/Equipment/Test/Settings/Test_odb_api/Divider"

> Get ODB key "/Equipment/Test/Settings/Test_odb_api/Divider": 1000

> Get definition for ODB key "/Equipment/Test/Settings/Test_odb_api/Enable"

> Get ODB key "/Equipment/Test/Settings/Test_odb_api/Enable": false

> Datagenerator Enable is Get ODB key "/Equipment/Test/Settings/Test_odb_api/Enable": false

> false

> makoeppe@office ~/mu3e/online/online (git)-[odb++_api] % odbedit

> [ODBEdit,INFO] Program ODBEdit on host localhost started

> [local:Default:S]/>cd Equipment/Test/Settings/Test_odb_api/

> [local:Default:S]Test_odb_api>ls

> Divider 1000

> Enable y

>

> > > I am getting back false. Which looks nice but when I look into the odb via the browser the value is actually "y" meaning true which is stange.

> > > I added my frontend where I cleaned all function leaving only the frontend_init() one where I create this key. Its a cuda program but since

> > > I clean everything no cuda function is called anymore. |

|

1945

|

10 Jun 2020 |

Stefan Ritt | Forum | slow-control equipment crashes when running multi-threaded on a remote machine | Few comments:

- As KO writes, we might need semaphores also on a remote front-end, in case several programs share the same hardware. So it should work and cm_get_path() should not just exit.

- When I wrote the multi-threaded device drivers, I did use semaphores instead of mutexes, but I forgot why. Might be that midas semaphores have a timeout and mutexes do not, or

something along those lines.

- I do need either semaphores or mutexes since in a multi-threaded slow-control front-end (too many dashes...) several threads have to access an internal data exchange buffer, which

needs protection for multi-threaded environments.

So we can now either fix cm_get_path() or replace all semaphores with mutexes in midas/src/device_driver.cxx. I have kind of a feeling that we should do both. And what about

switching to c++ std::mutex instead of pthread mutexes?
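If the timeout was indeed the reason for using semaphores, the C++ standard library offers `std::timed_mutex`, which can give up after a given duration. The following is only a sketch of how a shared data exchange buffer could be protected that way. `ExchangeBuffer` and its methods are hypothetical names, not taken from device_driver.cxx. Such a mutex only protects threads inside one process, so it would not cover the case of several programs sharing the same hardware.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <mutex>
#include <vector>

// Hypothetical data exchange buffer shared between driver threads.
class ExchangeBuffer {
public:
    explicit ExchangeBuffer(std::size_t channels) : values_(channels, 0.0f) {}

    // Store a value; returns false if the lock could not be acquired within
    // `timeout` or if the channel index is out of range.
    bool set(std::size_t channel, float value, std::chrono::milliseconds timeout) {
        std::unique_lock<std::timed_mutex> lock(mutex_, timeout);  // uses try_lock_for()
        if (!lock.owns_lock() || channel >= values_.size())
            return false;
        values_[channel] = value;
        return true;
    }

    // Read a value; same failure conditions as set().
    bool get(std::size_t channel, float& value, std::chrono::milliseconds timeout) {
        std::unique_lock<std::timed_mutex> lock(mutex_, timeout);
        if (!lock.owns_lock() || channel >= values_.size())
            return false;
        value = values_[channel];
        return true;
    }

private:
    std::timed_mutex mutex_;     // protects values_
    std::vector<float> values_;  // one entry per channel
};
```

A driver thread would call `set()` after reading the hardware, and the main thread would call `get()` with a short timeout, treating a `false` return as "try again later" rather than blocking forever.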

Stefan |

|