| ID |

Date |

Author |

Topic |

Subject |

|

2348

|

23 Feb 2022 |

Stefan Ritt | Info | Midas slow control event generation switched to 32-bit banks | The midas slow control system class drivers automatically read their equipment and generate events containing midas banks. So far these have been 16-bit banks using bk_init(). But more and more experiments now use a large number of channels, so the 16-bit address space is exceeded. Until last week, there was not even a check for this, leading to unpredictable crashes.

Therefore I switched the bank generation in the drivers generic.cxx, hv.cxx and multi.cxx to 32-bit banks via bk_init32(). This should in principle be transparent, since the midas bank functions automatically detect the bank type during reading. But I thought I'd let everybody know just in case.

Stefan |

|

2347

|

16 Feb 2022 |

Marius Koeppel | Bug Report | Writing MIDAS Events via FPGAs | I just came back to this and started to use the dummy frontend.

Unfortunately, I have a problem during run cycles:

Starting the frontend and starting a run works fine -> seeing events with mdump and also on the web GUI.

But when I stop the run and try to start the next run the frontend is sending no events anymore.

It gets stuck at line 221 (if (status == DB_TIMEOUT)).

I tried to reduce the nEvents to 1 which helped in terms of DB_TIMEOUT but still I don't get any events after I did a stop / start cycle -> no events in mdump and no events counting up at the web GUI.

If I kill the frontend in the terminal (ctrl+c) and restart it, while the run is still running, it starts to send events again.

Cheers,

Marius |

|

2346

|

16 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > > But the error still persists. Is there another way to update which we are missing?

>

> The bug was definitively fixed in this modification:

>

> https://bitbucket.org/tmidas/midas/commits/5f33f9f7f21bcaa474455ab72b15abc424bbebf2

>

> You probably forgot to compile/install correctly after your pull. If you start "odbedit" and do

> a "ver" you see which git revision you are currently running. Make sure to get this output:

>

> MIDAS version: 2.1

> GIT revision: Fri Feb 11 08:56:02 2022 +0100 - midas-2020-08-a-509-g585faa96 on branch

> develop

> ODB version: 3

>

>

> Stefan

We were having some problems compiling but have got it sorted now - thanks for your help:)

Jago |

|

Draft

|

15 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > > But the error still persists. Is there another way to update which we are missing?

>

> The bug was definitively fixed in this modification:

>

> https://bitbucket.org/tmidas/midas/commits/5f33f9f7f21bcaa474455ab72b15abc424bbebf2

>

> You probably forgot to compile/install correctly after your pull. If you start "odbedit" and do

> a "ver" you see which git revision you are currently running. Make sure to get this output:

>

> MIDAS version: 2.1

> GIT revision: Fri Feb 11 08:56:02 2022 +0100 - midas-2020-08-a-509-g585faa96 on branch

> develop

> ODB version: 3

>

>

> Stefan

Hey Stefan,

We are running the GIT revision midas-2020-08-a-509-g585faa96:

[local:mu3eMSci:S]/>ver

MIDAS version: 2.1

GIT revision: Tue Feb 15 16:31:07 2022 +0000 - midas-2020-08-a-521-ge43ea7c5 on branch develop

ODB version: 3

which is still giving the error unfortunately. |

|

2344

|

15 Feb 2022 |

Stefan Ritt | Bug Fix | ODBINC/Sequencer Issue | > But the error still persists. Is there another way to update which we are missing?

The bug was definitively fixed in this modification:

https://bitbucket.org/tmidas/midas/commits/5f33f9f7f21bcaa474455ab72b15abc424bbebf2

You probably forgot to compile/install correctly after your pull. If you start "odbedit" and do

a "ver" you see which git revision you are currently running. Make sure to get this output:

MIDAS version: 2.1

GIT revision: Fri Feb 11 08:56:02 2022 +0100 - midas-2020-08-a-509-g585faa96 on branch

develop

ODB version: 3

Stefan |

|

2343

|

14 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > > I noticed that "Jacob Thorne" in the forum had the same issue as us in November last

> > year. Indeed we have not installed any later versions of MIDAS since then so we will

> > double check we have the latest version.

>

> As you see from my reply to Jacob, the bug has been fixed in midas since then, so just

> update.

>

> Stefan

We have tried updating using both:

git submodule update --init --recursive

and:

git pull --recurse-submodules

But the error still persists. Is there another way to update which we are missing?

Cheers

Jago |

|

2342

|

14 Feb 2022 |

Stefan Ritt | Bug Fix | ODBINC/Sequencer Issue | > I noticed that "Jacob Thorne" in the forum had the same issue as us in November last

> year. Indeed we have not installed any later versions of MIDAS since then so we will

> double check we have the latest version.

As you see from my reply to Jacob, the bug has been fixed in midas since then, so just

update.

Stefan |

|

2341

|

14 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > Just post here a minimal script which produces the error, so that I can try myself.

>

> ... and make sure that you have the latest develop version of midas.

>

> Stefan

Here is the simplest script which produces the error:

WAIT seconds, 3

ODBINC /Equipment/ArduinoTestStation/Variables/_S_

I noticed that "Jacob Thorne" in the forum had the same issue as us in November last

year. Indeed we have not installed any later versions of MIDAS since then so we will

double check we have the latest version.

Jago |

|

2340

|

14 Feb 2022 |

Stefan Ritt | Bug Fix | ODBINC/Sequencer Issue | Just post here a minimal script which produces the error, so that I can try myself.

... and make sure that you have the latest develop version of midas.

Stefan |

|

2339

|

14 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > >

> > [local:mu3eMSci:S]/>cd Equipment/ArduinoTestStation/Variables

> > [local:mu3eMSci:S]Variables>ls -l

> > Key name Type #Val Size Last Opn Mode Value

> > ---------------------------------------------------------------------------

> > _T_ FLOAT 1 4 1h 0 RWD 20.93

> > _F_ FLOAT 1 4 1h 0 RWD 12.8

> > _P_ FLOAT 1 4 1h 0 RWD 56

> > _S_ FLOAT 1 4 1h 0 RWD 5

> > _H_ FLOAT 1 4 60h 0 RWD 44.74

> > _B_ FLOAT 1 4 60h 0 RWD 18.54

> > _A_ FLOAT 1 4 1h 0 RWD 14.41

> > _RH_ FLOAT 1 4 1h 0 RWD 41.81

> > _AT_ FLOAT 1 4 1h 0 RWD 20.46

> > SP INT16 1 2 1h 0 RWD 10

> >

>

> This looks okay, so we still have no explanation for your error. Please post your sequencer

> script?

>

> K.O.

Hey, thanks for getting back to me

We are fairly confident the syntax is correct. Having tried the test script posted by Stefan:

> ODBSET /Equipment/ArduinoTestStation/Variables/_S_, 10

>

> LOOP 10

> WAIT seconds, 3

> ODBINC

The same error is returned:/

We will take another look today.

Jago |

|

2338

|

14 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > I tried the following script:

>

> ODBSET /Equipment/ArduinoTestStation/Variables/_S_, 10

>

> LOOP 10

> WAIT seconds, 3

> ODBINC /Equipment/ArduinoTestStation/Variables/_S_

> ENDLOOP

>

> and it worked as expected. So I conclude the problem must be in your script. Probably a typo in

> the ODB path pointing to a 32-byte string instead of a 4-byte float.

>

> Stefan

Hi Stefan,

Cheers for the reply. I believe the syntax we are using is correct. I have tried copying the script

you used above and it results in the same error as before. Perhaps something is going wrong

elsewhere - I will take another look today.

Jago |

|

2337

|

11 Feb 2022 |

Alexey Kalinin | Bug Report | some frontend kicked by cm_periodic_tasks | Thanks for the answer.

As soon as I can (possibly in a month) I'll try the suggestion below:

> One thing to try is set the write cache size to zero and see if your crash goes away. I see

> some indication of something rotten in the event buffer code if write cache is enabled. This

> is set in ODB "/Eq/XXX/Common/Write Cache Size", set it to zero. (beware recent confusion

> where odb settings have no effect depending on value of "equipment_common_overwrite").

I tried to change this ODB setting for one of the frontends via mhttpd/browser, and eventually it went back

to the default value (1000, as I remember). This frontend has the lowest rate, 50 DWORD per ~10 sec, and

depending on the cache size its events show up in mdump only once per 31 events, though all of them arrive. So it's a different

story, but maybe it has the same solution: playing with the Write Cache Size.

The double free messages come from the mserver terminal.

All of the frontends are remote.

I can't exclude crashes of the frontend, but when I run ./frontend -i 1 (2, 3, etc.) that means I run

the same code for all of them, and only some of them crash. I also found that the crash in the frontend happened while

it was doing nothing with the collected data (last event reached and new data not ready yet), but was trying to

watch for ODB changes. I mean it crashes inside (while {odb_changes(value in watchdog)}), and I don't

know what else happened meanwhile with the cached buffer.

The future plan is to use the event builder for the frontends once the data/signals are perfectly reasonable,

i.e. without broken events. For now I am a bit worried about whether one of the frontends will skip one of the

events inside its buffer.

Thanks for the pointers on where to dig.

A.

> > The problem is that eventually some of frontend closed with message

> > :19:22:31.834 2021/12/02 [rootana,INFO] Client 'Sample Frontend38' on buffer

> > 'SYSMSG' removed by cm_periodic_tasks because process pid 9789 does not exist

>

> This messages means what it says. A client was registered with the SYSMSG buffer and this

> client had pid 9789. At some point some other client (rootana, in this case) checked it and

> process pid 9789 was no longer running. (it then proceeded to remove the registration).

>

> There are 2 possibilities:

> - simplest: your frontend has crashed. best to debug this by running it inside gdb, wait for

> the crash.

> - unlikely: reported pid is bogus, real pid of your frontend is different, the client

> registration in SYSMSG is corrupted. this would indicate massive corruption of midas shared

> memory buffers, not impossible if your frontend misbehaves and writes to random memory

> addresses. ODB has protection against this (normally turned off, easy to enable, set ODB

> "/experiment/protect odb" to yes), shared memory buffers do not have protection against this

> (should be added?).

>

> Do this. When you start your frontend, write down its pid; when you see the crash message,

> confirm pid number printed is the same. As additional test, run your frontend inside gdb,

> after it crashes, you can print the stack trace, etc.

>

> >

> > in the meantime the mserver logs:

> > mserver started interactively

> > mserver will listen on TCP port 1175

> > double free or corruption (!prev)

> > double free or corruption (!prev)

> > free(): invalid next size (normal)

> > double free or corruption (!prev)

> >

>

> Are these "double free" messages coming from the mserver or from your frontend? (i.e. you run

> them in different terminals, not all in the same terminal?).

>

> If messages are coming from the mserver, this confirms possibility (1),

> except that for frontends connected remotely, the pid is the pid of the mserver,

> and what we see are crashes of mserver, not crashes of your frontend. These are much harder to

> debug.

>

> You will need to enable core dumps (ODB /Experiment/Enable core dumps set to "y"),

> confirm that core dumps work (i.e. "killall -SEGV mserver", observe core files are created

> in the directory where you started the mserver), reproduce the crash, run "gdb mserver

> core.NNNN", run "bt" to print the stack trace, post the stack trace here (or email to me

> directly).

>

> >

> > I can find some correlation with the number of events/event size produced by the

> > frontend, because it fails when these become big enough.

> >

>

> There is no limit on event size or event rate in midas, you should not see any crash

> regardless of what you do. (there is a limit of event size, because an event has

> to fit inside an event buffer and event buffer size is limited to 2 GB).

>

> Obviously you hit a bug in mserver that makes it crash. Let's debug it.

>

> One thing to try is set the write cache size to zero and see if your crash goes away. I see

> some indication of something rotten in the event buffer code if write cache is enabled. This

> is set in ODB "/Eq/XXX/Common/Write Cache Size", set it to zero. (beware recent confusion

> where odb settings have no effect depending on value of "equipment_common_overwrite").

>

> >

> > frontend scheme is like this:

> >

>

> Best if you use the tmfe c++ frontend, event data handling is much simpler and we do not

> have to debug the convoluted old code in mfe.c.

>

> K.O.

>

> >

> > poll event time set to 0;

> >

> > poll_event{

> > //if buffer not transferred return (continue cutting the main buffer)

> > //read main buffer from hardware

> > //buffer not transfered

> > }

> >

> > read event{

> > // cut the main buffer to subevents (cut one event from main buffer) return;

> > //if (last subevent) {buffer transfered ;return}

> > }

> >

> > What is strange to me that 2 frontends (1 per remote pc) causing this.

> >

> > Also, I'm executing one FEcode with -i # flag , put setting eventid in

> > frontend_init , and using SYSTEM buffer for all.

> >

> > Is there something I'm missing?

> > Thanks.

> > A. |

|

2336

|

10 Feb 2022 |

Stefan Ritt | Bug Report | History plots deceiving users into thinking data is still logging | The problem was fixed in commit 825935dc in Oct. 2021 and has run fine at PSI since then. If the TRIUMF people

agree, we can close that issue and proceed.

Stefan |

|

2335

|

10 Feb 2022 |

Stefan Ritt | Bug Fix | ODBINC/Sequencer Issue | I tried the following script:

ODBSET /Equipment/ArduinoTestStation/Variables/_S_, 10

LOOP 10

WAIT seconds, 3

ODBINC /Equipment/ArduinoTestStation/Variables/_S_

ENDLOOP

and it worked as expected. So I conclude the problem must be in your script. Probably a typo in

the ODB path pointing to a 32-byte string instead of a 4-byte float.

Stefan |

|

2334

|

10 Feb 2022 |

Francesco Renga | Forum | OPC client within MIDAS | Dear all,

I finally succeeded in getting a working driver for the communication with an OPC

UA server. It is based on the open62541 library and I use it in combination with the

generic.h driver class. This is still a crude implementation, but let me post it here,

maybe it can be useful to somebody else.

BTW, if there is somebody more skilled than me with OPC UA and MIDAS drivers, who is

willing to give suggestions for improving the implementation, it would be extremely

appreciated.

Best Regards,

Francesco

> Dear all,

> I need to integrate in my MIDAS project the communication with an OPC UA

> server. My plan is to develop an OPC UA client as a "device" in

> midas/drivers/device.

>

> Two questions:

>

> 1) Is anybody aware of some similar effort for some other project, so that I can

> get some example?

>

> 2) What could be the more appropriate driver's class to be used? generic.cxx?

> multi.cxx?

>

> Thank you for your help,

> Francesco |

| Attachment 1: opc.cxx

|

/********************************************************************\

Name: opc.cxx

Created by: Francesco Renga

Contents: Device Driver for generic OPC server

$Id: mscbdev.c 3428 2006-12-06 08:49:38Z ritt $

\********************************************************************/

#include <stdio.h>

#include <stdlib.h>

#include <stdarg.h>

#include "midas.h"

#include "msystem.h"

#include <open62541/client_config_default.h>

#include <open62541/client_highlevel.h>

#include <open62541/client_subscriptions.h>

#include <open62541/plugin/log_stdout.h>

#ifndef _MSC_VER

#include <sys/socket.h>

#include <netinet/in.h>

#include <arpa/inet.h>

#include <errno.h>

#include <string.h>

#endif

#include <vector>

using namespace std;

/*---- globals -----------------------------------------------------*/

typedef struct {

char Name[32]; // System Name (duplication)

char ip[32]; // IP# for network access

int nsIndex;

char TagsGuid[64];

} OPC_SETTINGS;

#define OPC_SETTINGS_STR "\

System Name = STRING : [32] gassys\n\

IP = STRING : [32] 172.17.19.70:4870\n\

Namespace Index = INT : 3\n\

Tags Guid = STRING : [64] ecef81d5-c834-4379-ab79-c8fa3133a311\n\

"

typedef struct {

OPC_SETTINGS opc_settings;

UA_Client* client;

int num_channels;

vector<string> channel_name;

vector<int> channel_type;

} OPC_INFO;

/*---- device driver routines --------------------------------------*/

INT opc_init(HNDLE hKey, void **pinfo, INT channels, INT(*bd) (INT cmd, ...))

{

int status, size;

OPC_INFO *info;

HNDLE hDB;

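/* allocate info structure; placement new constructs the std::vector members */

info = (OPC_INFO *)calloc(1, sizeof(OPC_INFO));

if (info == NULL)

return FE_ERR_HW;

new (info) OPC_INFO();

*pinfo = info;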

cm_get_experiment_database(&hDB, NULL);

/* create OPC settings record */

status = db_create_record(hDB, hKey, "", OPC_SETTINGS_STR);

if (status != DB_SUCCESS)

return FE_ERR_ODB;

size = sizeof(info->opc_settings);

db_get_record(hDB, hKey, &info->opc_settings, &size, 0);

info->client = UA_Client_new();

UA_ClientConfig_setDefault(UA_Client_getConfig(info->client));

char address[64]; /* "opc.tcp://" prefix plus up to 31 characters of address */

snprintf(address, sizeof(address), "opc.tcp://%s", info->opc_settings.ip);

printf("Connecting to %s...\n",address);

UA_StatusCode retval = UA_Client_connect(info->client, address);

if(retval != UA_STATUSCODE_GOOD) {

UA_Client_delete(info->client);

return FE_ERR_HW;

}

////Scan of variables

UA_BrowseRequest bReq;

UA_BrowseRequest_init(&bReq);

bReq.requestedMaxReferencesPerNode = 0;

bReq.nodesToBrowse = UA_BrowseDescription_new();

bReq.nodesToBrowseSize = 1;

UA_Guid guid1;

UA_Guid_parse(&guid1, UA_STRING(info->opc_settings.TagsGuid));

bReq.nodesToBrowse[0].nodeId = UA_NODEID_GUID(info->opc_settings.nsIndex, guid1);

bReq.nodesToBrowse[0].resultMask = UA_BROWSERESULTMASK_ALL; /* return everything */

UA_BrowseResponse bResp = UA_Client_Service_browse(info->client, bReq);

for(size_t i = 0; i < bResp.resultsSize; ++i) {

for(size_t j = 0; j < bResp.results[i].referencesSize; ++j) {

UA_ReferenceDescription *ref = &(bResp.results[i].references[j]);

if ((ref->nodeClass == UA_NODECLASS_OBJECT || ref->nodeClass == UA_NODECLASS_VARIABLE||ref->nodeClass == UA_NODECLASS_METHOD)) {

if(ref->nodeId.nodeId.identifierType == UA_NODEIDTYPE_STRING) {

UA_NodeId dataType;

UA_Client_readDataTypeAttribute(info->client, UA_NODEID_STRING(info->opc_settings.nsIndex, ref->nodeId.nodeId.identifier.string.data), &dataType);

(info->channel_type).push_back(dataType.identifier.numeric-1);

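/* UA_String is length-delimited, not null-terminated: copy by length */

(info->channel_name).push_back(string((const char*)ref->nodeId.nodeId.identifier.string.data, ref->nodeId.nodeId.identifier.string.length));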

}

}

}

}

UA_BrowseNextRequest bNextReq;

UA_BrowseNextResponse bNextResp;

UA_BrowseNextRequest_init(&bNextReq);

bNextReq.releaseContinuationPoints = UA_FALSE;

bNextReq.continuationPoints = &bResp.results[0].continuationPoint;

bNextReq.continuationPointsSize = 1;

bool hasRef;

do {

hasRef = false;

bNextResp = UA_Client_Service_browseNext(info->client, bNextReq);

for (size_t i = 0; i < bNextResp.resultsSize; i++) {

for (size_t j = 0; j < bNextResp.results[i].referencesSize; j++) {

hasRef = true;

UA_ReferenceDescription *ref = &(bNextResp.results[i].references[j]);

if ((ref->nodeClass == UA_NODECLASS_OBJECT || ref->nodeClass == UA_NODECLASS_VARIABLE||ref->nodeClass == UA_NODECLASS_METHOD)) {

if(ref->nodeId.nodeId.identifierType == UA_NODEIDTYPE_STRING) {

UA_NodeId dataType;

UA_Client_readDataTypeAttribute(info->client, UA_NODEID_STRING(info->opc_settings.nsIndex, ref->nodeId.nodeId.identifier.string.data), &dataType);

(info->channel_type).push_back(dataType.identifier.numeric-1);

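/* UA_String is length-delimited, not null-terminated: copy by length */

(info->channel_name).push_back(string((const char*)ref->nodeId.nodeId.identifier.string.data, ref->nodeId.nodeId.identifier.string.length));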

}

}

}

}

bNextReq.continuationPoints = &bNextResp.results[0].continuationPoint;

bNextReq.continuationPointsSize = 1;

} while(hasRef);

info->num_channels = info->channel_name.size();

/* release the browse request and response (the deprecated _deleteMembers calls did the same job) */

UA_BrowseRequest_clear(&bReq);

UA_BrowseResponse_clear(&bResp);

return FE_SUCCESS;

}

/*----------------------------------------------------------------------------*/

INT opc_exit(OPC_INFO * info)

{

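/* check the pointer before using it */

if (info == NULL)

return FE_SUCCESS;

UA_Client_disconnect(info->client);

UA_Client_delete(info->client);

info->~OPC_INFO(); /* release the std::vector members before freeing the raw block */

free(info);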

return FE_SUCCESS;

}

/*----------------------------------------------------------------------------*/

INT opc_get(OPC_INFO * info, INT channel, float *pvalue)

{

////Read value

UA_Variant *val = UA_Variant_new();

UA_StatusCode retval = UA_Client_readValueAttribute(info->client, UA_NODEID_STRING(info->opc_settings.nsIndex, info->channel_name[channel].c_str()), val);

if (retval == UA_STATUSCODE_GOOD && UA_Variant_isScalar(val) &&

val->type == &UA_TYPES[info->channel_type[channel]]

) {

if(info->channel_type[channel] == UA_TYPES_FLOAT){

*pvalue = *(UA_Float*)val->data;

}

else if(info->channel_type[channel] == UA_TYPES_DOUBLE){

*pvalue = *(UA_Double*)val->data;

}

else if(info->channel_type[channel] == UA_TYPES_INT16){

*pvalue = *(UA_Int16*)val->data;

}

else if(info->channel_type[channel] == UA_TYPES_INT32){

*pvalue = *(UA_Int32*)val->data;

}

else if(info->channel_type[channel] == UA_TYPES_INT64){

*pvalue = *(UA_Int64*)val->data;

}

else if(info->channel_type[channel] == UA_TYPES_BOOLEAN){

*pvalue = (*(UA_Boolean*)val->data) ? 1.0 : 0.0;

}

else if(info->channel_type[channel] == UA_TYPES_BYTE){

*pvalue = *(UA_Byte*)val->data;

}

else {

*pvalue = 0.;

}

}

UA_Variant_delete(val);

return FE_SUCCESS;

}

INT opc_set(OPC_INFO * info, INT channel, float value)

{

////Write value

UA_Variant *myVariant = UA_Variant_new();

if(info->channel_type[channel] == UA_TYPES_FLOAT){

UA_Float typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_DOUBLE){

UA_Double typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_INT16){

UA_Int16 typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_INT32){

UA_Int32 typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_INT64){

UA_Int64 typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_BOOLEAN){

UA_Boolean typevalue = (value != 0) ? true : false;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else if(info->channel_type[channel] == UA_TYPES_BYTE){

UA_Byte typevalue = value;

UA_Variant_setScalarCopy(myVariant, &typevalue, &UA_TYPES[info->channel_type[channel]]);

}

else {

UA_Variant_delete(myVariant); /* unsupported type: free the variant before returning */

return FE_SUCCESS;

}

UA_Client_writeValueAttribute(info->client, UA_NODEID_STRING(info->opc_settings.nsIndex, info->channel_name[channel].c_str()), myVariant);

UA_Variant_delete(myVariant);

return FE_SUCCESS;

}

/*---- device driver entry point -----------------------------------*/

... 62 more lines ...

|

| Attachment 2: opc.h

|

/********************************************************************\

Name: opc.h

Created by: based on null.h / Stefan Ritt

Contents: Device driver function declarations for the OPC device

$Id$

\********************************************************************/

INT opc(INT cmd, ...);

|

|

2333

|

09 Feb 2022 |

Konstantin Olchanski | Bug Fix | ODBINC/Sequencer Issue | >

> [local:mu3eMSci:S]/>cd Equipment/ArduinoTestStation/Variables

> [local:mu3eMSci:S]Variables>ls -l

> Key name Type #Val Size Last Opn Mode Value

> ---------------------------------------------------------------------------

> _T_ FLOAT 1 4 1h 0 RWD 20.93

> _F_ FLOAT 1 4 1h 0 RWD 12.8

> _P_ FLOAT 1 4 1h 0 RWD 56

> _S_ FLOAT 1 4 1h 0 RWD 5

> _H_ FLOAT 1 4 60h 0 RWD 44.74

> _B_ FLOAT 1 4 60h 0 RWD 18.54

> _A_ FLOAT 1 4 1h 0 RWD 14.41

> _RH_ FLOAT 1 4 1h 0 RWD 41.81

> _AT_ FLOAT 1 4 1h 0 RWD 20.46

> SP INT16 1 2 1h 0 RWD 10

>

This looks okay, so we still have no explanation for your error. Please post your sequencer

script?

K.O. |

|

2332

|

09 Feb 2022 |

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | > Please post the output of odbedit "ls -l" for /eq/ar.../variables. (you posted the

> variable name as an image, and I cannot cut-and-paste the odb path!). BTW data size 4 is

> correct, 4 bytes for INT32/UINT32/FLOAT. For DOUBLE it should be 8. For you it prints 32

> and this is wrong, we need to see the output of "ls -l".

> K.O.

Hi,

Thanks for getting back to me regarding this. The output of "ls -l" is:

[local:mu3eMSci:S]/>cd Equipment/ArduinoTestStation/Variables

[local:mu3eMSci:S]Variables>ls -l

Key name Type #Val Size Last Opn Mode Value

---------------------------------------------------------------------------

_T_ FLOAT 1 4 1h 0 RWD 20.93

_F_ FLOAT 1 4 1h 0 RWD 12.8

_P_ FLOAT 1 4 1h 0 RWD 56

_S_ FLOAT 1 4 1h 0 RWD 5

_H_ FLOAT 1 4 60h 0 RWD 44.74

_B_ FLOAT 1 4 60h 0 RWD 18.54

_A_ FLOAT 1 4 1h 0 RWD 14.41

_RH_ FLOAT 1 4 1h 0 RWD 41.81

_AT_ FLOAT 1 4 1h 0 RWD 20.46

SP INT16 1 2 1h 0 RWD 10

Many Thanks

Jago |

|

2331

|

08 Feb 2022 |

Konstantin Olchanski | Bug Fix | ODBINC/Sequencer Issue | Please post the output of odbedit "ls -l" for /eq/ar.../variables. (you posted the

variable name as an image, and I cannot cut-and-paste the odb path!). BTW data size 4 is

correct, 4 bytes for INT32/UINT32/FLOAT. For DOUBLE it should be 8. For you it prints 32

and this is wrong, we need to see the output of "ls -l".

K.O. |

|

2330

|

08 Feb 2022 |

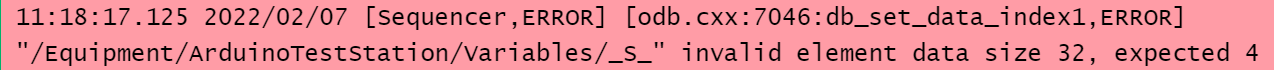

jago aartsen | Bug Fix | ODBINC/Sequencer Issue | Hi all,

I am having some issues getting the ODBINC command to work within the MIDAS

sequencer. I am trying to increment one of the ODB values but it is returning a

mismatch data-type size error (see attached image).

All the ODB variables are MIDAS data-type FLOAT and should all be 32-bit values,

but for some reason MIDAS is thinking they are 4-bit values. I have tried creating

new ODB keys of type INT32, UINT32 and DOUBLE but they all give the same error.

If anybody has any suggestions I would really appreciate some help:)

Thanks

|

| Attachment 1: mslerror.PNG

|

|

|

2329

|

07 Feb 2022 |

Konstantin Olchanski | Forum | MidasWiki moved from ladd00 to daq00.triumf.ca and updated to MediaWiki 1.35 | MidasWiki moved from ladd00 (obsolete SL6) to daq00.triumf.ca (Ubuntu LTS 20.04)

and updated from obsolete MediaWiki LTS 1.27.7 to MediaWiki LTS 1.35, supported

until mid-2023, see https://www.mediawiki.org/wiki/Version_lifecycle

Old URL https://midas.triumf.ca and https://midas.triumf.ca/MidasWiki/...

redirect to new URL https://daq00.triumf.ca/MidasWiki/index.php/Main_Page

All old links and bookmarks should continue to work (via redirect).

To report problems with this MediaWiki instance and to request

any changes in configuration or installed extensions, please reply to this

message here.

K.O. |

|