| ID |

Date |

Author |

Topic |

Subject |

|

2766

|

14 May 2024 |

Konstantin Olchanski | Info | ROOT v6.30.6 requires libtbb-dev | root_v6.30.06.Linux-ubuntu22.04-x86_64-gcc11.4 requires the libtbb-dev package.

This is a new requirement and it is not listed in the ROOT dependencies page (I left a note on the ROOT forum, hopefully it will be

fixed quickly). https://root.cern/install/dependencies/

Symptom is when starting ROOT, you get an error:

cling::DynamicLibraryManager::loadLibrary(): libtbb.so.12: cannot open shared object file: No such file or directory

and things do not work.

Fix is to:

apt install libtbb-dev

K.O. |

|

2767

|

16 May 2024 |

Konstantin Olchanski | Bug Report | midas alarm borked condition evaluation | I am updating the TRIUMF IRIS experiment to the latest version of MIDAS. I see the following error messages in midas.log:

19:06:16.806 2024/05/16 [mhttpd,ERROR] [odb.cxx:6967:db_get_data_index,ERROR] index (29) exceeds array length (20) for key

"/Equipment/Beamline/Variables/Measured"

19:06:15.806 2024/05/16 [mhttpd,ERROR] [odb.cxx:6967:db_get_data_index,ERROR] index (30) exceeds array length (20) for key

"/Equipment/Beamline/Variables/Measured"

The errors are correct, there are only 20 elements in that array. The errors are coming every few seconds, they spam midas.log. How do I fix

them? Where do they come from? There are no additional diagnostics or information to go on.

In the worst case, they come from some custom web page that reads wrong index variables from ODB. mhttpd currently provides no diagnostics to

find out which web page could be causing this.

But maybe it's internal to MIDAS? I save odb to odb.json, "grep Measured odb.json" yields:

iris00:~> grep Measured odb.json

"Condition" : "/Equipment/Beamline/Variables/Measured[29] > 1e-5",

"Condition" : "/Equipment/Beamline/Variables/Measured[30] < 0.5",

So the wrong-index errors are coming from evaluated alarms.

ODB "/Alarms/Alarm system active" is set to "no" (alarm system is disabled), yet the errors keep coming.

ODB "/Alarms/Alarms/TP4/Active" is set to "no" (specific alarm is disabled), yet the errors keep coming.

WTF? (and this is recentish borkage, old IRIS MIDAS had the same wrong index alarms and did not generate these errors).

Breakage:

- where is the error message "evaluated alarm XXX cannot be computed because YYY cannot be read from ODB!"

- disabled alarm should not be computed

- alarm system is disabled, alarms should not be computed

K.O.

P.S. I am filing bug reports here, I cannot be bothered with the 25-factor authentication to access bitbucket. |

|

2769

|

17 May 2024 |

Konstantin Olchanski | Bug Report | midas alarm borked condition evaluation | > This is a common problem I also encountered in the past. You get a low-level ODB access error (could also be a read of a non-existing key) and you

> have no idea where this comes from. Could be the alarm system, a mhttpd web page, even some user code in a front-end over which the midas library

> has no control.

committed a partial fix, added an error message in alarm condition evaluation code to report alarm name and odb paths when we cannot get something from

ODB. Now at least midas.log gives some clue that ODB errors are coming from alarms.
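The kind of diagnostic described above can be sketched as follows. This is not the code that was committed to MIDAS; `check_alarm_index` is a hypothetical standalone helper that takes the alarm name, the condition string and the array length as plain arguments (no ODB access), and reports an out-of-range index together with the alarm name and ODB path.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical helper, not part of MIDAS: looks for "path[index]" at the start
// of an alarm condition and returns a message naming the alarm if the index
// is outside an array of array_length elements (valid indices are 0..length-1).
// Returns an empty string if the index is valid or no numeric index is found.
std::string check_alarm_index(const std::string& alarm_name,
                              const std::string& condition,
                              std::size_t array_length)
{
   assert(!alarm_name.empty());
   const std::size_t open = condition.find('[');
   if (open == std::string::npos)
      return "";
   const std::size_t close = condition.find(']', open);
   if (close == std::string::npos || close <= open + 1)
      return "";
   const std::string path = condition.substr(0, open);
   const std::string digits = condition.substr(open + 1, close - open - 1);
   if (digits.find_first_not_of("0123456789") != std::string::npos)
      return "";
   const unsigned long index = std::stoul(digits);
   if (index < array_length)
      return "";
   return "alarm \"" + alarm_name + "\": index " + std::to_string(index) +
          " exceeds array length " + std::to_string(array_length) +
          " for \"" + path + "\"";
}
```

With the condition strings from the grep output and an array of 20 elements, the helper would name the alarm and the ODB path in one line instead of leaving only the low-level odb.cxx message.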

and the errors are actually coming from the alarms web page.

the alarms web page shows all the alarms even if alarms are disabled and it shows evaluated alarm conditions and current values even for alarms that

are disabled.

I could change it to show "disabled" for disabled alarms, but I think it would not be an improvement,

right now it is quite convenient to see the alarm values for disabled/inactive alarms,

it is easy to see if they will immediately trip if I enable them. Hiding the values would make

them blind.

And I think I know what caused the original problem in IRIS experiment, I think the list of EPICS variables got truncated from 30 to 20 and EPICS

values 29 and 30 used in the alarm conditions have become lost.

So the next step is to fix feepics to not truncate the list of variables (right now it is hardwired to 20 variables) and restore

the lost variable definition from a saved odb dump.

K.O.

>

> One option would be to add a complete stack dump to each of these error (ROOT does something like that), but I hear already people shouting "my

> midas.log is flooded with stack dumps!". So what I do in this case is I run a midas program in the debugger and set a breakpoint (in your case at

> odb.cxx:6967). If the breakpoint triggers, I inspect the stack and find out where this comes from.

>

> Note that I print a stack dump for such error in the odbxx API. This goes to stdout, not the midas log, and it helped me in the past. Unfortunately

> stack dumps work only under linux (not MacOSX), and they do not contain all information a debugger can show you.

>

> It is not true that alarm conditions are evaluated when the alarm system is off. I just tried and it works fine. The code is here:

>

> alarm.cxx:591

>

> /* check global alarm flag */

> flag = TRUE;

> size = sizeof(flag);

> db_get_value(hDB, 0, "/Alarms/Alarm system active", &flag, &size, TID_BOOL, TRUE);

> if (!flag)

> return AL_SUCCESS;

>

> so no idea why you see this error if you correctly set "Alarm system active" to false.

>

> Stefan |

|

2770

|

17 May 2024 |

Konstantin Olchanski | Bug Report | odbedit load into the wrong place | Trying to restore IRIS ODB was a nasty surprise, old save files are in .odb format and odbedit "load xxx.odb"

does an unexpected thing.

mkdir tmp

cd tmp

load odb.xml loads odb.xml into the current directory "tmp"

load odb.json same thing

load odb.odb loads into "/" unexpectedly overwriting everything in my ODB with old data

this makes it impossible for me to restore just /equipment/beamline from old .odb save file (without

overwriting all of my odb with old data).

I look inside db_paste() and it looks like this is intentional, if ODB path names in the odb save file start

with "/" (and they do), instead of loading into the current directory it loads into "/", overwriting existing

data.

The fix would be to ignore the leading "/", always restore into the current directory. This will make odbedit

load consistent between all 3 odb save file formats.
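A minimal sketch of the proposed path handling, assuming ODB paths are plain '/'-separated strings. `resolve_load_path` is a hypothetical helper written for illustration; it is not the code inside db_paste().

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical helper: joins the current ODB directory with a path read from
// a save file. Leading '/' characters in the saved path are ignored, so the
// data always lands below the current directory.
std::string resolve_load_path(const std::string& current_dir,
                              const std::string& saved_path)
{
   assert(!current_dir.empty() && current_dir[0] == '/');
   const std::size_t first = saved_path.find_first_not_of('/');
   const std::string relative =
      (first == std::string::npos) ? "" : saved_path.substr(first);
   std::string base = current_dir;
   while (base.size() > 1 && base.back() == '/')
      base.pop_back(); // drop trailing slashes, but keep the root "/"
   if (relative.empty())
      return base;
   if (base == "/")
      return "/" + relative;
   return base + "/" + relative;
}
```

Under this rule, loading from "/" behaves as before, while loading from "/tmp" places "/Equipment/Beamline" at "/tmp/Equipment/Beamline".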

Should I apply this change?

K.O. |

|

2771

|

17 May 2024 |

Konstantin Olchanski | Bug Report | midas alarm borked condition evaluation | >

> And I think I know what caused the original problem in IRIS experiment, I think the list of EPICS variables got truncated from 30 to 20 and EPICS

> values 29 and 30 used in the alarm conditions have become lost.

>

> So the next step is to fix feepics to not truncate the list of variables (right now it is hardwired to 20 variables) and restore

> the lost variable definition from a saved odb dump.

for the record, I restored the old ODB settings from feepics, my EPICS variables now have the correct size and the alarm now works correctly.

I also updated the example feepics to read the number of EPICS variables from ODB instead of always truncating them to 20 (IRIS MIDAS had a local change

setting number of variables to 40).

I think I will make no more changes to the alarms, leave well enough alone.

K.O. |

|

2780

|

24 May 2024 |

Konstantin Olchanski | Info | added ubuntu 22 arm64 cross-compilation | Ubuntu 22 has almost everything necessary to cross-build arm64 MIDAS frontends:

# apt install g++-12-aarch64-linux-gnu gcc-12-aarch64-linux-gnu-base libstdc++-12-dev-arm64-cross

$ aarch64-linux-gnu-gcc-12 -o ttcp.aarch64 ttcp.c -static

to cross-build MIDAS:

make arm64_remoteonly -j

run programs from $MIDASSYS/linux-arm64-remoteonly/bin

link frontends to libraries in $MIDASSYS/linux-arm64-remoteonly/lib

Ubuntu 22 does not provide an arm64 libz.a; as a workaround, I build a fake one (we do not have HAVE_ZLIB anymore...). Or you

can link to libz.a from your arm64 linux image, assuming the include/zlib.h files are compatible.

K.O. |

|

2781

|

29 May 2024 |

Konstantin Olchanski | Info | MIDAS RPC add support for std::string and std::vector<char> | This is moving slowly. I now have RPC caller side support for std::string and

std::vector<char>. RPC server side is next. K.O. |

|

2782

|

02 Jun 2024 |

Konstantin Olchanski | Info | MIDAS RPC data format | > MIDAS RPC data format.

> 3) RPC reply

> 3.1) header:

> 3.2) followed by data for RPC_OUT parameters:

>

> data sizes and encodings are the same as for RPC_IN parameters.

Correction:

RPC_VARARRAY data encoding for data returned by RPC is different from data sent to RPC:

4 bytes of arg_size (before 8-byte alignment); for data sent to RPC, it's 4 bytes of param_size (after 8-byte alignment)

4 bytes of padding

param_size of data
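The reply layout listed above can be illustrated with a small standalone encoder. This is a sketch under assumptions, not the MIDAS RPC code: `encode_vararray_reply` and `align8` are hypothetical names, the size is written in host byte order, and param_size is taken to be arg_size rounded up to a multiple of 8.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Rounds n up to the next multiple of 8.
constexpr std::uint32_t align8(std::uint32_t n) { return (n + 7u) & ~7u; }

// Hypothetical encoder for one RPC_VARARRAY value in a reply:
//   4 bytes  arg_size (the unaligned payload size)
//   4 bytes  padding
//   param_size bytes of data, where param_size = align8(arg_size)
std::vector<unsigned char> encode_vararray_reply(const void* data,
                                                 std::uint32_t arg_size)
{
   assert(data != nullptr || arg_size == 0);
   const std::uint32_t param_size = align8(arg_size);
   std::vector<unsigned char> out(8 + param_size, 0); // zero-filled padding
   std::memcpy(out.data(), &arg_size, sizeof(arg_size)); // host byte order
   if (arg_size > 0)
      std::memcpy(out.data() + 8, data, arg_size);
   return out;
}
```

A 5-byte payload would occupy 16 bytes on the wire in this sketch: 4 bytes of size, 4 bytes of padding, then 8 bytes holding the 5 data bytes plus 3 zero bytes.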

K.O.

P.S. bug/discrepancy caught by GCC/LLVM address sanitizer. |

|

1833

|

14 Feb 2020 |

Konrad Briggl | Forum | Writing Midas Events via FPGAs | Hello Stefan,

is there a difference for the later data processing (after writing the ring buffer blocks)

if we write single events or multiple in one rb_get_wp - memcopy - rb_increment_wp cycle?

Both Marius and I have seen some inconsistencies in the number of events produced that is reported on the status page when writing multiple events in one go,

so I was wondering if this is due to us treating the buffer badly or the way midas handles the events after that.

Given that we produce the full event in our (FPGA) domain, an option would be to always copy one event from the dma to the midas-system buffer in a loop.

The question is if there is a difference (for midas) between

[pseudo code, much simplified]

while(dma_read_index < last_dma_write_index){

if(rb_get_wp(pdata)!=SUCCESS){

dma_read_index+=event_size;

continue;

}

copy_n(dma_buffer, pdata, event_size);

rb_increment_wp(event_size);

dma_read_index+=event_size;

}

and

while(dma_read_index < last_dma_write_index){

if(rb_get_wp(pdata)!=SUCCESS){

...

};

total_size=max_n_events_that_fit_in_rb_block();

copy_n(dma_buffer, pdata, total_size);

rb_increment_wp(total_size);

dma_read_index+=total_size;

}

Cheers,

Konrad
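For the one-event-per-cycle variant, the event boundaries inside a DMA block have to be found first. The sketch below assumes the layout quoted further down in this thread: a 4-word (16-byte) event header whose fourth word holds the size in bytes of everything after the header. `split_events` and `EventSpan` are hypothetical names, and the buffer is taken as a plain (non-volatile) pointer for simplicity.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Position and total size (header included) of one event in the block.
struct EventSpan {
   std::size_t offset_words;
   std::size_t size_bytes;
};

// Walks a block of 32-bit words and returns one span per complete event.
// Stops at the first event that is incomplete or larger than max_event_size,
// so the caller never copies more than the ring buffer was created for.
std::vector<EventSpan> split_events(const std::uint32_t* buf,
                                    std::size_t nwords,
                                    std::size_t max_event_size)
{
   assert(buf != nullptr || nwords == 0);
   constexpr std::size_t header_words = 4;
   std::vector<EventSpan> spans;
   std::size_t pos = 0;
   while (pos + header_words <= nwords) {
      const std::size_t data_bytes = buf[pos + 3]; // size after the header
      const std::size_t total_bytes = header_words * 4 + data_bytes;
      const std::size_t total_words = (total_bytes + 3) / 4;
      if (total_bytes > max_event_size || total_words > nwords - pos)
         break;
      spans.push_back({pos, total_bytes});
      pos += total_words;
   }
   return spans;
}
```

Each returned span could then go through its own rb_get_wp, copy, rb_increment_wp cycle, which keeps every increment at or below the maximum event size given to rb_create().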

> The rb_xxx function are (thoroughly tested!) robust against high data rate given that you use them as intended:

>

> 1) Once you create the ring buffer via rb_create(), specify the maximum event size (overall event size, not bank size!). Later there is no protection any more, so if you obtain pdata from rb_get_wp, you can of course write 4GB to pdata, overwriting everything in your memory, causing a total crash. It's your responsibility to not write more bytes into pdata than

> what you specified as max event size in rb_create()

>

> 2) Once you obtain a write pointer to the ring buffer via rb_get_wp, this function might fail when the receiving side reads data slower than the producing side, simply because the buffer is full. In that case the producing side has to wait until space is freed up in the buffer by the receiving side. If your call to rb_get_wp returns DB_TIMEOUT, it means that the

> function did not obtain enough free space for the next event. In that case you have to wait (like ss_sleep(10)) and try again, until you succeed. Only when rb_get_wp() returns DB_SUCCESS, you are allowed to write into pdata, up to the maximum event size specified in rb_create of course. I don't see this behaviour in your code. You would need something

> like

>

> do {

> status = rb_get_wp(rbh, (void **)&pdata, 10);

> if (status == DB_TIMEOUT)

> ss_sleep(10);

> } while (status == DB_TIMEOUT);

>

> Best,

> Stefan

>

>

> > Dear all,

> >

> > we are creating Midas events directly inside an FPGA and send them off via DMA into the PC RAM. For reading out this RAM via Midas the FPGA sends us a pointer to where it has written the last 4kB of data. We use this pointer to tell the ring buffer of midas where the new events are. The buffer looks something like:

> >

> > // event 1

> > dma_buf[0] = 0x00000001; // Trigger and Event ID

> > dma_buf[1] = 0x00000001; // Serial number

> > dma_buf[2] = TIME; // time

> > dma_buf[3] = 18*4-4*4; // event size

> > dma_buf[4] = 18*4-6*4; // all bank size

> > dma_buf[5] = 0x11; // flags

> > // bank 0

> > dma_buf[6] = 0x46454230; // bank name

> > dma_buf[7] = 0x6; // bank type TID_DWORD

> > dma_buf[8] = 0x3*4; // data size

> > dma_buf[9] = 0xAFFEAFFE; // data

> > dma_buf[10] = 0xAFFEAFFE; // data

> > dma_buf[11] = 0xAFFEAFFE; // data

> > // bank 1

> > dma_buf[12] = 0x46454231; // bank name

> > dma_buf[13] = 0x6; // bank type TID_DWORD

> > dma_buf[14] = 0x3*4; // data size

> > dma_buf[15] = 0xAFFEAFFE; // data

> > dma_buf[16] = 0xAFFEAFFE; // data

> > dma_buf[17] = 0xAFFEAFFE; // data

> >

> > // event 2

> > .....

> >

> > dma_buf[fpga_pointer] = 0xXXXXXXXX;

> >

> >

> > And we do something like:

> >

> > while (true) {

> > // obtain buffer space

> > status = rb_get_wp(rbh, (void **)&pdata, 10);

> > fpga_pointer = fpga.read_last_data_add();

> >

> > wlen = fpga_pointer - last_fpga_pointer; // in 32 bit words

> > copy_n(&dma_buf[last_fpga_pointer], wlen, pdata);

> > rb_status = rb_increment_wp(rbh, wlen * 4); // in bytes

> >

> > last_fpga_pointer = fpga_pointer;

> > }

> >

> > Leaving out the case where dma_buf wraps around, this works fine for a small data rate. But if we increase the rate, the fpga_pointer also increases really fast and wlen gets quite big. Actually it gets bigger than max_event_size, which is checked in rb_increment_wp, leading to an error.

> >

> > The problem is that no single event is actually too big; rather, we have multiple events in the buffer which are read by midas in one step. So we think that in this case rb_increment_wp is comparing the wrong thing. Also, increasing max_event_size does not help.

> >

> > Remark: dma_buf is volatile so memcpy is not possible here.

> >

> > Cheers,

> > Marius |

|

357

|

02 Mar 2007 |

Kevin Lynch | Forum | event builder scalability | > Hi there:

> I have a question if there's anybody out there running MIDAS with event builder

> that assembles events from more that just a few front ends (say on the order of

> 0x10 or more)?

> Any experiences with scalability?

>

> Cheers

> Piotr

Mulan (which you hopefully remember with great fondness :-) is currently running

around ten frontends, six of which produce data at any rate. If I'm remembering

correctly, the event builder handles about 30-40MB/s. You could probably ping Tim

Gorringe or his current postdoc Volodya Tishenko (tishenko@pa.uky.edu) if you want

more details. Volodya solved a significant number of throughput related

bottlenecks in the year leading up to our 2006 run. |

|

1225

|

15 Dec 2016 |

Kevin Giovanetti | Bug Report | midas.h error | I am creating a frontend on macOS Sierra.

I include the midas.h file, and when compiling with Xcode I get an error based on

this entry in midas.h:

#if !defined(OS_IRIX) && !defined(OS_VMS) && !defined(OS_MSDOS) && !defined(OS_UNIX) && !defined(OS_VXWORKS) && !defined(OS_WINNT)

#error MIDAS cannot be used on this operating system

#endif
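The guard above fires when none of the listed OS_* macros is defined at the point where midas.h is included. Whether the regular MIDAS build supplies such a define on macOS while the Xcode project does not is an assumption; nothing in this report confirms it. The sketch below only illustrates the general pattern of deriving an OS family macro from compiler-predefined macros. All DEMO_* names are hypothetical and do not exist in midas.h.

```cpp
#include <cassert>
#include <cstring>

// DEMO_* macros are hypothetical and exist only in this sketch.
// __APPLE__, __linux__, __unix__ and _WIN32 are predefined by the compilers
// on the respective platforms.
#if defined(__APPLE__) || defined(__linux__) || defined(__unix__)
#define DEMO_OS_UNIX 1
#elif defined(_WIN32)
#define DEMO_OS_WINNT 1
#endif

// Returns the OS family selected by the macros above.
const char* demo_os_family()
{
#if defined(DEMO_OS_UNIX)
   return "unix";
#elif defined(DEMO_OS_WINNT)
   return "winnt";
#else
   return "unknown"; // a header could use #error here instead
#endif
}

// True if one of the known OS families was detected at compile time.
bool demo_os_supported()
{
   const char* family = demo_os_family();
   assert(family != nullptr);
   return std::strcmp(family, "unknown") != 0;
}
```

If the cause is indeed a missing define, adding the corresponding preprocessor definition to the Xcode build settings would be the analogous fix; that, too, is a guess rather than a confirmed solution.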

Perhaps I should not use Xcode?

Perhaps I won't need Midas.h?

The MIDAS system is running on my Mac, but I need to add a very simple frontend

for testing, and I encountered this error. |

|

1404

|

30 Oct 2018 |

Joseph McKenna | Bug Report | Side panel auto-expands when history page updates |

One can collapse the side panel when looking at history pages with the button in

the top left, great! We want to see many pages, so screen real estate is important.

The issue we face is that when the page refreshes, the side panel expands. Can

we make the panel state more 'sticky'?

Many thanks

Joseph (ALPHA)

Version: 2.1

Revision: Mon Mar 19 18:15:51 2018 -0700 - midas-2017-07-c-197-g61fbcd43-dirty

on branch feature/midas-2017-10 |

|

1406

|

31 Oct 2018 |

Joseph McKenna | Bug Report | Side panel auto-expands when history page updates | > >

> >

> > One can collapse the side panel when looking at history pages with the button in

> > the top left, great! We want to see many pages so screen real estate is important

> >

> > The issue we face is that when the page refreshes, the side panel expands. Can

> > we make the panel state more 'sticky'?

> >

> > Many thanks

> > Joseph (ALPHA)

> >

> > Version: 2.1

> > Revision: Mon Mar 19 18:15:51 2018 -0700 - midas-2017-07-c-197-g61fbcd43-dirty

> > on branch feature/midas-2017-10

>

> Hi Joseph,

>

> In principle a page refresh should now not be necessary, since pages should reload automatically

> the contents which changes. If a custom page needs a reload, it is not well designed. If necessary, I

> can explain the details.

>

> Anyhow I implemented your "stickyness" of the side panel in the last commit to the develop branch.

>

> Best regards,

> Stefan

Hi Stefan,

I apologise for misusing the word refresh. The re-appearing sidebar was also seen with the automatic

reload. I have implemented your fix here and it now works great!

Thank you very much!

Joseph |

|

Draft

|

14 Oct 2019 |

Joseph McKenna | Forum | tmfe.cxx - Future frontend design | Hi,

I have been looking at the 2019 workshop slides, I am interested in the C++ future of MIDAS.

I am quite interested in using the object oriented

ALPHA will start data taking in 2021 |

|

1727

|

18 Oct 2019 |

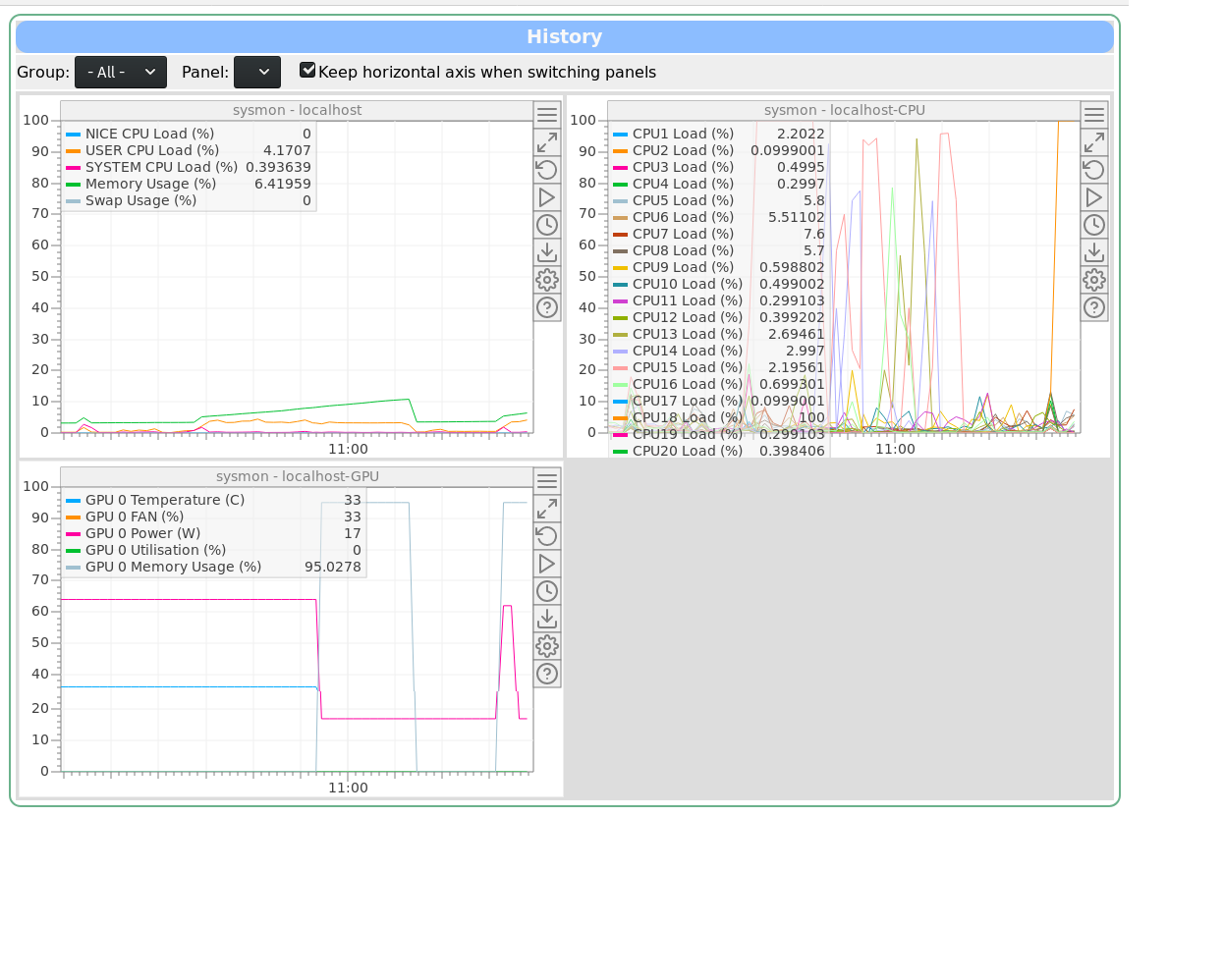

Joseph McKenna | Info | sysmon: New system monitor and performance logging frontend added to MIDAS |

I have written a system monitor tool for MIDAS, that has been merged in the develop branch today: sysmon

https://bitbucket.org/tmidas/midas/pull-requests/8/system-monitoring-a-new-frontend-to-log/diff

To use it, simply run the new program

sysmon

on any host that you want to monitor, no configuring required.

The program is a frontend for MIDAS, there is no need for configuration, as upon initialisation it builds a history display for you. Simply run one instance per machine you want to monitor. By default, it only logs once per 10 seconds.

The equipment name is derived from the hostname, so multiple instances can be run across multiple machines without conflict. A new history display will be created for each host.

sysmon uses the /proc pseudo-filesystem, so unfortunately only linux is supported. It does however work with multiple architectures, so x86 and ARM processors are supported.
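As an illustration of reading from /proc, the sketch below parses the aggregate "cpu" line of /proc/stat and derives a load fraction from two samples. It is not taken from sysmon; `CpuTimes`, `parse_cpu_line` and `cpu_load` are hypothetical names, and the line is passed in as a string so the example does not depend on the host.

```cpp
#include <cassert>
#include <cstdint>
#include <sstream>
#include <string>

// Accumulated busy and idle time, in the tick units used by /proc/stat.
struct CpuTimes {
   std::uint64_t busy = 0;
   std::uint64_t idle = 0;
};

// Parses the aggregate "cpu ..." line. Fields are read in the order
// user nice system idle iowait irq softirq steal; idle and iowait count
// as idle, the rest as busy. Any later fields are not added.
bool parse_cpu_line(const std::string& line, CpuTimes& out)
{
   std::istringstream in(line);
   std::string label;
   if (!(in >> label) || label != "cpu")
      return false;
   CpuTimes t;
   std::uint64_t value = 0;
   int field = 0;
   while (field < 8 && in >> value) {
      if (field == 3 || field == 4)
         t.idle += value;
      else
         t.busy += value;
      ++field;
   }
   if (field < 4)
      return false; // need at least user, nice, system, idle
   out = t;
   return true;
}

// Fraction of time spent busy between two samples, in the range 0..1.
double cpu_load(const CpuTimes& before, const CpuTimes& after)
{
   assert(after.busy >= before.busy && after.idle >= before.idle);
   const std::uint64_t busy = after.busy - before.busy;
   const std::uint64_t total = busy + (after.idle - before.idle);
   return total == 0 ? 0.0 : static_cast<double>(busy) / static_cast<double>(total);
}
```

Sampling the line once per logging period and passing consecutive samples to `cpu_load` would give one load value per period.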

If the build machine has NVIDIA drivers installed, there is an additional version of sysmon that gets built: sysmon-nvidia. This will log the GPU temperature and usage, as well as CPU, memory and swap. A host should only run either sysmon or sysmon-nvidia

elog:1727/1 shows the History Display generated by sysmon-nvidia. sysmon would only generate the first two displays (sysmon/localhost and sysmon/localhost-CPU) |

| Attachment 1: sysmon-gpu.png

|

|

|

1746

|

03 Dec 2019 |

Joseph McKenna | Info | mfe.c: MIDAS frontend's 'Equipment name' can embed hostname, determined at run-time | A little-advertised feature of the modifications needed to support the msysmon program is

that MIDAS equipment names can support the injecting of the hostname of the system

running the frontend at runtime (register_equipment(void)).

https://midas.triumf.ca/MidasWiki/index.php/Equipment_List_Parameters#Equipment_Name

A special string ${HOSTNAME} can be put in any position in the equipment name. It will

be replaced with the hostname of the computer running the frontend at run-time. Note,

the frontend_name string will be trimmed down to 32 characters.
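The substitution can be pictured with a small standalone function. This is a sketch, not the code in mfe.c: `expand_hostname` is a hypothetical name, the hostname is passed in as an argument rather than queried from the system, and the 32-character limit is taken from the text above.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Replaces the first "${HOSTNAME}" in name with hostname, then trims the
// result to at most max_len characters.
std::string expand_hostname(std::string name,
                            const std::string& hostname,
                            std::size_t max_len = 32)
{
   assert(max_len > 0);
   const std::string token = "${HOSTNAME}";
   const std::size_t pos = name.find(token);
   if (pos != std::string::npos)
      name.replace(pos, token.size(), hostname);
   if (name.size() > max_len)
      name.resize(max_len);
   return name;
}
```

For a host called "alphadaq" (an invented name), "${HOSTNAME}_msysmon" would become "alphadaq_msysmon". A long hostname can push the suffix past the limit, so short equipment names are safer.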

Example usage: msysmon

EQUIPMENT equipment[] = {

{ "${HOSTNAME}_msysmon", /* equipment name */ {

EVID_MONITOR, 0, /* event ID, trigger mask */

"SYSTEM", /* event buffer */

EQ_PERIODIC, /* equipment type */

0, /* event source */

"MIDAS", /* format */

TRUE, /* enabled */

RO_ALWAYS, /* read always */

10000, /* read period in milliseconds */

0, /* stop run after this event limit */

0, /* number of sub events */

1, /* history period */

"", "", ""

},

read_system_load,/* readout routine */

},

{ "" }

}; |

|

1891

|

01 May 2020 |

Joseph McKenna | Forum | Taking MIDAS beyond 64 clients |

Hi all,

I have been experimenting with a frontend solution for my experiment

(ALPHA). The intention is to replace how we log data from PCs running LabVIEW.

I am at the proof of concept stage. So far I have some promising

performance, able to handle 10-100x more data in my test setup (current

limitations now are just network bandwidth, MIDAS is impressively efficient).

==========================================================================

Our experiment has many PCs using LabVIEW which all log to MIDAS, the

experiment has grown such that we need some sort of load balancing in our

frontend.

The concept was to have a 'supervisor frontend' and an array of 'worker

frontend' processes.

-A LabVIEW client would connect to the supervisor, then be referred to a

worker frontend for data logging.

-The supervisor could start a 'worker frontend' process as the demand

required.

To increase accountability within the experiment, I intend to have a 'worker

frontend' per PC connecting. Then any rogue behavior would be clear from the

MIDAS frontpage.

Presently there are around 20-30 of these LabVIEW PCs, but given how the group

is growing, I want to be sure that my data logging solution will be viable

for the next 5-10 years. With the increased use of single board computers, I

chose the target of benchmarking up to 1000 worker frontends... but I quickly

hit the '64 MAX CLIENTS' and '64 RPC CONNECTION' limit. Ok...

branching and updating these limits:

https://bitbucket.org/tmidas/midas/branch/experimental-beyond_64_clients

I have two commits.

1. update the memory layout assertions and use MAX_CLIENTS as a variable

https://bitbucket.org/tmidas/midas/commits/302ce33c77860825730ce48849cb810cf366df96?at=experimental-beyond_64_clients

2. Change the MAX_CLIENTS and MAX_RPC_CONNECTION

https://bitbucket.org/tmidas/midas/commits/f15642eea16102636b4a15c84113309696ce3df1?at=experimental-beyond_64_clients

Unintended side effects:

I break compatibility of existing ODB files... the database layout has

changed and I read my old ODB as corrupt. In my test setup I can start from

scratch but this would be horrible for any existing experiment.

Edit: I noticed 'make testdiff' pipeline is failing... also fails locally...

investigating

Early performance results:

In early tests, ~700 PCs logging 10 unique arrays of 10 doubles into

Equipment variables in the ODB seems to perform well... All transactions

from client PCs are finished within a couple of ms or less

==========================================================================

Questions:

Does the community here have strong opinions about increasing the

MAX_CLIENTS and MAX_RPC_CONNECTION limits?

Am I looking at this problem in a naive way?

Potential solutions other than increasing the MAX_CLIENTS limit:

-Make worker threads inside the supervisor (not a separate process), I am

using TMFE, so I can dynamically create equipment. I have not yet taken a

deep dive into how any multithreading is implemented

-One could have a round robin system to load balance between a limited pool

of 'worker frontend' processes. I don't like this solution as I want to

be able to clearly see which client PCs have been set up to log too much data
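The round-robin option from the list above can be sketched in a few lines. `WorkerPool` is a hypothetical class for illustration only; it is not part of MIDAS or TMFE, and "worker" here is just an index that could stand for a process or a thread.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>

// Assigns clients to a fixed number of workers in round-robin order.
// A client that asks again is referred to the worker it already has.
class WorkerPool {
public:
   explicit WorkerPool(std::size_t n_workers) : n_workers_(n_workers)
   {
      assert(n_workers > 0);
   }

   // Returns the worker index for the given client name.
   std::size_t assign(const std::string& client)
   {
      const auto it = assigned_.find(client);
      if (it != assigned_.end())
         return it->second;
      const std::size_t worker = next_;
      next_ = (next_ + 1) % n_workers_;
      assigned_[client] = worker;
      return worker;
   }

private:
   std::size_t n_workers_;
   std::size_t next_ = 0;
   std::map<std::string, std::size_t> assigned_;
};
```

The sketch also shows the drawback named above: several clients share one worker, so a client that logs too much data is no longer visible as a separate entry.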

========================================================================== |

|

1895

|

02 May 2020 |

Joseph McKenna | Forum | Taking MIDAS beyond 64 clients |

Thank you very much for feedback.

I am satisfied with not changing the 64 client limit. I will look at re-writing my frontend to spawn threads rather than

processes. The load of my frontend is low, so I do not anticipate issues with a threaded implementation.

In this threaded scenario, it will be a reasonable amount of time until ALPHA bumps into the 64 client limit.

If it avoids confusion, I am happy for my experimental branch 'experimental-beyond_64_clients' to be deleted.

Perhaps an item for future discussion would be for the odbinit program to be able to 'upgrade' the ODB and enable some backwards

compatibility.

Thanks again

Joseph |

|

2015

|

19 Nov 2020 |

Joseph McKenna | Forum | History plot consuming too much memory |

A user reported an issue that if they were to plot some history data from

2019 (a range of one day), the plot would spend ~4 minutes loading then

crash the browser tab. This seems to affect chrome (under default settings)

and not firefox.

I can reproduce the issue, "Data Being Loaded" shows, then the page and

canvas loads, then all variables get a correct "last data" timestamp, then

the 'Updating data ...' status shows... then the tab crashes (chrome)

It seems that the browser is loading all data until the present day (maybe 4

Gb of data in this case). In chrome the tab then crashes. In firefox, I do

not suffer the same crash, but I can see the single tab is using ~3.5 Gb of

RAM

Tested with midas-2020-08-a up until the HEAD of develop

I could propose the user use firefox, or increase the memory limit in

chrome, however are there plans to limit the data loaded when specifically

plotting between two dates? |

|

2017

|

20 Nov 2020 |

Joseph McKenna | Forum | History plot consuming too much memory | Poking at the behavior of this, it's fairly clear the slow response is from the data

being loaded off an HDD, when we upgrade this system we will allocate enough SSD

storage for the histories.

Using Firefox has resolved this issue for the user's project here

Taking this down a tangent, I have a mild concern that a user could temporarily

flood our gigabit network if we do have faster disks to read the history data. Have

there been any plans or thoughts on limiting the bandwidth users can pull from

mhttpd? I do not see this as a critical item as I can plan the future network

infrastructure at the same time as the next system upgrade (putting critical data

taking traffic on a separate physical network).

> Of course one can only

> load that specific window, but when the user then scrolls right, one has to

> append new data to the "right side" of the array stored in the browser. If the

> user jumps to another location, then the browser has to keep track of which

> windows are loaded and which windows not, making the history code much more

> complicated. Therefore I'm only willing to spend a few days of solid work

> if this really becomes a problem.
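The bookkeeping described in the quoted reply, tracking which time windows are already loaded, comes down to interval arithmetic. The sketch below is written in C++ for consistency with the rest of this page, although the history plot code runs in the browser. `Window` and `missing_windows` are hypothetical names, and the list of loaded windows is assumed to be sorted by start time and non-overlapping.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <utility>
#include <vector>

// Half-open time window [start, end), e.g. in Unix seconds.
using Window = std::pair<std::int64_t, std::int64_t>;

// Returns the parts of request that are not covered by any loaded window.
// loaded must be sorted by start time and must not overlap.
std::vector<Window> missing_windows(const std::vector<Window>& loaded,
                                    Window request)
{
   assert(request.first <= request.second);
   std::vector<Window> gaps;
   std::int64_t cursor = request.first; // everything before cursor is handled
   for (const Window& w : loaded) {
      if (w.second <= cursor)
         continue; // entirely before the region of interest
      if (w.first >= request.second)
         break; // entirely after the request
      if (w.first > cursor)
         gaps.push_back({cursor, w.first});
      cursor = std::max(cursor, w.second);
      if (cursor >= request.second)
         break;
   }
   if (cursor < request.second)
      gaps.push_back({cursor, request.second});
   return gaps;
}
```

Only the returned gaps would need to be fetched when the user scrolls or jumps. Merging the fetched data back into the stored arrays is the part that adds the complexity mentioned above and is not covered here.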

For now the user here has retrieved all the data they need, and I can direct others

towards mhist in the near future. Being able to load just a specific window would be

very useful in the future, but I comprehend how it would be a spike in complexity. |

|