| ID | Date | Author | Topic | Subject |

|

2074

|

13 Jan 2021 |

Stefan Ritt | Forum | poll_event() is very slow. | Something must be wrong on your side. If you take the example frontend under

midas/examples/experiment/frontend.cxx

and let it run to produce dummy events, you get about 90 Hz. This is because we have a

ss_sleep(10);

in the read_trigger_event() routine to throttle things down. If you remove that sleep,

you get an event rate of about 500'000 Hz. So the framework is really quick.
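
The effect of that 10 ms sleep can be reproduced outside MIDAS. The following is a minimal sketch, not the frontend code itself: count_events() is a hypothetical helper, and std::this_thread::sleep_for stands in for ss_sleep(). With a 10 ms sleep per event the loop cannot exceed 100 events per second, which is in line with the ~90 Hz quoted above.

```cpp
#include <cassert>
#include <chrono>
#include <thread>

// Count how many dummy "events" a loop produces within `window`,
// sleeping `sleep_ms` milliseconds per event (0 means no throttle).
// Hypothetical helper for illustration; not part of MIDAS.
static long count_events(int sleep_ms, std::chrono::milliseconds window)
{
   assert(sleep_ms >= 0);
   using clock = std::chrono::steady_clock;
   const auto end = clock::now() + window;
   long n = 0;
   while (clock::now() < end) {
      if (sleep_ms > 0)
         std::this_thread::sleep_for(std::chrono::milliseconds(sleep_ms));
      n++;   // one dummy event "read out"
   }
   return n;
}
```

Calling count_events(10, std::chrono::milliseconds(1000)) should return at most 100, while count_events(0, ...) is limited only by the loop overhead.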

Probably your routine which looks for a 'lam' takes really long and should be fixed.

Stefan |

|

2075

|

14 Jan 2021 |

Pintaudi Giorgio | Forum | poll_event() is very slow. | > Something must be wrong on your side. If you take the example frontend under

>

> midas/examples/experiment/frontend.cxx

>

> and let it run to produce dummy events, you get about 90 Hz. This is because we have a

>

> ss_sleep(10);

>

> in the read_trigger_event() routine to throttle things down. If you remove that sleep,

> you get an event rate of about 500'000 Hz. So the framework is really quick.

>

> Probably your routine which looks for a 'lam' takes really long and should be fixed.

>

> Stefan

Sorry if I am going off-topic but, because the ss_sleep function was mentioned here, I

would like to take the chance and report an issue that I am having.

In all my slow control frontends, the CPU usage for each frontend is close to 100%. This

means that each frontend is monopolizing a single core. When I did some profiling, I

noticed that 99% of the time is spent inside the ss_sleep function. Now, I would expect

that the ss_sleep function should not require any CPU usage at all or very little.

So my two questions are:

Is this a bug or a feature?

Would you be able to check/reproduce this behavior or do you need additional info from my

side? |

|

2076

|

14 Jan 2021 |

Isaac Labrie Boulay | Forum | poll_event() is very slow. | > Something must be wrong on your side. If you take the example frontend under

>

> midas/examples/experiment/frontend.cxx

>

> and let it run to produce dummy events, you get about 90 Hz. This is because we have a

>

> ss_sleep(10);

>

> in the read_trigger_event() routine to throttle things down. If you remove that sleep,

> you get an event rate of about 500'000 Hz. So the framework is really quick.

>

> Probably your routine which looks for a 'lam' takes really long and should be fixed.

>

> Stefan

Hi Stefan,

I should mention that I was using midas/examples/Triumf/c++/fevme.cxx. I was trying to see

the max speed so I had the 'lam' always = 1 with nothing else to add overhead in the

poll_event(). I was getting <200 Hz. I am assuming that this is a bug. There is no

ss_sleep() in that function.

Thanks for your quick response!

Isaac |

|

2077

|

15 Jan 2021 |

Isaac Labrie Boulay | Forum | poll_event() is very slow. | > >

> > I'm currently trying to see if I can speed up polling in a frontend I'm testing.

> > Currently it seems like I can't get 'lam's to happen faster than 120 times/second.

> > There must be a way to make this faster. From what I understand, changing the poll

> > time (500ms by default) won't affect the frequency of polling just the 'lam'

> > period.

> >

> > Any suggestions?

> >

>

> You could switch from the traditional midas mfe.c frontend to the C++ TMFE frontend,

> where all this "lam" and "poll" business is removed.

>

> At the moment, there are two example programs using the C++ TMFE frontend,

> single threaded (progs/fetest_tmfe.cxx) and multithreaded (progs/fetest_tmfe_thread.cxx).

>

> K.O.

Ok. I did not know that there was a C++ OOD frontend example in MIDAS. I'll take a look at

it. Is there any documentation on how it works?

Thanks for the support!

Isaac |

|

2084

|

08 Feb 2021 |

Konstantin Olchanski | Forum | poll_event() is very slow. | > I should mention that I was using midas/examples/Triumf/c++/fevme.cxx

this is correct, the fevme frontend is written to do 100% CPU-busy polling.

there are several reasons for this:

- on our VME processors, we have 2 core CPUs, 1st core can poll the VME bus, 2nd core can run

mfe.c and the ethernet transmitter.

- interrupts are expensive to use (in latency and in cpu use) because kernel handler has to call

user handler, return back etc

- sub-millisecond sleep used to be expensive and unreliable (on 1-2GHz "core 1" and "core 2"

CPUs running SL6 and SL7 era linux). As I understand, current linux and current 3+GHz CPUs can

do reliable microsecond sleep.

K.O. |

|

434

|

18 Feb 2008 |

Konstantin Olchanski | Bug Report | potential memory corruption in odb.c:extract_key() | It looks like ODB function extract_key() will overwrite the array pointed to by "key_name" if given an odb

path with very long names (as seems to happen when redirection explodes in the Safari web browser, via

db_get_value(TRUE) via mhttpd "start program" button). All callers of this function seem to provide 256

byte strings, so the problem would not show up in normal use - only when abnormal odb paths are being

parsed. Proposed solution is to add a "length" argument to this function. (Actually ODB path elements

should be restricted to NAME_LENGTH (32 bytes), right?). K.O. |
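
As an illustration of the proposed "length" argument, here is a minimal sketch of a bounded path-element extractor. extract_key_bounded() is a hypothetical name; this is not the code from odb.c, only the truncate-instead-of-overflow idea under the assumption that path elements are separated by '/'.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

// Hypothetical bounded variant: copies the next '/'-separated element of
// key_list into key_name, writing at most key_name_length bytes including
// the terminating NUL. Returns a pointer to the unparsed rest of the path.
static const char *extract_key_bounded(const char *key_list, char *key_name,
                                       std::size_t key_name_length)
{
   assert(key_list != nullptr && key_name != nullptr && key_name_length > 0);

   if (*key_list == '/')   // skip one leading separator
      key_list++;

   std::size_t i = 0;
   while (*key_list != '\0' && *key_list != '/') {
      if (i + 1 < key_name_length)   // always keep room for the NUL
         key_name[i++] = *key_list;
      key_list++;   // overlong elements are truncated, never overflowed
   }
   key_name[i] = '\0';
   return key_list;
}
```

With an 8-byte buffer, the element "Equipment" is stored as "Equipme" and parsing continues at "/Trigger", so an abnormal path can no longer write past the caller's array.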

|

444

|

21 Feb 2008 |

Konstantin Olchanski | Bug Report | potential memory corruption in odb.c:extract_key() | > It looks like ODB function extract_key() will overwrite the array pointed to by "key_name" if given an odb

> path with very long names (as seems to happen when redirection explodes in the Safari web browser, via

> db_get_value(TRUE) via mhttpd "start program" button). All callers of this function seem to provide 256

> byte strings, so the problem would not show up in normal use - only when abnormal odb paths are being

> parsed. Proposed solution is to add a "length" argument to this function. (Actually ODB path elements

> should be restricted to NAME_LENGTH (32 bytes), right?). K.O.

This is fixed in svn revision 4129.

K.O. |

|

1216

|

24 Oct 2016 |

Tim Gorringe | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | Hi Midas forum,

I'm having a problem with odb hotlinks after increasing sub-directories in an

odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

tried

1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

but got the same error

2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

but got fatal errors from odbedit and my midas FE and couldn't run anything

3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

0x1000000" to increase the odb size but got the same error?

4) I tried a different computer and got the same error code DB_NO_MEMORY

Maybe I am running into some system limit that restricts the number of open records?

Or maybe I've not increased the correct midas parameter?

Best, Tim. |

|

1218

|

25 Oct 2016 |

Tim Gorringe | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | One additional comment. I was able to trace the setting of the error code DB_NO_MEMORY

to a call to the db_add_open_record() by mserver that is initiated during the start-up

of my frontend via an RPC call. I checked with a debug printout that I have indeed

reached the number of MAX_OPEN_RECORDS

> Hi Midas forum,

>

> I'm having a problem with odb hotlinks after increasing sub-directories in an

> odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

> tried

>

> 1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

> but got the same error

>

> 2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

> but got fatal errors from odbedit and my midas FE and couldn't run anything

>

> 3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

> 0x1000000" to increase the odb size but got the same error?

>

> 4) I tried a different computer and got the same error code DB_NO_MEMORY

>

> Maybe I am running into some system limit that restricts the number of open records?

> Or maybe I've not increased the correct midas parameter?

>

> Best, Tim. |

|

1220

|

04 Nov 2016 |

Thomas Lindner | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | Hi Tim,

I reproduced your problem and then managed to go through a procedure to increase the number

of allowable open records. The following is the procedure that I used

1) Use odbedit to save current ODB

odbedit

save current_odb.odb

2) Stop all the running MIDAS processes, including mlogger and mserver using the web

interface. Then stop mhttpd as well.

3) Remove your old ODB (we will recreate it after modifying MIDAS, using the backup you just

made).

mv .ODB.SHM .ODB.SHM.20161104

rm /dev/shm/thomas_ODB_SHM

4) Make the following modifications to midas. In this particular case I have increased the

max number of open records from 256 to 1024. You would need to change the constants if you

want to change to other values

diff --git a/include/midas.h b/include/midas.h

index 02b30dd..33be7be 100644

--- a/include/midas.h

+++ b/include/midas.h

@@ -254,7 +254,7 @@ typedef std::vector<std::string> STRING_LIST;

-#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

+#define MAX_OPEN_RECORDS 1024 /**< number of open DB records */

diff --git a/src/odb.c b/src/odb.c

index 47ace8f..ac1bef3 100755

--- a/src/odb.c

+++ b/src/odb.c

@@ -699,8 +699,8 @@ static void db_validate_sizes()

- assert(sizeof(DATABASE_CLIENT) == 2112);

- assert(sizeof(DATABASE_HEADER) == 135232);

+ assert(sizeof(DATABASE_CLIENT) == 8256);

+ assert(sizeof(DATABASE_HEADER) == 528448);

The calculation is as follows (in case you want a different number of open records):

DATABASE_CLIENT = 64 + 8*MAX_OPEN_RECORDS = 64 + 8*1024 = 8256

DATABASE_HEADER = 64 + 64*DATABASE_CLIENT = 64 + 64*8256 = 528448

5) Rebuild MIDAS

make clean; make

6) Create new ODB

odbedit -s 1000000

Change the size of the ODB to whatever you want.

7) reload your original ODB

load current_odb.odb

8) Rebuild your frontend against new MIDAS; then it should work and you should be able to

produce more open records.

8.5*) Actually, I had a weird error where I needed to remove my .SYSTEM.SHM file as well

when I first restarted my front-end. Not sure if that was some unrelated error, but I

mention it here for completeness.

This was a procedure based on something that originally was used for T2K (procedure by Renee

Poutissou). It is possible that not all steps are necessary and that there is a better way.

But this worked for me.

Also, any objections from other developers to tweaking the assert checks in odb.c so that

the values are calculated automatically and MIDAS only needs to be touched in one place to

modify the number of open records?
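
One way such an automatic calculation could look, as a sketch only: the constants 64, 8 and 64 are taken from the calculation in step 4 above, the helper names are hypothetical, and the actual check in odb.c may need to differ (for example if struct padding changes).

```cpp
#include <cstddef>

// Expected struct sizes derived from the number of open records, using
// the formulas from the procedure above (assumed, not read from midas.h).
constexpr std::size_t database_client_size(std::size_t max_open_records)
{
   return 64 + 8 * max_open_records;
}

constexpr std::size_t database_header_size(std::size_t max_open_records)
{
   return 64 + 64 * database_client_size(max_open_records);
}

// The formulas reproduce both pairs of hard-coded values from the diff.
static_assert(database_client_size(256) == 2112, "old DATABASE_CLIENT size");
static_assert(database_header_size(256) == 135232, "old DATABASE_HEADER size");
static_assert(database_client_size(1024) == 8256, "new DATABASE_CLIENT size");
static_assert(database_header_size(1024) == 528448, "new DATABASE_HEADER size");
```

With helpers like these, the asserts could compare sizeof(DATABASE_CLIENT) against database_client_size(MAX_OPEN_RECORDS), so only the #define would need to change.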

Let me know if it worked for you and I'll add these instructions to the Wiki.

Thomas

> One additional comment. I was able to trace the setting of the error code DB_NO_MEMORY

> to a call to the db_add_open_record() by mserver that is initiated during the start-up

> of my frontend via an RPC call. I checked with a debug printout that I have indeed

> reached the number of MAX_OPEN_RECORDS

>

> > Hi Midas forum,

> >

> > I'm having a problem with odb hotlinks after increasing sub-directories in an

> > odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

> > tried

> >

> > 1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

> > but got the same error

> >

> > 2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

> > but got fatal errors from odbedit and my midas FE and couldn't run anything

> >

> > 3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

> > 0x1000000" to increase the odb size but got the same error?

> >

> > 4) I tried a different computer and got the same error code DB_NO_MEMORY

> >

> > Maybe I am running into some system limit that restricts the number of open records?

> > Or maybe I've not increased the correct midas parameter?

> >

> > Best, Tim. |

|

1221

|

25 Nov 2016 |

Thomas Lindner | Bug Report | problem with error code DB_NO_MEMORY from db_open_record() call when establish additional hotlinks | The procedure I wrote seemed to work for Tim too, so I added a page to the wiki about it here:

https://midas.triumf.ca/MidasWiki/index.php/FAQ

> Hi Tim,

>

> I reproduced your problem and then managed to go through a procedure to increase the number

> of allowable open records. The following is the procedure that I used

>

> 1) Use odbedit to save current ODB

>

> odbedit

> save current_odb.odb

>

> 2) Stop all the running MIDAS processes, including mlogger and mserver using the web

> interface. Then stop mhttpd as well.

>

>

> 3) Remove your old ODB (we will recreate it after modifying MIDAS, using the backup you just

> made).

>

> mv .ODB.SHM .ODB.SHM.20161104

> rm /dev/shm/thomas_ODB_SHM

>

> 4) Make the following modifications to midas. In this particular case I have increased the

> max number of open records from 256 to 1024. You would need to change the constants if you

> want to change to other values

>

> diff --git a/include/midas.h b/include/midas.h

> index 02b30dd..33be7be 100644

> --- a/include/midas.h

> +++ b/include/midas.h

> @@ -254,7 +254,7 @@ typedef std::vector<std::string> STRING_LIST;

> -#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

> +#define MAX_OPEN_RECORDS 1024 /**< number of open DB records */

> diff --git a/src/odb.c b/src/odb.c

> index 47ace8f..ac1bef3 100755

> --- a/src/odb.c

> +++ b/src/odb.c

> @@ -699,8 +699,8 @@ static void db_validate_sizes()

> - assert(sizeof(DATABASE_CLIENT) == 2112);

> - assert(sizeof(DATABASE_HEADER) == 135232);

> + assert(sizeof(DATABASE_CLIENT) == 8256);

> + assert(sizeof(DATABASE_HEADER) == 528448);

>

> The calculation is as follows (in case you want a different number of open records):

> DATABASE_CLIENT = 64 + 8*MAX_OPEN_RECORDS = 64 + 8*1024 = 8256

> DATABASE_HEADER = 64 + 64*DATABASE_CLIENT = 64 + 64*8256 = 528448

>

> 5) Rebuild MIDAS

>

> make clean; make

>

> 6) Create new ODB

>

> odbedit -s 1000000

>

> Change the size of the ODB to whatever you want.

>

> 7) reload your original ODB

>

> load current_odb.odb

>

> 8) Rebuild your frontend against new MIDAS; then it should work and you should be able to

> produce more open records.

>

> 8.5*) Actually, I had a weird error where I needed to remove my .SYSTEM.SHM file as well

> when I first restarted my front-end. Not sure if that was some unrelated error, but I

> mention it here for completeness.

>

> This was a procedure based on something that originally was used for T2K (procedure by Renee

> Poutissou). It is possible that not all steps are necessary and that there is a better way.

> But this worked for me.

>

> Also, any objections from other developers to tweaking the assert checks in odb.c so that

> the values are calculated automatically and MIDAS only needs to be touched in one place to

> modify the number of open records?

>

> Let me know if it worked for you and I'll add these instructions to the Wiki.

>

> Thomas

>

>

>

> > One additional comment. I was able to trace the setting of the error code DB_NO_MEMORY

> > to a call to the db_add_open_record() by mserver that is initiated during the start-up

> > of my frontend via an RPC call. I checked with a debug printout that I have indeed

> > reached the number of MAX_OPEN_RECORDS

> >

> > > Hi Midas forum,

> > >

> > > I'm having a problem with odb hotlinks after increasing sub-directories in an

> > > odb. I now get the error code DB_NO_MEMORY after some db_open_record() calls. I

> > > tried

> > >

> > > 1) increasing the parameter DEFAULT_ODB_SIZE in midas.h and make clean, make

> > > but got the same error

> > >

> > > 2) increasing the parameter MAX_OPEN_RECORDS in midas.h and make clean, make

> > > but got fatal errors from odbedit and my midas FE and couldn't run anything

> > >

> > > 3) deleting my expts SHM files and starting odbedit with "odbedit -e SLAC -s

> > > 0x1000000" to increase the odb size but got the same error?

> > >

> > > 4) I tried a different computer and got the same error code DB_NO_MEMORY

> > >

> > > Maybe I am running into some system limit that restricts the number of open records?

> > > Or maybe I've not increased the correct midas parameter?

> > >

> > > Best, Tim. |

|

1293

|

16 May 2017 |

Konstantin Olchanski | Bug Report | problem with odb strings and db_get_record() | Suddenly the mhttpd odb inline editor is truncating the odb string entries to the actual length of the

stored string value, this causes db_get_record() to explode with "structure mismatch" errors. (Not my

fault, Your Honor! Honest!). For example, I see these errors from al_check() after changing

"/programs/foo/start command" - suddenly it cannot get the program_info record.

What a mess.

Actually, this is not a new mess, midas has always been rather brittle with db_get_record()

and db_open_record(), always unhappy if something goes wrong in odb, like a lost

entry in equipment statistics or an extra variable in equipment common, etc.

To patch it all up, I added a function db_get_record1() which knows the structure of the data

and can call db_check_record() to fix the odb structure and make db_get_record() happy.

Many places in midas now use it, making odb structure mismatches "self healing" in a way.

But when looking at uses of db_get_record(), I noticed that in many places it can be trivially

replaced by one or two db_get_value(). I did change this in a couple of places in mhttpd.

This way of coding is more robust against unexpected contents in odb and is easier

to maintain going forward, when new odb entries must be added for new functionality.
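
The difference in robustness can be shown with a toy model. This is a sketch under loud assumptions: MockOdb is a plain std::map standing in for an ODB subtree, and get_record_strict() and get_value_or() are hypothetical helpers that only imitate the behaviour described here; they are not the MIDAS db_get_record() or db_get_value() calls.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Toy stand-in for an ODB subtree: key name -> value. Not the MIDAS API.
using MockOdb = std::map<std::string, int>;

// Record-style read: succeeds only if the tree holds exactly the expected
// keys, so any extra or missing entry is a "structure mismatch".
static bool get_record_strict(const MockOdb &odb,
                              const std::vector<std::string> &layout,
                              std::vector<int> &out)
{
   if (odb.size() != layout.size())
      return false;
   out.clear();
   for (const auto &name : layout) {
      auto it = odb.find(name);
      if (it == odb.end())
         return false;
      out.push_back(it->second);
   }
   return true;
}

// Value-style read: look up one key and fall back to a default if it is
// missing; unrelated entries in the tree do not matter.
static int get_value_or(const MockOdb &odb, const std::string &name, int fallback)
{
   auto it = odb.find(name);
   return it == odb.end() ? fallback : it->second;
}
```

In this model, adding one unexpected key makes every strict record read fail, while the per-value reads keep returning the entries they ask for.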

Most uses of db_get_record() are now converted to db_get_record1(), except where it is

used together with db_open_record() (which uses db_get_record() internally).

To fix the db_open_record() uses, I considered adding db_open_record1() which would

also know the data structure and automatically repair any mismatch, but I think instead of that,

I will switch them to use db_watch() (in conjunction with manual db_get_record()/get_record1()

and plain db_get_value()).

When adding automatic repair mechanism like this, one should beware of "update wars",

where two midas programs built against slightly different versions of midas would

each try to change odb in its way, in an endless loop. (yes, it did happen, more than once).

One solution to this is to assign an "owner" to each data structure, the "consumers"

of the data have to deal with anything missing or unexpected. If they use db_get_value()

it should all be happy. (if the owner has to be reassigned, back to the wars again, until

everything is rebuilt against the same version of midas).

P.S. In languages lacking reflection, like C and C++, it is impossible to trivially implement

a mapping from a data structure to an external entity, such as db_get_record() to map C struct

into ODB. Many attempts have been made, i.e. ROOT CINT, all of them brittle, hard

to maintain, generally unsatisfactory. Java was the first mainstream language

to have reflection. Modern languages, such as Go, have reflection from day 1. Of course

all scripting languages, perl, python, javascript, always had reflection. The C++ language

standard will get reflection some day. Today one can easily do reflection in C++ using the Clang

compiler, the main reason for ROOT v6 switching from CINT to Clang.

K.O. |

|

1295

|

31 May 2017 |

Konstantin Olchanski | Bug Report | problem with odb strings and db_get_record() | > What a mess.

The mess with db_get_record() and db_open_record() is even deeper than I thought. There are several anomalies.

Records opened by db_open_record() are later accessed via db_get_record() which requires

that the odb structure and the C structure match exactly.

Of course anybody can modify anything in odb at any time, so there are protections against

modifying the odb structures "from under" db_open_record():

a) db_open_record(MODE_WRITE) makes the odb structure immutable by setting the "exclusive" flag. This works well. In the past

there were problems with "exclusive mode" getting stuck behind dead clients, but these days it is efficiently cleaned and recovered

by the odb validation code at the start of all midas programs.

b) db_create_record(), db_reorder_key() and db_delete_key() refuse to function on watched/hotlinked odb structures. One would

think this is good, but there is a side-effect. If I run "odbedit watch /", all odb delete operations fail (including deletion of temporary

items in /system/tmp).

c) db_create_key() and db_set_data()/db_set_value() do not have such protections, and they can (and do) add new odb entries and

change size of existing entries (especially size of strings), and make db_get_record() fail. note that db_get_record() inside

db_open_record() fails silently and odb hotlinks mysteriously stop working.

One could keep fixing this by adding protections against modification of hotlinked odb structures, but unfortunately, one cannot tell

db_watch() hotlinks from db_open_record() hotlinks. Only the latter ones require protection. db_watch() does not require such

protections because it does not use db_get_record() internally, it leaves it to the user to sort out any mismatches.

Also it would be nice if "odbedit watch /" did not have the nasty side effect of making all odb unchangeable (presently it only makes

things undeletable).

To sort it all out, I am moving in this direction:

1) replace all uses of db_get_record() with db_get_record1() which automatically cures any structure mismatch

2) replace all uses of db_open_record(MODE_READ) with db_watch() in conjunction with db_get_record1(). This is done in mfe.c

and seems to work ok.

2a) automatic repair of structure mismatch is presently defeated by db_create_record() refusing to work on hotlinked odb entries.

3) with db_get_record() and db_open_record(MODE_READ) removed from use, turn off hotlink protection in item (b) above. This will

fix problem (2a).

4) maybe replace db_open_record(MODE_WRITE) with explicit db_set_record(). I personally do not like its "magical" operation,

when in fact, it is just a shorthand for "db_get_key/db_set_record" hidden inside db_send_changed_records().

4a) db_open_record(MODE_WRITE) works well enough right now, no need to touch it.

K.O. |

|

1296

|

31 May 2017 |

Konstantin Olchanski | Bug Report | problem with odb strings and db_get_record() | > 2) replace all uses of db_open_record(MODE_READ) with db_watch() in conjunction with db_get_record1().

Done to all in-tree programs, except for mana.c (not using it), sequencer.cxx (cannot test it) and a few places where watching a TID_INT.

Nothing more needs to be done, other than turn off the check for hotlink in db_create_record() & co (removed #define CHECK_OPEN_RECORD in odb.c).

K.O.

$ grep db_open_record src/* | grep MODE_READ

src/lazylogger.cxx: status = db_open_record(hDB, hKey, &run_state, sizeof(run_state), MODE_READ, NULL, NULL); // watch a TID_INT

src/mana.cxx: db_open_record(hDB, hkey, NULL, 0, MODE_READ, banks_changed, NULL);

src/mana.cxx: db_open_record(hDB, hkey, NULL, 0, MODE_READ, banks_changed, NULL);

src/mana.cxx: db_open_record(hDB, hkey, &out_info, sizeof(out_info), MODE_READ, NULL, NULL);

src/mana.cxx: db_open_record(hDB, hKey, ar_info, sizeof(AR_INFO), MODE_READ, update_request,

src/midas.c: status = db_open_record(hDB, hKey, &_requested_transition, sizeof(INT), MODE_READ, NULL, NULL);

src/mlogger.cxx: status = db_open_record(hDB, hKey, hist_log[index].buffer, size, MODE_READ, log_history, NULL);

src/mlogger.cxx: db_open_record(hDB, hVarKey, NULL, varkey.total_size, MODE_READ, log_system_history, (void *) (POINTER_T) index);

src/mlogger.cxx: db_open_record(hDB, hHistKey, NULL, size, MODE_READ, log_system_history, (void *) (POINTER_T) index);

src/odbedit.cxx: db_open_record(hDB, hKey, data, size, MODE_READ, key_update, NULL);

src/sequencer.cxx: status = db_open_record(hDB, hKey, &seq, sizeof(seq), MODE_READ, NULL, NULL);

|

|

1297

|

02 Jun 2017 |

Stefan Ritt | Bug Report | problem with odb strings and db_get_record() | That all makes sense to me.

Stefan

> > What a mess.

>

> The mess with db_get_record() and db_open_record() is even deeper than I thought. There are several anomalies.

>

> Records opened by db_open_record() are later accessed via db_get_record() which requires

> that the odb structure and the C structure match exactly.

>

> Of course anybody can modify anything in odb at any time, so there are protections against

> modifying the odb structures "from under" db_open_record():

>

> a) db_open_record(MODE_WRITE) makes the odb structure immutable by setting the "exclusive" flag. This works well. In the past

> there were problems with "exclusive mode" getting stuck behind dead clients, but these days it is efficiently cleaned and recovered

> by the odb validation code at the start of all midas programs.

>

> b) db_create_record(), db_reorder_key() and db_delete_key() refuse to function on watched/hotlinked odb structures. One would

> think this is good, but there is a side-effect. If I run "odbedit watch /", all odb delete operations fail (including deletion of temporary

> items in /system/tmp).

>

> c) db_create_key() and db_set_data()/db_set_value() do not have such protections, and they can (and do) add new odb entries and

> change size of existing entries (especially size of strings), and make db_get_record() fail. note that db_get_record() inside

> db_open_record() fails silently and odb hotlinks mysteriously stop working.

>

> One could keep fixing this by adding protections against modification of hotlinked odb structures, but unfortunately, one cannot tell

> db_watch() hotlinks from db_open_record() hotlinks. Only the latter ones require protection. db_watch() does not require such

> protections because it does not use db_get_record() internally, it leaves it to the user to sort out any mismatches.

>

> Also it would be nice if "odbedit watch /" did not have the nasty side effect of making all odb unchangeable (presently it only makes

> things undeletable).

>

> To sort it all out, I am moving in this direction:

>

> 1) replace all uses of db_get_record() with db_get_record1() which automatically cures any structure mismatch

> 2) replace all uses of db_open_record(MODE_READ) with db_watch() in conjunction with db_get_record1(). This is done in mfe.c

> and seems to work ok.

> 2a) automatic repair of structure mismatch is presently defeated by db_create_record() refusing to work on hotlinked odb entries.

> 3) with db_get_record() and db_open_record(MODE_READ) removed from use, turn off hotlink protection in item (b) above. This will

> fix problem (2a).

> 4) maybe replace db_open_record(MODE_WRITE) with explicit db_set_record(). I personally do not like its "magical" operation,

> when in fact, it is just a shorthand for "db_get_key/db_set_record" hidden inside db_send_changed_records().

> 4a) db_open_record(MODE_WRITE) works well enough right now, no need to touch it.

>

>

> K.O. |

|

1298

|

06 Jun 2017 |

Konstantin Olchanski | Bug Report | problem with odb strings and db_get_record() | > Done to all in-tree programs, except for mana.c (not using it), sequencer.cxx (cannot test it) and a few places where watching a TID_INT.

> Nothing more needs to be done, other than turn off the check for hotlink in db_create_record() & co (removed #define CHECK_OPEN_RECORD in odb.c).

Fixed a bug in mfe.c - it was overwriting odb /eq/xxx/common with default values. fixed now.

Running with CHECK_OPEN_RECORD seems to work okay so far. Will test some more before proposing to make it the default.

K.O. |

|

1006

|

06 Jun 2014 |

Alexey Kalinin | Forum | problem with writing data on disk | Hello,

Our experiment is based on the MIDAS 2.x DAQ.

I'm using several identical frontends (frontend-%d) that differ only in lam source and event id,

running on 2 computers (~3 frontends on each).

Each receives about 10k events (Max_SIZE = 8*1024, but usually it is less than

sizeof(DWORD)*400) per 7 sec.

With no mlogger running it works just fine, but when I start mlogger (on a 3rd

computer with mserver running)... looking at the ethernet stat graph, the first 2-3 spills

go well, with one peak per 7 sec, then it becomes erratic and everything crashes

(mlogger and frontends).

I tried to increase the SYSTEM buffer and restart everything. What I saw was that the logger

writes only half of the events received from all frontends combined; it stays running for

a while (~15 minutes). If I push the STOP button before the crash, mlogger continues

writing data to disk for quite a long period of time.

I will try to check the HDD for bad sectors, but maybe there is an easy

way to fix this problem and I did something wrong.

The structure of the frontend looks like this:

EQ_POLLED , POLL for 500,

frontend_loop{

read big buffer with 10k events;bufferread=true;

}

poll_event{

for (i=0;i<count;i++){

if (bufferread) lam=1;

if (!test) return lam;

}

return 0;

}

read_trigger{

bk_init32();

//fill event with buffer until current word!=0xffffffff

if (currentposition+2 >buffer_size) bufferread=false

}

|

Help needed, please. Suggestions.

Thanks, Alexey. |

|

1009

|

16 Jun 2014 |

Alexey Kalinin | Forum | problem with writing data on disk | Hello, once again.

What I found is that when I tried to stop the run, mlogger kept working and writing some

data, which I'm sure is not right, because the frontends are in the stopped state

(for ex. every 3*frontend got 50k, mlogger shows 120k. Stop button pushed, but data

in the .mid file collects more than 150k~300k ev).

And it continues writing until it crashes after the default waiting period of 10 s. |

|

1010

|

18 Jun 2014 |

Alexey Kalinin | Forum | problem with writing data on disk | Hello,

I'm in depression.

I removed everything from the computer with mserver and reinstalled the system and midas.

Then I tried to run the tutorial example.

Often the run did not stop when pushing the STOP button (mlogger blocks it; odbedit stop

works).

After the first START, the number of events taken by the frontend equals the number of events

written by mlogger. In the next run (without restarting mlogger), mlogger doubles the number of

events taken by the frontend (see attachment). Restarting mlogger fixes this double counting.

What did I do wrong? |

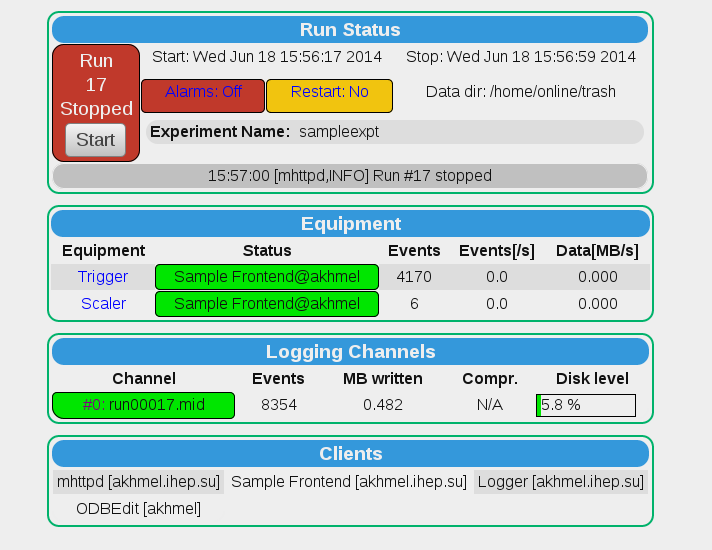

| Attachment 1: 39.png

|

|

|