
Midas DAQ System


01 Jun 2026, Yiwen Yang, Suggestion, Multithreaded deferred transitions

Hi,

On the DAQ system for T2K's ND280 near detector, we use deferred transitions to make sure all triggered events are logged before issuing run stops to frontends.

I've recently managed to update the frontends to use a relatively modern version of MIDAS. I then noticed that run transitions are now multithreaded by default when issued from e.g. mhttpd, but deferred transitions called by cm_check_deferred_transition are still performed synchronously.

It would be nice to make run stops use multithreaded transitions as well. A naive patch of adding the TR_MTHREAD flag does not work, since the client handling the deferred transition attempts to communicate with itself instead of calling cm_transition_call_direct.

After looking into the code a bit further, I noticed that there is an intentional check against multithreaded transitions in the logic for determining whether the client is the one calling the transition:

https://bitbucket.org/tmidas/midas/src/fd71f63c023b7e2d4a5c91e3121651b14bd9d27b/src/midas.cxx#lines-5009

Was there a particular concern that led to this check?

Regards,

Yiwen.

29 May 2026, Zaher Salman, Info, ODBvalue timeout

Dear all, I implemented an optional timeout for the wait ODBvalue command. The way it works is similar to the standard wait command:

WAIT ODBvalue, /Equipment/HV/Variables/Measured[3], <, 100, timeout, 60

where the "timeout" keyword starts a countdown in seconds. If the ODB condition is not met after 60 seconds, the sequencer moves on to the next line.

To use this feature you must recompile the msequencer, delete /Sequencer/State and start the freshly compiled msequencer. This will add two ODBs to the /Sequencer/State: "Timeout value" (the countdown) and "Timeout limit" (the limit given in the wait command).

I suggest that we add something similar to the pysequencer using the same ODBs.

29 May 2026, Stefan Ritt, Info, ODBvalue timeout

> Dear all, I implemented an optional timeout for the wait ODBvalue command. The way it works is similar to the standard wait command:

>

> WAIT ODBvalue, /Equipment/HV/Variables/Measured[3], <, 100, timeout, 60

>

> where the "timeout" keyword start a countdown in seconds. If the ODB condition is not met after 60 seconds the sequencer moves on to the next line.

>

> To use this feature you must recompile the msequencer, delete /Sequencer/State and start the freshly compiled msequencer. This will add two ODBs to the /Sequencer/State: "Timeout value" (the countdown) and "Timeout limit" (the limit given in the wait command).

>

> I suggest that we add something similar to the pysequencer using the same ODBs.

How can the MSL code figure out if the wait succeeded or timed out?

Stefan

29 May 2026, Zaher Salman, Info, ODBvalue timeout

>

> How can the MSL code figure out if the wait succeeded or timed out?

>

> Stefan

You get a message, something like:

17:52:12.293 2026/05/29 [Sequencer,INFO] WAIT ODBValue timeout after 10.0 seconds: /Equipment/Test/Variables/V < 1 not satisfied

Do we need something else?

Zaher

29 May 2026, Stefan Ritt, Info, ODBvalue timeout

> >

> > How can the MSL code figure out if the wait succeeded or timed out?

> >

> > Stefan

>

> You get a message, something like:

> 17:52:12.293 2026/05/29 [Sequencer,INFO] WAIT ODBValue timeout after 10.0 seconds: /Equipment/Test/Variables/V < 1 not satisfied

>

> Do we need something else?

>

> Zaher

I mean how can the following code determine the timeout?

29 May 2026, Zaher Salman, Info, ODBvalue timeout

> > >

> > > How can the MSL code figure out if the wait succeeded or timed out?

> > >

> > > Stefan

> >

> > You get a message, something like:

> > 17:52:12.293 2026/05/29 [Sequencer,INFO] WAIT ODBValue timeout after 10.0 seconds: /Equipment/Test/Variables/V < 1 not satisfied

> >

> > Do we need something else?

> >

> > Zaher

>

> I mean how can the following code determine the timeout?

My intention with this was to deal with something like setting a cryostat temperature or any other non-critical parameter. If it is not reached within a given timeout, we give up and move on with the plan rather than sitting and wasting a whole night of beam. If your ODBvalue is "mission critical", then the wait command should not be used with a timeout. If you do use the timeout option, then you will have to check in the following lines what the state of your ODBvalue is (very easy). To me this is the simplest and most useful way for our use case.

29 May 2026, Stefan Ritt, Info, ODBvalue timeout

> > > >

> > > > How can the MSL code figure out if the wait succeeded or timed out?

> > > >

> > > > Stefan

> > >

> > > You get a message, something like:

> > > 17:52:12.293 2026/05/29 [Sequencer,INFO] WAIT ODBValue timeout after 10.0 seconds: /Equipment/Test/Variables/V < 1 not satisfied

> > >

> > > Do we need something else?

> > >

> > > Zaher

> >

> > I mean how can the following code determine the timeout?

>

> My intention with this was dealing with something like setting a cryostat temperature or any non-critical parameter. If it is not reached within a given timeout we give up and move on with the plan rather than sitting and wasting a whole night of beam. If your ODBvalue is "mission critical" then the wait command should not be used with a timeout. If you do use the timeout option then you will have to check in the following lines what is the state of your ODBvalue (very easy). To me this is the simplest and most useful way for our use case.

I was thinking more of a return value 0/1 from the wait function. If you change the condition, you only have to change it in one location. More like how normal C functions work.

Stefan
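
The return-value idea can be illustrated outside MSL. What follows is only a minimal Python sketch, not msequencer or pysequencer code: `wait_until`, the `measured` dictionary and the polling interval are hypothetical names invented for illustration. It shows a wait that reports whether the condition was met or the timeout expired, so the condition is written in exactly one place.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout_s: float,
               poll_s: float = 0.01) -> bool:
    """Poll `condition` until it holds or `timeout_s` seconds elapse.

    Returns True if the condition was met, False on timeout.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)


# Hypothetical stand-in for an ODB value such as
# /Equipment/HV/Variables/Measured[3].
measured = {"value": 250.0}

# The condition appears once; the caller branches on the returned status.
reached = wait_until(lambda: measured["value"] < 100, timeout_s=0.05)
if not reached:
    print("timed out, moving on")
```

With such a status, the script following the wait does not need to repeat the comparison to find out whether the wait succeeded.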

21 May 2026, Konstantin Olchanski, Bug Report, incompatible ODB XML dumps

While testing manalyzer, I found that it dies from an exception in odbxx; the error message is "/home/olchansk/packages/midas/include/odbxx.h:1231: No "handle" attribute found in XML data".

Indeed, my data file is very old and its XML ODB dump does not have the "handle" attribute:

daq00:midas$ more ~/git/midas/manalyzer/run9402bor.xml

<?xml version="1.0" encoding="ISO-8859-1"?>

<!-- created by MXML on Tue Aug 11 14:47:16 2020 -->

<odb root="/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:noNamespaceSchemaLocation="http://midas.psi.ch/odb.xsd">

<dir name="Experiment">

<key name="ODB timeout" type="INT32">10000</key>

While current MIDAS XML ODB dumps have it:

<?xml version="1.0" encoding="ISO-8859-1"?>

<!-- created by MXML on Thu May 21 20:37:06 2026 -->

<odb root="/" filename="odb.xml" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:noNamespaceSchemaLocation="/home/olchansk/packages/midas/odb.xsd">

<dir name="System" handle="135320">

<dir name="Flush" handle="135408">

<key name="Flush period" type="UINT32" handle="135496">60</key>

And odbxx requires this attribute unconditionally:

if (mxml_get_attribute(node, "handle") == nullptr)

mthrow("No \"handle\" attribute found in XML data");

o->set_hkey(std::stoi(std::string(mxml_get_attribute(node, "handle"))));

The "handle" attribute was added to XML ODB dumps in September 2024 (not sure to what purpose, JSON ODB dumps do not have a "handle" attribute):

git blame src/odb.cxx

...

dd23558fbd src/odb.cxx (Stefan Ritt 2024-09-20 15:30:00 +0200 9387) mxml_write_attribute(writer, "handle", std::to_string(hKey).c_str());

This change makes MIDAS data files written before this date un-analyzable (unless odbxx is turned off).

I can prevent manalyzer from crashing by catching the exception, but I think it is better if the odbxx code is updated to accept the pre-Sep-2024 ODB XML data format (these were valid XML ODB dumps when they were made, and users are stuck with them inside compressed binary MIDAS data files).

P.S. Please also check the odbxx code for other crashes on malformed XML ODB dumps: it should complain and fail to load the dump, but not core dump or abort.

Malformed ODB dumps are not a theoretical situation: I am currently looking at MIDAS data files (mid.lz4) that have invalid JSON ODB dumps created from a corrupted ODB. Luckily the JSON parser handles this gracefully and does not crash manalyzer, so I can look at the data. I did have to go 10 runs into the past to find an uncorrupted ODB dump to reload a good ODB. Fixes to the JSON encoder and fixes for corrupt ODB are in progress.

K.O.
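
The requested behaviour (accept dumps without "handle", and fail cleanly on malformed input) can be sketched in a few lines. This is a Python illustration using the standard library XML parser, not the odbxx code and not a proposed patch; `read_handle` and `list_keys` are hypothetical helper names, and the two sample dumps are trimmed versions of the ones quoted in this post.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Tuple

# Trimmed pre-Sep-2024 dump: no "handle" attributes.
OLD_DUMP = """<odb root="/">
  <dir name="Experiment">
    <key name="ODB timeout" type="INT32">10000</key>
  </dir>
</odb>"""

# Trimmed current dump: every node carries a "handle" attribute.
NEW_DUMP = """<odb root="/" filename="odb.xml">
  <dir name="System" handle="135320">
    <dir name="Flush" handle="135408">
      <key name="Flush period" type="UINT32" handle="135496">60</key>
    </dir>
  </dir>
</odb>"""


def read_handle(node: ET.Element) -> Optional[int]:
    """Return the "handle" attribute as an int, or None if absent or malformed."""
    raw = node.get("handle")
    if raw is None:
        return None  # old dump format: no handle was recorded
    try:
        return int(raw)
    except ValueError:
        return None  # malformed value: treat as "no handle", do not abort


def list_keys(xml_text: str) -> List[Tuple[Optional[str], Optional[int]]]:
    """Return (name, handle) for every <key>; raise ValueError on invalid XML."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        raise ValueError(f"cannot load ODB dump: {exc}") from exc
    return [(key.get("name"), read_handle(key)) for key in root.iter("key")]


print(list_keys(OLD_DUMP))
print(list_keys(NEW_DUMP))
```

The design choice here is that a missing handle is a normal, representable state, while unparsable XML becomes one well-defined error that the caller can catch.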

21 May 2026, Konstantin Olchanski, Info, manalyzer --save-odb

Due to my oversight, the code for extracting ODB dumps from MIDAS data files from rootana/old_analyzer/event_dump.cxx was missing in manalyzer.cxx.

This is now corrected, the new manalyzer command line flag is "--save-odb", to use it:

daq00:manalyzer$ ./manalyzer_test.exe --save-odb ~/git/midas/testexpt/run00002.mid.lz4

...

Saving begin of run ODB dump for run 2 from "/home/olchansk/git/midas/testexpt/run00002.mid.lz4" to "run2bor.json"

...

Saving end of run ODB dump for run 2 from "/home/olchansk/git/midas/testexpt/run00002.mid.lz4" to "run2eor.json"

...

manalyzer commit f4cbcb7426083edc9f74298965c90a3a91f461ab

K.O.

06 May 2026, Jonas A. Krieger, Suggestion, numpy version compatibility

There seems to be a version dependency with numpy.bool, e.g. used here:

https://bitbucket.org/tmidas/midas/src/c6ef4aff5e7e652df79160141e570bed5f4d6a3b/python/midas/sequencer.py?at=develop#sequencer.py-1714

This type alias does not exist for versions between 1.24.0 and 2.0.0:

https://numpy.org/doc/stable/release/1.24.0-notes.html#np-str0-and-similar-are-now-deprecated

Would it be an option to specify midas-compatible numpy versions in setup.py with extras_require?

06 May 2026, Ben Smith, Bug Fix, numpy version compatibility

> There seems to be a version dependency with the numpy.bool

Thanks for reporting this Jonas! I've just updated the code to reference `np.bool_`, which is present in all versions. We use `np.bool_`

elsewhere (e.g. in midas.event), but I mistakenly used `np.bool` in the sequencer.

I just tried some sequencer tests with 1.26.0 and 2.2.6 and they seem happy now.

Cheers,

Ben
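
The version window mentioned in this thread can be written down as a small check. This is a standard-library-only sketch, not part of the midas Python package; `version_tuple` and `has_np_bool_alias` are hypothetical helper names, and the window (alias missing from 1.24.0 up to, but not including, 2.0.0) is taken from the report above.

```python
import itertools
from typing import Tuple


def version_tuple(version: str) -> Tuple[int, int, int]:
    """Turn '1.26.0' (or '2.0.0rc1', or '1.24') into a 3-field integer tuple."""
    parts = []
    for field in version.split(".")[:3]:
        digits = "".join(itertools.takewhile(str.isdigit, field))
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)  # pad short versions such as '1.24'
    return (parts[0], parts[1], parts[2])


def has_np_bool_alias(numpy_version: str) -> bool:
    """True if `np.bool` is expected to exist for this numpy version."""
    v = version_tuple(numpy_version)
    return v < (1, 24, 0) or v >= (2, 0, 0)


for candidate in ("1.23.5", "1.26.0", "2.2.6"):
    print(candidate, has_np_bool_alias(candidate))
```

Code that uses `np.bool_`, as in the fix described above, does not need such a check at all.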

23 Apr 2026, Pavel Murat, Bug Report, increasing the max number of hot links in ODB

Dear MIDAS experts,

when I attempted to increase the max number of hotlinks in ODB , defined as

#define MAX_OPEN_RECORDS 256 /**< number of open DB records */

I started running into an assertion in midas/src/odb.cxx

https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1525 :

assert(sizeof(DATABASE_CLIENT) == 2112);

is it possible that the size of the DATABASE_CLIENT structure should be checked against 64+sizeof(OPEN_RECORD)*MAX_OPEN_RECORDS ?

- 64 clearly can be expressed in a better maintainable form

UPDATE: a similar consideration holds for the size of the DATABASE_HEADER structure, which is also checked against a constant:

https://bitbucket.org/tmidas/midas/src/fa5457b5274a6b42c5ed8b6dea5e3cdd43de38fe/src/odb.cxx#lines-1526

-- many thanks, regards, Pavel

24 Apr 2026, Konstantin Olchanski, Bug Report, increasing the max number of hot links in ODB

> when I attempted to increase the max number of hotlinks in ODB , defined as

> #define MAX_OPEN_RECORDS 256 /**< number of open DB records */

> assert(sizeof(DATABASE_CLIENT) == 2112);

Yes, it is intended to work like this. If you change MAX_OPEN_RECORDS (and some settings),

you break binary compatibility with standard MIDAS and the asserts inform you about it.

It is not a light step to take - you have to recompile all MIDAS clients, and if you miss

one and run it against your non-standard MIDAS, kaboom everything will go,

there is no safety net against this.

In the ALPHA experiment at CERN, for years we have been running with MAX_OPEN_RECORDS set to 2560,

and it works, you have to change both MAX_OPEN_RECORDS in midas.h and the expected values

in the assert() statements.

The new correct values you do not need to guess or compute yourself, the code to print

them is right there and it is easy to enable.

Replacing the numeric constants with computed values of course would completely defeat

the purpose of the tests - to catch the situation where by mistake or by ignorance

(or by miscompilation) sizes of critical data structures become different from those

normally expected.

K.O.
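
The purpose of a hard-coded size check can be shown with a toy model. The Python ctypes sketch below is not the MIDAS structure definition: the 64-byte fixed part and the 8-byte record are stand-ins chosen only so that 64 + 8 * 256 = 2112 matches the numbers quoted in this thread, and `OpenRecord`, `database_client_size` and `EXPECTED_SIZE` are hypothetical names.

```python
import ctypes


class OpenRecord(ctypes.Structure):
    # Hypothetical 8-byte record, chosen so that 64 + 8 * 256 == 2112.
    _fields_ = [("handle", ctypes.c_int32),
                ("access_mode", ctypes.c_uint16),
                ("flags", ctypes.c_uint16)]


def database_client_size(max_open_records: int) -> int:
    """Size in bytes of the stand-in client structure for a given array length."""
    class DatabaseClient(ctypes.Structure):
        _fields_ = [("fixed_part", ctypes.c_char * 64),  # the "64" from the question
                    ("open_record", OpenRecord * max_open_records)]
    return ctypes.sizeof(DatabaseClient)


# Hard-coded on purpose: a computed value would always agree with itself
# and could never flag an accidental layout change.
EXPECTED_SIZE = 2112

for n in (256, 2560, 4096):
    size = database_client_size(n)
    status = "ok" if size == EXPECTED_SIZE else "MISMATCH: layout differs from standard"
    print(f"MAX_OPEN_RECORDS={n:5d} sizeof={size:6d} {status}")
```

Comparing against `64 + 8 * n` instead of the constant would make every row print "ok", which is exactly why the constant is kept.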

25 Apr 2026, Pavel Murat, Bug Report, increasing the max number of hot links in ODB

I see - thank you for the explanation!

Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

Thinking forward - would it help if the ODB clients, upon initial connection but before doing anything else

were reading the ODB parameters from the ODB itself, so the clients were "learning" about the ODB structure

dynamically, at run time? Or that knowledge has to be static ?

-- thanks, regards, Pavel

27 Apr 2026, Konstantin Olchanski, Bug Report, increasing the max number of hot links in ODB

> Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

actually, only local clients must be rebuilt, remote clients connecting to the mserver do not care about ODB

internal structure.

> Thinking forward - would it help if the ODB clients, upon initial connection but before doing anything else

> were reading the ODB parameters from the ODB itself, so the clients were "learning" about the ODB structure

> dynamically, at run time? Or that knowledge has to be static ?

unfortunately, the "open records" structure is allocated at compile-time inside the ODB header,

making any change to this would break binary compatibility.

I think it is possible to allocate "space for additional open records" in the ODB data area

and have the ODB open records code use it in addition to the compile-time allocated

space in the database header. This would also work for extending MAX_CLIENTS.

Of course in this approach, old midas clients would see only the clients and open records

in the database header, new midas clients would see the additional data.

It is not super hard to add this code...

K.O.

27 Apr 2026, Pavel Murat, Bug Report, increasing the max number of hot links in ODB

> > Indeed, updating MIDAS clients on each and every RPI etc in a running experiment may be a real challenge.

>

> actually, only local clients must be rebuilt, remote clients connecting to the mserver do not care about ODB

> internal structure.

thanks! I see - local clients do know about the memory mapping, remote ones - don't

> unfortunately, the "open records" structure is allocated at compile-time inside the ODB header,

> making any change to this would break binary compatibility.

right, I guess, what I had in mind would require the very first ODB record to be a format descriptor,

and that would be a breaking change... Anyway, the practical part of the problem is addressed,

so I just add here a link which contains an answer to the original posting (I found it only after the fact):

https://daq00.triumf.ca/MidasWiki/index.php/FAQ#Increasing_Number_of_Hot-links

-- thanks again, regards, Pavel

26 Apr 2026, Stefan Ritt, Bug Report, increasing the max number of hot links in ODB

I wonder why one needs more than 256 hotlinks at all. Please note that with the odbxx "watch" API, you can hotlink a whole subdirectory and get notified if ANY of the underlying values or subdirectories change. In principle, one could have one hotlink to "/" and see all changes in the ODB (although that does not make sense and might slow down ODB access a bit).

Try the odbxx_test.cpp example in MIDAS. In line 210 it puts a single hotlink on /Experiment. If you change anything under /Experiment, the program gets notified. By checking the path of the changed ODB entry, it can figure out which of the subkeys have been changed:

// watch ODB key for any change with lambda function

midas::odb ow("/Experiment");

ow.watch([](midas::odb &o) {

std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

});

Maybe that would solve your problem without having to change the maximum number of hotlinks.

Stefan
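
The idea of one watch covering a whole subtree, with the callback inspecting the changed path, can be modelled without MIDAS. The class below is a toy Python model and not the odbxx API: `ToyTree`, its methods and the example paths are hypothetical and serve only to illustrate the dispatch.

```python
from typing import Callable, Dict, List, Tuple


class ToyTree:
    """Toy key/value store with subtree watches (illustration only)."""

    def __init__(self) -> None:
        self._values: Dict[str, object] = {}
        self._watches: List[Tuple[str, Callable[[str, object], None]]] = []

    def watch(self, root: str, callback: Callable[[str, object], None]) -> None:
        """Register ONE callback for every key at or below `root`."""
        self._watches.append((root.rstrip("/"), callback))

    def set(self, path: str, value: object) -> None:
        """Store a value and notify every watch whose subtree contains `path`."""
        self._values[path] = value
        for root, callback in self._watches:
            if path == root or path.startswith(root + "/"):
                callback(path, value)


changes: List[Tuple[str, object]] = []
tree = ToyTree()
tree.watch("/Experiment", lambda path, value: changes.append((path, value)))

tree.set("/Experiment/Name", "test")
tree.set("/Experiment/Run Parameters/Comment", "hello")
tree.set("/Runinfo/State", 3)  # outside the watched subtree: no callback

print(changes)
```

A single registration serves any number of keys below the watched directory, which is why this pattern does not consume one hotlink per variable.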

27 Apr 2026, Konstantin Olchanski, Bug Report, increasing the max number of hot links in ODB

> I wonder why one needs more than 256 hotlinks at all.

I confirm that ALPHA is running with MAX_OPEN_RECORDS changed from 256 to 2048,

this is the only experiment I know of that had to increase any MIDAS ODB defaults.

The reason for this is mlogger, it opens an open record for each variable in each equipment.

This should be changed to 1 db_watch per equipment. We talked about it, but I guess we never did it.

I think this task just went almost to the top of my MIDAS to-do list.

K.O.

27 Apr 2026, Nick Hastings, Bug Report, increasing the max number of hot links in ODB

For the record, the ND280 (T2K near detector) MIDAS GSC was initially set up

with MAX_OPEN_RECORDS = 2560 and MAX_CLIENTS = 128.

In 2023 one fairly simple part of the detector was replaced with several

other more complex systems (many more midas frontends, equipments, and

variables being logged) so we updated MAX_OPEN_RECORDS = 4096 and

MAX_CLIENTS = 256.

Nick.

27 Apr 2026, Pavel Murat, Bug Report, increasing the max number of hot links in ODB

> > I wonder why one needs more than 256 hotlinks at all.

>

> I confirm that ALPHA is running with MAX_OPEN_RECORDS changed from 256 to 2048,

> this is the only experiment I know of that had to increase any MIDAS ODB defaults.

>

> The reason for this is mlogger, it opens an open record for each variable in each equipment.

>

> This should be changed to 1 db_watch per equipment. We talked about it, but I guess we never did it.

>

> I think this task just went almost to the top of my MIDAS to-do list.

I definitely had many more than 256 variables successfully monitored with MAX_OPEN_RECORDS=256.

Is it possible that mlogger creates a hotlink per monitoring event, not per variable ?

- I think, that would make more sense in almost any scenario...

-- thanks, regards, Pavel

27 Apr 2026, Pavel Murat, Bug Report, increasing the max number of hot links in ODB

> I wonder why one needs more than 256 hotlinks at all. Please note that with the odbxx "watch" API, you can hotlink a whole subdirectory, and get notified if ANY of the

> underlying values or subdirectories change. In principle, one could have one hotlink to "/" and see all changes in the ODB (although that does not make sense and might slow

> down ODB access a bit).

Thanks! I didn't know that. I did run into the limit on the number of hotlinks via mlogger, which complained about not being able to create a hotlink to yet another event. Doubling the default value of MAX_OPEN_RECORDS solved the problem.

I don't know the exact arithmetic defining the number of hotlinks in the system, but today's case is as follows:

- 36 (linux servers) +18 (RPI) monitoring frontends managing one or several different equipment items each.

- Each equipment item sends to ODB at least one monitoring event

- in addition, each frontend created an individual hotlink for handling interactive commands

- for MAX_OPEN_RECORDS=256, 4 equipment items per frontend easily make it into the dangerous zone.

"Equipment items" also include the online processes running on the distributed computing farm processing the data ..

(we are not using MIDAS event building capabilities)

>

> Try the odbxx_test.cpp example in MIDAS. In line 210 it puts a single hotlink to /Experiment. If you change anything under /Experiment, the program gets notified. By checking the

> path of the changed ODB entry, it can figure out which of the subkeys have been changed:

>

> // watch ODB key for any change with lambda function

> midas::odb ow("/Experiment");

> ow.watch([](midas::odb &o) {

> std::cout << "Value of key \"" + o.get_full_path() + "\" changed to " << o << std::endl;

> });

>

>

> Maybe that would solve your problem without having to change the maximum number of hotlinks.

I'll see how much mileage one can make here, but so far it looks that it is the number of various monitoring events

handled by the mlogger which drives the number of hotlinks

-- thanks, regards, Pavel
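
The numbers in this post can be turned into a rough estimate. The Python sketch below rests on assumptions that the thread itself leaves open: it counts one hotlink per equipment item (one monitoring event each) plus one command hotlink per frontend, and ignores every other consumer of open records. `estimated_hotlinks` is a hypothetical helper, not a MIDAS function.

```python
def estimated_hotlinks(n_frontends: int, equipment_per_frontend: int,
                       command_links_per_frontend: int = 1) -> int:
    """Rough count: one link per equipment item plus command links per frontend."""
    return n_frontends * (equipment_per_frontend + command_links_per_frontend)


N_FRONTENDS = 36 + 18       # Linux servers + RPIs, from the post
MAX_OPEN_RECORDS = 256      # default limit discussed in this thread

for per_frontend in (1, 2, 3, 4):
    total = estimated_hotlinks(N_FRONTENDS, per_frontend)
    verdict = "over the limit" if total > MAX_OPEN_RECORDS else "within the limit"
    print(f"{per_frontend} equipment/frontend -> {total} hotlinks ({verdict})")
```

Under these assumptions three equipment items per frontend give 216 hotlinks and four give 270, which is consistent with four items per frontend reaching the dangerous zone for a limit of 256.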

16 Apr 2026, Konstantin Olchanski, Suggestion, mhttpd user permissions

We had our periodic discussion on MIDAS web page user permissions. (I cannot

find the link to the previous discussions, ouch!)

Currently any logged in user can do anything - start stop runs, start/stop

programs, edit odb, etc.

Regularly, we have experiments that ask about "read-only" access to MIDAS and

about more granular user permissions.

In the past, I suggested a permissions scheme that is easy to implement

with the current code base. Permission level for each user can

be stored in ODB and allow:

level 0 - root user, as now

level 1 - experiment user, any restrictions are implemented in javascript, i.e.

all custom pages work as they do now, but (e.g.) the odb editor is read-only

level 2 - experiment operator, restrictions are implemented in the mjsonrpc

code, i.e. can start/stop runs, start/stop programs, but cannot make any

changes, i.e. cannot write to ODB

level 3 - read-only user - only mjsonrpc calls that do not change anything are

permitted.

(to implement level 2, obviously, the "start run" mjsonrpc call has to be

changed to accept the run comments, current code writes them to odb directly and

that would fail).

First step towards implementing this was made today. Ben and Derek figured out

the apache incantation to pass the logged user name to MIDAS and I added

decoding of this user name in mhttpd. I do not do anything with it, yet.

In apache config, one change is needed:

> For Apache, add this line in your VirtualHost section (tested as working):

> RequestHeader set X-Remote-User %{REMOTE_USER}s

https://daq00.triumf.ca/DaqWiki/index.php/Ubuntu#Install_apache_httpd_proxy_for_midas_and_elog

K.O.
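
The four levels can be expressed as a small lookup table. The Python sketch below is not mhttpd or mjsonrpc code: the `Level` enum, the three call categories ("read", "control", "write") and `is_allowed` are hypothetical names, and the assignment of real mjsonrpc methods to categories is deliberately left out.

```python
from enum import IntEnum


class Level(IntEnum):
    ROOT = 0        # root user, as now
    USER = 1        # restrictions only in javascript, server allows everything
    OPERATOR = 2    # may start/stop runs and programs, may not write to ODB
    READ_ONLY = 3   # only calls that do not change anything


# Hypothetical call categories; real methods would be sorted into them.
READ, CONTROL, WRITE = "read", "control", "write"

ALLOWED = {
    Level.ROOT: {READ, CONTROL, WRITE},
    Level.USER: {READ, CONTROL, WRITE},
    Level.OPERATOR: {READ, CONTROL},
    Level.READ_ONLY: {READ},
}


def is_allowed(level: int, category: str) -> bool:
    """Server-side check: may a user at `level` issue a call of `category`?"""
    return category in ALLOWED[Level(level)]


for lvl in Level:
    print(lvl.name, sorted(ALLOWED[lvl]))
```

In this model levels 0 and 1 look identical to the server, which mirrors the statement that level 1 restrictions are implemented in javascript only.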

09 Apr 2026, David Perez Loureiro, Forum, Migrate Legacy code to current Midas version

I am an applied physicist at Canadian Nuclear Laboratories and am in the process

of migrating the full experiment configuration, including the front-end interface,

of the CRIPT muon tomography detector from a legacy version of MIDAS (SVN Rev.

5238, circa 2012) to a more modern release.

The current system runs on Scientific Linux 6 and very old hardware. Due to

substantial changes in the MIDAS codebase over the years, I have encountered

multiple compatibility issues during the migration. I have also attempted to build

and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern

Linux system (Ubuntu 24.04), but without success.

Could you please advise on the recommended approach for upgrading or refactoring

legacy MIDAS front-end code to the current framework? Any guidance or best

practices would be greatly appreciated.

Many thanks in advance.

David Perez Loureiro, PhD (he/him)

Applied Physicist, Applied Physics Branch

Canadian Nuclear Laboratories

09 Apr 2026, Nick Hastings, Forum, Migrate Legacy code to current Midas version

> I am an applied physicist at Canadian Nuclear Laboratories and am in the process

> of migrating the full experiment configuration, including the front-end interface,

> of the CRIPT muon tomography detector from a legacy version of MIDAS (SVN Rev.

> 5238, circa 2012) to a more modern release.

> The current system runs on Scientific Linux 6 and very old hardware. Due to

> substantial changes in the MIDAS codebase over the years, I have encountered

> multiple compatibility issues during the migration. I have also attempted to build

> and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern

> Linux system (Ubuntu 24.04), but without success.

I suggest updating the midas version. You should be able to get to at least midas 2024-12-a

without breaking anything.

> Could you please advise on the recommended approach for upgrading or refactoring

> legacy MIDAS front-end code to the current framework? Any guidance or best

> practices would be greatly appreciated.

Start here: https://daq00.triumf.ca/MidasWiki/index.php/Changelog

The 2019-06 release was the c -> c++ transition. Read that section carefully.

The 2020-12 update also had a change that you will also likely want to update your FEs for.

You will also likely encounter quite a few non-midas issues related to the newer compilers.

Updates to your front ends may include things like needing to use "const char*" instead of "char*",

adding extra headers like <cstring> and <cstdlib>, using ctime_r() instead of ctime(), etc.

Post back if you have specific questions.

P.S. I'm maintaining a number of midas FEs mostly written around 2007-2009.

These were running on SL5 and SL6 for many years and are now on Alma 9 with

midas 2024-12-a.

16 Apr 2026, Konstantin Olchanski, Forum, Migrate Legacy code to current Midas version

> I am migrating the full CRIPT muon tomography detector from MIDAS (SVN Rev.

> 5238, circa 2012) to a more modern release.

> The current system runs on Scientific Linux 6 and very old hardware.

Right, good vintage midas and linux. But in the current security environment, we must run a currently supported OS (and MIDAS), and we must never fall off the yearly/bi-yearly OS upgrade treadmill.

How old is your computer hardware and do you plan to update it as well? If your OS is installed on an HDD, moving to an SSD would definitely be good. If you are short on money, a RaspberryPi5 with 16GB RAM may be good enough for what you have.

Anyhow, the new OS choice would be Ubuntu 24 or Debian 13. I do not recommend Red Hat based OSes (vanilla RHEL, Fedora, Alma, Rocky), they have become niche OSes with minimal vendor and community support.

> Due to substantial changes in the MIDAS codebase over the years ...

The big change in MIDAS land is the move to c++, then c++11, then c++17, and the move from vanilla make to cmake.

The MIDAS API has been reasonably stable since then, but very old MIDAS frontends would fail to build with the latest compilers because of changes in the c++ language and changes in the c and c++ libraries.

> I have encountered multiple compatibility issues during the migration. I have also attempted to

> build and run the legacy MIDAS version and the front-end code using GCC 4.8 on a modern Linux system

> (Ubuntu 24.04), but without success.

this is non-viable, latest C/C++ compilers reject perfectly good SL6-era C/C++ code, old MIDAS would

not compile, old frontends would not compile.

> Could you please advise on the recommended approach ...

What you are doing, we have done several times with TRIUMF experiments,

updating SL6 and CentOS-7 MIDAS instances to current MIDAS, C++ and OS:

1) new computer with Ubuntu 24 (or Debian or Raspbian). (U-26 will come out roughly in August, for the purposes of this discussion).

2) new MIDAS. we generally recommend the head of the develop branch, but older tagged versions are okay, too.

3) apache https proxy, etc, for secure browser connections, see

https://daq00.triumf.ca/DaqWiki/index.php/Ubuntu#Install_apache_httpd_proxy_for_midas_and_elog

4) reload your old ODB into the new MIDAS

5) your old history, etc should work

6a) build your old frontends, this will be a chore, but if you look at the compile errors, you will see that most changes are very mechanical (i.e. const char*, etc). the biggest hassle is to make your old C/C++ code build with a current C++17 compiler, a smaller hassle is to update for minor changes in the mfe.h API.

6b) bite the bullet and rewrite your frontends using the C++ tmfe API: start with tmfe_example_everything.cxx, remove what is unnecessary, add what is required. pretty straightforward, I can guide you through this (contact me directly by email).

7) minor tweaks to mlogger, mhttpd and history settings

8) rewrite all custom pages to the current mjsonrpc API

Best of luck, if you have more questions, please ask here or by direct email.

K.O.

05 Feb 2026, Konstantin Olchanski, Bug Report, omnibus bugs from running DarkLight

We finished running the DarkLight experiment and I am reporting accumulated bugs that we have run into.

1) history plots on 12 hrs, 24 hrs tend to hang with "page not responsive". most plots have 16-20 variables,

which are recorded at 1/sec interval. (yes, we must see all the variables at the same time and yes, we want to

record them with fine granularity).

2) starting runs gets into a funny mode if a GEM frontend aborts (hardware problems), transition page reports

"wwrrr, timeout 0", and stays stuck forever, "cancel transition" does nothing. observe it goes from "w"

(waiting) to "r" (RPC running) without a "c" (connecting...) and timeout should never be zero (120 sec in

ODB).

3) ODB editor clicking on hex number versus decimal number no longer allows editing in hex, Stefan implemented

this useful feature and it worked for a while, but now seems broken.

4) ODB editor "right click" to "delete" or "rename" key does not work, the right-click menu disappears

immediately before I can use it (dl-server-2), click on item (it is now blue), right-click menu disappears

before I can use it (daq17). it looks like a timing or race condition.

5) ODB editor "create link" link target name is limited to 32 bytes, links cannot be created (dl-server-2), ok

on daq17 with current MIDAS.

6) MIDAS on dl-server-2 is "installed" in such a way that there is no connection to the git repository, no way

to tell what git checkout it corresponds to. Help page just says "branch master", git-revision.h is empty. We

should discourage such use of MIDAS and promote our "normal way" where for all MIDAS binary programs we know

what source code and what git commit was used to build them.

6a) MIDAS on dl-server-2 had a pretty much non-functional history display, I reported it here, Stefan provided

a fix, I manually retrofitted it into dl-server-2 MIDAS and we were able to run the experiment. (good)

6b) bug (5) suggests that there are more bugs being introduced and fixed without any notice to other midas

users (via this forum or via the bitbucket bug tracker).

K.O. |

06 Feb 2026, Stefan Ritt, Bug Report, omnibus bugs from running DarkLight

|

Thanks for the detailed report. Let me reply one-by-one.

> 1) history plots on 12 hrs, 24 hrs tend to hang with "page not responsive". most plots have 16-20 variables,

> which are recorded at 1/sec interval. (yes, we must see all the variables at the same time and yes, we want to

> record them with fine granularity).

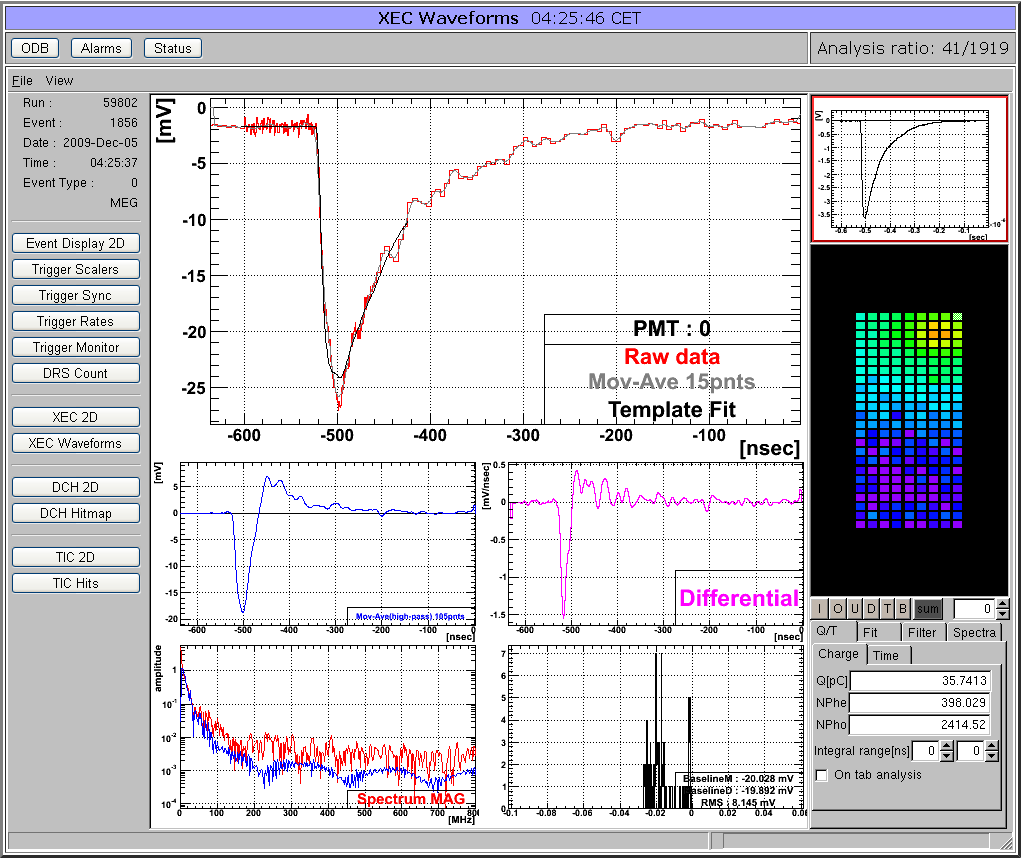

Attached is a similar plot. 8 values recorded every second, displayed for 24h. The backend is actually a Raspberry Pi! I see no issues there. Do you have

the current history version which does the re-binning? Actually the plot below is still without rebinning (see the "1" at the top right), and it contains ~72000 points x 8. The browser does not have any issue

with it.

Stefan |

12 Feb 2026, Stefan Ritt, Bug Report, omnibus bugs from running DarkLight

|

Now I had a similar case where the browser froze when showing 24h of data. It turned out that 80k points are a bit much. I changed the code so that it starts binning when showing 8h or more. This is not a perfect solution. The code should check at which interval data is written, then automatically start binning when approaching 4000 points or more. That would however require more complicated code, so I leave it as it is right now. Feedback welcome.
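As a rough illustration of the interval-aware approach, here is a minimal C++ sketch. It is not the actual history plotting code; the function names and the default target of 4000 points are assumptions taken from the description in this post. It estimates the raw point count from the displayed time window and the write interval, picks a bin size that keeps the plotted count at or below the target, and averages each bin.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: how many raw samples to merge into one plotted point
// so that a window of `window_sec` seconds, written every `interval_sec`
// seconds, yields at most `max_points` points.
std::size_t bin_size_for(double window_sec, double interval_sec, std::size_t max_points = 4000)
{
   assert(interval_sec > 0 && max_points > 0);
   const double n_raw = window_sec / interval_sec;
   if (n_raw <= static_cast<double>(max_points))
      return 1; // few enough points, plot them unbinned
   // round up so the binned count never exceeds max_points
   return static_cast<std::size_t>(std::ceil(n_raw / static_cast<double>(max_points)));
}

// Average consecutive groups of `bin` samples (the last group may be shorter).
std::vector<double> rebin_mean(const std::vector<double>& y, std::size_t bin)
{
   assert(bin > 0);
   std::vector<double> out;
   out.reserve((y.size() + bin - 1) / bin);
   for (std::size_t i = 0; i < y.size(); i += bin) {
      const std::size_t end = std::min(i + bin, y.size());
      double sum = 0;
      for (std::size_t j = i; j < end; j++)
         sum += y[j];
      out.push_back(sum / static_cast<double>(end - i));
   }
   return out;
}
```

With these assumptions, 24 h of data written once per second (86400 samples) gets a bin size of 22, while a 1 h window is left unbinned. Averaging is only one choice; a min/max envelope per bin would preserve spikes better.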

Stefan |

06 Feb 2026, Stefan Ritt, Bug Report, omnibus bugs from running DarkLight

|

> 3) ODB editor clicking on hex number versus decimal number no longer allows editing in hex, Stefan implemented

> this useful feature and it worked for a while, but now seems broken.

I cannot confirm. See below. There was some issue some time ago, but that has been fixed for a while. Please pull on develop and try again.

Here is the change: https://bitbucket.org/tmidas/midas/commits/882974260876529c43811c63a16b4a32395d416a

Stefan |

06 Feb 2026, Stefan Ritt, Bug Report, omnibus bugs from running DarkLight

|

> 4) ODB editor "right click" to "delete" or "rename" key does not work, the right-click menu disappears

> immediately before I can use it (dl-server-2), click on item (it is now blue), right-click menu disappears

> before I can use it (daq17). it looks like a timing or race condition.

Confirmed and fixed: https://bitbucket.org/tmidas/midas/commits/4ba30761683ac9aa558471d2d2d35ce05e72096a

/Stefan |

06 Feb 2026, Stefan Ritt, Bug Report, omnibus bugs from running DarkLight

|

> 5) ODB editor "create link" link target name is limited to 32 bytes, links cannot be created (dl-server-2), ok

> on daq17 with current MIDAS.

Works for me with the current version.

> 6) MIDAS on dl-server-2 is "installed" in such a way that there is no connection to the git repository, no way

> to tell what git checkout it corresponds to. Help page just says "branch master", git-revision.h is empty. We

> should discourage such use of MIDAS and promote our "normal way" where for all MIDAS binary programs we know

> what source code and what git commit was used to build them.

Not sure if you have seen it. I made an "install" script to clone, compile and install midas. Some people use this already. Maybe give it a shot. Might need

adjustment for different systems, I certainly haven't covered all corner cases. But on a RaspberryPi it's then just one command to install midas, modify

the environment, install mhttpd as a service and load the ODB defaults. I know that some people want it "their way" and that's ok, but for the novice user

that might be a good starting point. It's documented here: https://daq00.triumf.ca/MidasWiki/index.php/Install_Script

The install script is plain shell, so it should be easy to understand.

> 6a) MIDAS on dl-server-2 had a pretty much non-functional history display, I reported it here, Stefan provided

> a fix, I manually retrofitted it into dl-server-2 MIDAS and we were able to run the experiment. (good)

>

> 6b) bug (5) suggests that there is more bugs being introduced and fixed without any notice to other midas

> users (via this forum or via the bitbucket bug tracker).

If I would notify everybody about a new bug I introduced, I would know that it's a bug and I would not introduce it ;-)

For all the fixes I encourage people to check the commit log. Doing an elog entry for every bug fix would be considered spam by many people because

that can be many emails per week. The commit log is here: https://bitbucket.org/tmidas/midas/commits/branch/develop

If somebody volunteers to consolidate all commits and make a monthly digest to be posted here, I'm all in favor, but I'm not that individual.

Stefan |

23 Jan 2026, Mathieu Guigue, Info, Homebrew support for midas

|

Dear all,

For my personal convenience, I started to add a Homebrew formula for midas (*):

https://github.com/guiguem/homebrew-tap/blob/main/Formula/midas.rb

It is particularly convenient for deployment, as it automatically pulls in all the right dependencies; for macOS (**), bottles are already available.

The installation would then be

brew tap guiguem/tap

brew install midas

I thought I would share it here, in case it is helpful to someone else (***).

This was tested rather extensively, including the development of manalyzer modules using this bottled version as backend.

A possible upgrade (if people are interested) would be to develop/deploy a "mainstream" midas version (and I would rename mine "midas-mod").

Cheers

Mathieu

-----

Notes:

(*) The version installed by this formula is a very slightly modified version of midas, designed to support more than 100 front-ends (needed for HK).

See commits here:

https://gitlab.in2p3.fr/hk/clocks/midas/-/commit/060b77afb38e38f9a3155d2606860f12d680f4de

https://gitlab.in2p3.fr/hk/clocks/midas/-/commit/1da438ad1946de7ba697e849de6a6675ac45ebb8

As far as I recall, this version might not be compatible with the main midas one.

(**) I also have some stuff for Ubuntu, but Ubuntu seems to do additional linkage to curl which needs to be handled (easy). That being said, the installation from sources works fine!

(***) Some oddities were uncovered, such as the fact that the build_interface paths pointing to the source include directory still appear in the midasConfig.cmake files (leading to issues in brew). This was fixed by replacing the faulty path with the final installation location. Maybe this should be fixed? |

23 Jan 2026, Stefan Ritt, Info, Homebrew support for midas

|

Hi Mathieu,

thanks for your contribution. Have you looked at the install.sh script I developed last week:

https://daq00.triumf.ca/MidasWiki/index.php/Install_Script

which basically does the same, plus it modifies the environment and installs mhttpd as a service.

Actually I modeled the installation after the way Homebrew is installed in the first place (using curl).

I wonder if the two things can kind of be integrated. Would be great to get with brew always the newest midas version, and it would also

check and modify the environment.

If you tell me exactly what is wrong in MidasConfig.cmake.in I'm happy to fix it.

Best,

Stefan |

23 Jan 2026, Mathieu Guigue, Info, Homebrew support for midas

|

Thanks Stefan!

Actually, these two approaches are slightly different I guess:

- The installation script you are linking manages the installation and the subsequent steps, but doesn't manage the dependencies: for instance on my machine, it didn't find root and so manalyzer is built without root support. Maybe this is just something to adapt? Brew on the other hand manages root and so knows how to link these two together.

- The nice thing I like about brew is that one can "ship bottles", aka compiled versions of the code; it is great and fast for deployment and avoids compilation issues.

- I like that your setup does deploy and launch all the necessary executables! I know brew can do this too via brew services (see an example here: https://github.com/Homebrew/homebrew-core/blob/HEAD/Formula/r/rabbitmq.rb#L83 ), maybe worth investigating...?

- Brew relies on code tagging to better manage the bottles, so that it uses the tag to get a well-defined version of the code and give a name to the version. I had to implement my own tags, e.g. midas-mod-2025-12-a, to get a release. I am not sure how to do this in the case of midas, where the tags are not that frequent...

Thank you for the feedback, I will make the modifications (aka naming my formula "midas-mod") so that it doesn't collide with a future official midas one.

Concerning the MidasConfig.cmake issue, this is what I need (note that the INTERFACE_INCLUDE_DIRECTORIES is pointing to /opt/homebrew/Cellar/midas/midas-mod-2025-12-a/):

set_target_properties(midas::midas PROPERTIES
  INTERFACE_COMPILE_DEFINITIONS "HAVE_CURL;HAVE_MYSQL;HAVE_SQLITE;HAVE_FTPLIB"
  INTERFACE_COMPILE_OPTIONS "-I/opt/homebrew/Cellar/mariadb/12.1.2/include/mysql;-I/opt/homebrew/Cellar/mariadb/12.1.2/include/mysql/mysql"
  INTERFACE_INCLUDE_DIRECTORIES "/opt/homebrew/Cellar/midas/midas-mod-2025-12-a/;${_IMPORT_PREFIX}/include"
  INTERFACE_LINK_LIBRARIES "/opt/homebrew/opt/zlib/lib/libz.dylib;-lcurl;-L/opt/homebrew/Cellar/mariadb/12.1.2/lib/ -lmariadb;/opt/homebrew/opt/sqlite/lib/libsqlite3.dylib"
)

whereas by default INTERFACE_INCLUDE_DIRECTORIES points to the source code location (in the case of brew, something like /private/<some-hash> ).

Brew deletes the source code at the end of the installation, whereas midas seems to rely on the fact that the source code is still present...

Does it help?

A way to fix this is to search for this "/private" path and replace it, but this isn't ideal I guess...

This is what I did in the midas formula:

--------

# Fix broken CMake export paths if they exist
cmake_files = Dir["#{lib}/**/*manalyzer*.cmake"]
cmake_files.each do |file|
  if File.read(file).match?(%r{/private/tmp/midas-[^/"]+})
    inreplace file, %r{/private/tmp/midas-[^/"]+}, prefix.to_s
  end
  inreplace file, %r{/tmp/midas-[^/"]+}, prefix.to_s if File.read(file).match?(%r{/tmp/midas-[^/"]+})
end

cmake_files = Dir["#{lib}/**/*midas*.cmake"]
cmake_files.each do |file|
  if File.read(file).match?(%r{/private/tmp/midas-[^/"]+})
    inreplace file, %r{/private/tmp/midas-[^/"]+}, prefix.to_s
  end
  inreplace file, %r{/tmp/midas-[^/"]+}, prefix.to_s if File.read(file).match?(%r{/tmp/midas-[^/"]+})
end

-----

I guess this code could be changed into some bash commands and added to your script?

Thank you very much again!

Mathieu

> Hi Mathieu,
>
> thanks for your contribution. Have you looked at the install.sh script I developed last week:
>
> https://daq00.triumf.ca/MidasWiki/index.php/Install_Script
>
> which basically does the same, plus it modifies the environment and installs mhttpd as a service.
>
> Actually I modeled the installation after the way Homebrew is installed in the first place (using curl).
>
> I wonder if the two things can kind of be integrated. Would be great to get with brew always the newest midas version, and it would also
> check and modify the environment.
>
> If you tell me exactly what is wrong MidasConfig.cmake.in I'm happy to fix it.
>
> Best,
> Stefan |

26 Jan 2026, Stefan Ritt, Info, Homebrew support for midas

|

> Actually, these two approaches are slightly different I guess:

> - the installation script you are linking manages the

> installation and the subsequent steps, but doesn't manage the dependencies: for instance on my machine, it didn't find root and so manalyzer

> is built without root support.

> Maybe this is just something to adapt?

Yes indeed. From your perspective, you probably always want ROOT with MIDAS. But at PSI here we have several installations where we do not

need ROOT. These are mainly beamline control PCs which just connect to EPICS or pump station controls replacing Labview installations. All

graphics there are handled with the new mplot graphs, which are better in some cases.

I therefore added a check into install.sh which tells you explicitly if ROOT is found and included or not. Then it's up to the user to choose to

install ROOT or not.

> Brew on the other hand manages root and so knows how to link these two

> together.

If you really need it, yes.

> - The nice thing I like about brew is that one can "ship bottles" aka compiled version of the code; it is great and fast for

> deployment and avoid compilation issues.

> - I like that your setup does deploy and launch all the necessary executables ! I know brew can do

> this too via brew services (see an example here: https://github.com/Homebrew/homebrew-core/blob/HEAD/Formula/r/rabbitmq.rb#L83 ), maybe worth

> investigating...?

Indeed this is an advantage of brew, and I wholeheartedly support it therefore. If you decide to support this for the midas

community, I would like you to document it at

https://daq00.triumf.ca/MidasWiki/index.php/Installation

Please talk to Ben <bsmith@triumf.ca> who manages the documentation and can give you write access there. The downside is that you will

then become the supporter for the brew package, and all user requests will be forwarded to you as long as you are willing to maintain the package ;-)

> - Brew relies on code tagging to better manage the bottles, so that it uses the tag to get a well-defined version of the

> code and give a name to the version.

> I had to implement my own tags e.g. midas-mod-2025-12-a to get a release.

> I am not sure how to do in the

> case of midas where the tags are not that frequent...

Yes we always struggle with the tagging (what is a "release", when should we release, ...). Maybe it's the simplest if we tag once per month

blindly with midas-2026-02a or so. In the past KO took care of the tagging, he should reply here with his thoughts.

> Thank you for the feedback, I will make the modifications (aka naming my formula

> ``midas-mod'') so that it doesn't collide with a future official midas one.

Nope. The idea is that YOU do the future official midas release from now on ;-)

> Concerning the MidasConfig.cmake issue, this is what I need ...

Let's take this offline not to spam others.

Best,

Stefan |

20 Jan 2026, Stefan Ritt, Info, New tabbed custom pages

|

Tabbed custom pages have been implemented in MIDAS. Below you see an example. The documentation

is here:

https://daq00.triumf.ca/MidasWiki/index.php/Custom_Page#Tabbed_Pages

Stefan |

14 Jan 2026, Derek Fujimoto, Bug Report, DEBUG messages not showing and related

|

I have an application where I want to (optionally) send my own debugging messages to the midas.log file, but am having some problems with this:

* Messages with type MT_DEBUG don't show up in midas.log or on the messages page (calling cm_msg from python)

* messages.html is missing the DEBUG filter option

* Messages sent to other log files (not midas.log) don't get message banners on the web page. Is this intentional?

So I think either there is a bug, or I need to start MIDAS with some flag to enable debugging. Looking at the source, I don't see why these messages wouldn't get logged.

Any insight would be appreciated! |

14 Jan 2026, Stefan Ritt, Bug Report, DEBUG messages not showing and related

|

MT_DEBUG messages are there for debugging, not logging. They only go into the SYSMSG buffer and NOT to the log file. If you want anything logged, just use MT_INFO.

Not sure if that's missing in the documentation. Anyhow, here are my original ideas (from 1995 ;-) )

MT_ERROR

Error message, to be displayed in red

MT_INFO

Info or status message

MT_DEBUG

Only sent to SYSMSG buffer, not to midas.log file. Handy if you produce lots of messages and don't want to flood the message file. Plus it does not change the timing of your app, since the SYSMSG buffer is much faster than writing

to a file.

MT_USER

Message generated interactively by a user, like in the chat window or via the odbedit "msg" command

MT_LOG

Messages which are only logged but not put into the SYSMSG buffer

MT_TALK

Messages which should go through the speech synthesis in the browser and are "spoken"

MT_CALL

Message which would be forwarded to the user via a messaging app (historically this was an actual analog telephone call via a modem ;-) )

If that is missing in the documentation, please feel free to copy/paste it to the appropriate place.
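The routing described in the list can be summarized in a small C++ sketch. This is only an illustration and not the MIDAS implementation: the enum and the `route_for` function are hypothetical and do not reuse the definitions from midas.h. Only the DEBUG and LOG rows are stated explicitly above; sending every other type to both destinations is an assumption.

```cpp
// Illustrative message types mirroring the list above (hypothetical enum,
// not the MT_* definitions from midas.h).
enum class MsgType { Error, Info, Debug, User, Log, Talk, Call };

struct Route {
   bool to_sysmsg;  // delivered to the SYSMSG buffer
   bool to_logfile; // written to midas.log
};

Route route_for(MsgType t)
{
   switch (t) {
   case MsgType::Debug:
      return {true, false}; // SYSMSG buffer only, never midas.log
   case MsgType::Log:
      return {false, true}; // logged only, not put into SYSMSG
   default:
      return {true, true};  // assumption: all other types go to both
   }
}
```

Under this reading, a message that must appear in midas.log should be sent with the INFO type rather than DEBUG.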

Stefan |

14 Jan 2026, Derek Fujimoto, Bug Report, DEBUG messages not showing and related

|

Ok thanks for the quick and clear response!

Derek

> MT_DEBUG messages are there for debugging, not logging. They only go into the SYSMSG buffer and NOT to the log file. If you want anything logged, just use MT_INFO.

>

> Not sure if that's missing in the documentation. Anyhow, here are my original ideas (from 1995 ;-) )

>

> MT_ERROR

> Error message, to be displayed in red

>

> MT_INFO

> Info or status message

>

> MT_DEBUG

> Only sent to SYSMSG buffer, not to midas.log file. Handy if you produce lots of messages and don't want to flood the message file. Plus it does not change the timing of your app, since the SYSMSG buffer is much faster than writing

> to a file.

>

> MT_USER

> Message generated interactively by a user, like in the chat window or via the odbedit "msg" command

>

> MT_LOG

> Messages which are only logged but not put into the SYSMSG buffer

>

> MT_TALK

> Messages which should go through the speech synthesis in the browser and are "spoken"

>

> MT_CALL

> Message which would be forwarded to the user via a messaging app (historically this was an actual analog telephone call via a modem ;-) )

>

> If that is missing in the documentation, please feel free to copy/paste it to the appropriate place.

>

>

> Stefan |

09 Jan 2026, Stefan Ritt, Forum, MIDAS installation

|

Since we now have many RaspberryPi based control systems running at our lab with midas, I want to

streamline the midas installation, such that a non-expert can install it on these devices.

First, midas has to be cloned under "midas" in the user's home directory with

git clone https://bitbucket.org/tmidas/midas.git --recurse-submodules

For simplicity, this puts midas right into /home/<user>/midas, and not into any "packages" subdirectory

which I believe is not necessary.

Then I wrote a setup script midas/midas_setup.sh which does the following:

- Add midas environment variables to .bashrc / .zshenv depending on the shell being used

- Compile and install midas to midas/bin

- Load an initial ODB which allows insecure http access to port 8081

- Install mhttpd as a system service and start it via systemctl

Since I'm not a linux system expert, the current file might be a bit clumsy. I know that automatic shell

detection can be made much more elaborate, but I wanted a script which can easily be understood even by

non-experts and adapted slightly if needed.

If you know about shell scripts and linux administration, please have a quick look at the attached script

and give me any feedback.

Stefan |

10 Jan 2026, Marius Koeppel, Forum, MIDAS installation

|

Dear Stefan,

That's a great idea. For a private home automation project using a Raspberry Pi Zero, I used the following setup:

https://github.com/makoeppel/midasHome/

This server has been running for about a year now and reports the temperature in my home. Looking at your script, I think we are conceptually doing the same thing.

I see three parts I would do slightly differently:

1. I would create an .env file to hold the variables:

export PATH="$HOME/midas/bin:$PATH"

export MIDASSYS="$HOME/midas"

export MIDAS_DIR="$HOME/online"

export MIDAS_EXPT_NAME="Online"

2. For odbedit -c "load midas_setup.odb" > /dev/null

I would consider making this a bit more explicit (using odbedit) so users can change the configuration if needed, possibly by introducing a .conf file.

3. In my project, I used the MIDAS Python bindings, which are currently missing in your script:

export PYTHONPATH=$PYTHONPATH:$MIDASSYS/python

I also have one additional comment regarding Docker. I think it would make sense to support a Docker image for MIDAS. This would give non-expert users an even simpler setup. I created a related project some time ago:

https://github.com/makoeppel/midasDocker

I'd be happy to help with this part as well.

Best regards,

Marius |

13 Jan 2026, Stefan Ritt, Forum, MIDAS installation

|

Thanks for your feedback. I reworked the installation script, and now also called it "install.sh" since it also includes the git clone. I modeled it after

homebrew a bit (https://brew.sh). This means you can now run the script on a pristine linux system with:

/bin/bash -c "$(curl -sS https://bitbucket.org/tmidas/midas/raw/HEAD/install.sh)"

It contains three defaults for MIDASSYS, MIDAS_DIR and MIDAS_EXPT_NAME, but when you run it, you can overwrite these

defaults interactively. The script creates all directories, clones midas, compiles and installs it, installs and runs mhttpd as a system

service, then starts the logger and the example frontend. I also added your PYTHONPATH variable. The RC file is now automatically

detected.

Yes one could add more config files, but I want to have this basic install as simple as possible. If more things are needed, they

should be added as separate scripts or .ODB files.

Please have a look and let me know what you think about it. I tested it on a RaspberryPi, but not yet on other systems.

Stefan |

13 Jan 2026, Stefan Ritt, Forum, MIDAS installation

|

I put the documentation under

https://daq00.triumf.ca/MidasWiki/index.php/Install_Script

Would be good if anybody could check that.

Stefan |

04 Jan 2026, Stefan Ritt, Info, Ad-hoc history plots of slow control equipment

|

By popular demand and during some quiet holidays, I implemented ad-hoc history plots. To enable this

for a certain equipment, put

/Equipment/<name>/Settings/History buttons = true

into the ODB. You will then see a graph button after each variable. Pressing this button reveals the history

for this variable, see attachment.

Stefan |

17 Dec 2025, Derek Fujimoto, Info, mplot updates

|

Hello everyone,

Stefan and I have made a few updates to mplot to clean up some of the code and make it more usable directly from Javascript. With one exception, this does not change the html interface. The following describes changes up to commit cd9f85c.

Breaking Changes:

- The idea is to have a "graph" be the overarching figure object, whereas the "plot" is the line or points associated with a single dataset.

- Some internal variable names have been changed to reflect this while minimizing breaking changes

- defaultParam renamed defaultGraphParam.

- There is no longer an initialized defaultParam.plot[0], these defaults are now defaultPlotParam which is a separate global variable

- MPlotGraph constructor signature MPlotGraph(divElement, param) changed to MPlotGraph(divElement, graphParam)

- HTML key data-bgcolor changed to data-zero-color as the former was misleading

New Features

- New addPlot() function.

- While the functionality of setData is preserved you can now use addPlot(plotParam) to add a new plot to the graph with minimal copying of the old defaultParam.plot[0]

- Minimal example, from plot_example.html: given some div container with id "P6":

let d = document.getElementById("P6"); // get div

d.mpg = new MPlotGraph(d, { title: { text: "Generated" }}); // make graph

d.mpg.addPlot( { xData: [0, 1, 2, 3, 4], yData: [10, 12, 12, 14, 11] } ); // add plot to the graph

- modifyPlot() and deletePlot() still to come

- New line styles: none, solid, dashed, dotted

- Barplot-style category plots

|

08 Dec 2025, Konstantin Olchanski, Bug Report, odbxx memory leak with JSON ODB dump

|

I was testing odbxx with manalyzer, decided to print an odb value in every event,

and it worked fine in online mode, but bombed out when running from a data file

(JSON ODB dump). The following code has a memory leak. No idea if XML ODB dump

has the same problem.

int memory_leak()
{
   midas::odb::set_odb_source(midas::odb::STRING, std::string(run.fRunInfo->fBorOdbDump.data(), run.fRunInfo->fBorOdbDump.size()));

   while (1) {
      int time = midas::odb("/Runinfo/Start time binary");
      printf("time %d\n", time);
   }
}

K.O. |

09 Dec 2025, Stefan Ritt, Bug Report, odbxx memory leak with JSON ODB dump

|

Thanks for reporting this. It was caused by a

MJsonNode* node = MJsonNode::Parse(str.c_str());

not followed by a

delete node;

I added that now in odb::odb_from_json_string(). Can you try again?

Stefan |

09 Dec 2025, Konstantin Olchanski, Bug Report, odbxx memory leak with JSON ODB dump

|

> Thanks for reporting this. It was caused by a

>

> MJsonNode* node = MJsonNode::Parse(str.c_str());

>

> not followed by a

>

> delete node;

>

> I added that now in odb::odb_from_json_string(). Can you try again?

>

> Stefan

Close, but no cigar: the node you delete is not the node you got from Parse(), see "node = subnode;".

If I delete the node returned by Parse(), I confirm the memory leak is gone.
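One way to make this class of bug harder to write is to let a smart pointer own the root and use a separate non-owning pointer for walking down the tree. The following is a minimal, self-contained C++ sketch of that idea. The `Node` type, `parse_dump()` and `read_start_time()` are hypothetical stand-ins for illustration only; this is not the MJsonNode API and not the odbxx code.

```cpp
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical stand-in for a parsed JSON tree; this is NOT the MJsonNode API.
struct Node {
   static int live; // number of Node objects currently alive, for the demonstration
   std::map<std::string, std::unique_ptr<Node>> children;
   int value = 0;
   Node() { ++live; }
   ~Node() { --live; }
   Node(const Node&) = delete;
   Node& operator=(const Node&) = delete;
};
int Node::live = 0;

// Pretend parser: builds root -> "Runinfo" -> "Start time binary".
std::unique_ptr<Node> parse_dump()
{
   auto root = std::make_unique<Node>();
   auto runinfo = std::make_unique<Node>();
   auto start = std::make_unique<Node>();
   start->value = 1765238400; // arbitrary example timestamp
   runinfo->children["Start time binary"] = std::move(start);
   root->children["Runinfo"] = std::move(runinfo);
   return root;
}

int read_start_time()
{
   std::unique_ptr<Node> root = parse_dump(); // sole owner of the whole tree
   const Node* node = root.get();             // non-owning cursor
   node = node->children.at("Runinfo").get(); // stepping to a subnode cannot lose the root
   node = node->children.at("Start time binary").get();
   return node->value;
} // root goes out of scope here and frees the complete tree
```

After `read_start_time()` returns, the `Node::live` counter in this sketch is back at zero, which is the property the raw-pointer version with "node = subnode;" followed by "delete node;" did not have.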

BTW, it looks like we are parsing the whole JSON ODB dump (200k+) on every odbxx access. Can you parse it just once?

K.O. |

10 Dec 2025, Stefan Ritt, Bug Report, odbxx memory leak with JSON ODB dump

|

> BTW, it looks like we are parsing the whole JSON ODB dump (200k+) on every odbxx access. Can you parse it just once?

You are right. I changed the code so that the dump is only parsed once. Please give it a try.
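For readers following along, parsing once usually amounts to caching the parsed result next to the source string. Below is a minimal C++ sketch of that pattern; the `DumpSource` and `ParsedDump` names are hypothetical and the "parse" is a trivial placeholder, so this shows the caching idea only, not the actual odbxx change.

```cpp
#include <cstddef>
#include <memory>
#include <string>
#include <utility>

// Hypothetical parsed representation; real code would hold a JSON tree.
struct ParsedDump {
   std::size_t size;
};

class DumpSource {
public:
   explicit DumpSource(std::string text) : text_(std::move(text)) {}

   // Returns the parsed dump, running the (expensive) parse on first use only.
   const ParsedDump& get()
   {
      if (!parsed_) {
         // placeholder for the real parse of text_
         parsed_ = std::make_unique<ParsedDump>(ParsedDump{text_.size()});
         ++parse_count_;
      }
      return *parsed_;
   }

   int parse_count() const { return parse_count_; }

private:
   std::string text_;
   std::unique_ptr<ParsedDump> parsed_; // empty until the first get()
   int parse_count_ = 0;
};
```

Note that this sketch is not thread-safe; if several threads can call `get()` concurrently, the lazy initialization would need a lock or `std::call_once`.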

Stefan |

11 Dec 2025, Konstantin Olchanski, Bug Report, odbxx memory leak with JSON ODB dump

|

> > BTW, it looks like we are parsing the whole JSON ODB dump (200k+) on every odbxx access. Can you parse it just once?

> You are right. I changed the code so that the dump is only parsed once. Please give it a try.

Confirmed fixed, thanks! There are 2 small changes I made in odbxx.h, please pull.

K.O. |

11 Dec 2025, Stefan Ritt, Bug Report, odbxx memory leak with JSON ODB dump

|

> Confirmed fixed, thanks! There are 2 small changes I made in odbxx.h, please pull.

There was one missing enable_jsroot in manalyzer, please pull yourself.

Stefan |

12 Dec 2025, Konstantin Olchanski, Bug Report, odbxx memory leak with JSON ODB dump

|

> > Confirmed fixed, thanks! There are 2 small changes I made in odbxx.h, please pull.

> There was one missing enable_jsroot in manalyzer, please pull yourself.

pulled, pushed to rootana, thanks for fixing it!

K.O. |

09 Dec 2025, Mark Grimes, Bug Report, manalyzer fails to compile on some systems because of missing #include <cmath>

|

Hi,

We're getting errors in our build system like:

/code/midas/manalyzer/manalyzer.cxx: In member function ‘void Profiler::Begin(TARunInfo*, std::vector<TARunObject*>)’:

/code/midas/manalyzer/manalyzer.cxx:799:27: error: ‘pow’ was not declared in this scope

799 | bins[i] = TimeRange*pow(1.1,i)/pow(1.1,Nbins);

The solution is to add "#include <cmath>" at the top of manalyzer.cxx; I guess on a lot of systems the

include is implicit from some other include so doesn't cause errors. I don't have the permissions to push

branches, could this be added please?
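For illustration, here is a self-contained C++ sketch of the same expression with the header included explicitly. The function name `log_bins` and the loop bounds are assumptions made for the example; only the arithmetic is taken from the line in the error message.

```cpp
#include <cassert>
#include <cmath>   // declares std::pow directly instead of relying on another header
#include <cstddef>
#include <vector>

// Logarithmically spaced bin edges, following the expression from the error message.
std::vector<double> log_bins(double time_range, int nbins)
{
   assert(nbins > 0);
   std::vector<double> bins(static_cast<std::size_t>(nbins) + 1);
   for (int i = 0; i <= nbins; i++)
      bins[static_cast<std::size_t>(i)] = time_range * std::pow(1.1, i) / std::pow(1.1, nbins);
   return bins;
}
```

Including the header in the file that uses `pow` keeps the code independent of whatever the ROOT headers happen to pull in.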

Thanks,

Mark. |

09 Dec 2025, Konstantin Olchanski, Bug Report, manalyzer fails to compile on some systems because of missing #include <cmath>

|

> /code/midas/manalyzer/manalyzer.cxx:799:27: error: ‘pow’ was not declared in this scope

> 799 | bins[i] = TimeRange*pow(1.1,i)/pow(1.1,Nbins);

math.h added, pushed. nice catch.

implicit include of math.h came through TFile.h (ROOT v6.34.02), perhaps you have a newer ROOT

and they jiggled the include files somehow.

TFile.h -> TDirectoryFile.h -> TDirectory.h -> TNamed.h -> TString.h -> TMathBase.h -> cmath -> math.h

K.O. |

11 Dec 2025, Mark Grimes, Bug Report, manalyzer fails to compile on some systems because of missing #include <cmath>

|

Thanks. Are you happy for me to update the submodule commit in Midas to use this fix? I should have sufficient permission if you agree.

> > /code/midas/manalyzer/manalyzer.cxx:799:27: error: ‘pow’ was not declared in this scope

> > 799 | bins[i] = TimeRange*pow(1.1,i)/pow(1.1,Nbins);

>

> math.h added, pushed. nice catch.

>

> implicit include of math.h came through TFile.h (ROOT v6.34.02), perhaps you have a newer ROOT

> and they jiggled the include files somehow.

>

> TFile.h -> TDirectoryFile.h -> TDirectory.h -> TNamed.h -> TString.h -> TMathBase.h -> cmath -> math.h

>

> K.O. |

11 Dec 2025, Konstantin Olchanski, Bug Report, manalyzer fails to compile on some systems because of missing #include <cmath>

|

> TFile.h -> TDirectoryFile.h -> TDirectory.h -> TNamed.h -> TString.h -> TMathBase.h -> cmath -> math.h

reading ROOT release notes, 6.38 removed TMathBase.h from TString.h, with a warning "This change may cause errors during compilation of

ROOT-based code". Upright citizens, nice guys!

> Thanks. Are you happy for me to update the submodule commit in Midas to use this fix? I should have sufficient permission if you agree.

I am doing some last minute tests, will pull it into midas and rootana later today.

K.O. |

19 May 2025, Jonas A. Krieger, Suggestion, manalyzer root output file with custom filename including run number

|

Hi all,

Would it be possible to extend manalyzer to support custom .root file names that include the run number?

As far as I understand, the current behavior is as follows:

The default filename is ./root_output_files/output%05d.root , which can be customized by the following two command line arguments.

-Doutputdirectory: Specify output root file directory

-Ooutputfile.root: Specify output root file filename

If an output file name is specified with -O, -D is ignored, so the full path should be provided to -O.

I am aiming to write files where the filename contains sufficient information to be unique (e.g., experiment, year, and run number). However, if I specify it with -O, this would require restarting manalyzer after every run; a scenario that I would like to avoid if possible.

Please find a suggestion of how manalyzer could be extended to introduce this functionality through an additional command line argument at

https://bitbucket.org/krieger_j/manalyzer/commits/24f25bc8fe3f066ac1dc576349eabf04d174deec

Above code would allow the following call syntax: ' ./manalyzer.exe -O/data/experiment1_%06d.root --OutputNumbered '

But note that as is, it would fail if a user specifies an incompatible format such as -Ooutput%s.root .

So a safer, but less flexible option might be to instead have the user provide only a prefix, and then attach %05d.root in the code.
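A minimal sketch of this prefix-only variant, assuming a hypothetical helper MakeOutputFileName (it is not part of manalyzer or of the linked commit). Because the format string is fixed in the code, a user-supplied %s can never reach snprintf():

```cpp
#include <cstdio>
#include <string>

// Hypothetical helper, not part of manalyzer: the user supplies only a
// prefix and the code appends a fixed "%05d.root" suffix, so no
// user-controlled format string is ever passed to snprintf().
std::string MakeOutputFileName(const std::string& prefix, int runno)
{
   char suffix[32];
   std::snprintf(suffix, sizeof(suffix), "%05d.root", runno);
   return prefix + suffix;
}
```

For example, MakeOutputFileName("/data/experiment1_", 42) gives "/data/experiment1_00042.root". Run numbers with more than five digits are not truncated; they simply make the name longer.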

Thank you for considering these suggestions! |

12 Nov 2025, Jonas A. Krieger, Suggestion, manalyzer root output file with custom filename including run number

|

Hi all,

Could you please get back to me about whether something like my earlier suggestion might be considered, or if I should set up some workaround to rename files at EOR for our experiments?

https://daq00.triumf.ca/elog-midas/Midas/3042 :

-----------------------------------------------

> Hi all,

>

> Would it be possible to extend manalyzer to support custom .root file names that include the run number?

>

> As far as I understand, the current behavior is as follows:

> The default filename is ./root_output_files/output%05d.root , which can be customized by the following two command line arguments.

>

> -Doutputdirectory: Specify output root file directory

> -Ooutputfile.root: Specify output root file filename

>

> If an output file name is specified with -O, -D is ignored, so the full path should be provided to -O.

>

> I am aiming to write files where the filename contains sufficient information to be unique (e.g., experiment, year, and run number). However, if I specify it with -O, this would require restarting manalyzer after every run; a scenario that I would like to avoid if possible.

>

> Please find a suggestion of how manalyzer could be extended to introduce this functionality through an additional command line argument at

> https://bitbucket.org/krieger_j/manalyzer/commits/24f25bc8fe3f066ac1dc576349eabf04d174deec

>

> Above code would allow the following call syntax: ' ./manalyzer.exe -O/data/experiment1_%06d.root --OutputNumbered '

> But note that as is, it would fail if a user specifies an incompatible format such as -Ooutput%s.root .

>

> So a safer, but less flexible option might be to instead have the user provide only a prefix, and then attach %05d.root in the code.

>

> Thank you for considering these suggestions! |

25 Nov 2025, Konstantin Olchanski, Suggestion, manalyzer root output file with custom filename including run number

|

Hi, Jonas, thank you for reminding me about this. I hope to work on manalyzer in the next few weeks and I will review the ROOT output file name scheme.

K.O.

> Hi all,

>

> Could you please get back to me about whether something like my earlier suggestion might be considered, or if I should set up some workaround to rename files at EOR for our experiments?

>

> https://daq00.triumf.ca/elog-midas/Midas/3042 :

> -----------------------------------------------

> > Hi all,

> >

> > Would it be possible to extend manalyzer to support custom .root file names that include the run number?

> >

> > As far as I understand, the current behavior is as follows:

> > The default filename is ./root_output_files/output%05d.root , which can be customized by the following two command line arguments.

> >

> > -Doutputdirectory: Specify output root file directory

> > -Ooutputfile.root: Specify output root file filename

> >

> > If an output file name is specified with -O, -D is ignored, so the full path should be provided to -O.

> >

> > I am aiming to write files where the filename contains sufficient information to be unique (e.g., experiment, year, and run number). However, if I specify it with -O, this would require restarting manalyzer after every run; a scenario that I would like to avoid if possible.

> >

> > Please find a suggestion of how manalyzer could be extended to introduce this functionality through an additional command line argument at

> > https://bitbucket.org/krieger_j/manalyzer/commits/24f25bc8fe3f066ac1dc576349eabf04d174deec

> >

> > Above code would allow the following call syntax: ' ./manalyzer.exe -O/data/experiment1_%06d.root --OutputNumbered '

> > But note that as is, it would fail if a user specifies an incompatible format such as -Ooutput%s.root .

> >

> > So a safer, but less flexible option might be to instead have the user provide only a prefix, and then attach %05d.root in the code.

> >

> > Thank you for considering these suggestions! |

08 Dec 2025, Konstantin Olchanski, Suggestion, manalyzer root output file with custom filename including run number

|

I updated the root helper constructor to give the user more control over ROOT output file names.

You can now change it to anything you want in the module run constructor, see manalyzer_example_esoteric.cxx

Is this good enough?

struct ExampleE1: public TARunObject
{
   ExampleE1(TARunInfo* runinfo)
      : TARunObject(runinfo)
   {
#ifdef HAVE_ROOT
      // override the default ROOT output file name for this run
      if (runinfo->fRoot)
         runinfo->fRoot->fOutputFileName = "my_custom_file_name.root";
#endif
   }
};

K.O. |

17 Sep 2025, Mark Grimes, Suggestion, Get manalyzer to configure midas::odb when running offline

|

Hi,

Lots of users like the midas::odb interface for reading from the ODB in manalyzers. However, it currently
doesn't work offline without a few manual lines telling midas::odb to read from the ODB copy in the run
header. The code also gets a bit messy, since it has to work out the current filename and get midas::odb
to reopen the file currently being processed. This would be much cleaner if manalyzer set this up
automatically; user code could then be written that is completely ignorant of whether it is running
online or offline.

The change I suggest is in the `set_offline_odb` branch, commit 4ffbda6, which is simply:

diff --git a/manalyzer.cxx b/manalyzer.cxx
index 371f135..725e1d2 100644
--- a/manalyzer.cxx
+++ b/manalyzer.cxx
@@ -15,6 +15,7 @@
 #include "manalyzer.h"
 #include "midasio.h"
+#include "odbxx.h"
 //////////////////////////////////////////////////////////
@@ -2075,6 +2076,8 @@ static int ProcessMidasFiles(const std::vector<std::string>& files, const std::v
 if (!run.fRunInfo) {
 run.CreateRun(runno, filename.c_str());
 run.fRunInfo->fOdb = MakeFileDumpOdb(event->GetEventData(), event->data_size);
+ // Also set the source for midas::odb in case people prefer that interface
+ midas::odb::set_odb_source(midas::odb::STRING, std::string(event->GetEventData(), event->data_size));
 run.BeginRun();
 }

It happens at the point where the ODB record is already available and requires no effort from the user to

be able to read the ODB offline.

Thanks,

Mark. |

17 Sep 2025, Konstantin Olchanski, Suggestion, Get manalyzer to configure midas::odb when running offline

|

> Lots of users like the midas::odb interface for reading from the ODB in manalyzers.

> +#include "odbxx.h"

This is a useful improvement. Before commit of this patch, can you confirm the RunInfo destructor

deletes this ODB stuff from odbxx? manalyzer takes object life times very seriously.

There is also the issue that two different RunInfo objects would load two different ODB dumps

into odbxx. (inability to access more than 1 ODB dump is a design feature of odbxx).

This is not an actual problem in manalyzer because it only processes one run at a time

and only 1 or 0 RunInfo objects exist at any given time.

Of course with this patch extending manalyzer to process two or more runs at the same time becomes impossible.

K.O. |

18 Sep 2025, Mark Grimes, Suggestion, Get manalyzer to configure midas::odb when running offline

|

> ....Before commit of this patch, can you confirm the RunInfo destructor

> deletes this ODB stuff from odbxx? manalyzer takes object life times very seriously.

The call stores the ODB string in static members of the midas::odb class. So these will have a lifetime of the process or until they're replaced by another

call. When a midas::odb is instantiated it reads from these static members and then that data has the lifetime of that instance.
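To make the two lifetimes concrete, here is a minimal sketch using a hypothetical stand-in class OdbLike. It is not the real midas::odb implementation; it only mirrors the behaviour described above (a static source that persists until replaced, and a per-instance copy taken at construction):

```cpp
#include <string>

// Hypothetical stand-in for the behaviour described above; this is NOT
// the real midas::odb class.
class OdbLike {
   static std::string s_source;   // lives until process exit or the next set_source()
   std::string fData;             // per-instance copy, lives as long as the instance
public:
   static void set_source(const std::string& dump) { s_source = dump; }
   OdbLike() : fData(s_source) {} // snapshot of the static source at construction
   const std::string& data() const { return fData; }
};

std::string OdbLike::s_source;
```

In this sketch an instance created before a second set_source() call keeps the first dump, while instances created afterwards see the second one.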

> Of course with this patch extending manalyzer to process two or more runs at the same time becomes impossible.

Yes, I hadn't realised that was an option. For that to work I guess the aforementioned static members could be made thread local storage, and

processing of each run kept to a specific thread. Although I could imagine user code making assumptions and breaking, like storing a midas::odb as a

class member or something.
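A rough sketch of the thread-local idea, again with hypothetical names (PerThreadOdbSource and ProcessRun are made up for this example and are not odbxx code). Declaring the static member thread_local gives every thread its own copy of the source string:

```cpp
#include <string>

// Hypothetical sketch, not the real odbxx code: one ODB dump per thread.
struct PerThreadOdbSource {
   static thread_local std::string s_source;
};
thread_local std::string PerThreadOdbSource::s_source;

// Sets the source for the calling thread only and returns what that
// thread now sees; other threads keep their own value.
std::string ProcessRun(const std::string& dump)
{
   PerThreadOdbSource::s_source = dump;
   return PerThreadOdbSource::s_source;
}
```

If each run were processed on its own thread (for example via std::thread), calls to ProcessRun on different threads would not overwrite each other's source. This does not protect user code that keeps an object created on one thread and uses it from another.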