| ID | Date | Author | Topic | Subject |

|

2222

|

18 Jun 2021 |

Konstantin Olchanski | Info | 1000 Mbytes/sec through midas achieved! | > In MEG II we also kind of achieved this rate.

>

> Instead of an expensive high-grade switch, we chose a cheap "Chinese" high-grade switch.

Right. We built this DAQ system about 3 years ago and the cheap Chinese switches arrived

on the market about 1 year after we purchased the big 96 port juniper switch. Bad timing/good timing.

Actually I have a very nice 24-port 1gige switch ($2000 about 3 years ago), I could have

used 4 of them in parallel, but they were discontinued and replaced with a $5000 switch

(+$3000 for a 10gige uplink). I think I got the very last one of the cheap switches.

But not all Chinese switches are equal. We have a Ubiquiti 10gige switch, and it does

not have working end-to-end ethernet flow control. (yikes!).

BTW, for this project we could not use just any cheap switch, we must have 64 fiber SFP ports

for connecting on-TPC electronics. This narrows the market significantly and it does

not match the industry standard port counts 8-16-24-48-96.

> MikroTik CRS354-48G-4S+2Q+RM 54 port

> MikroTik CRS326-24S-2Q+RM 26 Port

We have a hard time buying this stuff in Vancouver BC, Canada. Most of our regular suppliers

are US based and there is a technology trade war still going on between the US and China.

I guess we could buy direct on alibaba, but for the risk of scammers, scalpers and iffy shipping.

> both cost in the order of 500 US$

tells one how much we overpay for US based stuff. not surprising, with how Cisco & co can afford

to buy sports arenas, etc.

> We were astonished that they don't lose UDP packets when all inputs send a packet at the

> same time, and they have to pipe them to the single output one after the other,

> but apparently the switches have enough buffers.

You probably see ethernet flow control in action. Look at the counters for ethernet pause frames

in your daq boards and in your main computer.

> (which is usually NOT written in the data sheets).

True, when I looked into this, I found a paper by somebody in Berkeley on a special

technique to measure the size of such buffers.

(The big Juniper switch has only 8 Mbytes of buffer. The current wisdom for backbone networks

is to have as little buffering as possible).

> To avoid UDP packet loss for several events, we do traffic shaping by arming the trigger only when the previous event is

> completely received by the frontend. This eliminates all flow control and other complicated methods. Marco can tell you the

> details.

We do not do this. (very bad!). When each trigger arrives, all 64+8 DAQ boards send a train of UDP packets

at maximum line speed (64+8 at 1 gige) all funneled into one 10 gige ((64+8)/10 oversubscription).

Before we got ethernet flow control to work properly, we had to throttle all the 1gige links by about 60%

to get any complete events at all. This would not have been acceptable for physics data taking.
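
The "(64+8)/10 oversubscription" above can be worked out explicitly. This is only a back-of-the-envelope sketch using the figures from the post; the function names are made up for illustration, and the "fair share" number ignores switch buffering and ethernet flow control, which is what actually absorbs the bursts.

```python
# Figures taken from the post: 64+8 DAQ boards, each on a 1 Gbit/s link,
# all funneled into a single 10 Gbit/s uplink.
N_BOARDS = 64 + 8
LINK_GBPS = 1.0
UPLINK_GBPS = 10.0


def oversubscription(n_links, link_gbps, uplink_gbps):
    """Ratio of the peak offered load to the uplink capacity."""
    return n_links * link_gbps / uplink_gbps


def fair_share_fraction(n_links, link_gbps, uplink_gbps):
    """Fraction of its line rate each link could sustain with no queueing at all."""
    return min(1.0, uplink_gbps / (n_links * link_gbps))


ratio = oversubscription(N_BOARDS, LINK_GBPS, UPLINK_GBPS)
share = fair_share_fraction(N_BOARDS, LINK_GBPS, UPLINK_GBPS)
print(f"oversubscription {ratio:.1f}x, sustainable share {share:.1%} per link")
```

The gap between the line rate and that sustainable share is what the switch buffers and pause frames have to cover while a train of packets drains.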

> Another interesting aspect: While we get the data into the frontend, we have problems in getting it through midas. Your

> bm_send_event_sg() is maybe a good approach which we should try. To benchmark the out-of-the-box midas, I run the dummy frontend

> attached on my MacBook Pro 2.4 GHz, 4 cores, 16 GB RAM, 1 TB SSD disk.

Dummy frontend is not very representative, because the limitation is the memory bandwidth

and CPU load, and a real ethernet receiver has quite a bit of both (interrupt processing,

DMA into memory, implicit memcpy() inside the socket read()).

For example, typical memcpy() speeds are between 10 and 22 Gbytes/sec for current

generation CPUs and DRAM. This translates into a total budget of 10 to 22 memcpy()

at 10gige speeds (taking 10gige as roughly 1 Gbyte/sec). Subtract from this 1 memcpy() to DMA data from ethernet into memory

and 1 memcpy() to DMA data from memory to storage. Subtract from this 2 implicit

memcpy() for read() in the frontend and write() in mlogger. (the Linux sendfile() syscall

was invented to cut them out). Subtract from this 1 memcpy() for instruction and incidental

data fetch (no interesting program fits into cache). Subtract from this memory bandwidth

for running the rest of linux (systemd, ssh, cron jobs, NFS, etc). Hardly anything

left when all is said and done. (Found it, the alphagdaq memcpy() runs at 14 Gbytes/sec,

so total budget of 14 memcpy() at 10gige speeds).
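
The memcpy() budget above is simple arithmetic and can be tallied as follows. This is a rough sketch, not a measurement: the item list is copied from the paragraph, the names `memcpy_budget` and `remaining_budget` are made up here, and the stream rate of 1 Gbyte/sec is an assumption (the post appears to round 10gige down to that; the raw line rate would be 1.25 Gbytes/sec).

```python
# Memory passes itemized in the post, each counted as one memcpy()
# worth of memory bandwidth at the data rate of the stream.
COSTS = {
    "DMA from ethernet into memory": 1,
    "DMA from memory to storage": 1,
    "implicit memcpy() in frontend read()": 1,
    "implicit memcpy() in mlogger write()": 1,
    "instruction and incidental data fetch": 1,
}


def memcpy_budget(memcpy_gbytes_per_sec, stream_gbytes_per_sec):
    """How many full passes over the data stream the memory system can afford."""
    return memcpy_gbytes_per_sec / stream_gbytes_per_sec


def remaining_budget(memcpy_gbytes_per_sec, stream_gbytes_per_sec, costs=COSTS):
    """Budget left after the unavoidable passes listed in COSTS."""
    return memcpy_budget(memcpy_gbytes_per_sec, stream_gbytes_per_sec) - sum(costs.values())


# alphagdaq figure from the post: memcpy() runs at 14 Gbytes/sec.
print(remaining_budget(14.0, 1.0))    # stream rounded to 1 Gbyte/sec
print(remaining_budget(14.0, 1.25))   # stream at the raw 10 Gbit/s line rate
```

Whatever is left has to pay for the event builder's unpacking and processing and for the rest of linux, neither of which the post puts a number on.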

And the event builder eats up 2 CPU cores to process the UDP packets at 10gige rate,

there is quite a bit of CPU-expensive data unpacking, inspection and processing

going on that cannot be cut out. (alphagdaq has 4 cores, "8 threads").

K.O.

P.S. Waiting for rack-mounted machines with AMD "X" series processors... K.O. |

|

2223

|

18 Jun 2021 |

Konstantin Olchanski | Info | 1000 Mbytes/sec through midas achieved! | > ... MEG II ... 34 crates each with 32 DRS4 digitiser chips and a single 1 Gbps readout link through a Xilinx Zynq SoC.

>

> Zynq ... embedded ethernet MAC does not support jumbo frames (always read the fine prints in the manuals!)

> and the embedded Linux ethernet stack seems to struggle when we go beyond 250 Mbps of UDP traffic.

that's an ouch. we use the altera ethernet mac, and jumbo frames are supported, but the firmware data path

was originally written assuming 1500-byte packets and it is too much work to rewrite it for jumbo frames.

we send the data directly from the FPGA fabric to the ethernet, there is an avalon/axi bus multiplexer

to split the ethernet packets to the NIOS slow control CPU. not sure if such scheme is possible

for SoC FPGAs with embedded ARM CPUs.

and yes, a 1 GHz ARM CPU will not do 10gige. You see it yourself, measure your memcpy() speed. Where

typical PC will have dual-channel 128-bit wide memory (and the Intel memory controller, famous for its

low latency), ARM SoC will have at best 64-bit wide memory (some boards are only 32-bit wide!),

with DDR3 (not DDR4) severely under-clocked (i.e. DDR3-900, etc). This is why the new Apple ARM chips

are so interesting - can Apple ARM memory controller beat the Intel x86 memory controller?

> On the receiver side, we have the DAQ server with an Intel E5-2630 v4 CPU

that's the right gear for the job. quad-channel memory with nominal "Max Memory Bandwidth 68.3 GB/s",

10 CPU cores. My benchmark of memcpy() for the much older quad-channel memory i7-4820 with DDR3-1600 DIMMs

is 20 Gbytes/sec. waiting for ARM CPU with similar specs.

> and a 10 Gbit connection to the network using an Intel X710 Network card.

> In the past, we used also a "cheap" 10 Gbit card from Tehuti but the driver performance was so bad that it could not digest more than 5 Gbps of data.

yup, same here. use Intel ethernet exclusively, even for 1gige links.

> A major modification to Konstantin scheme is that we need to calibrate all WFMs online so that a software zero suppression

I implemented hardware zero suppression in the FPGA code. I think 1 GHz ARM CPU does not have the oomph for this.

> rb_get_wp() returns almost always DB_TIMEOUT

replace rb_xxx() with std::deque<std::vector<char>> (protected by a mutex, of course). lots of stuff in the mfe.c frontend

is obsolete in the same way. check out the newer tmfe frontends (tmfe.md, tmfe.h and tmfe examples).
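
The shape of the suggested replacement, a queue of byte buffers guarded by a mutex, is sketched below. It is written in Python only to keep the example short; in a C++ frontend the same thing would be a std::deque<std::vector<char>> with a std::mutex. The class name `PacketQueue` and its methods are hypothetical and are not part of midas or tmfe.

```python
import threading
from collections import deque


class PacketQueue:
    """Unbounded FIFO of byte buffers shared between a receiver thread and a reader."""

    def __init__(self):
        self._items = deque()
        self._lock = threading.Lock()

    def push(self, packet: bytes) -> None:
        """Called by the receiver thread for every packet read from the socket."""
        with self._lock:
            self._items.append(packet)

    def pop_all(self) -> list:
        """Called by the consumer: take everything queued so far in one locked step."""
        with self._lock:
            items = list(self._items)
            self._items.clear()
        return items


q = PacketQueue()
receiver = threading.Thread(target=lambda: [q.push(bytes([i])) for i in range(3)])
receiver.start()
receiver.join()
print(q.pop_all())
```

Draining the whole queue under one lock keeps the lock hold time short and avoids a per-packet lock round trip on the consumer side. Unlike a fixed-size ring buffer, this queue grows without bound if the consumer falls behind, so a real frontend would want to watch its depth.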

> It is difficult to report three years of development in a single Elog

but quite successful at it. big thanks for your write-up. I think our info is quite useful for the next people.

K.O. |

|

2224

|

18 Jun 2021 |

Konstantin Olchanski | Info | Add support for rtsp camera streams in mlogger (history_image.cxx) | > mlogger (history_image) now supports rtsp cameras

my goodness, we will drive the video surveillance industry out of business.

> My suggestion / request would be to move the camera management out of

> mlogger and into a new program (mcamera?), so that users can choose to off

> load the CPU load to another system (I understand the OpenCV will use GPU

> decoders if available also, which can also lighten the CPU load).

every 2 years I itch to separate mlogger into two parts - data logger

and history logger.

but then I remember that the "I" in MIDAS stands for "integrated",

and "M" stands for "maximum" and I say, "nah..."

(I guess we are not maximum integrated enough to have mhttpd, mserver

and mlogger to be one monolithic executable).

There is also a line of thinking that mlogger should remain single-threaded

for maximum reliability and ease of debugging. So if we keep adding multithreaded

stuff to it, perhaps it should be split-apart after all. (anything that makes

the size of mlogger.cxx smaller is a good thing, imo).

K.O. |

|

2257

|

09 Jul 2021 |

Konstantin Olchanski | Info | cannot push to bitbucket | the day has arrived when I cannot git push to bitbucket. cloud computing rules!

I have never seen this error before and I do not think we have any hooks installed,

so it must be some bitbucket stuff. their status page says some kind of maintenance

is happening, but the promised error message is "repository is read only" or something

similar.

I hope this clears out automatically. I am updating all the cmake crud and I have no idea

which changes I already pushed and which I did not, so no idea if anything will work for

people who pull from midas until this problem is cleared out.

daq00:mvodb$ git push

X11 forwarding request failed on channel 0

Enumerating objects: 3, done.

Counting objects: 100% (3/3), done.

Delta compression using up to 12 threads

Compressing objects: 100% (2/2), done.

Writing objects: 100% (2/2), 247 bytes | 247.00 KiB/s, done.

Total 2 (delta 1), reused 0 (delta 0)

remote: null value in column "attempts" violates not-null constraint

remote: DETAIL: Failing row contains (13586899, 2021-07-10 01:13:28.812076+00, 1970-01-01

00:00:00+00, 1970-01-01 00:00:00+00, 65975727, null).

To bitbucket.org:tmidas/mvodb.git

! [remote rejected] master -> master (pre-receive hook declined)

error: failed to push some refs to 'git@bitbucket.org:tmidas/mvodb.git'

daq00:mvodb$

K.O. |

|

2258

|

11 Jul 2021 |

Konstantin Olchanski | Info | midas cmake update | I reworked the midas cmake files:

- install via CMAKE_INSTALL_PREFIX should work correctly now:

- installed are bin, lib and include - everything needed to build against the midas library

- if built without CMAKE_INSTALL_PREFIX, a special mode "MIDAS_NO_INSTALL_INCLUDE_FILES" is activated, and the include path

contains all the subdirectories needed for compilation

- -I$MIDASSYS/include and -L$MIDASSYS/lib -lmidas work in both cases

- to "use" midas, I recommend: include($ENV{MIDASSYS}/lib/midas-targets.cmake)

- config files generated for find_package(midas) now have correct information (a manually constructed subset of information

automatically exported by cmake's install(export))

- people who want to use "find_package(midas)" will have to contribute documentation on how to use it (explain the magic used to

find the "right midas" in /usr/local/midas or in /midas or in ~/packages/midas or in ~/packages/new-midas) and contribute an

example superproject that shows how to use it and that can be run from the bitbucket automatic build. (features that are not part

of the automatic build we cannot insure against breakage).

On my side, here is an example of using include($ENV{MIDASSYS}/lib/midas-targets.cmake). I posted this before, it is used in

midas/examples/experiment and I will ask ben to include it into the midas wiki documentation.

Below is the complete cmake file for building the alpha-g event builder and main control frontend. When presented like this, I

have to agree that cmake does provide positive value to the user. (the jury is still out whether it balances out against the

negative value in the extra work to "just support find_package(midas) already!").

#

# CMakeLists.txt for alpha-g frontends

#

cmake_minimum_required(VERSION 3.12)

project(agdaq_frontends)

include($ENV{MIDASSYS}/lib/midas-targets.cmake)

add_compile_options("-O2")

add_compile_options("-g")

#add_compile_options("-std=c++11")

add_compile_options(-Wall -Wformat=2 -Wno-format-nonliteral -Wno-strict-aliasing -Wuninitialized -Wno-unused-function)

add_compile_options("-DTMFE_REV0")

add_compile_options("-DOS_LINUX")

add_executable(feevb feevb.cxx TsSync.cxx)

target_link_libraries(feevb midas)

add_executable(fectrl fectrl.cxx GrifComm.cxx EsperComm.cxx JsonTo.cxx KOtcp.cxx $ENV{MIDASSYS}/src/tmfe_rev0.cxx)

target_link_libraries(fectrl midas)

#end |

|

2261

|

13 Jul 2021 |

Stefan Ritt | Info | MidasConfig.cmake usage | Thanks for the contribution of MidasConfig.cmake. May I kindly ask for one extension:

Many of our frontends require inclusion of some midas-supplied drivers and libraries

residing under

$MIDASSYS/drivers/class/

$MIDASSYS/drivers/device

$MIDASSYS/mscb/src/

$MIDASSYS/src/mfe.cxx

I guess this can be easily added by defining a MIDAS_SOURCES in MidasConfig.cmake, so

that I can do things like:

add_executable(my_fe

myfe.cxx

${MIDAS_SOURCES}/src/mfe.cxx

${MIDAS_SOURCES}/drivers/class/hv.cxx

...)

Does this make sense or is there a more elegant way for that?

Stefan |

|

2262

|

13 Jul 2021 |

Konstantin Olchanski | Info | MidasConfig.cmake usage | > $MIDASSYS/drivers/class/

> $MIDASSYS/drivers/device

> $MIDASSYS/mscb/src/

> $MIDASSYS/src/mfe.cxx

>

> I guess this can be easily added by defining a MIDAS_SOURCES in MidasConfig.cmake, so

> that I can do things like:

>

> add_executable(my_fe

> myfe.cxx

> ${MIDAS_SOURCES}/src/mfe.cxx

> ${MIDAS_SOURCES}/drivers/class/hv.cxx

> ...)

1) remove ${MIDAS_SOURCES}/src/mfe.cxx from "add_executable", add "mfe" to

target_link_libraries() as in examples/experiment/frontend:

add_executable(frontend frontend.cxx)

target_link_libraries(frontend mfe midas)

2) ${MIDAS_SOURCES}/drivers/class/hv.cxx surely is ${MIDASSYS}/drivers/...

If MIDAS is built with non-default CMAKE_INSTALL_PREFIX, "drivers" and co are not

available, as we do not "install" them. Where MIDASSYS should point in this case is

anybody's guess. To run MIDAS, $MIDASSYS/resources is needed, but we do not install

them either, so they are not available under CMAKE_INSTALL_PREFIX and setting

MIDASSYS to same place as CMAKE_INSTALL_PREFIX would not work.

I still think this whole business of installing into non-default CMAKE_INSTALL_PREFIX

location has not been thought through well enough. Too much thinking about how cmake works

and not enough thinking about how MIDAS works and how MIDAS is used. Good example

of "my tool is a hammer, everything else must have the shape of a nail".

K.O. |

|

2283

|

11 Oct 2021 |

Stefan Ritt | Info | Modification in the history logging system | A requested change in the history logging system has been made today. Previously, history values were

logged with a maximum frequency (usually once per second) but also with a minimum frequency, meaning

that values were logged for example every 60 seconds, even if they did not change. This causes a problem.

If a frontend that produces variables to be logged is inactive or has crashed, one cannot distinguish between

a crashed or inactive frontend program and a history value which simply did not change much over time.

The history system was designed from the beginning in a way that values are only logged when they actually

change. This design pattern was broken since about spring 2021, see for example this issue:

https://bitbucket.org/tmidas/midas/issues/305/log_history_periodic-doesnt-account-for

Today I modified the history code to fix this issue. History logging is now controlled by the value of

common/Log history in the following way:

* Common/Log history = 0 means no history logging

* Common/Log history = 1 means log whenever the value changes in the ODB

* Common/Log history = N means log whenever the value changes in the ODB and

the previous write was more than N seconds ago
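
The three cases above amount to a small decision rule. The sketch below only restates the bullets; it is not the mlogger code, and the function name `should_log` and its arguments are invented for illustration. How the value 1 interacts with the usual once-per-second maximum rate is not spelled out here, so the sketch treats 1 as "log on every change".

```python
def should_log(log_history, value_changed, now, last_write):
    """Decide whether a history entry is written.

    log_history   -- the value of Common/Log history (0, 1 or N seconds)
    value_changed -- True if the value changed in the ODB
    now           -- current time in seconds
    last_write    -- time of the previous history write in seconds
    """
    if log_history == 0:
        return False          # history logging disabled
    if not value_changed:
        return False          # unchanged values are never logged
    if log_history == 1:
        return True           # log on every change
    return (now - last_write) > log_history   # rate-limit to one write per N seconds


print(should_log(60, True, now=100.0, last_write=50.0))   # changed, but only 50 s ago
print(should_log(60, True, now=111.0, last_write=50.0))   # changed, 61 s ago
```

Note that an unchanged value is never written in any mode, which is what lets a flat history line be told apart from a dead frontend.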

So most experiments should be happy with 0 or 1. Only experiments which have fluctuating values due to noisy

sensors might benefit from a value larger than 1 to limit the history logging. Anyhow this is not the preferred

way to limit history logging. This should be done by the front-end limiting the updates to the ODB. Most of the

midas slow control drivers have a "threshold" value. Only if the input changes by more than the threshold is the value

written to the ODB. This allows a per-channel "dead band" and not a per-event limit on history logging

as "log history" would do. In addition, the threshold reduces the write accesses to the ODB, although that is

only important for very large experiments.
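
A per-channel dead band of this kind can be sketched in a few lines. This is an illustration of the idea only, not the code of the midas class drivers; the function name `channels_to_update` is hypothetical, and a single threshold is used for all channels to keep it short.

```python
def channels_to_update(readings, last_written, threshold):
    """Return the indices of channels whose reading left the dead band.

    A channel is written only if it moved by more than `threshold` from the
    value last written for that channel; `last_written` is updated in place.
    """
    updated = []
    for channel, value in enumerate(readings):
        if abs(value - last_written[channel]) > threshold:
            last_written[channel] = value
            updated.append(channel)
    return updated


last = [1.00, 5.00]
print(channels_to_update([1.05, 5.50], last, threshold=0.1))
print(last)
```

Because the comparison is against the last written value and not the previous reading, a slow drift still gets recorded once it accumulates past the threshold, while noise inside the band never reaches the ODB.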

Stefan |

|

2316

|

26 Jan 2022 |

Konstantin Olchanski | Info | MityCAMAC Login | For those curious about CAMAC controllers, this one was built around 2014 to

replace the aging CAMAC A1/A2 controllers (parallel and serial) in the TRIUMF

cyclotron controls system (around 50 CAMAC crates). It implements the main

and the auxiliary controller mode (single width and double width modules).

The design predates Altera Cyclone-5 SoC and has separate

ARM processor (TI 335x) and Cyclone-4 FPGA connected by GPMC bus.

ARM processor boots Linux kernel and CentOS-7 userland from an SD card,

FPGA boots from its own EPCS flash.

User program running on the ARM processor (i.e. a MIDAS frontend)

initiates CAMAC operations, FPGA executes them. Quite simple.

K.O. |

|

2348

|

23 Feb 2022 |

Stefan Ritt | Info | Midas slow control event generation switched to 32-bit banks | The midas slow control system class drivers automatically read their equipment and generate events containing midas banks. So far these have been 16-bit banks using bk_init(). But now more and more experiments use a large number of channels, so the 16-bit address space is exceeded. Until last week, there was not even a check that this happens, leading to unpredictable crashes.

Therefore I switched the bank generation in the drivers generic.cxx, hv.cxx and multi.cxx to 32-bit banks via bk_init32(). This should be in principle transparent, since the midas bank functions automatically detect the bank type during reading. But I thought I let everybody know just in case.
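
To get a feeling for where the 16-bit limit bites, here is a rough estimate. It assumes that the bank header stores the payload size in bytes in a 16-bit field for bk_init() banks and in a 32-bit field for bk_init32() banks, and it ignores alignment and the overall event size limit; the helper name `max_values_per_bank` is made up for this example.

```python
def max_values_per_bank(bytes_per_value, size_field_bits):
    """Largest number of values whose total byte count still fits the size field."""
    return (2 ** size_field_bits - 1) // bytes_per_value


# 4-byte values (e.g. float or 32-bit integer readings), one per channel
print(max_values_per_bank(4, 16))   # 16-bit banks
print(max_values_per_bank(4, 32))   # 32-bit banks
```

Under these assumptions a single 16-bit bank holds on the order of sixteen thousand 4-byte channels, which a large slow control installation can plausibly exceed.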

Stefan |

|

2350

|

03 Mar 2022 |

Konstantin Olchanski | Info | zlib required, lz4 internal | as of commit 8eb18e4ae9c57a8a802219b90d4dc218eb8fdefb, the gzip compression

library is required, not optional.

this fixes midas and manalyzer mis-build if the system gzip library

is accidentally not installed. (is there any situation where

gzip library is not installed on purpose?)

midas internal lz4 compression library was renamed to mlz4 to avoid collision

against system lz4 library (where present). lz4 files from midasio are now

used, lz4 files in midas/include and midas/src are removed.

I see that on recent versions of ubuntu we could switch to the system version

of the lz4 library. however, on centos-7 systems it is usually not present

and it still is a supported and widely used platform, so we stay

with the midas-internal library for now.

K.O. |

|

2351

|

03 Mar 2022 |

Konstantin Olchanski | Info | manalyzer updated | manalyzer was updated to latest version. mostly multi-threading improvements from

Joseph and myself. K.O. |

|

2355

|

16 Mar 2022 |

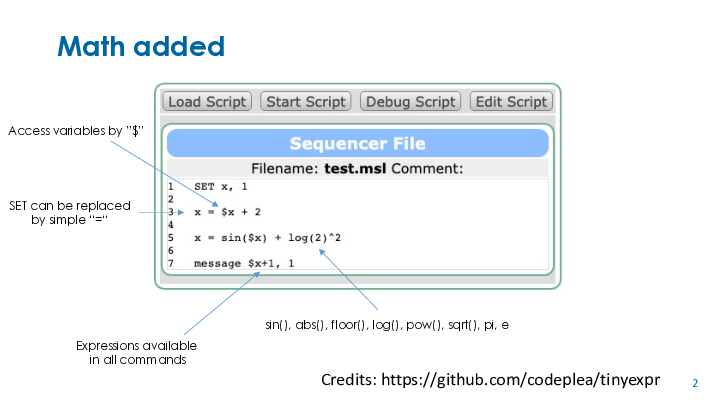

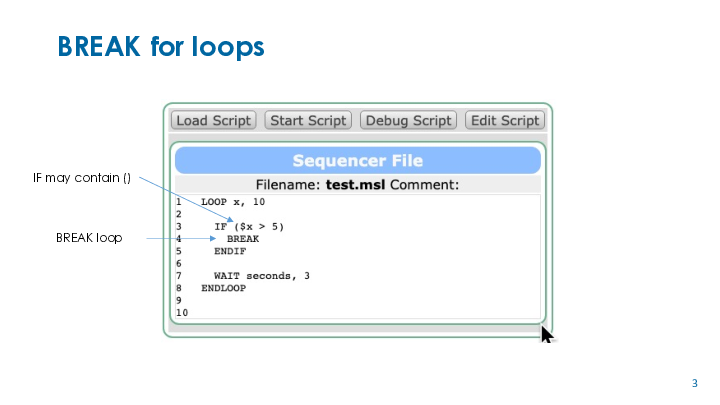

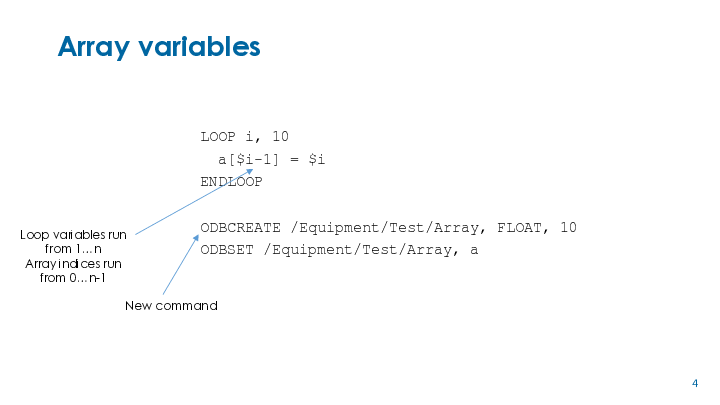

Stefan Ritt | Info | New midas sequencer version | A new version of the midas sequencer has been developed and is now available in the

develop/seq_eval branch. Many thanks to Lewis Van Winkle and his TinyExpr library

(https://codeplea.com/tinyexpr), which has now been integrated into the sequencer

and allows arbitrary Math expressions. Here is a complete list of new features:

* Math is now possible in all expressions, such as "x = $i*3 + sin($y*pi)^2", or

in "ODBSET /Path/value[$i*2+1], 10"

* "SET <var>,<value>" can be written as "<var>=<value>", but the old syntax is

still possible.

* There are new functions ODBCREATE and ODBDELETE to create and delete ODB keys,

including arrays

* Variable arrays are now possible, like "a[5] = 0" and "MESSAGE $a[5]"

If the branch works for us in the next days and I don't get complaints from

others, I will merge the branch into develop next week.

Stefan |

|

2358

|

22 Mar 2022 |

Stefan Ritt | Info | New midas sequencer version | After several days of testing in various experiments, the new sequencer has

been merged into the develop branch. One more feature was added. The path to

the ODB can now contain variables which are substituted with their values.

Instead of writing

ODBSET /Equipment/XYZ/Setting/1/Switch, 1

ODBSET /Equipment/XYZ/Setting/2/Switch, 1

ODBSET /Equipment/XYZ/Setting/3/Switch, 1

one can now write

LOOP i, 3

ODBSET /Equipment/XYZ/Setting/$i/Switch, 1

ENDLOOP
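
What the loop expands to can be illustrated outside the sequencer. The sketch below is not how the sequencer is implemented; it just mimics the $i substitution with Python's string.Template, and it assumes the loop counter runs from 1 to 3, matching the three explicit lines above. The function name `expand_loop` is made up.

```python
from string import Template


def expand_loop(path_template, var, count):
    """Substitute $var in the path for every loop iteration 1..count."""
    template = Template(path_template)
    return [template.substitute({var: i}) for i in range(1, count + 1)]


for path in expand_loop("/Equipment/XYZ/Setting/$i/Switch", "i", 3):
    print(f"ODBSET {path}, 1")
```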

Of course it is not possible for me to test every possible script. So if you

have issues with the new sequencer, please don't hesitate to report them

back to me.

Best,

Stefan |

|

2382

|

12 Apr 2022 |

Konstantin Olchanski | Info | ODB JSON support | > > > > odbedit can now save ODB in JSON-formatted files.

> > encode NaN, Inf and -Inf as JSON string values "NaN", "Infinity" and "-Infinity". (Corresponding to the respective Javascript values).

> http://docs.oasis-open.org/odata/odata-json-format/v4.0/os/odata-json-format-v4.0-os.html

> > Values of types [...] Edm.Single, Edm.Double, and Edm.Decimal are represented as JSON numbers,

> except for NaN, INF, and -INF which are represented as strings "NaN", "INF" and "-INF".

> https://xkcd.com/927/

Per xkcd, there is a new json standard "json5". In addition to other things, numeric

values NaN, +Infinity and -Infinity are encoded as literals NaN, Infinity and -Infinity (without quotes):

https://spec.json5.org/#numbers

Good discussion of this mess here:

https://stackoverflow.com/questions/1423081/json-left-out-infinity-and-nan-json-status-in-ecmascript

K.O. |

|

2383

|

13 Apr 2022 |

Stefan Ritt | Info | ODB JSON support | > Per xkcd, there is a new json standard "json5". In addition to other things, numeric

> values NaN, +Infinity and -Infinity are encoded as literals NaN, Infinity and -Infinity (without quotes):

> https://spec.json5.org/#numbers

Just for curiosity: Is this implemented by the midas json library now? |

|

2384

|

13 Apr 2022 |

Konstantin Olchanski | Info | ODB JSON support | > > Per xkcd, there is a new json standard "json5". In addition to other things, numeric

> > values NaN, +Infinity and -Infinity are encoded as literals NaN, Infinity and -Infinity (without quotes):

> > https://spec.json5.org/#numbers

>

> Just for curiosity: Is this implemented by the midas json library now?

MIDAS encodes NaN, Infinity and -Infinity as javascript compatible "NaN", "Infinity" and "-Infinity",

this encoding is popular with other projects and allows correct transmission of these values

from ODB to javascript. The test code for this is on the MIDAS "Example" page, scroll down

to "Test nan and inf encoding".
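
The string encoding described above is easy to reproduce and round-trip. The sketch below is not the MIDAS JSON encoder; `encode_special` and `decode_special` are hypothetical helpers that only show the convention. As a side note, Python's own json.dumps() by default writes bare NaN and Infinity literals (the json5-style approach), which strict JSON parsers reject, so the conversion to strings is done before encoding.

```python
import json
import math


def encode_special(x):
    """Map NaN and +/-Inf to the strings "NaN", "Infinity", "-Infinity"."""
    if isinstance(x, float):
        if math.isnan(x):
            return "NaN"
        if math.isinf(x):
            return "Infinity" if x > 0 else "-Infinity"
    return x


def decode_special(x):
    """Inverse mapping; float() understands all three strings."""
    if x in ("NaN", "Infinity", "-Infinity"):
        return float(x)
    return x


values = [1.5, float("nan"), float("inf"), float("-inf")]
text = json.dumps([encode_special(v) for v in values])
print(text)
decoded = [decode_special(v) for v in json.loads(text)]
print(decoded)
```

The price of this convention is that the reader has to know which fields are numeric: a genuine string value "NaN" cannot be told apart from an encoded not-a-number without that context.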

I think this type of encoding, using strings to encode special values, is more in the spirit of json,

compared to other approaches such as adding special literals just for a few special cases

leaving other special cases in the cold (ieee-754 specifies several different types of NaN,

you can encode them into different nan-strings, but not into the one nan-literal (need more nan-literals,

requires change to the standard and change to every json parser)).

As editorial comment, it boggles my mind, what university or kindergarten these people went to

who made the biggest number, the smallest number and the imaginary number (sqrt(-1))

all equal to zero (all encoded as literal null).

K.O. |

|

2385

|

15 Apr 2022 |

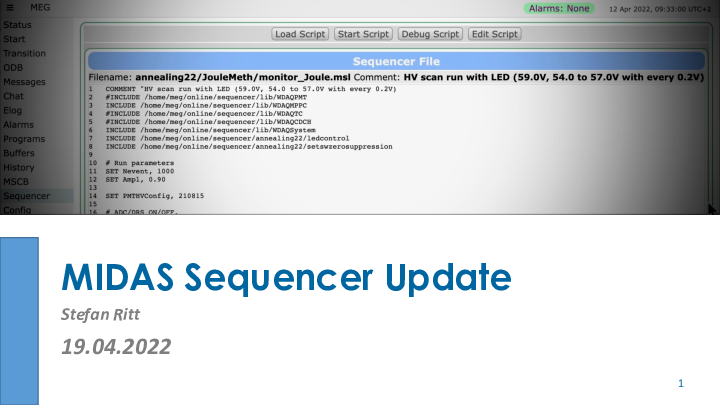

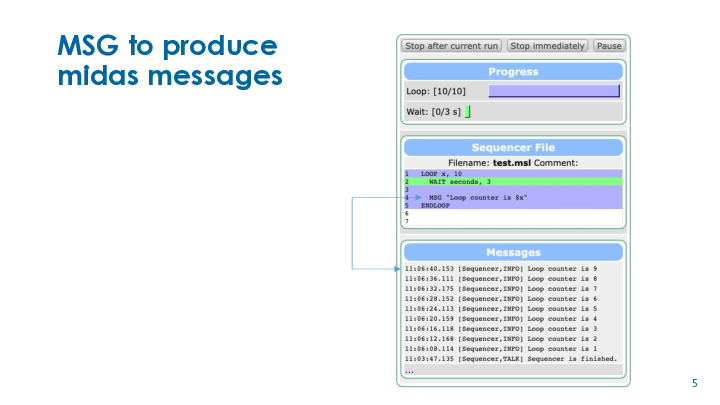

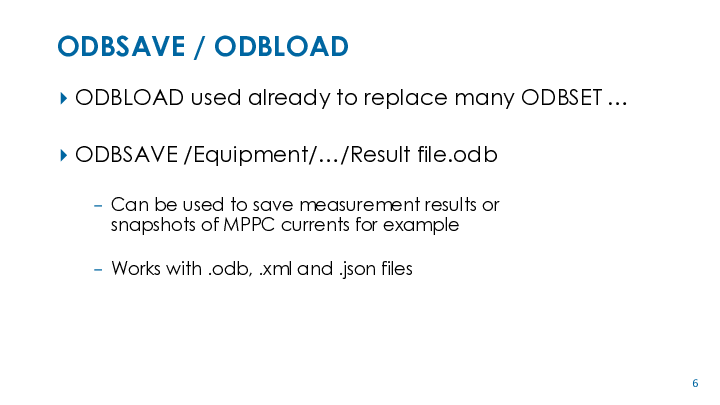

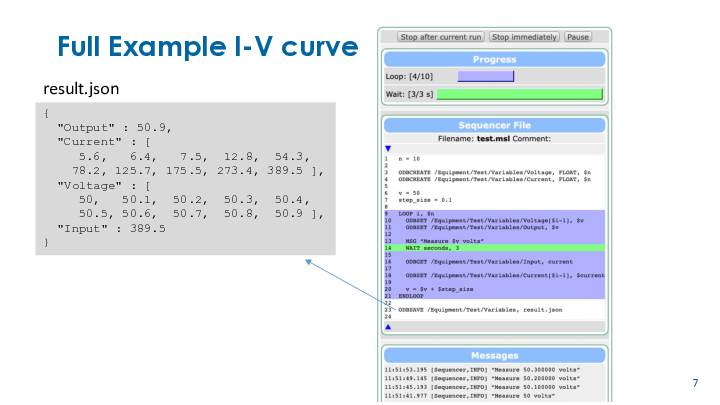

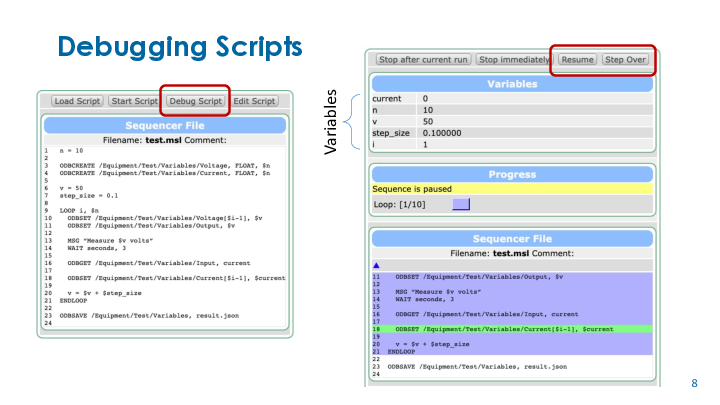

Stefan Ritt | Info | New midas sequencer version | I prepared some slides about the new features of the sequencer and post them here so

people can have a quick look and get some inspiration.

Stefan |

| Attachment 1: sequencer.pdf

|

|

|

2389

|

30 Apr 2022 |

Konstantin Olchanski | Info | added web pages for "show odb clients" and "show open records" | for a long time, midas web pages have been missing the equivalent of odbedit

"scl" and "sor" to display current odb clients and current odb open records.

this is now added as buttons "show open records" and "show odb clients" in the

odb editor page.

as in odbedit, "sor" shows open records under the current subtree, i.e. if you

are looking at /equipment, you will not see open records for /experiment. to see

all open records, go to "/".

commit b1ab7e67ecf785744fff092708d8389f222b14a4

K.O. |

|

2390

|

01 May 2022 |

Konstantin Olchanski | Info | added web page for "mdump" | added JSON RPC for bm_receive_event() and added a web page for "mdump".

the event dump is a hex dump for now.

if somebody can contribute a javascript decoder for midas bank format, it would be greatly appreciated.

otherwise, I will eventually write my own decoder library patterned on midasio.h and midasio.cxx.

as of commit 5882d55d1f5bbbdb0d9238ada639e63ac27d8825

K.O. |

|